Some Guy offers a rare, unvarnished look at the collision between high-stakes enterprise AI deployment and the crushing weight of family caregiving, arguing that the true bottleneck in automation isn't technology, but the human capacity to translate business logic into code. While the tech industry obsesses over model parameters, this piece reveals that the most critical work happens in the messy gap between a developer's intuition and a subject matter expert's reality. It is a stark reminder that the future of work depends less on sleek interfaces and more on the endurance of individuals trying to build reliable systems while their own lives teeter on the edge.

The Human Cost of the AI Frontier

The author, Some Guy, frames the current state of enterprise AI not as a revolution, but as a period of dangerous stagnation where energy is being spent on upskilling without a clear path to deployment. He writes, "The whole corporate world is in something like the period where the temperature of water reaches boiling but remains a liquid. A certain amount of input is needed before the particles can break free and become a gas." This metaphor effectively captures the frustration of leaders who see the potential for automation but are blocked by a workforce that lacks the specific, untaught skills to execute it. The argument lands because it shifts the blame from the technology itself to the organizational inertia that refuses to invest in the unglamorous foundations of data pipelines.

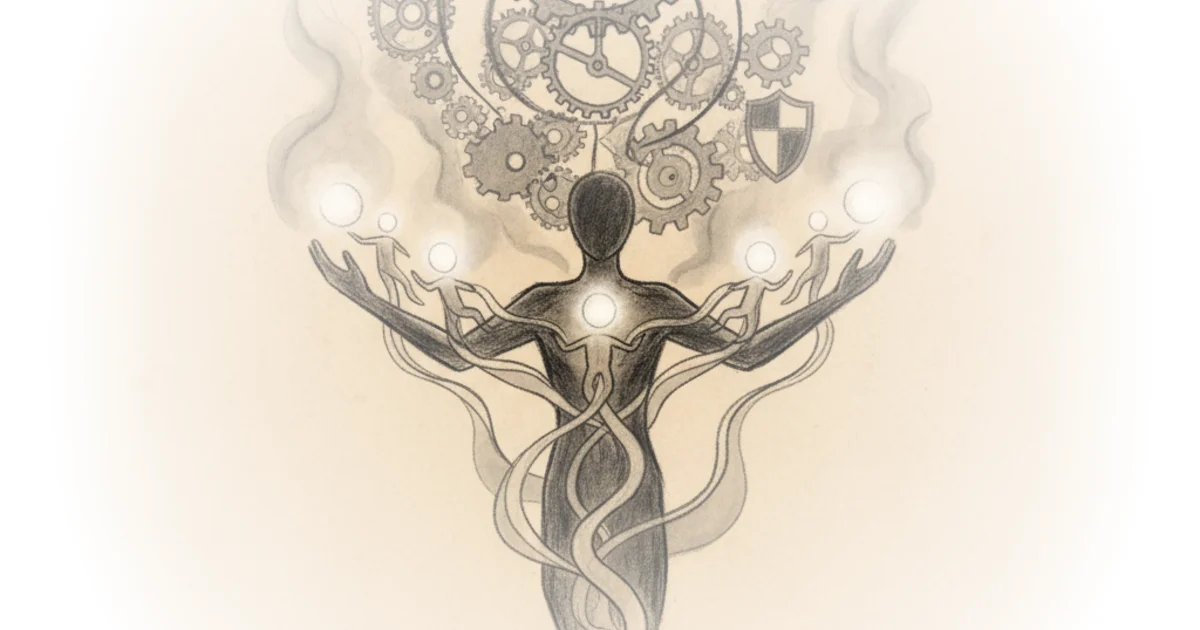

Some Guy highlights a critical disconnect in the industry: the people who can build models are often the least equipped to apply them to real business problems. He notes, "The Venn Diagram of people who can make AI models and people who can deploy AI models for business value is not the near perfect circle I thought it would be." This observation is crucial for any executive reading this; it suggests that hiring more data scientists without training them in business context is a recipe for wasted capital. The author's experience in rewriting prompts and reordering code to achieve results that developers deemed impossible underscores a vital truth: large language models require a level of "computer psychology" that standard engineering training ignores.

The Venn Diagram of people who can make AI models and people who can deploy AI models for business value is not the near perfect circle I thought it would be.

Critics might argue that this view places too much burden on individual leaders to bridge the gap, rather than calling for a systemic overhaul of how AI is taught in universities. However, given the speed of technological change, the author's insistence on practical, on-the-ground adaptation feels more realistic than waiting for academic curricula to catch up. The piece also touches on the historical context of ensemble methods; just as the field of machine learning moved from single-model reliance to combining multiple models to reduce error, the author argues that business deployment requires a similar "ensemble" of human expertise and technical execution.

The Personal Toll of the Double Shift

The narrative takes a darker turn as Some Guy connects his professional struggles to his role as a father to an autistic son. He describes his parenting philosophy as a direct counter to his own upbringing, stating, "One thing I never got from my own parents was a sense that I was important to them personally, so I try to give as much of that to my children as possible." This emotional anchor transforms the piece from a tech commentary into a meditation on legacy and survival. The author is not just building software; he is racing against time to secure a financial future for a child who may require "very substantial support" for the rest of his life.

The author's description of his son's condition as "a disorder in the economization of attention" is a striking, if controversial, reframing of autism. He writes, "If autism is a disorder in the economization of attention, forcing him to pay regular attention and respond to other attention-paying entities while his brain is still developing seems like the best therapy." This perspective challenges the reader to view the child's behavior not as a deficit, but as a different processing architecture that requires specific, active intervention to fine-tune. It draws a parallel to the concept of "fine-tuning" in large language models, suggesting that human development and AI training share a fundamental need for targeted, high-quality data input during critical windows.

The physical and mental toll of this dual existence is laid bare. Some Guy admits to a schedule that would break most people: "My already packed calendar of about 120 meetings per week has skyrocketed to 130 meetings per week... I declined the other 120, for which I am still responsible and delegated one of my direct reports to handle all of them." He describes the health consequences with brutal honesty, noting he was drinking "around six diet cokes a day for the caffeine" and had gained thirty pounds. The author's admission that "if you don't have a spare moment to yourself, you have to find a way to just survive and all of your bullshit is going to leak out because you don't have any place to hide" serves as a warning to the corporate world about the limits of human endurance.

If you don't have a spare moment to yourself, you have to find a way to just survive and all of your bullshit is going to leak out because you don't have a place to hide.

This section reveals the fragility of the "hustle culture" often glorified in tech. The author's attempt to build a "Trust Assembly"—a system for communal memory in an age of AI-generated content—is stalled not by technical hurdles, but by the sheer exhaustion of his daily life. He writes, "I don't know what a good future for people looks like in the world of infinite AI slop and automation if something like this doesn't get built." This highlights a paradox: the very people who could solve the problem of AI reliability are too burned out to do the work.

The Bottom Line

Some Guy's most powerful argument is that the future of AI depends not on better algorithms, but on the resilience of the humans who must bridge the gap between code and reality. The piece's greatest vulnerability is its reliance on the author's own unsustainable work ethic as a model for success, which risks romanticizing burnout rather than solving the systemic issues that cause it. Readers should watch for whether the industry can develop the "intermediate language" and training programs the author invents alone, or if the human cost of this transition will continue to be paid by a few overworked individuals. The ultimate test, as the title suggests, is whether we can build systems that don't require us to be told we are taking a test to survive.