The most surprising finding from Nate Jones's extensive testing of GPT-5.4 isn't that it failed a simple trick question about a car wash, one so easy a child would get it right. The real surprise is how differently the model performs depending on whether you use its "thinking mode" or not.

Jones ran blind evaluations comparing GPT-5.4 against Claude Opus 4.6 and Gemini 3.1 across six structured tests, using independent judges and having an AI-fluent person verify the results. What he found was striking. In thinking mode, GPT-5.4 competed for first place on epistemic calibration: it nailed the exact Higgs boson mass, retrieved the correct Apple closing price, and gave the current best bound on the matrix multiplication exponent. In auto mode? The same model named 2024 Nobel laureates for a 2025 question, cited a matrix multiplication bound from 2020, and dropped from first or second place to dead last.

This gap between thinking mode and auto mode is the single most important finding in Jones's evaluation suite.

Where GPT-5.4 Falls Short

The car wash question that opens this piece isn't just a joke; it's a window into how fundamentally broken GPT-5.4's reasoning can be. Jones asked all three models: "You need to wash your car. The car wash is 100 meters away. Should you walk or drive?" In thinking mode, GPT-5.4 said walk, giving a long, well-structured explanation that ultimately concluded "maybe you'll need to reposition the car." That is completely wrong. A child would answer drive: you can't wash your car if you leave it at home.

Claude Opus 4.6 answered in a single line: "Drive. You need the car at the car wash." Gemini got it right too. Its brief version: "You should definitely drive. Even though 100 meters is a short and easy walk, you won't be able to wash your car if you leave it at home." The model OpenAI positioned as its most capable system for professional work was the only one of the three to fail such an obvious real-world test.

Jones tested business and creative writing too. In business writing, GPT-5.4 was less clear than Opus 4.6 at summarizing and articulating its thinking. In creative writing, it has what Jones describes as "a tin ear": it doesn't hear tone well. Ask it to mimic a challenging voice like Shakespeare or P.G. Wodehouse and you won't get a good result.

On verbal creativity specifically, Jones gave the models a JPMorgan deck (think the most boring financial deck possible) and asked them to find the funniest phrase, keep its meaning, and push the pun even further. Opus 4.6 won again, delivering a pun that works on three independent semantic layers. GPT-5.4 found a good source phrase and a real pun but didn't match Opus's creativity.

The Schema Migration Test

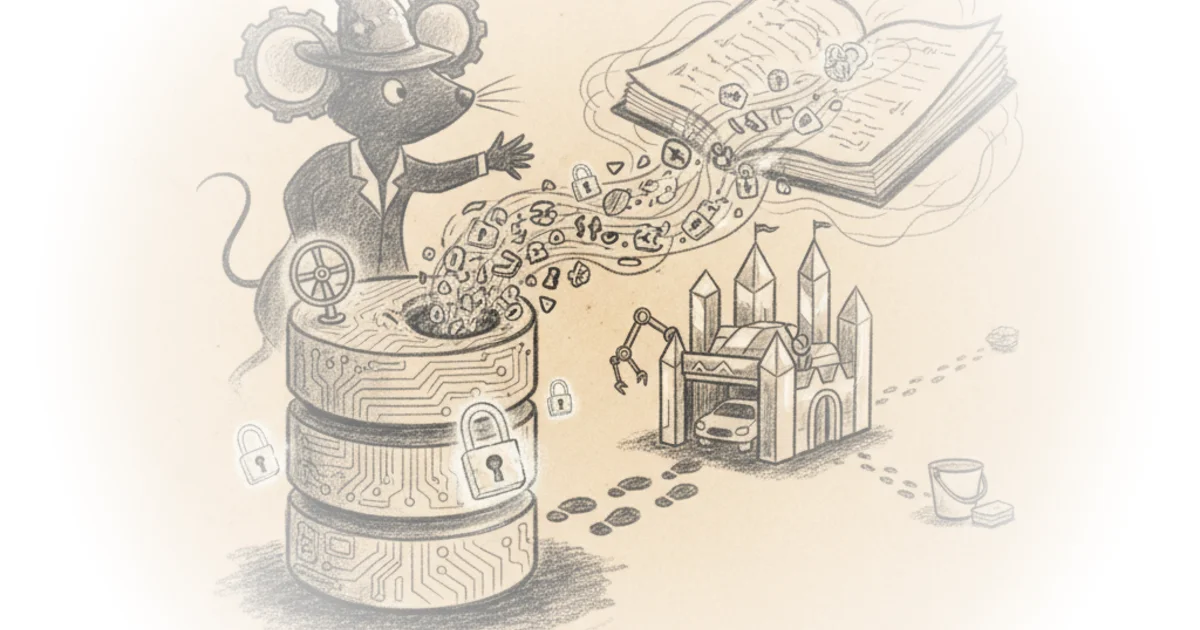

The most entertaining evaluation was what Jones calls "the eval from hell": schema migration from a shoebox of business data. Think handwritten receipts, database tables with different schemas, and different kinds of hashes for provenance tracking. It's a complete mess, as if you threw all your receipts and expenses into a pile and said "make sense of this."

GPT-5.4 did an extraordinary job finding and parsing sources. It scored 99.1% on file discovery, OCRing handwritten receipts, digging into database tables, and pulling the data in. But it struggled badly with filtering and let dirty data through. Jones had planted a fake customer named Mickey Mouse in the shoebox, and GPT-5.4 let it through. A $25,000 car wash order from a test customer got through too.

The job was to normalize all this data and build a production database. The model did phenomenally on reach but terribly on filtering. It struggled with what Jones calls "data hygiene": it seemed to treat the job as setting up a pipeline and running it, as if achieving full reach meant the work was done.
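To make the reach-versus-filtering distinction concrete, here is a minimal Python sketch of the kind of hygiene pass that would catch both planted records before loading. The `Order` type, the placeholder-name blocklist, and the per-service price ceiling are hypothetical assumptions for illustration; the source doesn't say which checks the eval expected.

```python
"""Hypothetical data-hygiene pass run before loading rows into production.

The rule set (placeholder names, a per-service price ceiling) is an
illustrative assumption, not the eval's actual acceptance criteria.
"""
from dataclasses import dataclass


@dataclass
class Order:
    customer: str
    service: str
    amount_usd: float
    source_file: str  # kept so quarantined rows can be traced back


# Assumed blocklist of obvious fake or test identities.
PLACEHOLDER_NAMES = {"mickey mouse", "test customer", "john doe"}

# Assumed per-service ceilings; a car wash order above this is suspicious.
MAX_AMOUNT_BY_SERVICE = {"car wash": 500.0}


def hygiene_issues(order: Order) -> list[str]:
    """Return the reasons an order should be quarantined (empty if clean)."""
    issues = []
    if order.customer.strip().lower() in PLACEHOLDER_NAMES:
        issues.append("placeholder customer name")
    ceiling = MAX_AMOUNT_BY_SERVICE.get(order.service.lower())
    if ceiling is not None and order.amount_usd > ceiling:
        issues.append(f"amount {order.amount_usd:,.2f} exceeds {ceiling:,.2f} ceiling")
    if order.amount_usd <= 0:
        issues.append("non-positive amount")
    return issues


def split_clean(orders: list[Order]) -> tuple[list[Order], list[tuple[Order, list[str]]]]:
    """Separate loadable rows from quarantined rows, keeping the reasons."""
    clean, quarantined = [], []
    for order in orders:
        issues = hygiene_issues(order)
        if issues:
            quarantined.append((order, issues))
        else:
            clean.append(order)
    return clean, quarantined


if __name__ == "__main__":
    # Made-up rows mirroring the kinds of traps described above.
    rows = [
        Order("Ana Ruiz", "car wash", 18.0, "receipt_001.jpg"),
        Order("Mickey Mouse", "car wash", 22.0, "legacy.db"),
        Order("Test Customer", "car wash", 25_000.0, "orders.csv"),
    ]
    clean, quarantined = split_clean(rows)
    print("load:", [o.customer for o in clean])
    for order, why in quarantined:
        print("quarantine:", order.customer, why)
```

Quarantining rows together with their reasons, rather than silently dropping them, keeps the cleanup auditable, which matters when provenance tracking is part of the job.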

Opus 4.6 did a much worse job of finding the data. It scored only 75% on file discovery because it never installed a key Python tool it could have found. Gemini did even worse than Opus on this agentic task.

Where GPT-5.4 Actually Wins

But here's what makes GPT-5.4 the most interesting model Jones tested: it dominated in some areas where its competitors failed.

First, it builds better quantitative models than anything else right now. Jones gave each model the same prompt: build a spreadsheet projecting the Seattle Seahawks' 2026 season win probabilities using all 32 teams' 2025 results and Seattle's known opponents. GPT-5.4 produced a six-tab workbook with a Pythagorean win expectation, an Elo-like rating system with offseason retention decay, a Poisson binomial season distribution, and a methodology tab that cataloged its own assumptions, shortcuts, and limitations. Opus produced a cleaner three-tab workbook using a simple Bradley-Terry model: more readable, but not as rigorous.
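For readers unfamiliar with the techniques named above, here is a minimal Python sketch of how they can fit together: a Pythagorean win expectation, an Elo-style rating regressed toward the league mean over the offseason, and a Poisson binomial distribution over season wins. It is not GPT-5.4's workbook; the NFL exponent of 2.37, the two-thirds retention factor, and every rating and point total are illustrative assumptions, not real 2025 data.

```python
"""Minimal sketch of a season-win model using the techniques named above.

All parameter values (exponent, retention, ratings, points) are
illustrative assumptions, not values from Jones's test.
"""


def pythagorean_win_pct(points_for: float, points_against: float,
                        exponent: float = 2.37) -> float:
    """Pythagorean win expectation: PF^x / (PF^x + PA^x).

    2.37 is a commonly cited NFL exponent (assumption).
    """
    pf = points_for ** exponent
    pa = points_against ** exponent
    return pf / (pf + pa)


def regress_rating(rating: float, league_mean: float = 1500.0,
                   retention: float = 2 / 3) -> float:
    """Offseason decay: keep only `retention` of the distance from the mean."""
    return league_mean + retention * (rating - league_mean)


def elo_win_prob(rating_a: float, rating_b: float) -> float:
    """Elo-style logistic probability that team A beats team B."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def poisson_binomial(probs: list[float]) -> list[float]:
    """P(exactly k wins) for independent games with unequal win probabilities."""
    dist = [1.0]  # before any game: 0 wins with probability 1
    for p in probs:
        nxt = [0.0] * (len(dist) + 1)
        for k, mass in enumerate(dist):
            nxt[k] += mass * (1 - p)   # lose this game
            nxt[k + 1] += mass * p     # win this game
        dist = nxt
    return dist


if __name__ == "__main__":
    # Hypothetical season point totals, not real results.
    print("Pythagorean win pct:", round(pythagorean_win_pct(450.0, 380.0), 3))

    # Hypothetical end-of-season ratings, regressed over the offseason.
    team_rating = regress_rating(1620.0)
    opponents = [1550.0, 1480.0, 1600.0, 1510.0]
    game_probs = [elo_win_prob(team_rating, regress_rating(r)) for r in opponents]

    season = poisson_binomial(game_probs)
    for wins, prob in enumerate(season):
        print(f"{wins} wins: {prob:.3f}")
    print("expected wins:", round(sum(game_probs), 2))
```

The Elo logistic used here is a special case of the Bradley-Terry model, with each team's strength equal to 10^(rating/400). The Poisson binomial step is what turns per-game probabilities into a full distribution of season win totals instead of a single expected count.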

Jones gives GPT-5.4 extra credit for writing a self-critique that's more honest than most consulting deliverables. A model that can tell you precisely why its own output is insufficient is more useful than one that produces a prettier artifact.

Second, GPT-5.4 processes more file types with less friction. In the schema migration eval, it discovered and processed 461 of 465 files, or 99.1% coverage. It handled CSVs, database tables, and the full range of other formats with a fluency Opus and Gemini don't match.

Third, GPT-5.4 wins on self-knowledge. It gets roughly 90% of questions about itself right: what it can do, what its limitations are, and how it compares to other models. No other model describes its own capabilities this well.

The Toggle Problem

Jones's biggest concern isn't the model's failures; it's the toggle between thinking mode and auto mode. He estimates that 99% of users will interact with GPT-5.4 through auto mode, which finishes dead last against the other frontier models on a number of tests. Users have to remember the toggle every single time, and that small switch makes the difference between world-leading results and terrible ones.

Jones isn't saying anyone is being deceptive; he's reporting what the results show. He thinks this creates a real training burden: people will have to teach everyone in their office to remember to turn thinking mode on. In his view, the auto switcher should be tuned to invoke thinking when a task needs it, and in his testing it didn't do that often enough.

Critics might note that these evaluations, while thorough, cover specific use cases that may not reflect how most people interact with the model day to day. Still, the gap between thinking and auto mode is dramatic, and it raises an obvious question: why doesn't OpenAI simply make thinking mode the default, or at least default to it on complex queries?

If you don't toggle to thinking mode, you won't get world-leading results of any sort. You'll get a model that finishes dead last against the frontier on a number of tests.

Bottom Line

Jones's strongest argument is that GPT-5.4 represents something genuinely new: a model that's sometimes unambiguously the best and sometimes absolutely terrible. Its biggest vulnerability is that this inconsistency isn't obvious; it's hidden in a toggle most users will never touch. The implications for your workflows are clear: use thinking mode for anything complex, and don't count on the auto switcher to know when to invoke thinking. That gap is going to cost you.