The Missing Skill in AI Mastery

Most AI skill discussions focus on generation. Prompting, workflow design, tool selection, multimodal orchestration — all aimed at producing more, faster. But Nate B Jones argues this is precisely where the bottleneck has shifted.

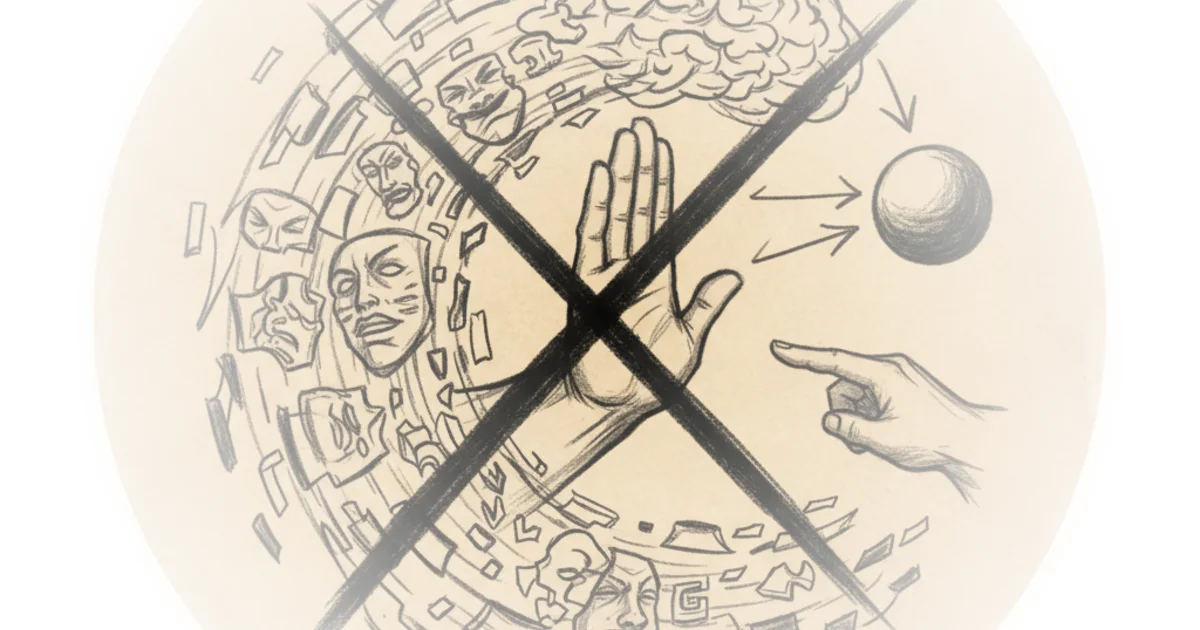

The real differentiator isn't what you generate. It's what you reject.

Jones rejects far more AI output than he accepts. The work arrives with wrong framing, sloppy reasoning, confident-sounding analysis that wouldn't survive contact with someone who actually knows the domain. He sends it back. Explains why. And he's convinced this rejection capability — the ability to look at AI output and say "this is wrong and here's why" — is one of the missing skills nobody's talking about.

The 70% Problem

The recent GDPval study provides the most rigorous measurement available against actual knowledge work. Frontier models beat or tie professionals with an average of 14 years of experience 70% of the time in head-to-head comparisons, and they do it 100 times faster for less than 1% of the cost.

This statistic gets read as either a story of AI capability or workforce demise. Both readings miss something more interesting.

If AI matches your best people's output 70% of the time on well-specified tasks, what determines the rest? What happens to the 30% where the output looks right on the surface but breaks once it leaves the lab and reaches production? The answer is the same in both cases: someone has to look at the output and know.

And knowing requires saying no. A lot.

Recognition vs. Articulation

Jones identifies two distinct dimensions of rejection skill.

Recognition — the ability to detect that something is wrong — depends entirely on domain experience. Junior analysts won't catch flawed regulatory assumptions without the deep experience senior analysts have. Loan officers might not spot covenant logic errors because they haven't seen enough deals. A strategy partner reviewing AI-generated competitive analysis can immediately spot where proprietary insight on customer switching costs is missing.

The person who's reviewed 2,000 deals and can just feel when something is off is becoming the most important person in the building — not despite AI, but because of it. Recognition is the dimension most enhanced by AI access. A domain expert with very strong recognition and access to AI tools can evaluate 10 times the output they could before.

But this only works inside the boundary of their expertise. Outside that boundary, AI multiplies confidence, not expertise. And that's worse.

Articulation — explaining why something is wrong in a way that produces a usable constraint — is the second dimension. "This isn't right" is just rejection. "This isn't right because you're treating all these requirements identically and the PRD actually needs to be structured this way" — that's a constraint.

It's the difference between taste that stays in someone's head and taste that can be encoded and shared with the team, applied at scale.
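As a minimal sketch of what encoded taste could look like in practice, the Python below stores an articulated rejection as a reusable constraint and renders a set of them as text to prepend to a prompt. The `Constraint` class, its fields, `render_preamble`, and the example wording are all hypothetical names chosen for illustration; nothing here is a format Jones describes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Constraint:
    """One articulated rejection: what was wrong, why, and the rule it implies.

    All field names are illustrative assumptions, not a published schema.
    """
    domain: str   # hypothetical tag for where the rule applies, e.g. "prd"
    problem: str  # what the reviewer saw in the output
    reason: str   # why it is wrong (the part that usually stays in someone's head)
    rule: str     # the instruction future output must satisfy


def render_preamble(constraints: list[Constraint], domain: str) -> str:
    """Render the constraints for one domain as text to prepend to a prompt."""
    lines = [
        f"- {c.rule} (why: {c.reason})"
        for c in constraints
        if c.domain == domain
    ]
    if not lines:
        return ""
    return "Follow these reviewer constraints:\n" + "\n".join(lines)


# Illustrative data only: the PRD rejection from the text, made explicit.
constraints = [
    Constraint(
        domain="prd",
        problem="All requirements are treated identically",
        reason="readers cannot tell must-haves from nice-to-haves",
        rule="Group requirements by priority before listing them",
    ),
]
preamble = render_preamble(constraints, "prd")
```

The design choice worth noting is that the `reason` travels with the `rule`. A bare rule is just a rejection written down; the reason is what lets a teammate or a model apply it to a case the original reviewer never saw.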

Encoding Creates Structural Advantage

Jones points to Epic Systems as proof of concept. The healthcare company didn't win by having better technology. It won by spending decades encoding clinical workflows from thousands of hospitals into a deeply integrated platform — visiting sites, shadowing doctors, absorbing domain constraints. The result: over 300 million patient records and switching costs that are structural.

The moat isn't the software. It's the encoded judgment about what the software needs to get right. Built rejection by rejection, workflow by workflow, failure by failure, across thousands of hospitals.

When you have a system built out of encoded taste at scale — to the point where it becomes structural for businesses — it's very difficult to rip out.

The Infrastructure Gap

The capture mechanism hasn't existed. Most organizations are generating rejections at grassroots levels every day. Almost none of them are being captured durably.

Jones argues the solution isn't a separate tool, spreadsheet, or database. People won't context switch to log their rejections. The capture has to happen inside the conversation as a side effect of the rejection you're already performing — and he built something for exactly that purpose.
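The source does not describe how Jones's own tool works, so the following is only a sketch of the general idea of capture as a side effect, under stated assumptions. It assumes a hypothetical convention in which any reviewer reply containing "because" counts as an articulated rejection, and it appends that reply to a JSON Lines log without asking the reviewer to do anything extra. The function name, the regex convention, and the record fields are all invented for illustration.

```python
import json
import re
import tempfile
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional

# Hypothetical convention: "<verdict> because <reason>" marks an articulated rejection.
REJECTION = re.compile(
    r"^(?P<verdict>.+?)\s+because\s+(?P<reason>.+)$",
    re.IGNORECASE | re.DOTALL,
)


def capture_rejection(reply: str, log_path: Path) -> Optional[dict]:
    """Append a record to log_path if the reply is an articulated rejection.

    Returns the stored record, or None when the reply carries no reason.
    """
    match = REJECTION.match(reply.strip())
    if match is None:
        return None  # nothing articulated, so nothing to log
    record = {
        "verdict": match["verdict"].strip(),
        "reason": match["reason"].strip(),
        "captured_at": datetime.now(timezone.utc).isoformat(),
    }
    # Append-only JSON Lines: one rejection per line, easy to aggregate later.
    with log_path.open("a", encoding="utf-8") as log:
        log.write(json.dumps(record) + "\n")
    return record


# Usage: the reviewer just replies in the conversation; logging rides along.
with tempfile.TemporaryDirectory() as tmp:
    log_file = Path(tmp) / "rejections.jsonl"
    captured = capture_rejection(
        "This isn't right because the requirements are all treated identically",
        log_file,
    )
    ignored = capture_rejection("Looks good, ship it.", log_file)
    logged_lines = log_file.read_text(encoding="utf-8").splitlines()
```

A keyword match like this is deliberately crude. The point is only that the reviewer never leaves the conversation, which is the property the argument above depends on.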

Critics might note that framing rejection as the core AI skill assumes human judgment is always superior to algorithmic evaluation. Some researchers argue that AI systems can develop reliable quality detection through training data alone, without human intervention. The measurement problem remains unsolved — rejection is inherently hard to quantify.

"The ability to look at AI output and say this is wrong and this is why is a huge component of how you identify quality."

Bottom Line

Jones's strongest argument: generation skills are now commoditized. What distinguishes quality work from commodity output is the expert who can articulate what went wrong — and encode that constraint so it compounds across the organization.

The vulnerability: almost nobody is building this infrastructure deliberately. The rejections fall on the floor, living in email threads and Slack messages instead of becoming institutional knowledge. The companies capturing these constraints will build flywheels that competitors can't replicate by simply subscribing to the same AI APIs.