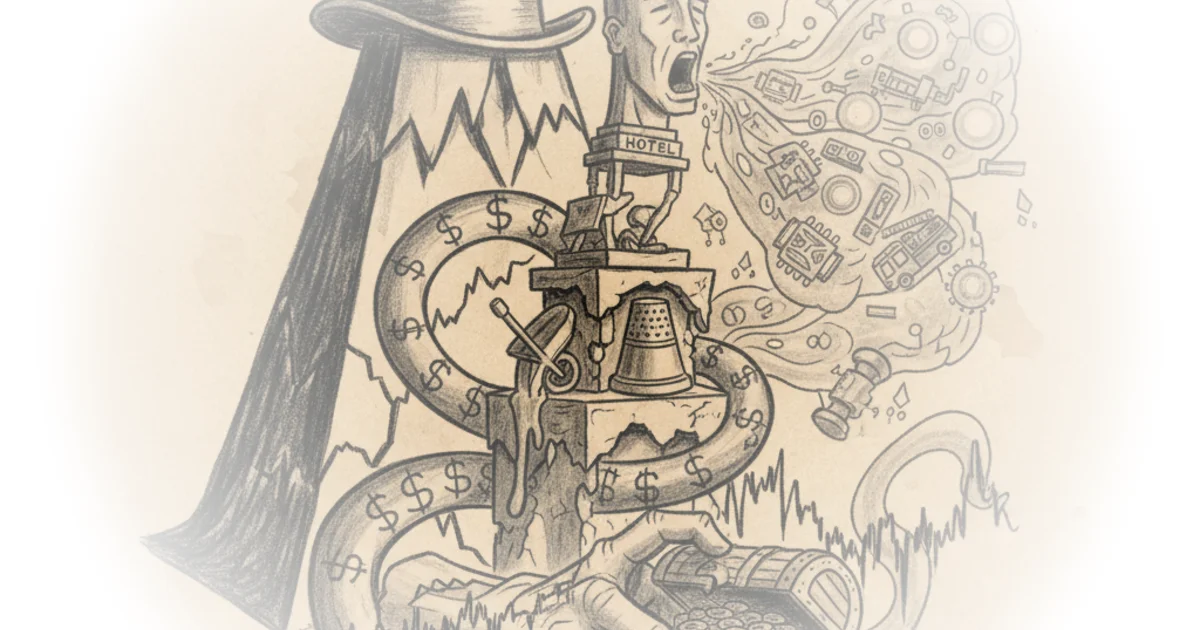

Matt Stoller cuts through the fog of technological mysticism to reveal a far more mundane, yet dangerous, reality: the claim that artificial intelligence has achieved sentience is a strategic narrative designed to justify monopolistic power and evade democratic oversight. While Silicon Valley elites preach about a coming singularity, the actual evidence points to a coordinated effort to reframe corporate theft as inevitable progress. This is not a story about the future of humanity; it is a story about the present of corporate greed.

The Theology of Profit

The piece opens by dismantling the sudden, aggressive push by tech executives to declare their creations "God-like." Stoller zeroes in on Anthropic CEO Dario Amodei, who recently claimed on a podcast that we are "nearing the exponential" and about to become "a country of geniuses in a data center." Stoller finds this framing deeply suspicious, noting that these grandiose claims coincide with massive capital raises and a push for "AI safety" regulations that would effectively lock out competitors. The author points out the irony that while Amodei speaks of moral quandaries regarding warfare, his firm is simultaneously closing a $30 billion funding round and lobbying politicians to protect its interests.

"Anthropic CEO Dario Amodei has invented God, but God has not yet told him sweater vests are a terrible look."

This satirical jab underscores Stoller's central thesis: the "sentience" argument is a marketing product rollout, not a scientific breakthrough. He argues that if these tools were truly as powerful as claimed, the market would reflect that certainty, yet companies are still spending millions on political lobbying to secure their position. The narrative of inevitability is a shield. As Stoller observes, "If you push the juvenile theological arguments to the side, there are very interesting political questions around machine learning and AI." The shift from a religious argument to a political one is crucial because it reclaims the power to regulate these tools.

The Illusion of Displacement

The commentary then pivots to the economic claims, specifically the prediction that white-collar work will vanish within 18 months. Microsoft's head of AI, Mustafa Suleyman, predicted that tasks performed by lawyers, accountants, and project managers will be "fully automated by an AI within the next 12 to 18 months." Stoller dismantles this by looking at the stock market. If white-collar workers were truly being replaced en masse, the share prices of companies that rely on their spending power would be crashing. Instead, luxury goods companies like Tapestry, which owns Coach and Kate Spade, are at record highs.

"Wall Street has gone wobbly on the data center build-out, but if you've invented God or the Devil, that's a great investment thesis."

Stoller argues that the real goal of this rhetoric is to avoid the "obviously necessary regulation that a democracy needs to manage this kind of innovation." By framing AI as a force of nature that cannot be tamed, proponents hope to bypass the messy work of legislation and antitrust enforcement. This is a classic monopolistic tactic: convince the public that the market is too complex for human intervention. Critics might note that technological disruption often outpaces market signals, and stock prices may not immediately reflect structural shifts in employment. However, Stoller's point stands: the current narrative is being used to justify firing workers not to improve efficiency but simply to cut costs, even where quality suffers as a result.

The Reality of Price-Fixing

Perhaps the most damning section of the piece is the revelation of how AI is actually being used in the real world. Far from a utopian singularity, Stoller highlights evidence that AI is being deployed to "cheat and raise prices." He cites financial analyst Herb Greenberg, who found that hospitals and private equity firms use generative AI to "lie to insurance companies and the government to squeeze out more money." The technology is not replacing radiologists to save lives; it is being used to justify firing them, leading to worse patient outcomes and higher costs.

"AI isn't really 'replacing' customer service agents, it's just making customer service worse."

Stoller connects this to the broader issue of AI agents becoming public utilities. He warns that as companies like Google seek to become the "central planner of the economy" through personalized agents, the conflict of interest becomes insurmountable. If an agent is funded by advertising, it is not working for the user. The author draws a parallel to the ByteDance incident, where a Chinese firm released an AI capable of generating hyper-realistic video, leading to immediate lawsuits and regulatory pushback. This, Stoller argues, is the only healthy response: "That's… politics. That's good. It's what should happen."

Bottom Line

Stoller's most potent contribution is reframing the AI debate from a theological question of "can machines think?" to a political question of "who owns the data and who benefits?" The argument is strongest when it exposes the contradiction between the "God-like" claims of CEOs and their desperate lobbying for protection. The biggest vulnerability is the potential for rapid, unforeseen technological leaps that could outpace the regulatory frameworks Stoller advocates for. However, the piece serves as a vital reminder that we must not surrender our democratic institutions to a narrative of technological inevitability. The fight over AI is not about the future of the species; it is about the present of corporate power.