Erik Hoel claims to have solved a problem that has haunted complex systems theory for decades: how to mathematically identify and even design the hidden layers where reality becomes more than the sum of its parts. This is not merely an abstract exercise in information theory; Hoel argues that we can now engineer the very structures that allow brains, computers, and biological networks to function with clarity despite the noise of their underlying components. For anyone trying to understand how intelligence or life itself emerges from chaos, this new framework offers a potential roadmap that was previously invisible.

The Dream of Rock and Math

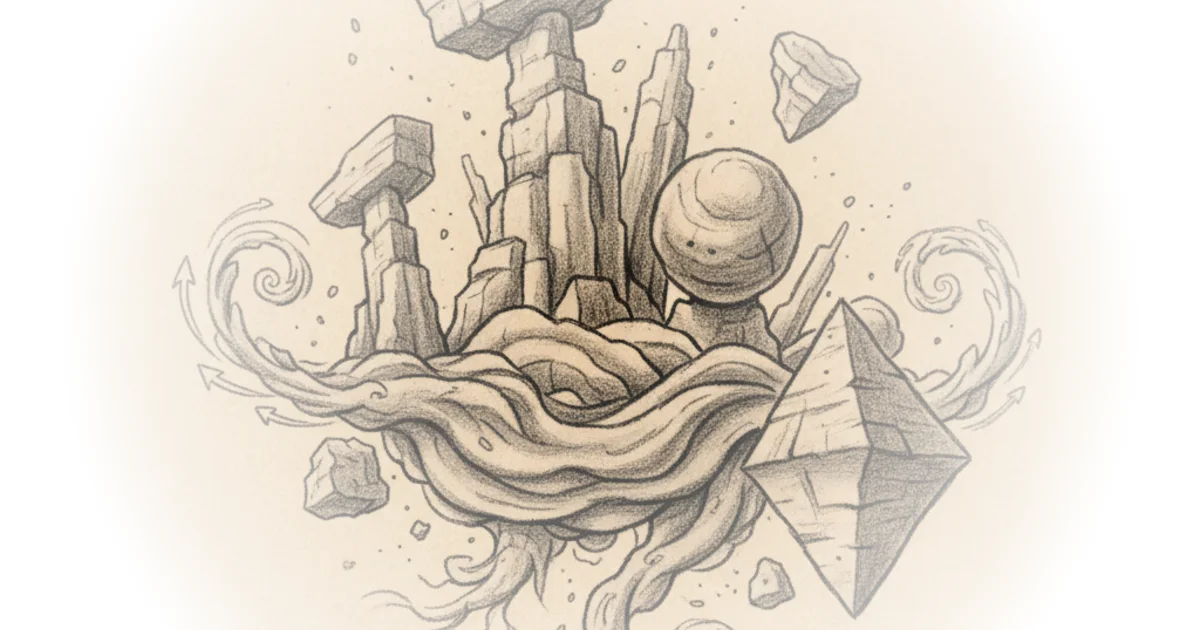

Hoel anchors his argument in a personal, almost mystical origin story, describing a dream where he visualized complex systems as "weathered rock faces with differing topographies." He writes, "They reminded me of rock formations you might see in the desert: some were 'ventifacts,' top-heavy structures carved by the wind and rain, while others were bottom-heavy, like pyramids." This vivid imagery serves as a metaphor for the mathematical landscape he has since mapped. The core of his new work, titled "Engineering Emergence," is the assertion that these "rock formations" are not just poetic flourishes but rigorous, calculable structures within any system.

The author revisits his 2013 theory of "causal emergence," which posited that higher-level descriptions of a system (macroscales) often possess a causal power that lower-level descriptions (microscales) lack. Hoel explains that this happens because macroscales are "multiply realizable," meaning they can "correct errors in causal relationships in ways their microscale cannot." This is a crucial distinction. It suggests that the "noise" inherent in the microscopic world—whether it's the jitter of individual neurons or the thermal vibration of atoms—is actually filtered out at higher levels of organization, creating a clearer, more deterministic signal.

"Conditional probabilities... can be much stronger between macro-variables than micro-variables, even when they're just two alternative levels of description of the very same thing."

This framing is powerful because it challenges the reductionist view that understanding the smallest parts is the only path to truth. However, critics might note that while the math is elegant, applying it to real-world biological or social systems remains fraught with the difficulty of defining what constitutes a valid "partition" or grouping of states. The theory assumes we can cleanly separate scales, but nature is often messy and continuous.

From Abstract Blobs to State Machines

To make his theory accessible, Hoel strips away the jargon and defines a "system" in its most fundamental etymological sense: "I cause to stand together." He simplifies complex networks into "Markov chains," or state machines, using the game of Snakes and Ladders as a relatable analogy. In this model, the current state of the board alone fixes the odds of each possible next state, regardless of the history of moves that led there. Hoel writes, "Being a 'Markov chain' is just a fancy way of saying that the current state solely determines the next state... Lots of board games can be represented as Markov chains."
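To make the board-game analogy concrete, here is a minimal sketch of a toy board encoded as a transition matrix. The board size, the two-sided die, the jump squares, and the names `build_board`, `step`, and `TOY_JUMPS` are all assumptions made for illustration; none of them come from Hoel's piece.

```python
from fractions import Fraction

def build_board(n_squares, jumps, die_faces):
    """Return a row-stochastic transition matrix for a toy board game.

    jumps maps a landing square to its destination (a ladder or a snake).
    A roll that overshoots the final square leaves the piece where it is.
    """
    last = n_squares - 1
    T = [[Fraction(0)] * n_squares for _ in range(n_squares)]
    for s in range(n_squares):
        if s == last:
            T[s][s] = Fraction(1)  # the final square is absorbing
            continue
        for roll in range(1, die_faces + 1):
            target = s + roll
            if target > last:
                target = s  # overshoot: stay put
            else:
                target = jumps.get(target, target)  # follow a ladder or snake
            T[s][target] += Fraction(1, die_faces)
    return T

def step(dist, T):
    """Advance a probability distribution over squares by one move."""
    n = len(T)
    return [sum(dist[i] * T[i][j] for i in range(n)) for j in range(n)]

# Hypothetical layout: a ladder from square 1 to 3, a snake from 4 to 0.
TOY_JUMPS = {1: 3, 4: 0}
T = build_board(6, TOY_JUMPS, die_faces=2)

dist = [Fraction(1)] + [Fraction(0)] * 5  # the piece starts on square 0
for _ in range(3):
    dist = step(dist, T)  # only the current distribution is needed
```

Each row of `T` depends only on the square the piece currently occupies, which is the Markov property in this setting: `step` never consults earlier moves.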

The innovation in this new paper, co-authored with Abel Jansma, is the ability to "squish" these state machines into different scales. Hoel describes this process as taking a "wellspring" of microstates and grouping them into larger chunks to form a macroscale. He notes that "some squishings are superior to others," distinguishing between a "junk" scale that introduces noise and a "good" scale that preserves causal clarity. The goal is to find the specific grouping where the system's behavior becomes most predictable and deterministic.
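The "squishing" step can be sketched as follows. This is an illustration under a stated assumption: microstates inside a group are weighted uniformly when computing the macroscale transition probabilities. The paper's actual construction may differ, and the function name `coarse_grain` and the four-state example are hypothetical.

```python
from fractions import Fraction

def coarse_grain(T, partition):
    """Collapse a micro transition matrix T onto a partition of its states.

    partition is a list of blocks, each a list of microstate indices.
    Assumption: microstates within a block are weighted uniformly.
    """
    macro = []
    for block_a in partition:
        row = []
        for block_b in partition:
            # Total probability of moving from block_a into block_b,
            # averaged over the microstates of block_a.
            mass = sum(T[i][j] for i in block_a for j in block_b)
            row.append(mass / len(block_a))
        macro.append(row)
    return macro

third = Fraction(1, 3)
# States 0-2 hop uniformly among themselves; state 3 always returns to itself.
micro = [
    [third, third, third, 0],
    [third, third, third, 0],
    [third, third, third, 0],
    [0, 0, 0, 1],
]

good_scale = coarse_grain(micro, [[0, 1, 2], [3]])  # fully deterministic macroscale
junk_scale = coarse_grain(micro, [[0, 3], [1, 2]])  # mixes unrelated states
```

In this toy case the first grouping yields a two-state machine in which each macrostate maps to itself with certainty, while the second grouping yields a noisier machine than the one it started from.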

"The microscale is the wellspring from which the multiscale structure emerges."

This section is particularly effective because it moves from the abstract to the operational. Hoel doesn't just say emergence exists; he provides a method to find it. He introduces metrics of "determinism" and "degeneracy" to score these scales. Determinism measures how concentrated the probabilities are (is the outcome certain?), while degeneracy measures how many different causes lead to the same effect. High determinism paired with low degeneracy indicates a robust emergent layer: each state leads reliably to one outcome, and different states lead to different outcomes. This is a significant step forward, as it turns a philosophical debate into a calculable engineering problem.
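One way to compute these two scores is sketched below. It assumes the normalized entropy-based definitions from earlier effective-information work, where degeneracy counts against a scale; the formulas in the new paper may differ. The function names and the example matrices are illustrative.

```python
from math import log2

def entropy(p):
    """Shannon entropy in bits of a probability vector."""
    return -sum(x * log2(x) for x in p if x > 0)

def determinism(T):
    """1 minus the average row entropy, normalized by log2(n)."""
    n = len(T)
    return 1 - sum(entropy(row) for row in T) / (n * log2(n))

def degeneracy(T):
    """1 minus the entropy of the average row, normalized by log2(n)."""
    n = len(T)
    avg = [sum(T[i][j] for i in range(n)) / n for j in range(n)]
    return 1 - entropy(avg) / log2(n)

def effectiveness(T):
    """Determinism minus degeneracy (assumed combination rule)."""
    return determinism(T) - degeneracy(T)

# Noisy four-state microscale: states 0-2 hop uniformly, state 3 is fixed.
micro = [[1/3, 1/3, 1/3, 0]] * 3 + [[0, 0, 0, 1]]
# Two-state macroscale obtained by grouping states 0-2 together.
macro = [[1, 0], [0, 1]]
# Deterministic but fully degenerate: every state leads to state 0.
all_to_one = [[1, 0], [1, 0]]

scores = {name: effectiveness(T)
          for name, T in [("micro", micro), ("macro", macro), ("all_to_one", all_to_one)]}
```

Under these assumed definitions the macroscale scores higher than the microscale it was built from, and the `all_to_one` machine scores zero despite being perfectly deterministic, because knowing the outcome tells you nothing about which state caused it.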

Engineering the Future

The most provocative claim in Hoel's piece is the shift from observation to engineering. He asserts that we are no longer limited to discovering emergent properties; we can now design them. "One of our coolest results is that we figured out ways to engineer causal emergence, to grow it and shape it," Hoel writes. This implies that if we understand the mathematical rules of how scales interact, we could theoretically design better AI architectures, more resilient biological systems, or more efficient communication networks by intentionally creating the "rock formations" that filter out noise.

However, the leap from a six-state toy model to the complexity of a human brain or a global economy is vast. While the math holds for the simplified systems Hoel presents, the number of possible partitions, given by the "Bell number," grows faster than exponentially with the number of states. As Hoel admits, "even for small systems there are a lot of them." Exhaustively searching for the optimal scale in a system with billions of states is currently out of reach. A counterargument worth considering is that the theory might be mathematically sound but practically intractable for the very complex systems where emergence matters most.
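To get a feel for that growth, the Bell numbers can be generated with the standard Bell triangle recurrence. This short sketch is only a counting aid, not part of Hoel's method; the function name `bell_numbers` is chosen here for illustration.

```python
def bell_numbers(n_max):
    """Return [B_0, ..., B_n_max], the number of ways to partition n items."""
    bells = [1]  # B_0: the empty set has exactly one partition
    row = [1]
    for _ in range(n_max):
        # Each new row of the Bell triangle starts with the last entry
        # of the previous row; every later entry adds its upper-left neighbor.
        new_row = [row[-1]]
        for value in row:
            new_row.append(new_row[-1] + value)
        row = new_row
        bells.append(row[0])
    return bells

bells = bell_numbers(20)
for n in (6, 10, 20):
    print(f"{n} states: {bells[n]} possible partitions")
```

A six-state system already has 203 partitions and a ten-state system has 115,975, which is why brute-force enumeration stops being an option almost immediately.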

"And, God help us all, I'm going to try to explain how we did that."

Despite the complexity, Hoel's confidence is infectious. He frames the work as a completion of a decade-long journey, stating, "This latest paper... completes a new and improved 'Causal Emergence 2.0' that keeps a lot of what worked from the original theory a decade ago but also departs from it in several key ways." His mention of "negating older criticisms" suggests a robustness that previous iterations lacked, particularly in allowing for multiple viable scales rather than just one.

Bottom Line

Erik Hoel's new framework successfully transforms the elusive concept of emergence into a tangible, calculable metric, offering a potential toolkit for designing more robust complex systems. While the leap from abstract state machines to real-world biological and social networks remains a significant hurdle, the ability to mathematically identify where "noise" becomes "signal" is a profound scientific advance. The strongest part of the argument is the move from passive observation to active engineering, but the biggest vulnerability lies in the computational scalability of the method for systems of immense complexity.