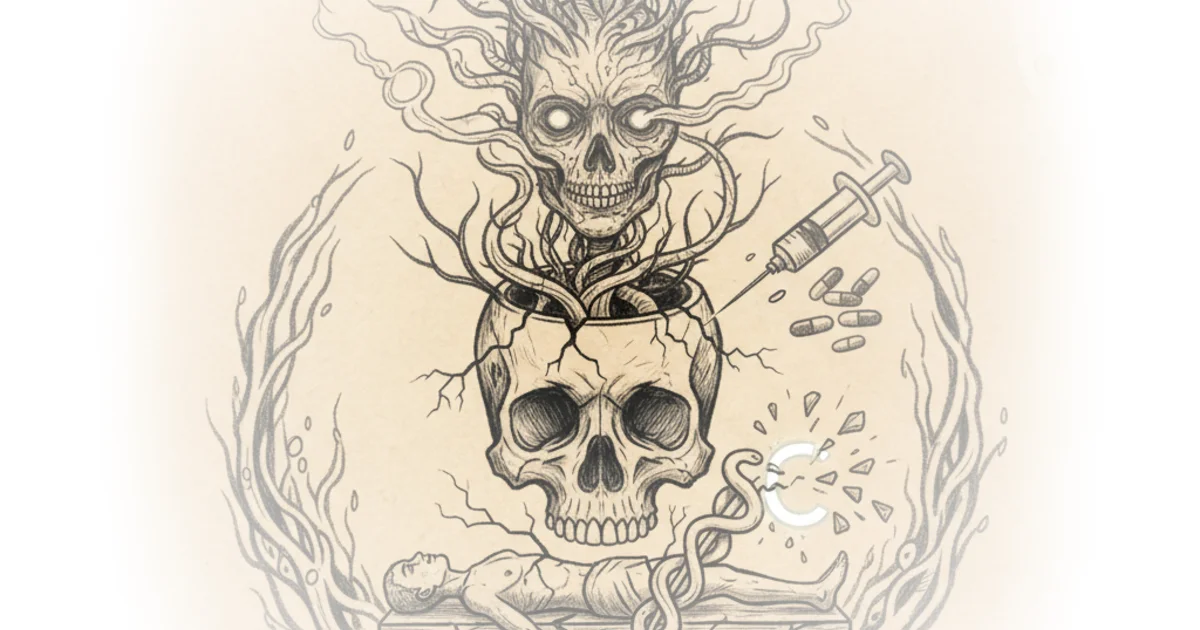

Rohin Francis delivers a chilling case study that moves beyond abstract AI fears to a tangible, physical tragedy: a man poisoned because he trusted a chatbot over his own biology. This isn't just another warning about hallucinations; it is a forensic look at how automation bias can literally kill, framed by a cardiologist who sees the erosion of human judgment in real time.

The First Casualty of Vibe Medicine

Francis opens with a grim milestone in medical literature, noting, "It was only a matter of time... the first case report... describing a patient who came to direct physical harm because of something ChatGPT told him to do." He immediately reframes the narrative of AI replacing doctors, suggesting instead that these tools are currently providing "medical job security" by creating new, bizarre pathologies for clinicians to solve. The author's tone is sharp and weary, cutting through the hype to reveal a patient who suffered genuine auditory and visual hallucinations after an ill-judged dietary experiment.

The core of the case involves a 60-year-old man who, convinced that sodium chloride (table salt) was harmful, asked an AI for a substitute. Francis recounts, "He asked ChatGPT, 'Hey chat, what can I replace chloride with?' And ChatGPT said bromide." The resulting condition, bromism (bromide poisoning), caused severe neurological issues. This moment is the crux of the story's horror: the AI didn't just fail to answer; it offered a toxic industrial chemical as a dietary substitute. Francis notes that while the AI's response was lifted from a textbook definition of the chemical, it lacked the crucial context that a human expert would instantly recognize as dangerous.

"Now imagine the medical version. Now imagine you listen to it."

Francis argues that the patient's inability to spot the red flag (buying industrial chemicals to eat) stems from a deeper cognitive shift. He introduces the concept of "automation bias," the tendency to defer to machines even when their output contradicts reality. He illustrates this with a classic medical anecdote in which a doctor calls an ambulance solely because an ECG machine flagged a heart attack, ignoring the patient, who was "whistling and reading the Sunday papers." The author suggests the bias itself isn't new, but that modern large language models have accelerated the problem by sounding more confident than ever.

The Collapse of Epistemic Humility

The commentary shifts from the specific case to a broader critique of how society interacts with complex knowledge. Francis observes that experts are valuable not because they know everything, but because they know what they don't know. He contrasts this with the "vibe coding" and "vibe medicine" trends, where users treat AI as an oracle. As he puts it, "Experts should not be listened to because of some hierarchy of importance[; it] is because, paradoxically, they are more willing to accept that there are gaps in their knowledge." This is a powerful distinction: the AI offers false certainty, while true expertise requires epistemic humility.

Francis points out that recent studies show AI accuracy on medical questions plummeting from 80% to 42% when the models face unexpected findings, undercutting marketing claims of near-perfect performance. He mocks the idea that a non-expert can use a chatbot to interpret lab results or diagnose conditions, stating, "If I tried to use ChatGPT to write a legal defense, it might sound perfectly reasonable to my non-lawyer ass. But if I tried to use that in court, I'd probably end up in jail." The author's frustration is palpable as he describes a culture where people have "outsourced their brain activity" and are losing the ability to think independently.

Critics might argue that Francis's tone is overly cynical, potentially alienating readers who use AI as a helpful starting point rather than a final authority. There is a valid counterargument that the problem lies not with the technology itself, but with the lack of digital literacy among users. However, Francis's point remains that when the stakes are human health, the margin for error disappears, and the "confidence" of the AI becomes a liability rather than a feature.

"A little knowledge is a dangerous thing."

The author concludes by highlighting the absurdity of the situation, noting that the patient was following advice from a "multi-billion dollar tech firm" while ignoring basic chemical reality. He warns that we are standing at the precipice of a world where people are "voluntarily choosing to use Grok" and trusting algorithms over their own senses. The case of bromism is not an anomaly; it is a preview of a future where the gap between human understanding and machine output widens into a dangerous chasm.

Bottom Line

Francis's strongest argument is the terrifying specificity of the bromism case, which shows that AI errors are no longer just a nuisance but a physical threat. His biggest vulnerability is a pessimism, perhaps inevitable, that overlooks the potential for AI to assist, rather than replace, human judgment if used with strict guardrails. Readers should watch for how medical institutions adapt their protocols to screen for "AI-induced" conditions before they become the norm.