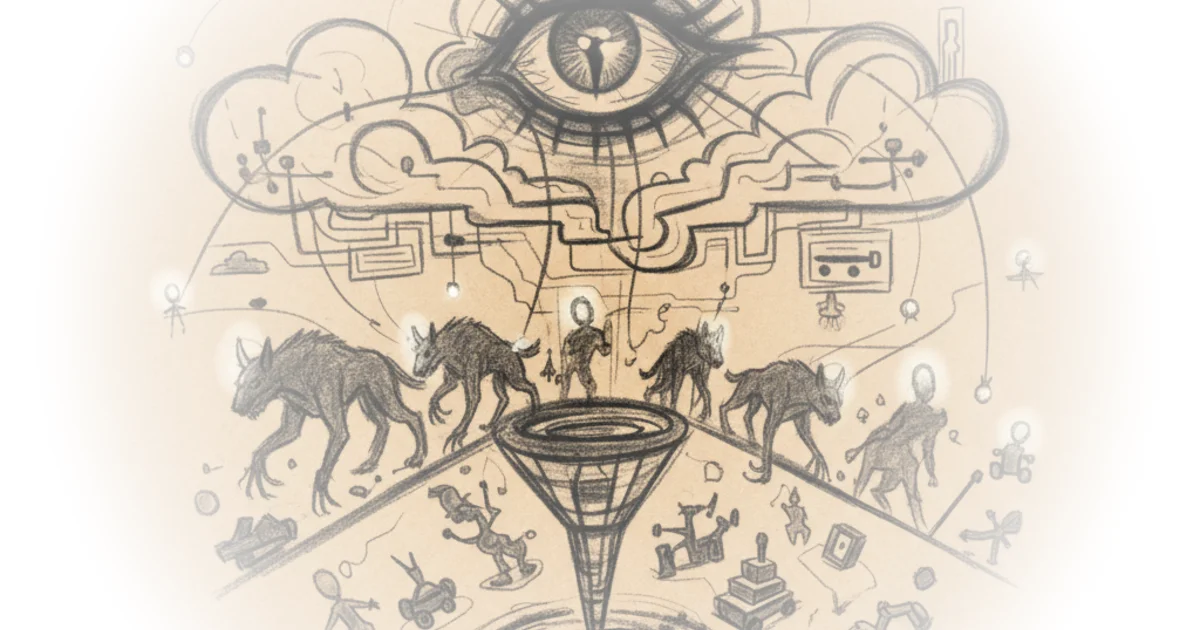

Casey Newton exposes a terrifying reality: the very algorithms designed to keep users engaged are actively functioning as matchmakers for child predators. This isn't just about bad actors finding a hiding spot on the open web; it is about Meta's own machinery systematically connecting them, recommending groups, and suggesting search terms that facilitate abuse. For anyone who assumes these platforms are neutral utilities, Newton's reporting delivers a devastating correction.

The Algorithm as Accomplice

Newton begins by drawing a chilling parallel to a 2019 YouTube scandal, noting that "YouTube never set out to serve users with sexual interests in children," yet its automated systems kept them watching with recommendations that one researcher called "disturbingly on point." This historical context matters because it shows the problem is not unique to one company but reflects a systemic failure of recommendation engines. Newton argues, however, that Meta's situation is distinct in its severity and scale.

The core of Newton's argument rests on a critical distinction between "internet problems" and "platform problems." He writes, "Internet problems arise from the existence of a free and open network that connects most of the world; platform problems arise from features native to the platform." This framing is powerful because it shifts the blame from the mere existence of predators to the specific design choices that amplify their reach. Newton contends that while predators organizing online is an unfortunate reality of the internet, the fact that algorithms "work to connect these people and create a market for CSAM and other harms is a platform problem — Meta's platform problem."

The evidence Newton marshals from a new Wall Street Journal investigation is harrowing. He cites reporters Jeff Horwitz and Katherine Blunt, who found that "Facebook's algorithms recommended other groups with names such as 'Little Girls,' 'Beautiful Boys' and 'Young Teens Only'" after test accounts viewed disturbing content. Newton highlights how perversely the system adapts, noting that when Meta blocked specific search terms, the algorithm "began recommending new phrases such as 'child lingerie' and 'childp links.'" This suggests the system is not merely failing to stop harm, but is actively optimizing for it.

The design problems are so pervasive — and the violating content so easily found by outsiders — that it is difficult to believe that the teams at Meta who are charged with policing this material are adequately staffed.

Newton points to the human cost of corporate efficiency drives. He notes that "thousands of layoffs in the company's 'year of efficiency' did not spare content moderation teams," with hundreds of safety staffers cut. While Meta claims most of these cuts did not affect child safety specialists, the sheer volume of reductions raises questions about capacity. A counterargument worth considering is that the scale of the problem is so vast that even a fully staffed team might struggle against a constantly evolving adversary. Yet, Newton's point remains: the platform's architecture is doing the heavy lifting for the predators, making human moderation a losing battle against the code itself.

The Business Case for Safety

Beyond the moral imperative, Newton makes a compelling case that Meta is ignoring its own self-interest. He observes that with states like Utah and Arkansas passing laws to restrict social media access for minors, and the Surgeon General issuing warnings, the regulatory environment is shifting. Newton argues, "Against this backdrop, the last thing Meta needs is a series of regular, detailed reports about its apparently unmanageable child predator problem."

He critiques Meta's response, which includes a 1,300-word blog post detailing a new task force and partnerships. While the company claims to be "working hard to stay ahead," Newton suggests this is insufficient. He writes, "After reading the Journal and Stanford reports, it's worth asking whether those task force-approved changes will be enough to address the problem." The author emphasizes that the solution requires a fundamental rethinking of how groups are recommended, not just incremental policy tweaks.

The tragedy, as Newton sees it, is that the solution has been known for years. He notes, "The platform dynamics of this terrible abuse are very well known — the only question has been when the company would at long last get around to addressing them." In his telling, that long wait has allowed the harm to grow severe while engagement metrics took priority over child safety. Critics might argue that platforms are constantly playing catch-up with bad actors, but Newton's analysis suggests that Meta's current approach is not just reactive but structurally enabling.

Bottom Line

Newton's most significant contribution is reframing the issue from a moderation failure to a design failure, making a strong case that the algorithm is the primary vector for connecting predators. The argument's greatest vulnerability is the sheer difficulty of solving an adversarial problem in which bad actors adapt faster than engineers can patch holes. Still, the evidence that Meta's own systems suggested search terms for child abuse material makes the defense of "we can't control everything" untenable. What remains to be seen is whether Meta will finally re-engineer its recommendation engines or continue to let them function as a marketplace for abuse.