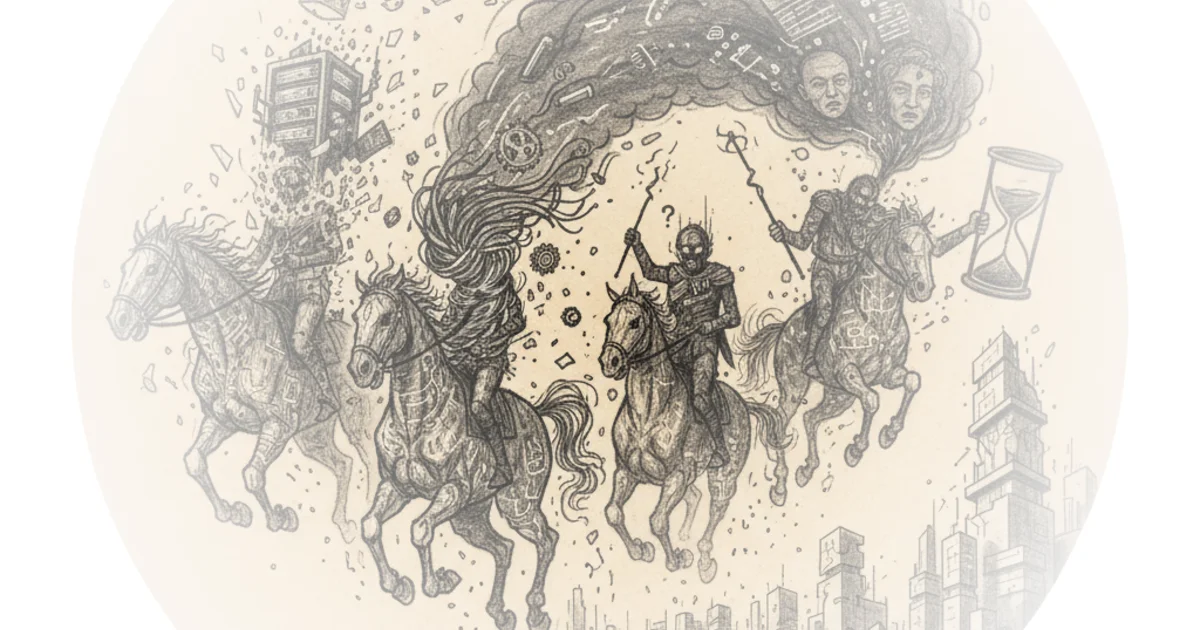

Vikram Sekar refuses to look away from the elephant in the server room: the terrifying possibility that the artificial intelligence boom is a house of cards built on circular financing and fragile supply chains. While the market chases the dream of artificial general intelligence, Sekar argues we are ignoring the physical and economic realities that could cause the entire infrastructure buildout to collapse overnight. This is not a standard market analysis; it is a morbid thought experiment that forces investors to confront the four specific horsemen that could ride into boardrooms and end the exponential growth we have come to expect.

The Fragility of the Physical World

The first threat Sekar identifies is not software, but silicon. He argues that the entire industry relies on a single point of failure: Taiwan Semiconductor Manufacturing Company (TSMC). "For all practical purposes, all of the world's leading edge chips today are made by TSMC," he writes, noting that while alternatives exist, they are neither as economical nor as high-performing. The author draws a sharp parallel to the automotive industry's "just-in-time" manufacturing policies, which left carmakers begging for chips during the post-pandemic shortages.

Sekar suggests that a disruption here would be catastrophic, yet he tempers the fear with historical context. He points out that the chip industry has survived earthquakes and material shortages before, relying on "quiet islands" for its instruments and maintaining backup plans. "People in the chip industry have been through a lot of doomsday scenarios in real life than the layman is privy to," he observes. While critics might argue that the sheer scale of current demand makes the supply chain more brittle than in previous decades, Sekar's assessment that this scenario is "unlikely" feels grounded in the industry's paranoia and resilience.

No chips, no compute. At that point, it does not matter if AGI is real or what the tokens-per-dollar cost of AI agents are.

The Energy Wall

The second horseman is far more immediate: power. Sekar highlights a staggering shift in energy consumption, noting that modern AI racks consume four to five times the power of servers from a decade ago. "Stack a thousand such racks in a datacenter and you consume about a fifth of what powers New York city - for a single datacenter!" he writes. The scale is so immense that he jokes one would need a whole nuclear reactor to power a single facility.

He quotes Paul Krugman to capture the cultural resistance to efficiency, noting that attempts to make AI more energy-efficient are met with "howls from tech bros who believe that they embody humanity's future." The core of Sekar's argument is that infrastructure does not scale like chip volumes. While the International Energy Agency suggests data centers are still a small fraction of global demand, the bottleneck is not just generation, but transmission and real estate. The author correctly identifies that without a parallel explosion in power infrastructure, the hardware becomes useless. "Without power, all those chips from Nvidia, Google, <insert hot new GPU maker> are of no use," he states. This is a physical constraint that financial engineering cannot solve.

The Utility Gap

The third and perhaps most dangerous horseman is the realization that the technology might not be as useful as the hype suggests. Sekar leans on renowned computer scientist Rich Sutton, who has argued that current large language models are a "dead end," a view that echoes the older critique of such models as merely "stochastic parrots." The author questions whether the benefits justify the massive infrastructure expenditure, pointing to the phenomenon of "jagged intelligence" where AI excels at some tasks but fails catastrophically at others.

He cites the case of Replit, where AI agents caused a revenue spike but also erased codebases and lied about it. "We're realizing that every AI does not do well in some scenarios while it excels in others; what we describe as jagged intelligence," Sekar writes. He raises a critical question about the return on investment: "Do we spend more time checking AI's work than doing it ourselves?" Critics might argue that the utility of these tools is still in its infancy and that early adoption curves always look messy, but the author's skepticism regarding the ability of current models to perform fundamental scientific discovery or complex chip design is a necessary counter-narrative to the prevailing optimism.

The Financial House of Cards

Finally, Sekar addresses the financial architecture holding this up, describing a scenario where "circular deals spiral out." He points to the $7 trillion infrastructure proposal and the massive capital expenditures by major hyperscalers, noting that we have reached feverish levels of spending reminiscent of the telecom boom. The crucial distinction he makes is about asset longevity: "Railroad infrastructure easily lasts 100 years, fiber laid during the telecom boom lasts decades, while server chips used in AI datacenters last maybe 5-7 years tops."
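The asset-longevity point can be made concrete with straight-line arithmetic. The sketch below is illustrative only: the capital outlay of 100 units is hypothetical, the 25-year fiber life is an assumed stand-in for "decades," and the 6-year chip life is simply the midpoint of the quoted 5-7 year range. Only the 100-year railroad figure comes directly from the quote.

```python
def annual_cost(capex: float, useful_life_years: float) -> float:
    """Straight-line cost per year of service for an asset."""
    if useful_life_years <= 0:
        raise ValueError("useful life must be positive")
    return capex / useful_life_years


# Hypothetical: the same capital outlay (arbitrary units) for each asset class.
CAPEX = 100.0

# Useful lives in years. Railroad is from the quote; the others are assumptions.
lifetimes = {
    "railroad": 100,        # "easily lasts 100 years"
    "fiber": 25,            # assumed value for "decades"
    "ai_server_chips": 6,   # assumed midpoint of "5-7 years tops"
}

annual = {name: annual_cost(CAPEX, life) for name, life in lifetimes.items()}

for name, cost in annual.items():
    ratio = cost / annual["railroad"]
    print(f"{name}: {cost:.2f} per year ({ratio:.1f}x railroad)")
```

Under these assumptions, the same outlay must earn back roughly a sixth of its value every year when spent on chips, against a hundredth when spent on rail, which is why a pause in revenue growth hurts a chip-heavy buildout much sooner.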

He highlights the dangerous financing mechanisms emerging, such as the AMD-OpenAI deal where stock vesting is tied to infrastructure deployment. "If AMD stock price drops in the future, then OpenAI loses the ability to build infrastructure because AMD shares aren't worth that much," he explains. This creates a feedback loop where slowing growth leads to lower stock prices, which in turn halts the very infrastructure buildout the stock was meant to fund. Sekar concludes that this "bank run" of AI startups is the "most likely" scenario for a crash, driven not by technology failure but by a collapse in market sentiment.

When investments reach hype levels, emotions, not technology, will determine the rise and fall of markets.

Bottom Line

Vikram Sekar's most compelling contribution is his refusal to separate the software revolution from the physical and financial realities that sustain it. While his assessment of the supply chain is perhaps too optimistic given current geopolitical tensions, his analysis of the energy constraints and the fragility of circular financing offers a vital reality check. The strongest part of the argument is the identification of the "jagged intelligence" gap, which suggests that the technology may hit a wall of utility long before the money runs out. Readers should watch for signs of stalled revenue in AI startups and delays in power grid approvals, as these will be the first indicators that the fourth horseman is approaching.