When Evidence Can Be Deepfaked, How Do Courts Decide What's Real?

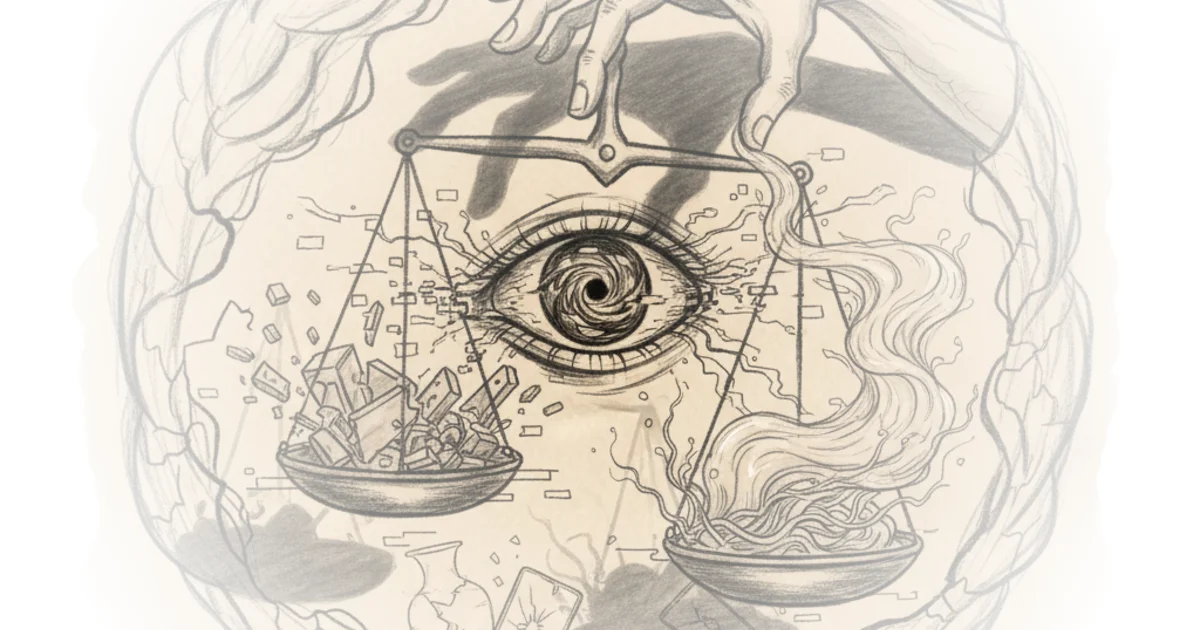

The Walrus tackles one of the most unsettling collisions of our moment: the criminal justice system, built on eliminating reasonable doubt, meets artificial intelligence, a factory for manufacturing it. When photographs and audio recordings can be synthesized in minutes, the entire foundation of evidentiary trust begins to crack.

The Erosion of Visual Truth

For years, a woman in Edmonton, identified in the article as P, kept a box labeled "insurance" on a back shelf of her house. Inside was not paperwork but smashed phones, broken eyeglasses, and photographs of bruises left by her husband's violence. "I had a circle of blue bruises around my mouth because he would cover my mouth and smother me," she told The Walrus. Such evidence carries enormous weight in court. The Walrus writes, "Photographs, videos, and audio recordings are highly persuasive to judges and juries." One study the article cites found that combining visual and oral testimony can increase jurors' information retention by 650 percent.

Yet this evidentiary advantage is vanishing. Criminal defence lawyer Emily Dixon notes that clients who bring in exonerating photos are not expected to run analytic tests before submitting them; for now, it is reasonable to assume a photo is real. Specialists can still spot the anomalies that distinguish AI-generated images from real photos, but that window is closing. "But in a year," digital forensics expert Simon Lavallée told The Walrus, "we won't."

Maura R. Grossman, a lawyer who has promoted advanced technologies for legal document review, believes Canada's evidence laws require an overhaul. "Before, if I wanted to fake your signature, I had to have some talent," she told The Walrus. "In this day and age, you could make a deepfake of my voice in two minutes." Grossman has written that juries may become increasingly skeptical of all evidence. For complainants like P, this erosion carries a high cost: left with only doubt, courts may rely on instincts indistinguishable from prejudices.

"The risk of impacts on people's lives is catastrophic," Ryan Fritsch, the lawyer who headed the Law Commission of Ontario's initiative, told The Walrus.

The Law Commission of Ontario (LCO) brought together police officers, defence attorneys, prosecutors, judges, and human rights advocates to formulate recommendations for the Ontario government. The Walrus describes the project as confronting "a head-on collision between two epistemic systems: the criminal justice system, which rests on the elimination of reasonable doubt, and artificial intelligence, a factory for doubt dissemination."

Critics might note that forensic science has always faced authenticity challenges—photographs were once suspect, DNA evidence faced scrutiny, and each technology developed verification methods. The assumption that deepfakes will outpace detection may underestimate the adaptive capacity of digital forensics.

Algorithmic Risk Assessment and Its Blind Spots

P tells The Walrus she is excited about criminal risk assessments performed by artificial intelligence. To her, justice system workers seemed overly swayed by her husband's charm. "These people are trying to predict outcomes, going off their imagination, their feelings, their gut instinct," she said. "And AI takes all of that out."

Since the 1990s, statistical risk assessments have sorted offenders by security level and parole eligibility across Canadian jurisdictions. Algorithmic models quickly rivalled expert psychologist assessments. But prisons and parole boards do not explain decisions with the granularity courts require.

When algorithmic risk assessment tools entered sentencing in the United States during the 2010s, problems emerged. In 2016, ProPublica published a landmark study finding racial bias in COMPAS, a widely used tool. Studies of similar Canadian tools, such as the Level of Service Inventory, found comparable results. Models rely on historical arrest data, and racialized communities are more heavily policed than white neighbourhoods. The Walrus explains, "algorithmic predictions tend to overstate the likelihood of racialized people committing future crimes and understate these risks for white offenders."
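Disparities of the kind The Walrus describes are commonly measured by comparing error rates across groups. The following is a minimal sketch of that comparison, assuming entirely made-up records: the group labels "A" and "B", the counts, and the `error_rates` helper are hypothetical and are not drawn from ProPublica, COMPAS, or any real dataset.

```python
from collections import defaultdict

def error_rates(records):
    """Return {group: (false_positive_rate, false_negative_rate)}.

    Each record is a tuple (group, flagged_high_risk, reoffended).
    """
    counts = defaultdict(lambda: {"fp": 0, "tn": 0, "fn": 0, "tp": 0})
    for group, flagged, reoffended in records:
        if flagged and not reoffended:
            counts[group]["fp"] += 1   # flagged, but did not reoffend
        elif not flagged and not reoffended:
            counts[group]["tn"] += 1
        elif not flagged and reoffended:
            counts[group]["fn"] += 1   # not flagged, but did reoffend
        else:
            counts[group]["tp"] += 1

    rates = {}
    for group, c in counts.items():
        did_not_reoffend = c["fp"] + c["tn"]
        did_reoffend = c["fn"] + c["tp"]
        fpr = c["fp"] / did_not_reoffend if did_not_reoffend else 0.0
        fnr = c["fn"] / did_reoffend if did_reoffend else 0.0
        rates[group] = (fpr, fnr)
    return rates

# Hypothetical records, invented purely for illustration.
records = (
    [("A", True, False)] * 4 + [("A", False, False)] * 6
    + [("A", False, True)] * 2 + [("A", True, True)] * 8
    + [("B", True, False)] * 2 + [("B", False, False)] * 8
    + [("B", False, True)] * 4 + [("B", True, True)] * 6
)

rates = error_rates(records)
for group, (fpr, fnr) in sorted(rates.items()):
    print(group, "false positive rate:", fpr, "false negative rate:", fnr)
```

In this invented data, group A is wrongly flagged twice as often as group B, while group B's actual reoffenders are missed twice as often. That is the shape of the pattern the article describes as overstating risk for one group and understating it for another.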

Equivant, the company that owns COMPAS, disputes that the tool is racially biased. Some subsequent independent studies found ProPublica's results overblown. Yet the structural problem persists: because poor, mentally ill, or racialized people are disproportionately arrested, belonging to these categories can make someone appear more likely to commit crimes.
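The structural problem can be illustrated with simple arithmetic. The sketch below assumes two hypothetical neighbourhoods with the same underlying offence rate but different policing intensity; every number and name in it is invented for illustration and does not describe any real jurisdiction or tool.

```python
def expected_arrests(population, offence_rate_pct, detection_rate_pct):
    """Arrests recorded when a fixed share of offences is detected.

    Integer percentages keep the arithmetic exact.
    """
    offences = population * offence_rate_pct // 100
    return offences * detection_rate_pct // 100

POPULATION = 1000       # hypothetical residents per neighbourhood
OFFENCE_RATE_PCT = 10   # hypothetical, identical in both neighbourhoods

# Only the share of offences that police detect differs.
lightly_policed = expected_arrests(POPULATION, OFFENCE_RATE_PCT, 20)
heavily_policed = expected_arrests(POPULATION, OFFENCE_RATE_PCT, 60)

print(lightly_policed, heavily_policed)    # 20 60
print(heavily_policed / lightly_policed)   # 3.0
```

A model trained on these arrest counts would see three times as much recorded crime in the heavily policed neighbourhood, even though, by construction, behaviour is identical in both.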

The Cross-Examination Problem

The Walrus poses a fundamental question: "How do you cross-examine an algorithm?" Gideon Christian, the University of Calgary's research chair of AI and law, and lawyer Armando D'Andrea posed this problem in a paper commissioned by the LCO. If a risk assessment is classified as expert opinion, the expert proffering it would typically be expected to appear in court. With AI tools, even a software engineer who designed the program could not vouch for what the program was actually doing.

The Walrus quotes Tim Brennan, who originally designed COMPAS: "You should be able to explain why a person got the score and what the score means... An AI technique like random forests"—a statistical sampling method involving hundreds of decision trees branching into further decision trees—"is a great way to do prediction. But you cannot, for your life, explain it to a judge."
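Brennan's point about explainability can be made concrete with a toy model. The sketch below is a heavily simplified stand-in for a random forest: it uses one-split "stumps" rather than full decision trees, and the data, feature columns, and function names are all hypothetical, not taken from COMPAS or any real tool. It keeps the two ingredients that characterize the method (each tree trains on a bootstrap resample and sees only a random subset of features) and shows that the final score is simply the share of trees voting "high".

```python
import random

def train_stump(rows, feature_indices):
    """Pick the (feature, threshold) rule with the fewest training errors.

    A stump predicts 1 when the chosen feature exceeds the threshold.
    """
    best = None
    for f in feature_indices:
        for threshold in sorted({x[f] for x, _ in rows}):
            errors = sum((x[f] > threshold) != bool(y) for x, y in rows)
            if best is None or errors < best[0]:
                best = (errors, f, threshold)
    _, f, threshold = best
    return f, threshold

def train_forest(rows, n_trees=200, seed=0):
    """Train n_trees stumps, each on its own resample and feature subset."""
    rng = random.Random(seed)
    n_features = len(rows[0][0])
    forest = []
    for _ in range(n_trees):
        sample = [rng.choice(rows) for _ in rows]       # bootstrap resample
        features = rng.sample(range(n_features), k=2)   # random feature subset
        forest.append(train_stump(sample, features))
    return forest

def predict(forest, x):
    """Score = fraction of stumps voting 1 for input x."""
    votes = sum(x[f] > threshold for f, threshold in forest)
    return votes / len(forest)

# Hypothetical training data: three numeric features and a 0/1 label.
rows = [
    ((1, 2, 1), 0), ((2, 1, 3), 0), ((3, 3, 2), 0),
    ((6, 7, 8), 1), ((7, 8, 6), 1), ((8, 6, 7), 1),
]

forest = train_forest(rows)
borderline = (2, 7, 2)  # low on two features, high on one
print("score:", predict(forest, borderline))
print("distinct rules in the forest:", len(set(forest)))
```

Even in this toy version, the score for the borderline case is a tally over two hundred separate rules rather than the outcome of one stated reason. With real trees that branch many levels deep, the tally becomes correspondingly harder to narrate.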

AI is weakest where the justice system prizes strength most: meeting standards of evidence and reasoning. AI systems obscure information and processes, failing to provide the full disclosure the law requires. For defendants hoping to appeal sentences, this missing information could be key to overturning decisions that deprive them of liberty or life. The inner workings of AI software are proprietary information owned by private companies. In the United States, courts have sided with corporations arguing they cannot be compelled to disclose trade secrets, even when their products are used to send people to death row.

The Self-Representation Gap

In a 2025 British Columbia Civil Resolution Tribunal case, tribunal member Eric Regehr posited that both parties must have used AI to write their submissions. The Walrus quotes his reasoning: "there is no way a human being" could have made some of the errors these submissions contained. He did not consider himself obliged to respond to every specious argument the software threw his way.

"I accept that artificial intelligence can be a useful tool to help people find the right language to present their arguments, if used properly," Regehr wrote. "However, people who blindly use artificial intelligence often end up bombarding the CRT with endless legal arguments."

The promise of AI for self-representation is tantalizing. Lawyers are expensive. Rates for criminal defence attorneys can hit $400 an hour, and many legal aid applicants are denied. The number of people representing themselves in court is growing, most markedly in family law cases. Depending on the nature of the case, self-represented Canadians using Microsoft Copilot or ChatGPT may face opposing counsel using more sophisticated tools. An inventory compiled in June 2025 found 638 generative AI tools available in the "legaltech" field.

Within Canada, non-profits are attempting to level the playing field. Beagle+ is a free legal chatbot trained on British Columbia law, built by People's Law School and powered by ChatGPT. The Conflict Analytics Lab at Queen's University built OpenJustice, an open-access AI platform drawing from Canadian, US, Swiss, and French law. Samuel Dahan, the law professor overseeing the project, noted the idea was to build a Canadian large language model from scratch. "The sad reality of universities right now is that there's no university in the world that has the resources to build a language model," Dahan told The Walrus.

Grossman suggested AI could serve average people in civil contexts. When earrings she bought online arrived with a bent post, the seller responded abusively. Grossman made her case in the website's online dispute resolution system. It scanned her photo, looked at item value, tabulated comparable transactions, and awarded her $4.38. Grossman asked readers to imagine she was a tenant, the other party a landlord illegally withholding her security deposit. The online adjudication system might not be perfect; it could award less than the full amount. But the case might be over in a week.

Critics might argue that online dispute resolution systems already exist without AI and function adequately for small claims. The assumption that AI adjudication inherently speeds resolution overlooks the complexity of many disputes requiring human judgment.

Bottom Line

The Walrus exposes a foundational vulnerability: the justice system depends on evidence that can be verified, explained, and cross-examined. AI threatens all three. Deepfakes undermine verification. Algorithmic opacity blocks explanation. Proprietary systems prevent cross-examination. The catastrophic risk Fritsch names is not abstract—it is the difference between liberty and deprivation for people whose cases hinge on evidence that cannot be trusted, scores that cannot be explained, and systems that cannot be questioned.