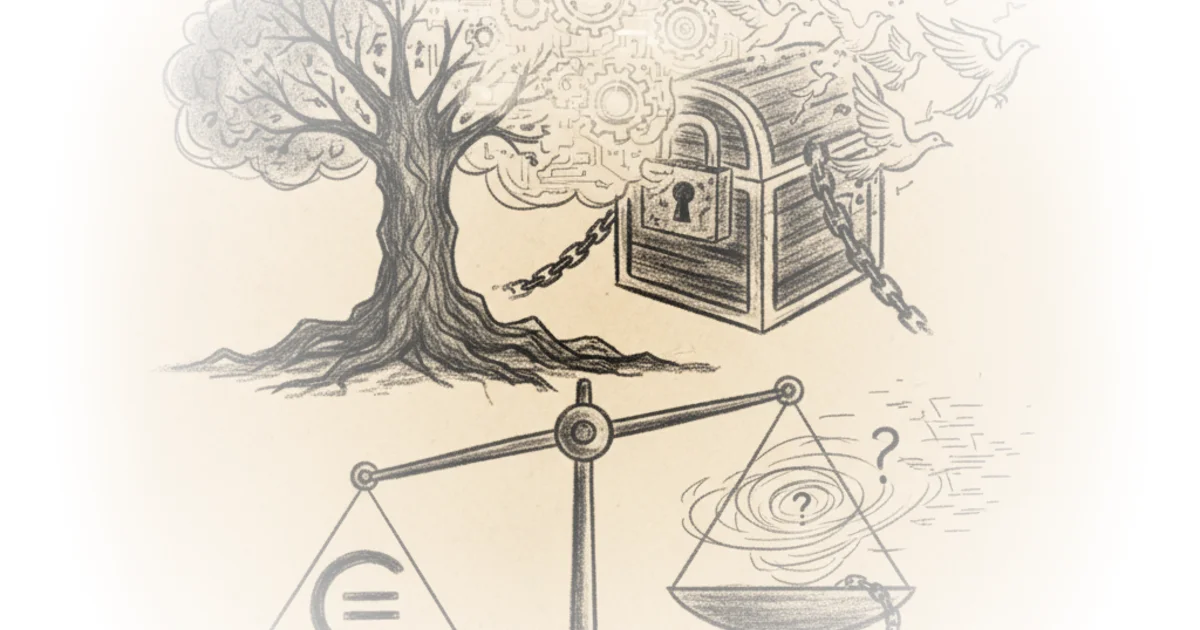

Cory Doctorow exposes a paradox at the heart of open source: a service promising to liberate corporations from copyleft obligations may actually liberate the code itself. Malus.sh claims to use AI clean-room reimplementation to produce proprietary versions of free software. Doctorow's counterintuitive conclusion: the output cannot be proprietary at all.

The Clean-Room Precedent

Doctorow opens with the legal architecture underlying Malus. "Malus relies on a legal precedent set in 1982, in which IBM brought a copyright suit against a small upstart called Columbia Data Products for reverse-engineering an IBM software product." The Columbia defense used two isolated teams: one examined IBM's code and wrote specifications; the other built from those specifications without ever seeing the original code. This is the historical foundation of clean-room design, a technique that enabled everything from PC BIOS compatibility to GNU/Linux reimplementing AT&T Unix.

Malus pairs two LLMs in the same pattern, "the first of which analyzes a free software program and prepares a specification for a program that performs the identical function. The second program receives that specification and writes a new program." The Malus FAQ states plainly: "Legally distinct code with corporate-friendly licensing. No attribution. No copyleft. No problems."

This framing is effective because it names the corporate desire explicitly. Companies want the commons without the social contract.
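The two-stage pattern described above can be sketched in a few lines. This is only an illustration of the information barrier, not Malus's actual implementation: the names `Specification`, `clean_room`, `stub_analyst`, and `stub_implementer` are hypothetical, and the stubs stand in for what the service describes as two separate LLMs. The sketch says nothing about whether such a pipeline is legally "clean" in practice.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Specification:
    """Functional description only; it should carry no original source text."""
    behaviors: tuple[str, ...]


# Hypothetical stage signatures. In the service described above each stage
# would be an LLM; here they are plain callables so the sketch is runnable.
Analyst = Callable[[str], Specification]
Implementer = Callable[[Specification], str]


def clean_room(source: str, analyst: Analyst, implementer: Implementer) -> str:
    """Run both stages so that only the specification crosses the wall."""
    spec = analyst(source)       # stage 1 reads the original code
    return implementer(spec)     # stage 2 receives the spec, never `source`


def stub_analyst(source: str) -> Specification:
    # Stand-in for stage 1: record each top-level function name as a behavior.
    names = [
        line.split("(")[0].removeprefix("def ").strip()
        for line in source.splitlines()
        if line.startswith("def ")
    ]
    return Specification(behaviors=tuple(f"provide function {n}" for n in names))


def stub_implementer(spec: Specification) -> str:
    # Stand-in for stage 2: emit a skeleton using the specification alone.
    chunks = []
    for behavior in spec.behaviors:
        name = behavior.removeprefix("provide function ")
        chunks.append(f"def {name}(*args):\n    raise NotImplementedError")
    return "\n\n".join(chunks)


original = "def add(a, b):\n    return a + b\n"
rewritten = clean_room(original, stub_analyst, stub_implementer)
```

The design point is the type boundary: `stub_implementer` accepts a `Specification` and has no parameter through which the original source could reach it, which mirrors the second team in the Columbia arrangement.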

The Real Threat Isn't AI

Doctorow argues the enclosure threat predates AI. "Most of the foundational free software projects were created under older licenses that did not contemplate cloud computing and software as a service." When code runs on corporate servers and never reaches users, copyleft obligations never trigger. "Big companies have 'software freedom' and we've got 'open source' — the impoverished right to look at the versions sitting on Github."

There's also tivoization: distributing free software in hardware while using digital locks to prevent modification. Doctorow notes Section 1201 of the DMCA makes circumventing these locks a felony. "It becomes a crime to use modified software on your own device." This echoes the history of the Xerox 914 copier: machines so prone to fire that they shipped with extinguishers, yet customers remained locked into service contracts. Hardware control is a long-standing enclosure vector.

A counterargument worth considering: Doctorow minimizes the demoralization risk. Even if, as he goes on to argue, corporations can't legally enforce proprietary claims on AI output, a flood of public-domain clones could confuse users and fragment communities. The chaos itself has a cost.

The Fatal Flaw

Doctorow's central insight rests on copyright law: "Software written by AI is not eligible for a copyright, because nothing made by AI is eligible for copyright." He grounds this in the human-authorship requirement: "Copyright is awarded solely to works of human authorship. This fact has been repeatedly affirmed by the US Copyright Office, which has fought appeals of this principle all the way to the Supreme Court."

This means Malus output is born in the public domain. "You can't stick a license agreement or terms of service between me and the product that binds me to pretend that your public domain software is copyrighted — that's also not allowed under copyright." If a corporation pays Malus to clone free software, they cannot stop competitors from copying their clone. "I can make a competing product that reproduces all of your code and sell it at a 99% discount. There's nothing you can do to stop me, any more than you could stop me from giving away the text of a Shakespeare play you sold me."

This is the piece's strongest move: transforming the threat into its own antidote.

As Doctorow puts it: "If corporations are foolish enough to reimplement their code using an LLM, and in so doing, create a vast new commons of public domain software, well, that's not exactly the freesoftwarepocalypse, is it?"

Doctorow reframes reimplementation itself as foundational to free software. "GNU/Linux itself is a reimplementation of AT&T Unix. Free software authors re-implement each other's code all the time." He cites a recent reimplementation of the Raspberry Pi PIO module, which escaped patent encumbrances, as evidence that clean-room work can be liberating.

Critics might note this assumes corporations will actually release AI output rather than keep it internal. If the clone is never distributed, its copyright status matters less than the enclosure already achieved.

Bottom Line

Doctorow's core argument holds: AI-generated code cannot be copyrighted, so Malus cannot deliver proprietary software as promised. His strongest evidence is the Copyright Office's consistent human-authorship requirement. The vulnerability: he understates how cloud hosting and hardware locks already enable enclosure without needing copyright. The real fight isn't about AI reimplementation; it's about updating copyleft for the service era. Watch for license reforms targeting software delivered as a service, not AI output.