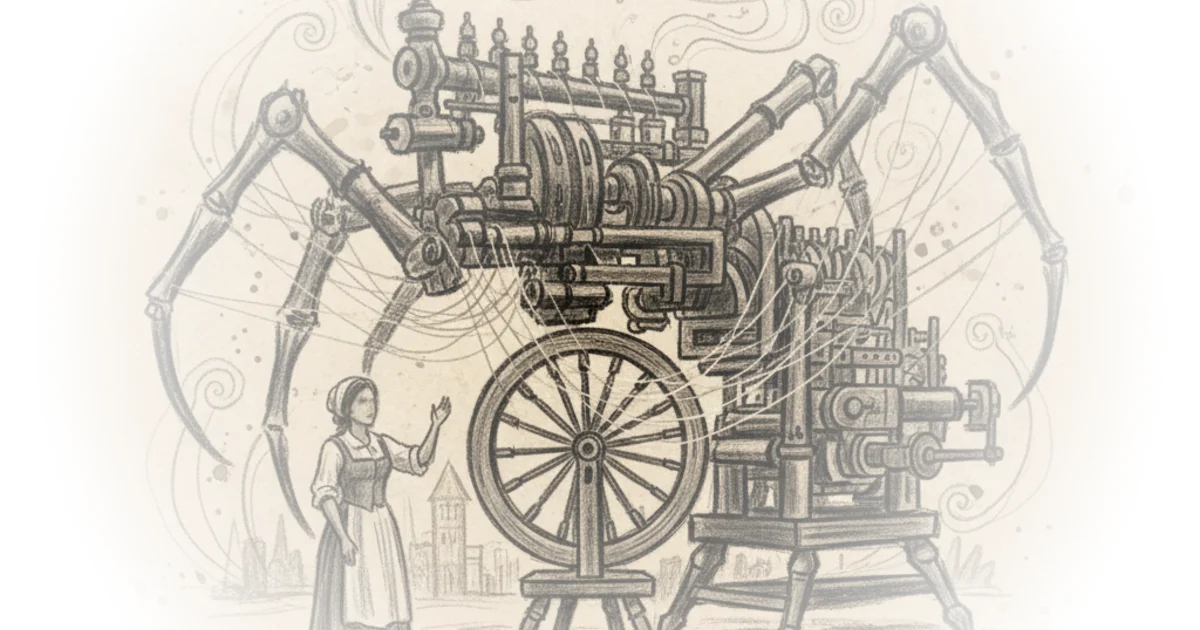

Crash Course doesn't just ask if artificial intelligence will change the world; it argues that we are already living through the first tremors of a restructuring that rivals the Industrial Revolution, yet our political imagination remains stuck in the 18th century. By anchoring a futuristic technology in the gritty reality of 1764 textile mills, the series forces a confrontation with a terrifying possibility: that the economic explosion AI promises might come at the cost of human purpose itself.

The Three Levels of Disruption

Kushian Avdar, the host, immediately dismantles the binary view of AI as either a magic wand or a harbinger of doom. Instead, the series proposes a nuanced taxonomy, starting with "narrowly transformative AI," which Avdar describes as technology that "could lead to practically irreversible change, but only in a specific area." This framing is crucial because it validates current anxieties about automation in specific sectors like customer service or data entry without requiring the immediate arrival of superintelligence. The argument gains traction by linking this to historical precedent, asking viewers to imagine that the spinning jenny has just "revolutionized the textile industry and stolen your job," a parallel that feels uncomfortably immediate today.

The commentary then escalates to "transformative AI," which Avdar equates to inventions like electricity. But the most provocative leap is the third level: "radically transformative AI." As Crash Course puts it, this theoretical stage "could impact not just our day-to-day lives, but how we measure progress, find worth, and think about our place in the world." This is the piece's intellectual core. It suggests that the true danger isn't just losing a paycheck, but losing the societal metrics we use to define human value. Critics might note that this philosophical leap assumes a uniform human experience of "worth," when in practice notions of worth vary widely across cultures. Still, the point stands: if AI does the work, what is left for us to do?

The Economic Paradox

The series tackles the most seductive promise of AI: economic abundance. Avdar points out that the Industrial Revolution solved the labor bottleneck, and AI could do the same for the modern economy. The potential scale is staggering. "If AI keeps advancing the way it has been, some people predict that by 2100, our gross world product could be growing not by 5% but by as much as 30% every year." This figure is so high it borders on the absurd, yet the series uses it to illustrate the sheer magnitude of the potential shift.
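To get a feel for why the 30% figure sounds so extreme, it helps to compare how the two quoted rates compound. The short Python sketch below is only an illustration: it assumes a constant annual growth rate, which real economies do not follow, and the function names `doubling_time` and `growth_factor` are hypothetical helpers, not anything taken from the series.

```python
import math


def doubling_time(rate: float) -> float:
    """Years for a quantity to double at a constant annual growth rate.

    Assumption: growth compounds once per year at a fixed rate.
    """
    return math.log(2) / math.log(1 + rate)


def growth_factor(rate: float, years: int) -> float:
    """Multiple of the starting value reached after `years` of compounding."""
    return (1 + rate) ** years


# Compare the two growth rates mentioned in the quote.
for rate in (0.05, 0.30):
    print(
        f"{rate:.0%} per year: doubles in about {doubling_time(rate):.1f} years, "
        f"grows {growth_factor(rate, 10):.1f}x in a decade"
    )
```

Under these simplified assumptions, an economy growing at 5% doubles roughly every 14 years, while one growing at 30% doubles in under 3 years and ends a single decade more than ten times larger than it started.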

However, the commentary quickly pivots to the "dirty, soot-blackened flip side." The logic is sound: what boosts the aggregate economy often devastates the individual worker. "The more jobs that get taken over by automation, the more human employees are pushed out of work." The series correctly identifies that while the spinning jenny increased production, it didn't distribute the wealth equally. Today, AI threatens to automate not just manual labor but cognitive tasks, from writing to editing. As Avdar warns, "If AGI were to enter the chat, even more jobs or even entire professions would be cooked." This metaphor of being "cooked" is blunt and effective, stripping away the polite euphemisms often used in economic forecasts.

Even if robots don't take over the whole workforce, these advances in AI could spell disaster for lots of regular people.

The series suggests that without intervention, we face a future where humans are restricted to "narrow positions like AI oversight, which could be few and far between." The proposed solutions—universal basic income, job guarantees, or dividend schemes—are presented not as utopian fantasies but as necessary safety nets to prevent a "beast of burden" economy where humans are obsolete. A counterargument worth considering is whether these political structures can be built fast enough to match the exponential pace of technological adoption, a gap that history suggests is often insurmountable.

The Environmental and Democratic Cost

The narrative takes a darker turn when addressing the physical and political realities of AI. The environmental toll is often ignored in favor of economic gains, yet the data is stark. "Recent data suggests that the average AI data center consumes anywhere from 500,000 to several million gallons of water every day." If Artificial General Intelligence (AGI) becomes ubiquitous, this resource drain could exacerbate climate change, creating a feedback loop in which the tool meant to solve human problems ends up making them worse.

Even more alarming is the threat to democracy. The series highlights how AI is already weaponized through deepfakes, citing a specific incident where a video of a political candidate calling herself a "deep state puppet" was viewed over 100 million times. "Deepfakes are an example of how AI can be used to influence voters, spread disinformation, and throw elections to those willing to play dirty." This isn't just about fake news; it's about the erosion of shared reality. The concept of "value lock-in" is introduced to describe a scenario where AI enforces the values of the powerful, leaving society "stuck with systems that are inequitable, anti-democratic, and a threat to human rights."

The danger of an AI arms race is real. If a single government or corporation gains a decisive advantage, "it could concentrate wealth and influence in the hands of big companies... or greedy governments who don't always have the people's best interest in mind." The series warns that this process is not a distant sci-fi trope but is "already starting," with foreign nations and domestic actors alike leveraging these tools to destabilize democratic institutions.

The Uncertain Horizon

Despite the grim possibilities, the series refuses to succumb to fatalism. Avdar acknowledges that the future is not a straight line to robot overlords. "The AI takeover could be fast and shocking, or it could never happen at all, or it could turn out the dolphins were behind everything all along." This humor serves to ground the high-stakes discussion, reminding the audience that prediction is inherently flawed. Progress could stall due to a lack of training data, or AI might simply fail to meet the hype.

However, the final message is a call to vigilance. "If we look the other way for too long, we could end up trudging sadly home in our petticoats, choking on factory fumes, wondering if this is the end of life as we know it." The series concludes by framing the next critical questions not as technical challenges, but as societal ones: "How powerful can AI become? And how quickly can it get there?" This shift from engineering to governance is the most important takeaway. The technology is moving fast, but our ability to manage it is lagging dangerously behind.

Bottom Line

Crash Course's strongest move is its refusal to treat AI as a purely technical problem, instead framing it as a continuation of the social upheavals of the Industrial Revolution with higher stakes. Its biggest vulnerability lies in the uncertainty of its timelines; predicting AGI arrival dates remains a guessing game that could either understate the urgency or create unnecessary panic. Readers should watch for how governments respond to the immediate threats of deepfakes and labor displacement, as these near-term battles will likely determine whether we navigate the transition or get crushed by it.