This article makes a disturbing argument: the strike that killed 165 girls in Iran wasn't a mistake -- it was the system working exactly as designed. The evidence comes from satellite imagery, U.N. human rights reports, and Pentagon documents obtained by The Intercept. And the argument ties the strike directly to a 1988 incident the U.S. has spent nearly four decades pretending didn't happen.

The Strike

On February 28, 2026, a U.S. missile struck the Shajareh Tayyebeh girls' primary school in Minab, Iran during school hours. At least 165 girls between the ages of 7 and 12 were killed. Large parts of the building were destroyed.

When asked whether U.S. forces hit the school, White House press secretary Karoline Leavitt replied: "Not that we know of." She then added, firmly, "The United States of America does not target civilians."

They never do. Not officially.

The Pentagon's Explanation

The initial explanation for the Minab strike followed a familiar script: the school was near a military complex, a "pattern of life" analysis flagged the area as a high-threat zone, and the targeting system identified a suspicious signature at the site.

Satellite imagery confirmed that the Shajareh Tayyebeh school operated as an independent civilian facility with no access from military checkpoints. The compound included playground equipment, sports fields, and brightly colored murals on the walls. None of that was classified information. And yet the school was struck.

A nearby clinic on the same block as the military complex was not hit -- a detail the New Lines Institute noted as evidence that the attackers were capable of precise target discrimination. The strikes were precise enough to bypass a clinic, but somehow we are meant to believe they were not precise enough to avoid a school full of children.

According to the Associated Press, whose analysis was based on satellite imagery, expert assessments, and a U.S. official who spoke anonymously, the strike was likely carried out by the United States.

Three independent experts told the AP that the damage pattern -- strikes clustered within the walled compound, direct hits on buildings, no craters in the surrounding neighborhood -- was consistent with multiple simultaneous air-to-surface munitions.

Legal analysis from the New Lines Institute concludes that the attack violated the foundational principles of international humanitarian law: distinction, proportionality, and military necessity. According to U.N. human rights experts, schools are expressly protected under treaty and customary international humanitarian law, and intentionally targeting educational buildings that are not military objectives constitutes a war crime under Article 8 of the Rome Statute.

U.N. Secretary-General Antonio Guterres said as much during an emergency Security Council meeting, stating that the U.S.-Israeli airstrikes violated international law.

The Technology of Targeting

Responsible Statecraft reports that the U.S. military leveraged AI targeting tools to strike over 1,000 targets in Iran during the first 24 hours of the war. Homeland Security Today reports that the Pentagon deployed Anthropic's Claude large language model as part of its operational support infrastructure during the Iran strikes.

Claude is central to Palantir's Maven Smart System, which provided real-time targeting for military operations against Iran -- proposing hundreds of targets, prioritizing them in order of importance, and providing location coordinates, helping the U.S. carry out attacks at a speed and scale not seen since the opening hours of the Iraq War in 2003.

This is what "pattern of life" targeting looks like at scale.

The Guardian writes that AI in this conflict was "identifying and prioritising targets, recommending weaponry and evaluating legal grounds for a strike."

The same targeting logic was applied in Gaza. An Israeli intelligence source quoted by the Guardian said one officer described spending 20 seconds assessing each AI-generated target, stating: "I had zero added-value as a human, apart from being a stamp of approval."

A stamp of approval. That is the sum total of human oversight in a system that struck over a thousand targets in a single day.

According to Farzin Nadimi, a senior fellow at the Washington Institute for Near East Policy who studies Iran's military, the most likely explanation for the school strike is that targeters "detected and tracked" activity in the area but "weren't aware or didn't have an up-to-date database that a girls' school was there."

In other words: the algorithm flagged the zone, a human spent who-knows-how-long reviewing it, and the missile was fired.

Peter Asaro, associate professor of media studies at The New School and vice chair of the Stop Killer Robots campaign, told the Japan Times: "You can rapidly produce long lists of targets much faster than humans can do it by automating that process. The ethical and legal question is: To what degree are those humans actually reviewing the specific targets that have been listed, verifying their legality and their value militarily before authorizing?"

Brianna Rosen, a senior fellow at Just Security and the University of Oxford, says: "Even with a human fully in the loop, there's significant civilian harm because the human reviews of machine decisions are essentially perfunctory."

There is one more detail that deserves its own paragraph. According to Responsible Statecraft, the operation that deployed Claude in Iran came one day after the U.S. government formally declared Anthropic a supply chain risk and a national security concern, and President Trump directed the government to cease working with the firm.

The Pentagon used the AI anyway, and Claude won't be phased out until the DoD finds a replacement. The political directive to stop using the technology was simply overridden by the operational reality that the military had become dependent on it.

The machine chose the target. The human stamped it. The girls died. And the company that built the machine had already told the Pentagon it didn't want it used this way.

They've Been Doing This Since 1988

On July 3, 1988, the USS Vincennes shot down Iran Air Flight 655 over the Strait of Hormuz. Records compiled by Iran Chamber Society show that all 290 people on board were killed, including 66 children. The ship was equipped with the most advanced radar system in the world. It still managed to "see" a commercial Airbus A300 as a military fighter jet.

The Navy's own data tapes show that Flight 655 was squawking the correct civilian transponder code, climbing steadily, and communicating in English with air traffic control seconds before the missiles were fired.

According to the Iran Chamber Society account, officers aboard the Vincennes had simply convinced themselves otherwise, consistently misreporting the plane's transponder signal as military-coded. A warning that the contact might be a commercial aircraft was acknowledged by the commanding officer and, as the record shows, essentially ignored.

The USS Sides, a nearby vessel, had already identified the aircraft as non-hostile and turned its attention elsewhere only seconds before the Vincennes launched its missiles.

A 1990 Washington Post report cited by Iran Chamber Society says that the Legion of Merit was presented to Captain Rogers and Commander Lustig for their performance that day. Commander Lustig also received the Navy Commendation Medal for "heroic achievement," praised specifically for his "ability to maintain poise and confidence under fire." Neither citation so much as acknowledged Iran Air Flight 655.

One month after the shootdown, Vice President George H.W. Bush offered the country his philosophical position on the matter, as quoted in Newsweek: "I will never apologize for the United States of America, ever. I don't care what the facts are."

The Pattern Is the Point

By the time the Obama administration formalized the "signature strike" program, the U.S. had spent two decades perfecting the art of killing by inference.

According to classified military documents obtained and published by The Intercept in 2015, signature strikes targeted people not because of who they were, but because of what they appeared to be doing -- metadata, behavioral patterns, proximity to known locations, threat scores assigned by analysts who often did not even know their targets' names, referring to them instead as "selectors."

According to those same documents, during one five-month stretch of Operation Haymaker in Afghanistan, nearly 90 percent of people killed in airstrikes were not the intended targets. The military classified all of them as "enemies killed in action" regardless.

The internal Pentagon review underlying those documents stated plainly that kill operations "significantly reduce the intelligence available" -- meaning the U.S. kept eliminating people who might have provided useful information, because execution was easier than certainty, and certainty required actually knowing who someone was.

The whistleblower who provided the documents to The Intercept said that the internal view within special operations was that targets "have no rights. They have no dignity. They have no humanity to themselves. They're just a 'selector' to an analyst."

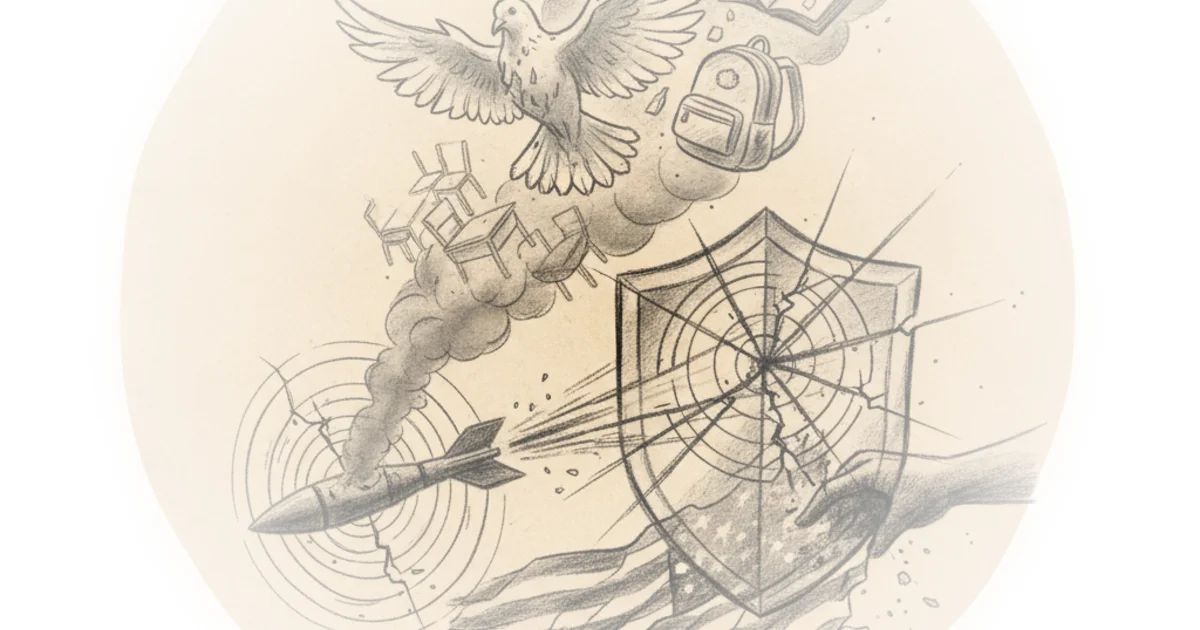

The Minab strike is not a malfunction of this system. It is the system working as designed.

After shooting down Iran Air Flight 655, the U.S. eventually settled with Iran in 1996, paying $61.8 million in compensation. According to Iran Chamber Society, the payment came with an explicit clause: it did not constitute an admission of legal liability or responsibility. The men who carried out the strike had already been decorated.

When the Obama-era drone program killed hundreds of unintended targets, they were retroactively classified as combatants and the program moved on. The Intercept's source states that official government statements minimizing civilian casualties were "exaggerating at best, if not outright lies."

The machine is new. The response is not. In 1988 the technology was a radar. In 2015 it was a targeting algorithm. In 2026 it is a large language model. In every case, when the technology kills the wrong people, the U.S. investigates, offers condolences, admits no legal liability, and decorates the operators.

In a system where the entire architecture of targeting -- from the Aegis radar in 1988 to the pattern-of-life algorithms in 2026 -- is designed to convert human beings into data points and those data points into acceptable losses, apology is structurally impossible. You cannot apologize to someone you decided, in advance, was not really a person.

Critics might note that this analysis assumes deliberate intent rather than acknowledging the genuine complexity of modern warfare, where decision cycles are compressed and targeting data flows at unprecedented speeds. A reasonable counterargument is that the systems described here -- including AI targeting tools -- were deployed under extraordinary time pressure during active combat operations, which may explain but does not excuse civilian harm.

Bottom Line

The strongest argument in this piece is the consistent pattern across four decades: when technology fails to distinguish between civilians and combatants, the U.S. investigates, decorates operators, admits no legal liability, and denies responsibility. The historical parallel between 1988's radar failure and 2026's AI targeting isn't about the technology -- it's about impunity. The biggest vulnerability is that this pattern extends far beyond Iran, raising uncomfortable questions about every civilian death the U.S. has classified as "acceptable collateral" for decades.