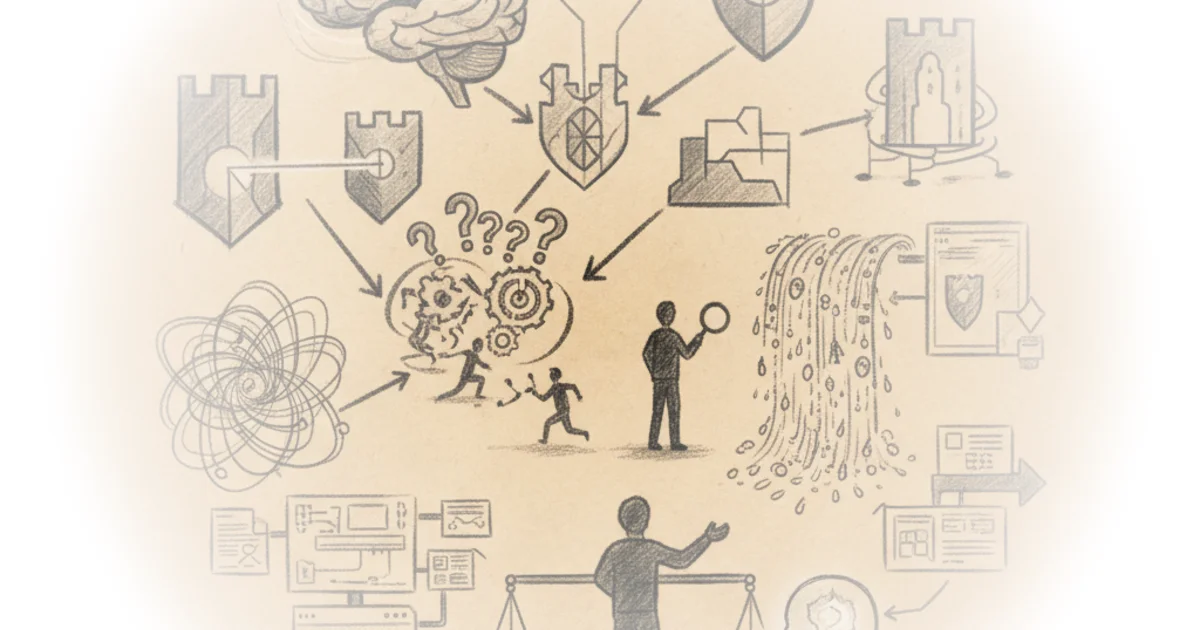

Ross Haleliuk challenges a foundational myth of the industry: that cybersecurity is primarily a technical problem solvable with better code and faster firewalls. Instead, he argues that the most critical vulnerabilities are not in the software, but in the predictable, irrational patterns of the human mind. For busy leaders managing risk, this reframing is essential because it shifts the solution from endless training to intelligent system design.

The Human Firewall Fallacy

Haleliuk begins by dismantling the popular notion that users can be trained into perfect defenders. He observes that "Most of the time when I hear security people discuss human behavior, the conversation is centered around how we need to train, educate, and test people, or even more fun, turn them into 'human firewalls'." Calling out this framing matters because it exposes the futility of relying on human perfection against attacks built to exploit human error. The author argues that the human brain is not a rational processor but a pattern-matching engine that relies on shortcuts, or heuristics, to cope with complexity.

The core of the argument rests on the idea that "An individual's construction of reality, not the objective input, may dictate their behavior in the world." Haleliuk suggests that attackers do not need to break sophisticated encryption; they simply need to trigger these cognitive shortcuts. This is a powerful insight because it moves the blame from the individual's lack of vigilance to the system's failure to account for human psychology. Critics might note that training still has a role in building baseline awareness, but Haleliuk's point stands: you cannot train away a biological instinct.

"The human brain relies on shortcuts (often called heuristics) to quickly make sense of complex information. These shortcuts allow us to navigate the uncertainty and complexity of our surroundings without having to think hard about every single action we take."

Weaponized Psychology

The piece then details how specific biases are weaponized in social engineering. Haleliuk explains that authority bias leads people to comply with requests from perceived leaders, while the halo effect causes us to let a single positive trait, like a professional tone, stand in for actual verification. He notes that "Emails 'from the CEO' or 'from the CFO', fake IT helpdesk calls, or well-designed login pages rely on these instincts to put us at ease." The solution proposed is not more awareness, but "deliberate, useful friction." By forcing a pause or an out-of-band verification, systems can break the automatic flow of compliance.

Similarly, the author tackles present bias and urgency. Attackers exploit the human tendency to prioritize immediate threats over future consequences. Haleliuk writes, "When we see signals that something is urgent, we feel forced to act fast, thinking that there's no time to think." He points out that ransomware demands and countdown timers are not just threats; they are psychological triggers designed to bypass rational thought. The editorial strength here is the practical recommendation: "introducing deliberate, useful friction on high-risk actions. This may be a 60-second hold-to-confirm prompt, or a short verification delay, anything that buys a person time for reflection."
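The "short verification delay" Haleliuk describes can be sketched in a few lines. This is only an illustration under stated assumptions, not anything taken from the article: the `FrictionGate` and `PendingAction` names are hypothetical, and the 60-second default simply mirrors the figure in the quote.

```python
import time
from dataclasses import dataclass
from typing import Callable

# Assumption: 60 seconds mirrors the "60-second hold-to-confirm" example in the quote.
HOLD_SECONDS = 60.0


@dataclass(frozen=True)
class PendingAction:
    """A high-risk action that has been requested but not yet confirmed."""
    description: str
    requested_at: float


class FrictionGate:
    """Hypothetical helper: holds high-risk actions for a fixed cooling-off period."""

    def __init__(self, hold_seconds: float = HOLD_SECONDS,
                 clock: Callable[[], float] = time.monotonic):
        self.hold_seconds = hold_seconds
        self.clock = clock  # injectable so the delay can be tested without waiting

    def request(self, description: str) -> PendingAction:
        # Record when the action was first requested.
        return PendingAction(description, self.clock())

    def seconds_remaining(self, action: PendingAction) -> float:
        # Time left before the action may be confirmed (never negative).
        elapsed = self.clock() - action.requested_at
        return max(0.0, self.hold_seconds - elapsed)

    def confirm(self, action: PendingAction) -> bool:
        # Confirmation only succeeds once the full hold period has passed.
        return self.seconds_remaining(action) == 0.0
```

A caller would create the action with `gate.request("wire transfer to new payee")`, show the user `gate.seconds_remaining(action)` as a countdown, and proceed only when `gate.confirm(action)` returns `True`. The delay does nothing technical to the attack; its purpose is to give the person time to notice that the urgency was manufactured.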

The article also highlights how the rise of artificial intelligence exacerbates these issues. Haleliuk warns that "with AI, it's getting even worse because language is no longer a barrier, and attackers can use LLMs to tailor messages to individuals more accurately than ever before." This is a sobering reminder that as technology evolves, the psychological attacks become more personalized and harder to detect. The author argues that consistency in organizational messaging is the best defense against framing and fluency biases, noting that "Security warnings should be written in plain language, and alerts like account notifications should frame issues around facts people can verify, not emotional hooks that drive urgency."

The Blind Spots of Defenders

Perhaps the most surprising section of the piece is the pivot to the defenders themselves. Haleliuk argues that security teams are just as susceptible to cognitive biases as the users they protect. He identifies "availability and recency biases" as major pitfalls, where analysts prioritize threats that are currently in the news rather than those most likely to breach the company. "This leads to skewed prioritization, where threats of the day that happen to make headlines get more attention than the issues that are much more likely to get the company breached." This is a critical observation for leadership, suggesting that a security team's focus may be driven by media cycles rather than actual risk data.
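To make the contrast between headline-driven and risk-driven prioritization concrete, here is a minimal sketch. Everything in it is an assumption for illustration: the `Threat` fields, the scoring rule (likelihood times impact), and the numbers are invented, not drawn from Haleliuk's article or from real incident data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threat:
    name: str
    annual_likelihood: float  # estimated chance of occurring this year, 0..1
    impact: float             # estimated loss if it occurs, arbitrary units
    headline_mentions: int    # how often it appeared in recent news


def expected_loss(threat: Threat) -> float:
    # Simple risk score: likelihood multiplied by impact.
    return threat.annual_likelihood * threat.impact


def rank_by_risk(threats: list[Threat]) -> list[str]:
    ordered = sorted(threats, key=expected_loss, reverse=True)
    return [t.name for t in ordered]


def rank_by_headlines(threats: list[Threat]) -> list[str]:
    ordered = sorted(threats, key=lambda t: t.headline_mentions, reverse=True)
    return [t.name for t in ordered]


# Made-up example values, chosen only to show how the two rankings can diverge.
threats = [
    Threat("novel zero-day in the news", 0.02, 500.0, 40),
    Threat("unpatched VPN appliance", 0.30, 400.0, 3),
    Threat("reused employee credentials", 0.50, 200.0, 1),
]

print(rank_by_headlines(threats))
print(rank_by_risk(threats))
```

With these invented inputs the headline ranking puts the zero-day first, while the expected-loss ranking puts it last. The scoring rule is deliberately crude; the point is only that writing the estimate down forces the availability bias into the open.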

The author also addresses the danger of overconfidence, citing the Dunning-Kruger effect and the illusion of validity. He writes, "Some fall into this trap when they see data that fits neatly into a coherent story, and others simply don't know enough to see their own limitations." This self-reflection is rare in the industry, where confidence is often mistaken for competence. Furthermore, Haleliuk warns of "automation bias," where humans trust machine outputs even when they are questionable. As systems become more automated, "Security teams should demand transparency from their vendors and make sure that machine recommendations always include an accompanying explanation that can be verified."
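One way a team might enforce the "accompanying explanation" requirement in its own tooling is to refuse to queue any machine recommendation that arrives without one. The sketch below assumes a hypothetical `Recommendation` record and hypothetical status labels; it does not describe any real vendor's API.

```python
from dataclasses import dataclass, field


@dataclass
class Recommendation:
    """Hypothetical shape of a machine-generated security recommendation."""
    action: str
    explanation: str = ""
    evidence: list[str] = field(default_factory=list)  # e.g. log lines or rule IDs


def triage(rec: Recommendation) -> str:
    # A recommendation with no stated reasoning or no checkable evidence
    # is sent back rather than trusted on the machine's say-so.
    if not rec.explanation.strip() or not rec.evidence:
        return "rejected_unexplained"
    # Even a well-explained recommendation is verified by a person before action.
    return "ready_for_analyst_verification"
```

For example, `triage(Recommendation("isolate host-17"))` yields `"rejected_unexplained"`, while the same action submitted with an explanation and at least one evidence item moves on to analyst verification. Neither path applies the action automatically, which is the design choice that counters automation bias.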

"In practice, the best we can do is to maintain awareness that we are all subject to biases that can distort our view of the world and impact our decisions."

Bottom Line

Haleliuk's strongest contribution is the shift from blaming users to redesigning systems that account for human fallibility. The argument is compelling because it offers concrete, actionable strategies like friction and secure defaults rather than vague calls for better training. However, the piece's biggest vulnerability is the assumption that organizations will willingly introduce friction into their workflows, a move that often conflicts with the demand for speed and convenience. Leaders should watch how their own security teams prioritize threats and ensure they are not just chasing headlines while the real risks go unaddressed.