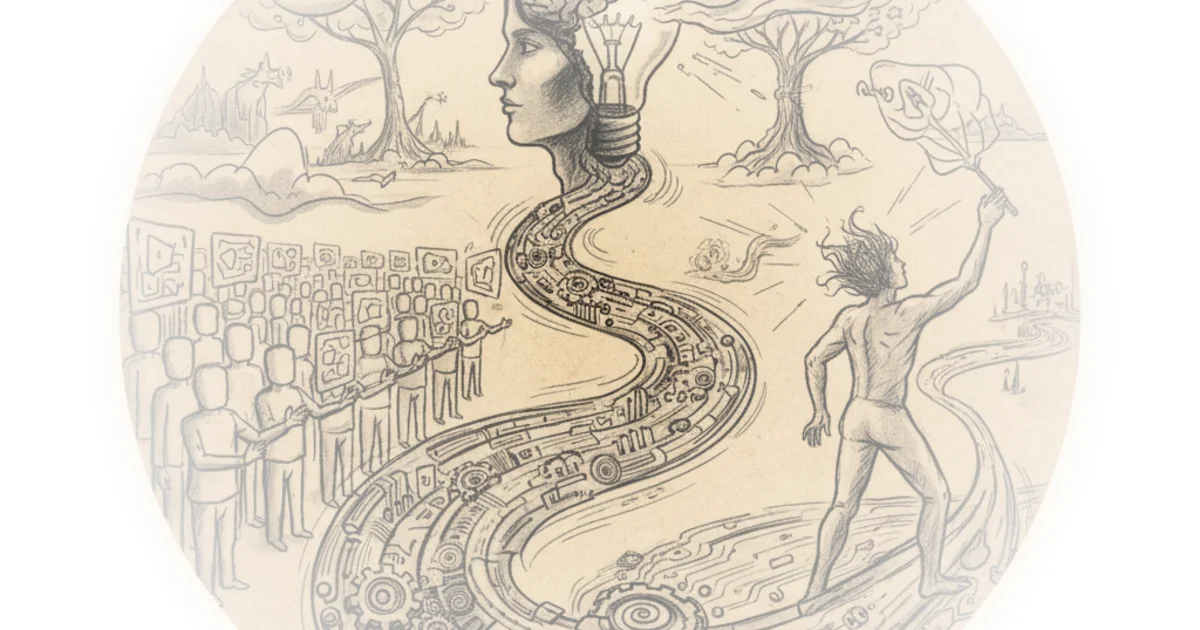

Johnny Chang's latest dispatch cuts through the hype cycle to ask a question that keeps educators awake at night: does the ease of generative AI actually erode the very creative muscles students need to strengthen? While the industry celebrates speed, Chang forces a reckoning with the potential cost to human connection and cognitive depth, offering a rare, balanced look at the trade-offs between efficiency and genuine learning.

The Illusion of Speed

Chang opens by dismantling the romanticized view of the creative process, noting that "The days of endlessly scrolling through stock photos are over!" This is an undeniable shift in workflow, yet the author quickly pivots to the central tension. "While AI makes creativity more accessible, could it also limit original thinking by offering too many ready-made solutions?" This framing is crucial because it refuses to treat the technology as purely benevolent. The argument suggests that when the barrier to entry for visual storytelling vanishes, the barrier to deep, original thought might inadvertently rise. Critics might note that accessibility is often a prerequisite for creativity, and that tools have always lowered barriers before new forms of expression emerged. However, Chang's concern about "metacognitive laziness"—a specific term drawn from recent academic research—gives the argument teeth.

"Students relying on ChatGPT showed signs of metacognitive laziness, meaning they engaged less in self-regulated learning."

The piece leans heavily on a study from Peking University and Monash University to support this. The research found that while AI-assisted students produced higher essay scores, they failed to show improvements in intrinsic motivation or knowledge transfer. Chang paraphrases this finding effectively, highlighting that the tool "should supplement, not replace, human guidance." This is a vital distinction for busy leaders to grasp: the metric of success isn't just the final grade, but the mental process used to get there. If the process is outsourced, the learning is hollowed out.

The Erosion of Trust and Memory

Beyond the classroom, Chang explores how this technology is reshaping the social fabric of Gen Z. Citing an interview with 18-year-old Amy Wong, the author writes, "One of the most compelling points she makes is the urgent need for media literacy education from a young age." The stakes here are high; the article notes that teens' mistrust of what they encounter online "isn't confined to the internet, but also affects how teens perceive authority and information in their daily lives." This is a profound observation. When students cannot distinguish between human and machine output, the foundation of shared reality begins to crack.

Chang also touches on the cognitive implications, referencing a Nature study that questions whether "brain rot" is a real phenomenon or a misdiagnosis of how we adapt. The author writes, "Researchers argue that the impact is more nuanced, with limited evidence of broader memory decline." This nuance is refreshing. It suggests that the problem isn't the tool itself, but how it is wielded. The danger lies in "cognitive offloading," where the brain stops exercising its memory muscles because the device is always there to recall facts. As Chang puts it, experts emphasize "the importance of balancing technology use with active learning and critical thinking to preserve and enhance cognitive abilities."

The Human Cost in the Creative Economy

Perhaps the most poignant section of the piece is the interview with Sean Amaso, a graduate student in professional writing. Amaso offers a sobering perspective on the future of the creative industries, warning that "AI can limit that sense of humanity and human connection with other people." He argues that when companies replace human creators with algorithms to cut costs, they strip away what creators invest in their art. "We put our souls and our entire beings into what we create so that we can connect with others," Amaso states, a line that resonates deeply in an era of automated content.

Chang uses this interview to ground the abstract policy debates in real-world anxiety. Amaso's advice to the public is to "Approach it with an open and cautious mind," avoiding both demonization and blind acceptance. This middle path is the article's guiding philosophy. It acknowledges that while "the creative field is quite competitive, and AI is going to shake up the industry," the solution isn't to ban the technology but to understand its limits.

"Don't be fully assumed with it, because you'll lack the awareness of how it's affecting people."

The piece also highlights institutional responses, such as the California State University system integrating ChatGPT Edu to build workforce skills, and Palm Beach County schools using Khanmigo to tutor students. These examples show that public university systems and local school districts are moving toward integration rather than prohibition. However, a study from Deakin University cited by Chang reveals the friction this causes: "Both students and teachers struggle to determine clear boundaries for AI use in academic assessments." The phrase "drawing the line" is described as "absurd" by the participants, suggesting that current policies are failing to provide the clarity needed for ethical implementation.

Bottom Line

Chang's strongest contribution is the synthesis of student voices with research on cognitive performance, which suggests that the efficiency of AI comes with a hidden tax on deep learning and human connection. The piece's biggest vulnerability is its reliance on early-stage studies of long-term cognitive effects, which may not yet reflect how future generations adapt their learning strategies. Readers should watch how institutions like the California State University system balance workforce preparation with the preservation of critical thinking skills in the coming years.
