Ethan Mollick challenges a foundational assumption of corporate AI strategy: that you must first fix your messy processes before deploying artificial intelligence. Instead, he argues that the very chaos organizations fear might be irrelevant if AI can be trained solely on desired outcomes, rendering decades of operational refinement potentially obsolete.

The Illusion of Control

Mollick opens by invoking a classic study by Ruthanne Huising, in which teams mapping their own company's workflows discovered a startling reality. The mapping exercise revealed "entire processes that produced outputs nobody used, weird semi-official pathways to getting things done, and repeated duplication of efforts." The emotional core of this discovery is captured when a manager shows the map to the CEO, who "sat down, put his head on the table, and said, 'This is even more fucked up than I imagined.'" The executive realized his grasp on the organization was "imaginary."

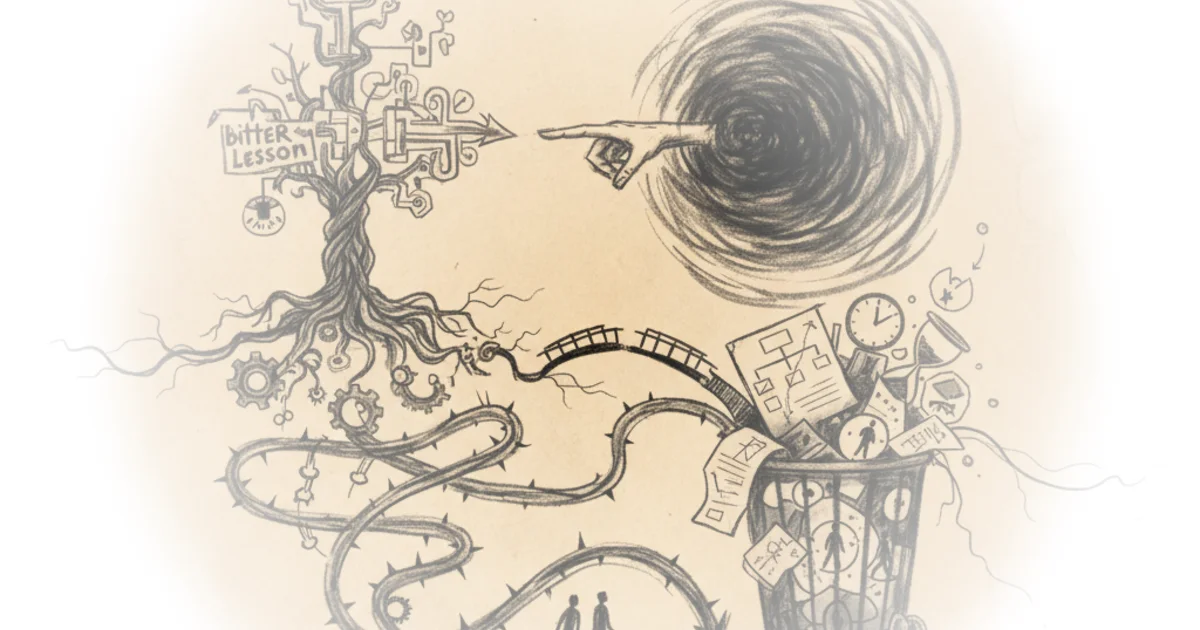

This anecdote sets the stage for the "Garbage Can Model" of organizational theory, which views companies not as rational machines but as chaotic bins where problems and solutions collide randomly. Mollick notes that this messiness is precisely why scaling AI is so difficult. Traditional automation requires clear rules, and he observes that "even though 43% of American workers have used AI at work, they are mostly doing it in informal ways, solving their own work problems." The prevailing wisdom suggests companies must spend months untangling these knots before they can automate. Mollick finds this approach intuitive but potentially wrong.

"The effort companies spent refining processes, building institutional knowledge, and creating competitive moats through operational excellence might matter less than they think."

The Bitter Lesson

The pivot in Mollick's argument comes from computer scientist Richard Sutton's "Bitter Lesson," a concept suggesting that human attempts to encode expertise into AI are often less effective than simply throwing more computing power at the problem. Mollick illustrates this with chess: early attempts to beat humans involved hard-coding centuries of strategy, but "Deep Blue... used some chess knowledge, but combined that with the brute force of being able to search 200 million positions a second." Later, AlphaZero mastered chess, shogi, and Go with "no prior knowledge of these games at all," learning purely by playing against itself.

Mollick argues that this pattern is about to collide with the workplace. He contrasts two types of AI agents: those built with "carefully crafted" rules and those trained via reinforcement learning on outcomes. He tested both by asking them to create a graph comparing chess ratings. The hand-crafted agent, Manus, followed a rigid, human-designed to-do list. The outcome-trained agent, ChatGPT agent, "charted whatever mysterious course was required to get me the best output it could." The result? The outcome-trained agent produced a working Excel file and found more credible sources, while the hand-crafted version failed.

The implication is stark. If the Bitter Lesson holds, the path to better AI isn't better engineering of the agent's internal logic, but simply "more computer chips and more examples." Mollick writes, "Decades of researchers' careful work encoding human expertise was ultimately less effective than just throwing more computation at the problem." This suggests that the "bespoke knowledge" companies hoard as a competitive advantage may soon be worthless.

Critics might note that this view assumes all organizational tasks are as solvable as chess. Unlike a game with clear rules and a definitive win state, business problems often involve ambiguity, ethical nuance, and human relationships that brute-force computation cannot easily navigate. There is also a second risk: without understanding the "why" behind a process, an AI might optimize for the wrong metric or create dangerous shortcuts.

Navigating the Chaos

Mollick concludes by flipping the script on the despairing CEO. If the Bitter Lesson applies to work, the executive doesn't need to fix the broken process; they just need to define the output. "Instead of untangling every broken process, he just needs to define success and let AI navigate the mess." In this future, the undocumented workflows and informal networks that plague organizations become invisible to the AI, which simply learns to produce the desired result regardless of the path taken.
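To make the contrast concrete, here is a toy sketch in Python. It is not Mollick's experiment and not how any real agent is trained. The workflow graph, the step names, and the `success` function are all hypothetical, and an exhaustive search over routes stands in for reinforcement learning. The only point it illustrates is that an agent scored on the outcome never needs the documented procedure to be correct.

```python
# Hypothetical, deliberately messy workflow: each step lists where it can hand off.
WORKFLOW = {
    "request": ["form_a", "ask_dana"],
    "form_a": ["committee"],
    "committee": ["archive"],        # official route ends in a report nobody uses
    "ask_dana": ["shared_sheet"],    # semi-official route that actually works
    "shared_sheet": ["delivered"],
    "archive": [],
    "delivered": [],
}

# The procedure as written in the (assumed) process manual.
DOCUMENTED_PATH = ["request", "form_a", "committee", "archive"]


def success(path):
    """Outcome measure: 100 points if the work is delivered, minus 1 per step."""
    return (100 if path[-1] == "delivered" else 0) - len(path)


def all_paths(graph, start):
    """Yield every route from start to a terminal step (graph assumed acyclic)."""
    stack = [[start]]
    while stack:
        path = stack.pop()
        next_steps = graph[path[-1]]
        if not next_steps:
            yield path
        for step in next_steps:
            stack.append(path + [step])


def outcome_driven(graph, start, score):
    """Try every route and keep the one with the best outcome score."""
    return max(all_paths(graph, start), key=score)


best_path = outcome_driven(WORKFLOW, "request", success)
print("documented:", success(DOCUMENTED_PATH), DOCUMENTED_PATH)
print("outcome-driven:", success(best_path), best_path)
```

In this sketch the rule-following route dutifully reaches the archive, while the outcome-driven search lands on the informal route through `ask_dana`. It also shows the critics' worry in miniature: the search is only as good as `success`, so a poorly chosen measure would be optimized just as faithfully.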

"In a world where the Bitter Lesson holds, the despair of the CEO with his head on the table is misplaced."

This reframing suggests a radical shift in management strategy. Companies that spend years mapping processes might be outpaced by competitors who simply define quality and feed data to powerful models. The competitive moat shifts from "how well we know our own operations" to "how clearly we can define success and how much data we have."

Bottom Line

Mollick's most compelling insight is that the obsession with process optimization may be a distraction in the age of outcome-trained AI, potentially rendering traditional operational excellence obsolete. However, the argument's greatest vulnerability lies in assuming that complex, human-centric organizational problems can be solved as easily as a chess game, ignoring the risks of opaque decision-making in high-stakes environments. Readers should watch for early evidence of whether outcome-trained agents can truly navigate the ethical and logistical gray areas of the real world without human oversight.