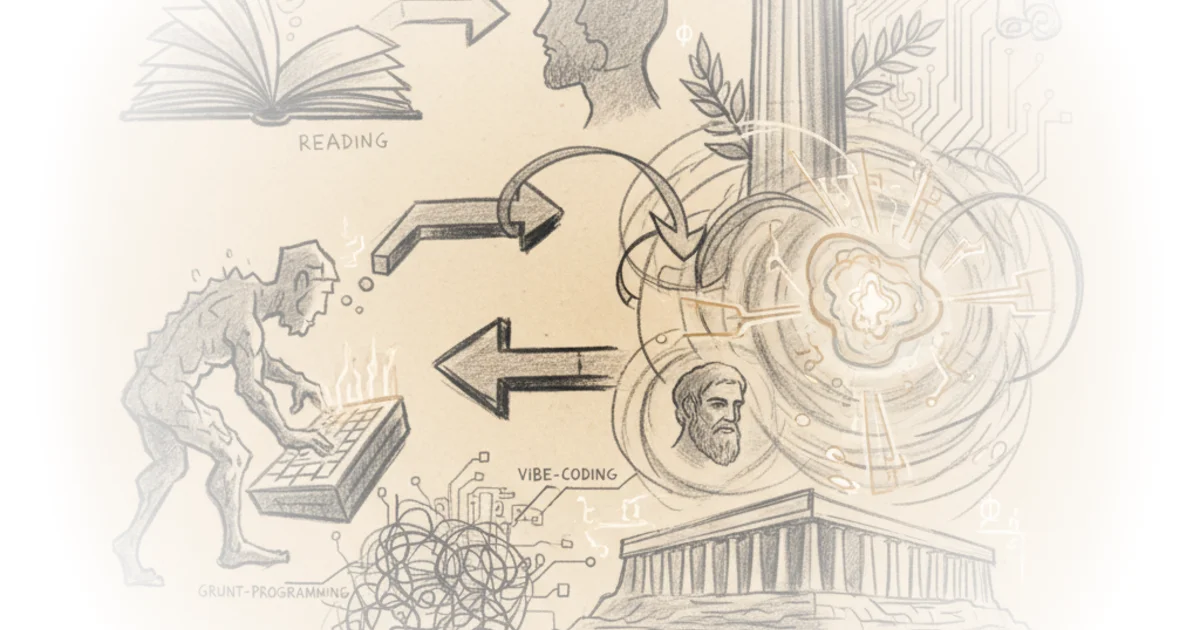

Brad DeLong does something rare in the age of artificial intelligence: he refuses to treat the technology as a novel disruptor and instead frames it as the latest iteration of a 2,400-year-old anxiety about how we store and process knowledge. By anchoring the modern panic over "vibe-coding" and AI-assisted programming in Plato's Phaedrus, DeLong argues that the danger isn't that machines will replace us, but that we will outsource our own cognitive sovereignty to them. This is not a Luddite screed; it is a sophisticated plea for maintaining the "living, breathing discourse" of human understanding against the seduction of external, silent tools.

The Ancient Warning Revisited

DeLong's central move is to treat the current debate over Modern Advanced Machine-Learning (MAML) not as a fresh crisis, but as a footnote to the writings of Aristokles son of Ariston, known as Plato, from the years 400 to 345 BCE. He posits that the "us" in the current conversation is actually the collective human mind, which he terms the "Anthology Super-Intelligence," and that we are risking our role as its calculation nodes. To illustrate this, he turns to a modern case study of a software engineer named Josh Anderson, who found himself helpless after relying exclusively on AI to build a product for three months.

"I forced myself to use Claude Code exclusively to build a product. Three months…. It worked. I was proud…. Then… I needed to make a small change and realized I wasn't confident I could do it…. Twenty-five years of software engineering experience, and I'd managed to degrade my skills to the point where I felt helpless looking at code I'd directed an AI to write."

DeLong uses this anecdote to bridge the gap between a specific tech failure and a philosophical principle. The engineer's realization that he had become a "passenger" rather than a creator mirrors the ancient warning of King Thamus to the god Theuth in Plato's dialogue. DeLong quotes Thamus's verdict on the invention of writing: "You have not discovered a potion for remembering, but for reminding; you provide your students with the appearance of wisdom, not with its reality." This framing is powerful because it shifts the conversation from "will AI take my job?" to "will AI take my mind?"

However, DeLong is careful not to accept Plato's critique wholesale. He acknowledges the irony that we only know Plato's argument against writing because Plato wrote it down. He notes that while Plato preferred the "living discourse" of dialectic, almost everyone who engages in deep reading disagrees with the idea that the written word is inherently inferior. The text serves as a stable reference point, a "reminder" that allows us to return to complex ideas repeatedly, something oral tradition struggles to do.

"The offsprings of painting stand there as if they are alive, but if anyone asks them anything, they remain most solemnly silent. The same is true of written words. You'd think they were speaking as if they had some understanding, but if you question anything that has been said because you want to learn more, it continues to signify just that very same thing forever."

This observation about the silence of the written word is particularly poignant when applied to AI code. Unlike a human colleague who can explain why a function was written a certain way, the AI-generated code is a static artifact that "roams about everywhere, reaching indiscriminately those with understanding no less than those who have no business with it." DeLong suggests that without the ability to interrogate the source of the logic, we are left with a product that we do not truly own.

The Farmer and the Garden of Adonis

The most striking metaphor DeLong deploys comes from the latter half of the Phaedrus, where Socrates compares the serious pursuit of knowledge to farming. He contrasts the sensible farmer, who plants seeds in the right season for a harvest months later, with the frivolous gardener who plants in the "gardens of Adonis" just to watch them sprout and wither in a week. DeLong uses this to distinguish between using AI as a tool for genuine understanding versus using it for the "amusement" of rapid, shallow output.

"Therefore, he won't be serious about writing them in ink, sowing them, through a pen, with words that are as incapable of speaking in their own defense as they are of teaching the truth adequately."

DeLong argues that "vibe-coding" is the modern equivalent of the "gardens of Adonis," where the goal is immediate gratification and a quick prototype rather than a robust, defensible system. The reference to the Adonia, a festival in ancient Athens in which women planted fast-growing seeds in shallow pots to mourn the death of Adonis, adds a layer of cultural depth that underscores the transience of such efforts. DeLong quotes Socrates, "Such a man, Phaidrus, would be just what you and I both would pray to become," referring to the person who plants seeds in the soul of the listener rather than in the shallow soil of a digital prompt.

Critics might argue that this distinction is too binary. In a fast-moving technological landscape, the ability to prototype quickly and iterate—even if it means some code is "shallow"—is often a competitive necessity. The "gardens of Adonis" might be the only way to survive in a market that rewards speed over depth. Yet, DeLong's point remains that without the underlying knowledge to defend the work, the creator is ultimately vulnerable.

"It is a discourse that is engraved down, with knowledge, in the soul of the listener; it can defend itself, and it knows for whom it should speak and for whom it should remain silent."

This ideal of the "living discourse" is the ultimate counter to the "grunt-programming" of AI. It suggests that true expertise is not just about producing output, but about internalizing the logic to the point where it becomes part of one's own cognitive architecture. DeLong implies that the administration of code, like the administration of laws, requires a deep, internalized understanding of justice and function, not just a superficial ability to generate text.

The Verdict on Ownership

DeLong concludes by returning to the question of ownership, citing the warning that "100% [AI] looks like… failure" if the human operator cannot explain or modify the result. The core of his argument is that the gap between who we are and who we pretend AI makes us is dangerous. He suggests that the only way to close this gap is to use AI as a "training partner" rather than a replacement, ensuring that the "Anthology Super-Intelligence" remains a collective of human minds rather than a collection of black-box outputs.

"'Who owns that product, Josh? You or Claude Code?' The answer was Claude Code. I'd abdicated ownership while telling myself I was being innovative…. [There is] a gap between who you are and who you've been pretending AI makes you."

This is a stark warning for any professional relying on generative tools. The argument holds up because it addresses the psychological reality of skill atrophy, not just the technical limitations of the software. DeLong's synthesis of ancient philosophy and modern engineering creates a unique lens through which to view the AI revolution: it is not a battle of man versus machine, but a test of whether we can remain the masters of our own intellectual gardens.

Bottom Line

Brad DeLong's piece succeeds by reframing the AI anxiety as a timeless struggle for cognitive autonomy, using Plato's Phaedrus to expose the hollowness of "vibe-coding" without genuine understanding. While the argument risks underestimating the utility of rapid prototyping in a fast-paced economy, its core insight—that true ownership of a product requires the ability to defend and modify it—is a vital check against the seduction of convenience. The reader is left with a clear imperative: use these tools to deepen your knowledge, not to bypass the hard work of thinking.