Andreas Matthias dismantles the most pervasive myth of our technological moment: that Artificial Intelligence is an alien force invading our world. Instead, he argues that our fear of AI is merely a mirror reflecting our own anxieties, biases, and predictable patterns of speech. For busy leaders navigating the rapid integration of large language models into their workflows, this piece offers a crucial pivot from sci-fi panic to human accountability.

The Projection of Fear

Matthias begins by exposing the structural emptiness of our current AI panic. He points out that the apocalyptic narratives surrounding machine intelligence are not unique to the technology itself. "Interestingly, every one of these sentences could, just by changing one word, be made to address other common fears in our societies," he writes. Whether the subject is China, immigrants, or AI, the script remains identical: they will take our jobs, they are unreliable, and their values do not align with ours.

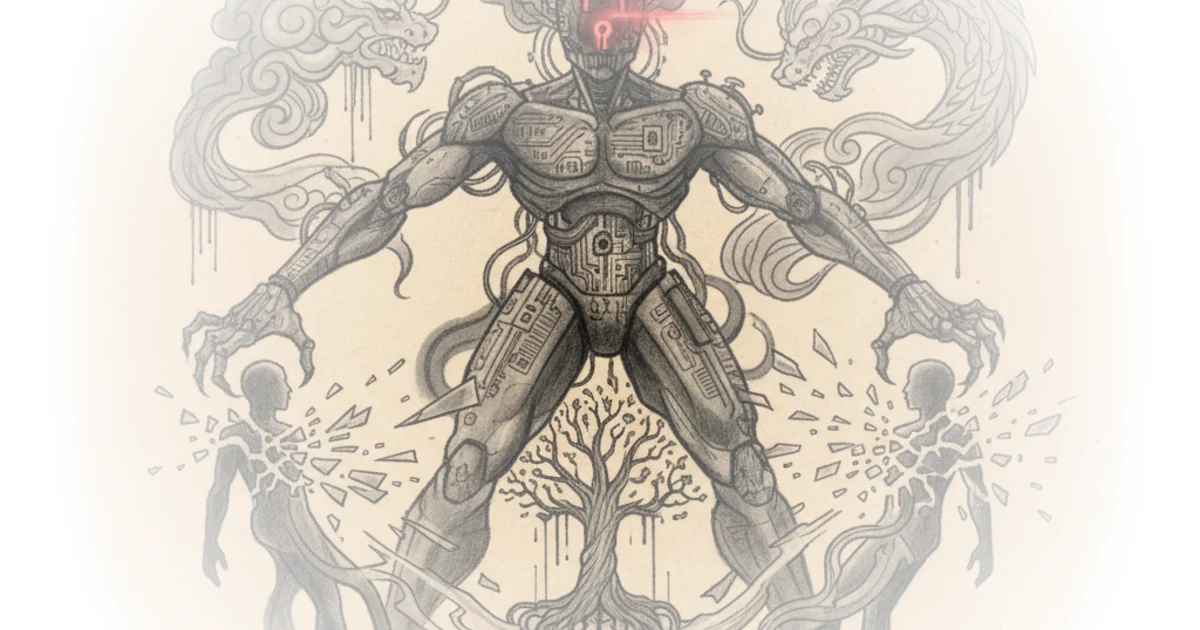

This reframing is potent because it shifts the locus of the problem from the external threat to our internal psychology. Matthias suggests that we are not reacting to the specific capabilities of algorithms, but rather projecting our deep-seated fears onto any "suitable and available other." He challenges the reader to consider whether the machine is truly the monster, or whether we are simply using it as a vessel for our own insecurities about obsolescence. This is a necessary corrective to the breathless coverage that treats AI as a singular, autonomous agent of doom.

The Paradox of Intimacy

The article then moves to a striking observation about human behavior: we are simultaneously terrified of AI and deeply dependent on it. Matthias notes that while we fear these systems, we also use them to write legal letters, craft dating messages, and seek career advice. He highlights a survey finding that "more than half of survey participants (52%) would prefer to meet someone in real life than to create a perfect partner in a virtual world," yet implies that the very act of using AI for romance blurs the line between the two.

This contradiction reveals that we do not treat AI as a cold, inanimate tool. "We relate to AI as we relate to other human beings," Matthias asserts. He draws a fascinating parallel to myth and legend to explain this dynamic. Just as the mythical sculptor Pygmalion fell in love with his statue, and the 16th-century Rabbi Loew is said to have animated the Golem to protect his community, humanity has a long history of imbuing artifacts with life and agency. The fear we feel today is not new; it is the echo of the "primal fear of every living being, destined to die and be overcome by one's offspring."

Fearing AI means fearing ourselves. And that may be a sensible response, all things considered.

Critics might argue that this anthropomorphism is dangerous, as it obscures the very real risks of algorithmic bias and the lack of genuine understanding in these systems. However, Matthias's point is that the fear itself is the human element, not the machine's intent.

The Statistical Mirror

The core of Matthias's argument rests on a technical reality that is often glossed over in popular discourse: large language models are not thinking entities. They are, at their heart, "nothing but a statistical mirror of human language use." He explains that these models work because human speech is highly predictable, a regularity linguists have long described with Zipf's Law. This law states that the frequency of a word is roughly inversely proportional to its rank in a frequency-ordered list: the most common word appears about twice as often as the second most common, three times as often as the third, and so on.
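To make the rank-frequency relationship concrete, here is a minimal sketch of what an idealized Zipf distribution predicts. The function name `zipf_expected_counts`, the starting count of 6000, and the exponent of exactly 1 are illustrative assumptions, not figures from Matthias's essay; real corpora follow the law only approximately.

```python
def zipf_expected_counts(top_count: float, max_rank: int, exponent: float = 1.0) -> list[float]:
    """Expected count for each rank under an idealized Zipf distribution.

    Assumes frequency is proportional to 1 / rank**exponent, anchored so that
    the rank-1 word occurs `top_count` times. All values are hypothetical.
    """
    return [top_count / (rank ** exponent) for rank in range(1, max_rank + 1)]


# Hypothetical corpus in which the most common word occurs 6000 times.
counts = zipf_expected_counts(top_count=6000, max_rank=4)
print(counts)  # [6000.0, 3000.0, 2000.0, 1500.0]
```

Under these assumptions the second-ranked word appears half as often as the first, the third a third as often, and the fourth a quarter as often.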

Matthias uses this to dismantle the illusion of AI originality. "If every one of us had a personal, unique point of view, then AI training would be impossible," he writes. The fact that AI can predict the next word with such accuracy proves that we, the users, are far more predictable than we like to believe. Our expertise, our jokes, and our arguments are all just variations on a statistical theme. When an AI generates a response, it is not accessing a hidden consciousness; it is calculating the most probable sequence of words based on the collective output of humanity.
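The idea of "calculating the most probable sequence of words" can be illustrated with a toy bigram counter. This is a deliberately simplified sketch, not how production language models work: real systems use neural networks over sub-word tokens and far longer contexts. The function names and the tiny corpus are invented for illustration only.

```python
from collections import Counter, defaultdict


def train_bigram_model(text: str) -> dict:
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    model = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        model[current][following] += 1
    return model


def most_probable_next(model: dict, word: str):
    """Return the word that most often followed `word`, or None if unseen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical training text echoing the "script" of fear quoted earlier.
corpus = "they will take our jobs they will take our place they will replace us"
model = train_bigram_model(corpus)

print(most_probable_next(model, "will"))   # take
print(most_probable_next(model, "robot"))  # None
```

The model has no opinion of its own: it can only return "take" after "will" because that pairing dominated the text it was given, which is the mirror effect in miniature.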

This leads to a profound conclusion about bias. If an AI system is racist, it is not because the machine has developed a hatred for a specific group. "When they are racist, they are racist in exactly the ways that we humans are," Matthias states. The system is simply echoing the biases present in the billions of words it was trained on. It is a reflection of the "collective memory of our species," not an independent moral agent. This distinction is vital for policymakers and executives who might otherwise blame the technology for societal failures that are, in fact, human in origin.

The Mirror of Wisdom and Folly

In the final analysis, Matthias reframes AI not as a threat, but as a tool for self-reflection. He suggests that these systems are "optimised phronesis machines," capable of dispensing practical wisdom based on a vast corpus of human experience. When we ask an AI for advice, we are not consulting a robot oracle; we are querying the distilled wisdom of our own culture.

"When we talk to AI, we are talking to a distilled, statistically optimal version of ourselves," he concludes. The machine knows what we want, what we fear, and what bores us, because it has learned these patterns from us. The danger lies not in the machine becoming hostile, but in us failing to recognize that the hostility, the bias, and the wisdom we see in the output are our own. By viewing AI as a mirror, we are forced to take responsibility for the data we feed it and the values we encode within it.

Bottom Line

Matthias's strongest contribution is the radical simplification of AI's nature: it is a mirror, not a monster. This perspective effectively neutralizes the fear of an alien intelligence while simultaneously raising the stakes for human responsibility. The argument's vulnerability lies in its potential to understate the unique dangers of autonomous systems that can act faster and at greater scale than any human, regardless of their statistical origins. Readers should watch for how this "mirror" framing influences future regulations: if AI is just us, then regulating it becomes a matter of regulating our own collective output.