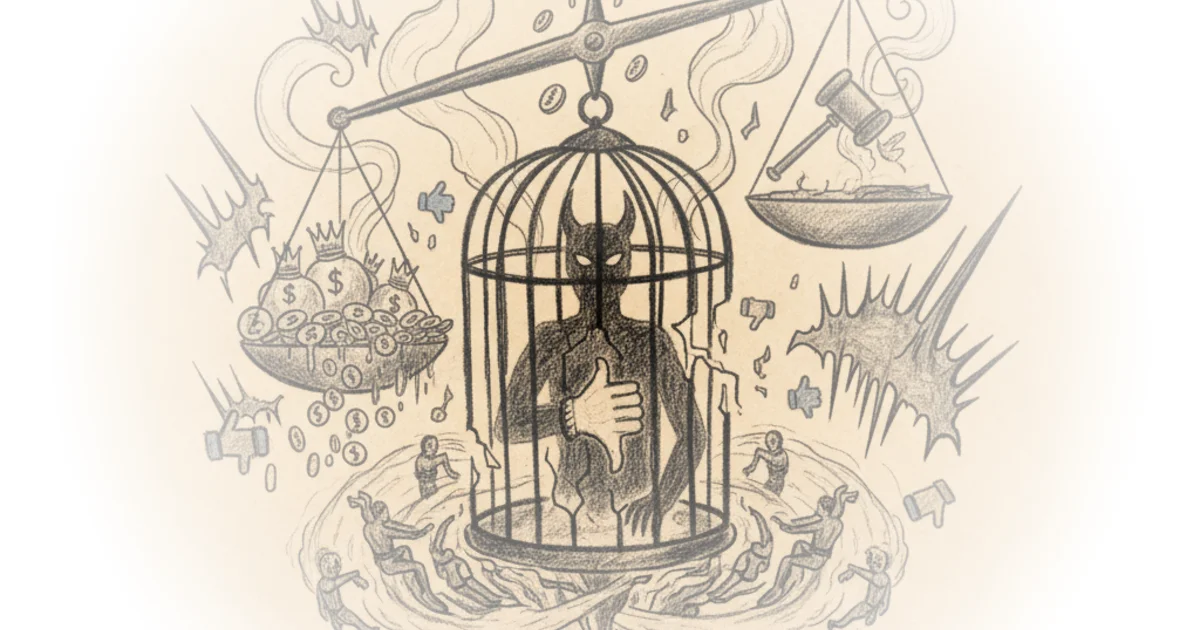

This piece delivers a scathing indictment of the disparity between how the law treats ordinary citizens and how it treats tech oligarchs, centering on a staggering allegation: that Meta systematically pirated over 160 terabytes of copyrighted material to train its artificial intelligence models. The Hated One does not merely critique corporate policy but constructs a legal and moral case that the executive branch's selective enforcement of copyright law amounts to a two-tiered justice system where wealth buys immunity. For a reader navigating the complexities of AI development and intellectual property, this argument reframes the current legal battles not as technical disputes, but as a fundamental failure of accountability.

The Scale of Alleged Theft

The Hated One anchors the argument in specific court documents and internal communications, moving beyond speculation to detail a coordinated effort. "Mark Zuckerberg has crossed the line and this is a clear case study of how we don't have a universal justice system," they write, setting a tone of urgent moral indictment. The author details how Meta allegedly utilized shadow libraries like Anna's Archive and Libgen, downloading massive datasets while actively taking precautions to hide their tracks. Internal messages are cited where employees express discomfort, with one noting, "Torrenting from a corporate laptop doesn't feel right," and another stating, "I feel that using piracy material should be beyond our ethical threshold."

This evidence suggests a conscious decision-making process rather than a technical oversight. The author argues that the defense of "fair use" for AI training collapses when the method of acquisition involves torrenting, because BitTorrent clients by default upload (seed) the data they download to other peers. "One of Meta's executives had a Freudian slip about this in a testimony where he said Meta modified the config setting so that the smallest amount of seeding possible could occur," The Hated One observes, highlighting the contradiction between the company's public stance and its internal technical adjustments. The argument here is compelling because it exposes the mechanics of the alleged crime: by the author's account, the company knew the legal risks, admitted the ethical breach internally, and engineered a workaround to avoid detection.

Critics might note that the legal definition of "fair use" in the context of AI training is currently being litigated in federal courts, and a final judicial ruling has not yet determined whether the use of these datasets constitutes infringement or transformative work. However, the author's focus is less on the final legal verdict and more on the intent and method of acquisition.

If we held Zuck to the same standards that we are held to, he'd be jailed for life.

The Aaron Swartz Parallel

The piece pivots to a historical comparison that serves as its emotional and logical core: the prosecution of Aaron Swartz. The Hated One contrasts the fate of the young programmer, who downloaded 80 gigabytes of academic papers over a network he had legal access to, with the alleged actions of the Meta CEO. "Aaron didn't hack anything. He had free access to MIT's campus network which gave everyone in the campus free access to JSTOR," the author explains, emphasizing that Swartz faced up to 50 years in prison and a million-dollar fine over a dispute that JSTOR, the supposed victim, already considered settled. In contrast, the author argues, the tech executive faces no such consequence despite allegedly taking about 2,000 times more data (160 terabytes against 80 gigabytes) from illegal sources.

This comparison is used to illustrate the author's central thesis on the "two-tiered justice system." The narrative details how the government's aggressive prosecution of Swartz led to his suicide, framing it as a direct result of the state's refusal to accept a settlement that the victim was willing to honor. "The 2 years of Nixonian level of prosecution eventually led to him taking his own life," The Hated One writes, a statement that underscores the human cost of the legal disparity. The argument suggests that the administration's approach to Swartz was driven by a desire to make an example of a powerless individual, while the same legal tools are withheld from the powerful.

Institutional Collusion and Global Harm

Beyond copyright, the commentary expands to a broader critique of Meta's historical record regarding human rights and data privacy. The author recites a litany of grievances, from the Cambridge Analytica scandal to the platform's role in the ethnic cleansing of the Rohingya in Myanmar. "Facebook really does seem to have a genocide problem," The Hated One asserts, pointing out that the company was warned repeatedly but chose to prioritize engagement and profit over safety. The argument posits that the lack of legal consequences for these actions is not an accident but a feature of the current political economy.

The author connects these past failures to the current political climate, suggesting that the alignment between the tech giant and the administration ensures continued impunity. "These fines that barely make a percentage of his company's quarterly profits are just a cost of doing business. That's not punishment. That's corporate government collusion," they argue. This framing shifts the blame from individual corporate actors to the systemic relationship between the executive branch and big tech. The piece concludes with a call to action, urging readers to abandon these platforms for decentralized alternatives like Mastodon and Signal, arguing that the metadata these companies collect is a threat to civil liberties.

It's more useful to, like, make things happen and then, like, apologize later than it is to make sure that you dot all your i's now and then, like, just not get stuff done.

Bottom Line

The strongest element of this commentary is its relentless juxtaposition of the Swartz case against the alleged actions of Meta, effectively using a tragic historical precedent to illuminate current injustices. The author's ability to weave internal corporate communications with high-level policy critique creates a narrative that is both specific and systemic. However, the piece's vulnerability lies in its conflation of ongoing civil litigation with criminal liability; while the moral argument is potent, the legal claim that the CEO should face life imprisonment remains a rhetorical device rather than a prosecutable reality under current statutes. Readers should watch for how federal courts eventually rule on the "fair use" defense in AI training, as that decision will either validate or dismantle the author's central premise.