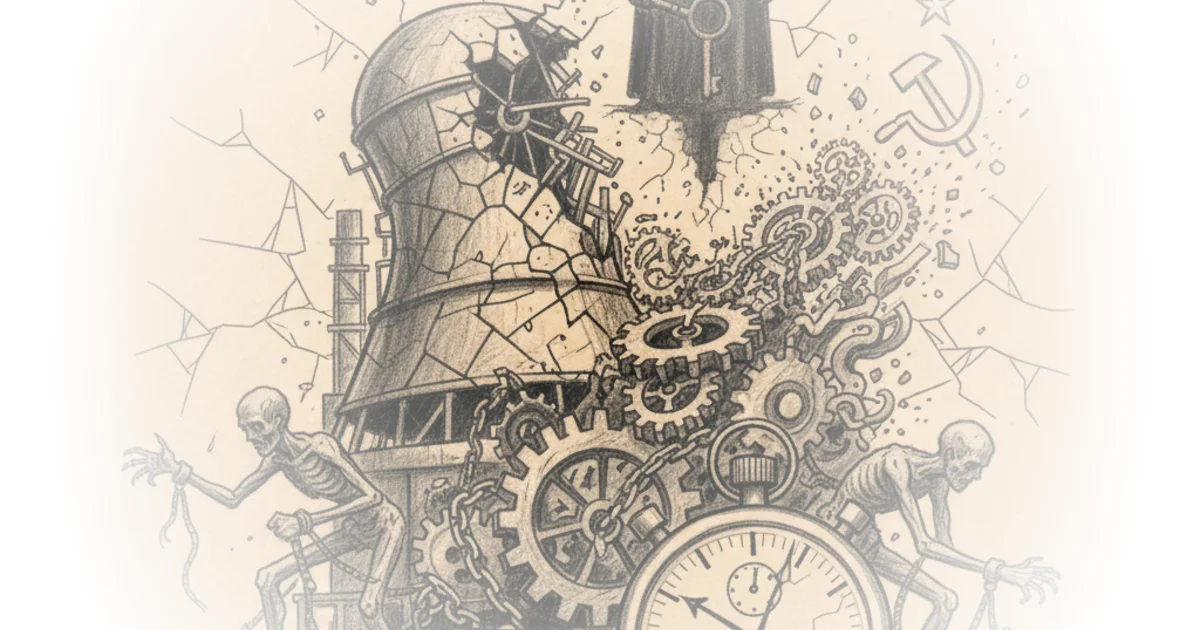

Forty years after the world's worst nuclear catastrophe, a new analysis from Reason cuts through the noise of sensationalism and political theater to deliver a starker, more structural truth: the explosion at Chernobyl was not an inevitable failure of nuclear physics, but a predictable outcome of a specific political economy. The piece argues that while the RBMK reactor design was flawed, the true disaster was manufactured by a system that prioritized bureaucratic survival over human life, a dynamic that remains dangerously relevant as energy policy debates heat up globally today.

The Anatomy of a Preventable Catastrophe

The article begins by dismantling the myth of Soviet technological invincibility. Long before the 1:23 a.m. explosion on April 26, 1986, state media had cultivated an image of absolute safety. "In 1983, state-sponsored news agency Novosti reported that Soviet scientists had estimated the probability of a nuclear accident involving a radioactive discharge at one in 1 million," Reason reports. This hubris was not merely propaganda; it was a systemic blindness. The piece details how the disaster was triggered by a botched safety test on a reactor design with a fatal flaw known as a "positive void coefficient": when the cooling water boiled into steam, reactivity rose instead of falling.

The narrative is chilling in its technical precision. Operators, struggling to maintain power levels for the test, withdrew control rods to compensate for a power drop. When they finally attempted to shut down the reactor, the graphite tips on those rods, rather than absorbing neutrons, initially accelerated the reaction. "The only way of stopping the nuclear reaction was for the reactor to rearrange itself: which it did," the article quotes lead investigator Valery Legasov. That rearrangement took the form of two massive explosions, which blew off the reactor's 2,000-ton cover plate and spewed 50 tons of radioactive material into the atmosphere.

Secrecy, then, is inimical to safety, for with secrecy about accidents, one can only learn from one's own mistakes and not from the mistakes of others.

The human cost was immediate and horrific. Two workers died at once, and 28 plant workers and firefighters succumbed to acute radiation poisoning within months, their bodies so radioactive they required burial in lead and concrete. Yet the article emphasizes that the tragedy was compounded by a delayed evacuation. Soviet officials, terrified of admitting failure, waited 36 hours to evacuate the 45,000 residents of Pripyat. Meanwhile, the plume drifted across Europe, and the outside world learned of the accident not from Moscow but from radiation alarms in Sweden.

The Cost of Central Planning

The core of Reason's argument shifts from the mechanics of the meltdown to the mechanics of the Soviet state. The piece posits that the disaster was a direct product of "central planning and totalitarian secrecy." The decision to build these plants without the expensive containment structures standard in the West was not a technical oversight but a calculated economic choice. Containment would have raised costs by 25 to 30 percent, threatening the rigid timelines of the Party's five-year plans.

"Priority was given to the solution that was safe for the officials, but which subsequently created a threat to people's lives," the article notes, citing Russian researchers. This bureaucratic calculus meant that safety was sacrificed to protect the careers of planners and managers. The KGB, rather than ensuring safety, functioned as a censor, embedding spies to suppress reports of defects. A 1983 memo from a KGB lieutenant colonel had explicitly warned that the stations were "the most dangerous with regards to their future use, which could have alarming consequences," yet this warning was buried.

This systemic opacity meant that lessons from previous accidents, such as the 1957 explosion at Chelyabinsk, were never shared. The culture of fear prevented the feedback loops necessary for safety. "Excessive secrecy is characteristic of all totalitarian regimes and is one of their principal weaknesses," argues the piece, quoting physicists Alexander Shlyakhter and Richard Wilson. Critics might argue that even in the most transparent democracies, complex industrial systems suffer from information silos and regulatory capture, suggesting that the problem is not unique to totalitarianism. However, the scale of the cover-up at Chernobyl, where even the deputy chief engineer was unaware of prior reactor failures, points to a severity of institutional dysfunction that goes beyond mere bureaucratic inertia.

Separating Myth from Reality

In its final section, the commentary tackles the lingering myths surrounding the death toll. The article pushes back against alarmist estimates, such as a Greenpeace report claiming nearly a million deaths, citing more rigorous scientific analyses. The International Agency for Research on Cancer estimates 16,000 cancer deaths across Europe by 2065, a number that is tragic but nearly two orders of magnitude lower than the million-death claim and the other apocalyptic figures often cited.

"The vast majority of the population need not live in fear of serious health consequences from the Chernobyl accident," the piece quotes from a 2008 UN report. The most significant health impact was thyroid cancer in children, caused by drinking milk contaminated with radioactive iodine. A simple public health intervention, banning the sale of fresh milk, could have mitigated this, but Soviet authorities, fearing panic, refused to issue warnings. The article concludes that while the exclusion zone remains a haunting landscape of abandoned schools and decaying infrastructure, the disaster's legacy is a testament to the dangers of a system that values control over truth.

Bottom Line

Reason's analysis succeeds by refusing to let the disaster become a mere footnote in Cold War history or a sensationalized horror story; instead, it frames Chernobyl as a case study in the lethal consequences of suppressing information and prioritizing political expediency over engineering reality. The piece's greatest strength is its unflinching look at how the RBMK reactor's design flaws were exacerbated by a culture of fear, while its most vital warning is that the mechanisms of secrecy and bureaucratic self-preservation are not confined to a bygone era but remain a persistent threat to industrial safety anywhere they are allowed to fester.