Andy Masley delivers a jarring diagnosis for the current era: the intense moral revulsion many feel toward chatbots isn't about the technology's actual danger, but about the terrifying loss of social status it exposes. While most analysts debate the technical capabilities of large language models, Masley argues that the real panic stems from a psychological crisis where raw information is stripped of its traditional high-status and low-status human gatekeepers.

The Contradiction of Panic

Masley begins by dismantling the prevailing narrative that chatbots are simultaneously useless and demonic. He identifies a "chatbot moral panic view" that relies on three logically incompatible beliefs: that the tools are phenomenally stupid, that they cannot provide value by definition, and yet that they are inherently evil. "Chatbots are phenomenally stupid, useless, and incapable," Masley writes in summarizing the first belief, noting that it clashes with the fear that they are "demonic" and possess an "ominous and evil" nature beyond measurable harm. He points out the absurdity of this stance: "It seems like complaining that a rock can barely sing at all."

This framing is effective because it forces the reader to confront the irrationality of their own emotional reactions. The author suggests that if the technology were truly as useless as critics claim, it wouldn't warrant such deep moral outrage. The panic, Masley argues, is a post-hoc justification for a deeper discomfort. Critics might note that this dismissal of "moral panic" risks underestimating genuine, non-status-based concerns regarding privacy and data exploitation, yet the core observation about the intensity of the reaction remains a compelling lens.

The Status Vacuum

The heart of Masley's argument is a psychological theory: chatbots feel dangerous because they sever the link between information and the human figures we use to judge it. He draws a sharp parallel to a personal tragedy involving a friend who joined a cult, noting that the friend was "very hyper-sensitive to his personal sense of status" and used "big flashy contrarian ideas" to feel powerful. Masley recalls how his friend would explode into monologues about topics like solarpunk (a movement often associated with hopeful, decentralized futures) to position himself as a "special arbiter of truth." The friend, Masley notes, claimed solarpunk was "fascist" based on an "incomprehensible pile of reasons," building a conversational wall that excluded others.

This anecdote serves as a microcosm for broader social dynamics. Masley argues that in normal discourse, we rely on "high and low-status people" to validate our beliefs. When we discuss climate change, for instance, the conversation rarely stays on "Watt-hours or milliliters of water." Instead, it shifts to figures like Elon Musk or Mark Zuckerberg. "If you can associate me with Elon or Zuckerberg, you've cast me out as the bad guy and won the argument," Masley writes. The chatbot disrupts this by offering answers without a human face to attack or worship.

Chatbots remove our ability to associate information with specific high and low-status people.

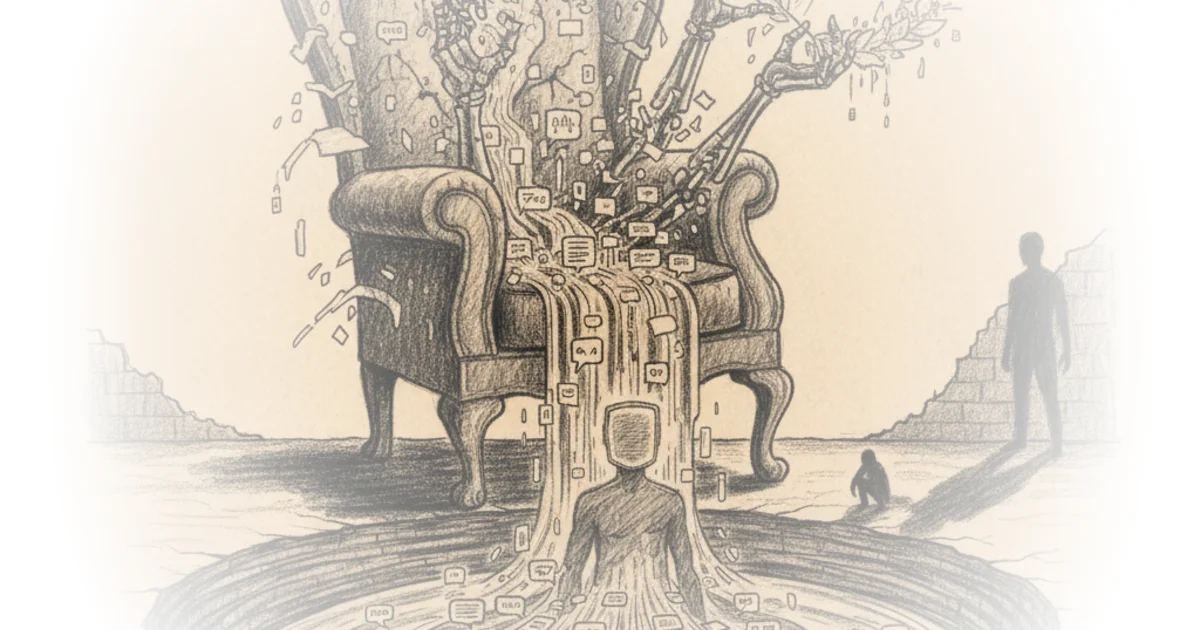

This insight is particularly potent when viewed through the lens of historical moral panics. Just as the Frankfurt School theorist Theodor W. Adorno analyzed how mass culture could manipulate its audience, Masley suggests we are now manipulating ourselves by clinging to status markers to avoid the hard work of evaluating facts. The chatbot, by providing information without a "cool jacket" or a famous name, leaves us naked before the data. Masley writes, "Raw information without an associated high or low-status person is dangerous to entertain, and you need to ignore it when possible."

The Illusion of Deep Belief

Masley extends this critique to how we consume culture and ideas, arguing that many of our "deeply held beliefs" are actually superficial talismans. He references the Norwegian author Karl Ove Knausgård to illustrate how people often engage with complex literature not to understand it, but to signal their own refinement. Masley cites Knausgård's account of reading modernist authors like Adorno and Blanchot, from whom he learned "nothing, understood nothing, but just having contact with them... led to a shifting of consciousness." The goal was enrichment through association: "I was someone who read Adorno!"

This behavior, Masley suggests, is why we buy books with titles like "Capital-Feudalism Beyond the Anthropocene"—not to read them, but to "be the type of people who display them on their shelves." He argues that these books function as barriers against "evil ideas," filtering content through a trusted, high-status author. When a chatbot bypasses this filter, it threatens the social ritual of ascending into the realm of the "elect." "The information in the book itself is often not actually as important as the book serving as a social ritual," Masley observes. This is a stinging critique of the modern intellectual landscape, suggesting that our identity is often built on the appearance of depth rather than the substance of understanding.

Bottom Line

Masley's most significant contribution is reframing the AI debate from a technical assessment of capabilities to a psychological autopsy of our need for status hierarchies. His argument is strongest when exposing the contradiction between dismissing chatbots as useless and fearing them as evil, but it arguably underestimates real, non-status-based risks such as algorithmic bias and misinformation. The reader should watch for how this status vacuum evolves: as AI becomes more integrated into daily life, will we develop new human gatekeepers, or will we be forced to confront the uncomfortable reality of evaluating ideas on their own merits? The answer will shape the next decade of public discourse.