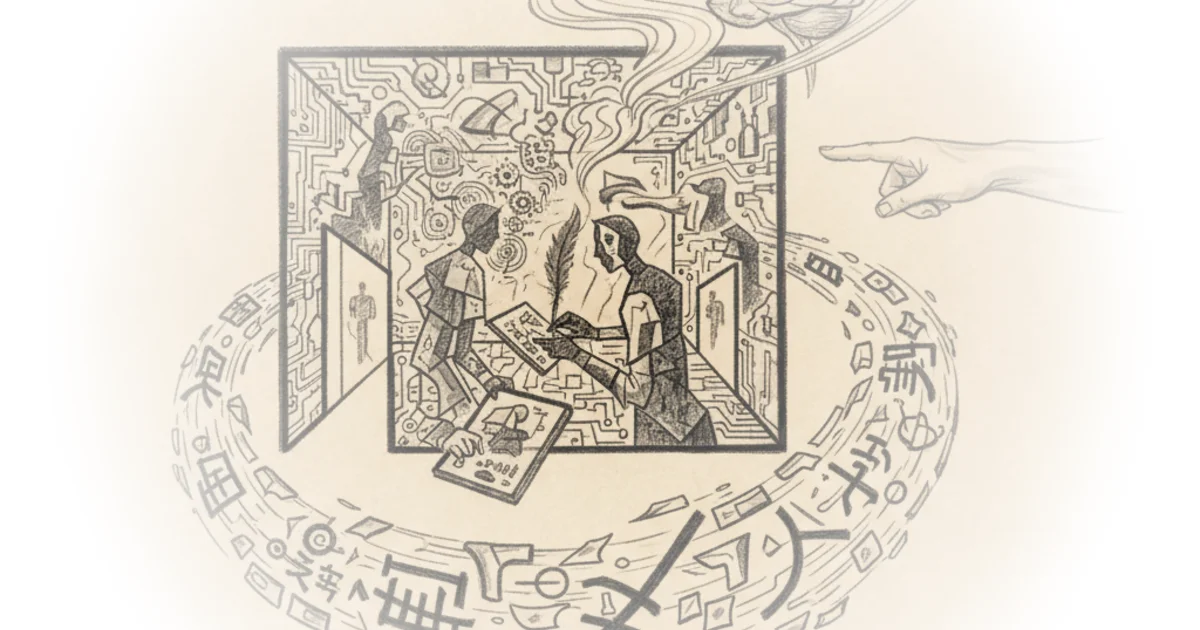

Jeffrey Kaplan dismantles the most seductive promise of modern technology: that a machine can truly think. In a rigorous breakdown of John Searle's famous "Chinese Room" argument, Kaplan argues that the distinction between manipulating symbols and understanding meaning is not just a technical detail, but a fundamental wall that digital computers cannot cross. For busy readers navigating an era of hype around artificial intelligence, this piece offers a necessary philosophical brake, making the case that passing a test is not the same as possessing a mind.

The Hardware Trap

Kaplan begins by setting the stage with a clear, almost surgical definition of the target: "Strong AI." He explains that this theory posits the human mind is merely software running on the biological hardware of the brain. "This view that is strong AI has the consequence that there is nothing essentially biological about the human mind," Kaplan writes. He illustrates the radical nature of this claim by noting that, under this theory, "any physical system whatever that had the right program with the right inputs and outputs would have a mind in exactly the same sense that you and I have minds."

The author's framing here is sharp. He reduces a complex philosophical debate to a simple equation: if the program and its inputs and outputs match, what the system is made of doesn't matter. Kaplan points out the absurdity of this logic by suggesting that "if you made a computer out of old beer cans powered by windmills, if it had the right program, it would have to have a mind." This reductio ad absurdum is effective because it forces the reader to confront the implications of functionalism, the idea that mental states are defined solely by their function rather than their substance. Critics might argue that this dismisses the emergent properties of complex systems, but Kaplan's goal is to expose the gap between simulation and reality.

The point is not that for all we know, it might have thoughts and feelings, but rather that it must have thoughts and feelings because that is all there is to having thoughts and feelings.

Syntax Without Semantics

To show why the "beer can computer" cannot actually think, Kaplan introduces the critical distinction between syntax and semantics. He defines syntax as the formal features of a language (the shape of letters, the order of symbols), while semantics is the actual meaning. "You can know lots of things about these symbols right here... You know something about their form, their shape, right? Those formal features... That's what Searle means by syntax," Kaplan explains. In contrast, semantics is the "actual meaning of these symbols... what these symbols represent."

This distinction is the engine of the entire argument. Kaplan uses the example of a Turing machine, a theoretical device that reads and writes symbols on a tape, one cell at a time, according to a fixed table of rules. In his example the symbols are ones and zeros, and he notes that the machine "doesn't know that these ones and zeros represent numbers. It doesn't know anything about numbers." It simply follows rules based on the shape of the symbols. "It can just recognize the syntax. It can just recognize the shapes of the symbols and perform functions as a result of that," he writes. The machine can successfully add three and two, producing the correct answer, yet it possesses zero understanding of what addition means. This is a powerful illustration of the limits of computation: a system can be perfectly functional without ever grasping the content it processes.
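To make the point concrete, here is a minimal sketch of such a machine in Python. It is not the machine from Kaplan's lecture: the unary encoding (a block of 1s for each number, separated by a single 0), the state names, and the rule table are all assumptions chosen for illustration. What it shows is that every step is decided purely by the current state and the shape of the symbol under the head.

```python
# Illustrative sketch only: the encoding, state names, and rule table are
# assumptions for this example, not taken from Kaplan or Searle.
BLANK = "_"

# (state, symbol read) -> (symbol to write, head movement, next state)
RULES = {
    ("scan", "1"): ("1", +1, "scan"),         # skip over the first block of 1s
    ("scan", "0"): ("1", +1, "to_end"),       # overwrite the separator with a 1
    ("to_end", "1"): ("1", +1, "to_end"),     # skip over the second block of 1s
    ("to_end", BLANK): (BLANK, -1, "erase"),  # reached the end, step back one cell
    ("erase", "1"): (BLANK, 0, "halt"),       # erase one 1 to undo the extra mark
}


def run(tape_text, max_steps=1000):
    """Apply RULES to the tape until the machine halts; return the final tape."""
    tape = list(tape_text)
    head, state = 0, "scan"
    for _ in range(max_steps):
        if state == "halt":
            break
        if head == len(tape):
            tape.append(BLANK)  # the tape is extended with blanks on demand
        write, move, state = RULES[(state, tape[head])]
        tape[head] = write
        head += move
    return "".join(tape).strip(BLANK)


# "111" and "11" separated by "0": we read this as 3 + 2, the machine does not.
result = run("111" + "0" + "11")
print(result)  # prints 11111
```

Nothing in `RULES` mentions numbers or addition. The reading of `11111` as "five" is supplied entirely by the person looking at the tape, which is the gap between syntax and semantics that the argument turns on.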

The Chinese Room Revealed

With these concepts established, Kaplan prepares the reader for the core thought experiment. The argument hinges on the idea that a digital computer is, by definition, "a very specific type of thing. All it is is a digit manipulator. All it is is a device that moves around symbols. That's it." He argues that because computers only ever operate on syntax, they can never achieve semantics. "It didn't understand anything about addition, didn't know anything about numbers, didn't have any meaning or semantics at all," Kaplan states of the Turing machine, yet it "successfully added three and two by just following some basic instructions."

This leads to the conclusion regarding strong AI. If the mind is just a program, and programs are just syntax manipulators, then the mind would have no semantics either. But Kaplan implies this is false because we do understand meaning. The thought experiment is designed to show that the theory that "having a mind just is running a certain program defined in terms of inputs and outputs" is flawed. The argument suggests that no matter how complex the program, if it is only shuffling symbols, it cannot generate a genuine understanding of what those symbols mean.

A digital computer is a very specific type of thing. All it is is a digit manipulator. All it is is a device that moves around symbols. That's it.

Bottom Line

Jeffrey Kaplan's commentary succeeds by stripping away the mystique of artificial intelligence to reveal a rigid logical constraint: syntax by itself is not semantics. The strongest part of the argument is its reliance on the Turing machine model to demonstrate that correct output does not require internal understanding. However, the piece's biggest vulnerability lies in its potential dismissal of emergent complexity; critics might argue that a sufficiently complex manipulation of syntax could eventually give rise to semantics in a way we don't yet understand. For the reader, the takeaway is clear: do not confuse a machine's ability to mimic human conversation with the possession of a human mind.