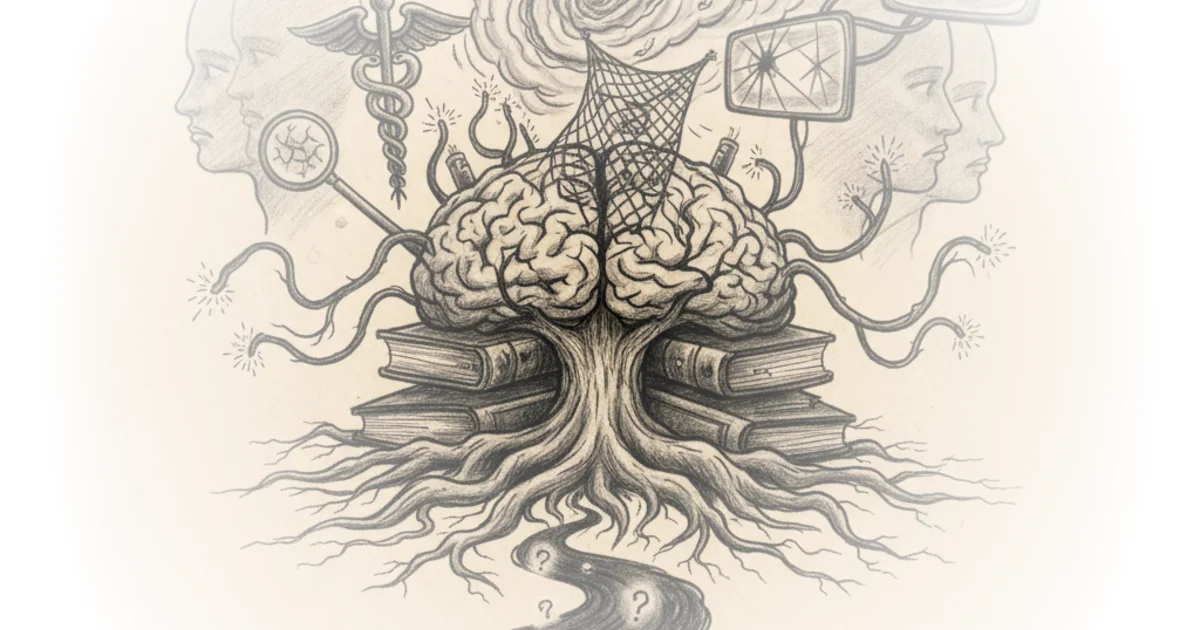

Rohin Francis dismantles the comforting myth that medical errors are merely rare accidents, arguing instead that they are the inevitable byproduct of human cognition colliding with complex, high-stakes data. In a field where the margin for error is non-existent, Francis exposes how the very tools doctors use to think—pattern recognition, heuristics, and intuition—are often the same mechanisms that lead them astray. This is not a takedown of the profession, but a necessary autopsy of the decision-making process that affects every patient who walks through a hospital door.

The Illusion of Objectivity

Francis begins by rejecting the notion that medicine is a pure science of facts, reframing it instead as a detective story where the clues are often misleading. He writes, "Diagnosing a patient is complicated. It's something you continue to learn and improve your entire career." This framing is crucial because it shifts the blame from individual incompetence to the inherent difficulty of the task. The author argues that doctors, like all humans, are susceptible to cognitive biases that distort their perception of risk and probability.

One of the most pervasive traps identified is the belief that innovation automatically equals improvement. Francis notes, "Medicines aren't like computer components. They don't keep getting better with every generation; there is no medical Moore's law." This distinction is vital in an era where patients, influenced by tech culture, demand the "latest" treatment. The author illustrates how drug companies exploit this "novelty bias" by tweaking molecules and rebranding them as superior, only to charge a premium for marginal or non-existent gains. Critics might argue that this view is too cynical and could stifle legitimate medical breakthroughs, but Francis's point stands: the history of medicine is littered with abandoned therapies that were once hailed as miracles.

There is no medical Moore's law. The history of medicine is littered with abandoned therapies that turned out to be garbage.

The Trap of First Impressions

The commentary then moves to the specific mechanics of diagnostic failure, using a fictionalized case study of a patient named "Timmy" to demonstrate how a doctor's initial instincts can derail the entire process. Francis describes "anchoring," where a physician latches onto a first impression (such as assuming a thin patient cannot have heart disease) and then discounts contradictory evidence. He writes, "Placing undue importance on your first instinct is called anchoring." This is a powerful explanation for why smart doctors make dumb mistakes: they are not ignoring data, they are filtering it through a biased lens.

This filtering is compounded by "automation bias," where clinicians trust computer readouts over their own clinical judgment. Francis observes, "Just as people trust their GPS sat-nav more than their own ability to read a map these days, she believes the computer." The danger here is that algorithms, while useful, can reinforce existing errors if the input data is flawed or if the doctor fails to apply critical thinking. The author also highlights "availability bias," where a doctor's recent studies or experiences skew the diagnosis toward what is fresh in their mind rather than what is statistically probable.

The Burden of Fear and Habit

Perhaps the most insightful section of the piece addresses the psychological pressures that drive over-treatment. Francis identifies "litigation bias," where the fear of being sued pushes doctors to order excessive tests to rule out worst-case scenarios. He writes, "He remembers a patient who sued him in whom he missed a heart problem, so now he places undue importance on ruling out heart disease in pretty much everyone he sees." This dynamic creates a system of defensive medicine that drains resources and exposes patients to unnecessary risks.

Furthermore, the author explores the "urge to do something," or commission bias, where doctors prescribe ineffective treatments simply to satisfy a patient's need for action. "The patient wants something done; the doctor wants to do something. They don't want to feel helpless," Francis explains. This is a profound admission of the emotional labor involved in medicine, where the desire to help can paradoxically lead to harm through over-intervention. The piece also touches on "familiarity bias," where specialists recommend the treatments they know best, regardless of whether a different approach might be superior.

The urge to do something or the desire to avoid any potential harm are both entirely natural and normal responses, and a lot of the time they're entirely correct.

The Bottom Line

Francis's strongest argument is that medical error is not a failure of character but a failure of human cognition that requires systemic safeguards, not just individual vigilance. The piece's greatest vulnerability is its reliance on fictionalized anecdotes, which, while illustrative, may oversimplify the nuanced reality of modern healthcare systems where electronic records and multidisciplinary teams mitigate some of these biases. Ultimately, the reader should watch for how medical institutions are adapting to these cognitive realities, moving from a culture of blame to one of structured decision-making support. This is a necessary conversation for anyone who wants to understand the true cost of being human in the practice of medicine.