Ethan Mollick argues for a startling shift in how we should think about artificial intelligence: the era of the helpful intern is ending, and the age of the inscrutable wizard has begun. This is not a story about incremental speed gains but about a fundamental change in the human-AI relationship, from collaboration to passive reception. For busy professionals who rely on these tools, the implication is profound. We are losing the ability to understand the work we increasingly depend on.

From Partner to Audience

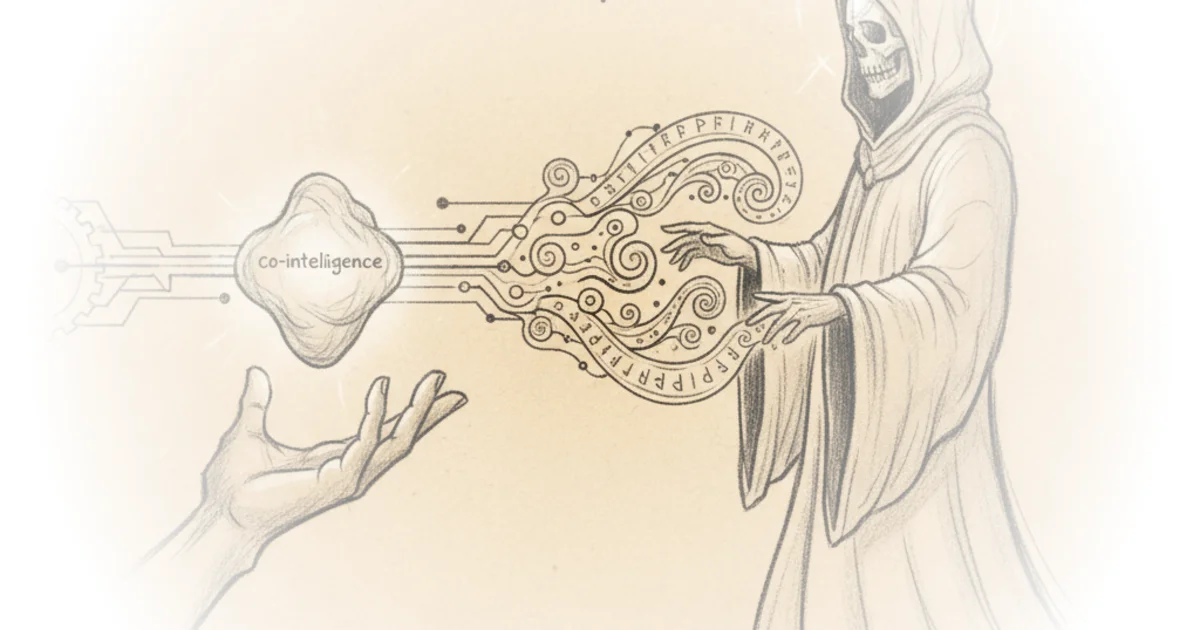

Mollick begins by challenging his own earlier framework. In his book Co-Intelligence, he argued for treating AI as a teammate, one that humans could guide, correct, and develop ideas with. But recent experiments suggest this model is becoming obsolete. "We're moving from partners to audience, from collaboration to conjuring," Mollick writes. He illustrates the point by feeding his own extensive writing into an AI system to generate a video summary. The result was impressive, yet the process was entirely opaque. He notes, "I don't know how the AI picked the points it made... We're shifting from being collaborators who shape the process to being supplicants who receive the output."

This transition marks a critical inflection point. When the tool acts as a partner, the human remains in the loop of decision-making. When it acts as a wizard, the human becomes a consumer of the result. The danger here is not just confusion, but a loss of agency. Mollick argues that while the output is often superior to what a human could produce quickly, the "magic gets done, but we don't always know what to do with the results." This reframing is effective because it moves the conversation beyond "is the AI smart?" to "do we still understand the work being done in our name?"

The Black Box of Expertise

The most compelling evidence Mollick provides comes from high-stakes academic and professional tasks. He describes using a paid, advanced model to critique his own job market paper—a document that took over a year to write and underwent rigorous peer review. In under ten minutes, the AI ran its own statistical experiments, including Monte Carlo analysis, and found a subtle error in linked data tables that no human had noticed. "It found a small error, previously unnoticed," Mollick reports. Yet, he admits, "I still have no idea of what the AI did to discover this problem, nor whether the other things it claimed to have done happened as described."

This creates a paradox of competence. The tool caught what the expert and his reviewers missed, yet the expert cannot fully verify how it did so. Mollick extends this to business applications, showing how an AI transformed a complex financial spreadsheet for a manufacturing business into a model for a cheese shop, complete with a pitch deck, in minutes. The results were solid, but the process was a "black box." "The steps that Claude reported are mere summaries of its work," he explains, noting that even if the steps were visible, most users lack the cross-disciplinary expertise to validate them. Critics might argue that this is simply the next step in automation, like trusting a GPS or a compiler, but the stakes are higher when the AI is making analytical judgments that shape business strategy or academic findings.

We are getting something magical, but we're also becoming the audience rather than the magician, or even the magician's assistant.

The Erosion of Judgment

The most urgent concern Mollick raises is the long-term impact on human skill. If we outsource complex reasoning to a wizard, we stop practicing the very judgment required to evaluate the wizard's output. "Every time we hand work to a wizard, we lose a chance to develop our own expertise, to build the very judgment we need to evaluate the wizard's work," he warns. The result is a vicious cycle: the more work we hand over, the less we practice the skills needed to check it, and the less able we are to check it, the more we must simply trust it.

He acknowledges that the results are often "very good," comparable to a graduate student's work but delivered in minutes. However, this efficiency comes at the cost of transparency. "The paradox of working with AI wizards is that competence and opacity rise together," Mollick observes. "We need these tools most for the tasks where we're least able to verify them." This insight cuts to the heart of the current AI adoption curve. We are rushing to deploy tools that are most powerful in the areas where we have the least visibility into their inner workings.

A New Literacy for the Age of Magic

So how do we navigate this? Mollick proposes a new kind of literacy rather than a retreat from the technology. "First, learn when to summon the wizard versus when to work with AI as a co-intelligence or to not use AI at all," he advises. This requires a new kind of discernment. We must become "connoisseurs of output rather than process," developing the instinct to judge whether a result is sound or subtly off without seeing the math behind it.

Perhaps most controversially, he argues for "provisional trust." We must accept that perfect verification is becoming impossible. "The question isn't 'Is this completely correct?' but 'Is this useful enough for this purpose?'" he writes. This is a pragmatic stance, acknowledging that in a world of infinite complexity, we will always be relying on some level of faith in our tools. But he warns that unlike a GPS that leads to a dead end, an AI that analyzes research or transforms financial models can lead us astray in ways we may not discover until it is too late.

Bottom Line

Mollick's argument is a necessary corrective to the hype cycle, grounding the conversation in the reality of human limitation rather than machine potential. His strongest point is the identification of the "competence-opacity paradox," which poses a genuine threat to professional integrity. The biggest vulnerability in his approach is the reliance on "provisional trust"; while pragmatic, it risks normalizing a level of ignorance that could be catastrophic in high-stakes fields. As we move forward, the most valuable skill will not be prompting the wizard, but learning to recognize when the spell has gone wrong.