Then & Now reframes the current AI boom not as a sudden technological miracle, but as the latest chapter in a century-long struggle to define what intelligence actually is. The piece's most striking claim is that the "theft" of human creativity by algorithms is merely the culmination of a historical failure to understand that intelligence is embodied, messy, and deeply rooted in the physical world. For busy readers navigating the hype cycle, this offers a crucial corrective: the current crisis isn't just about copyright; it's about the fundamental architecture of how we build machines.

The Myth of the Disembodied Mind

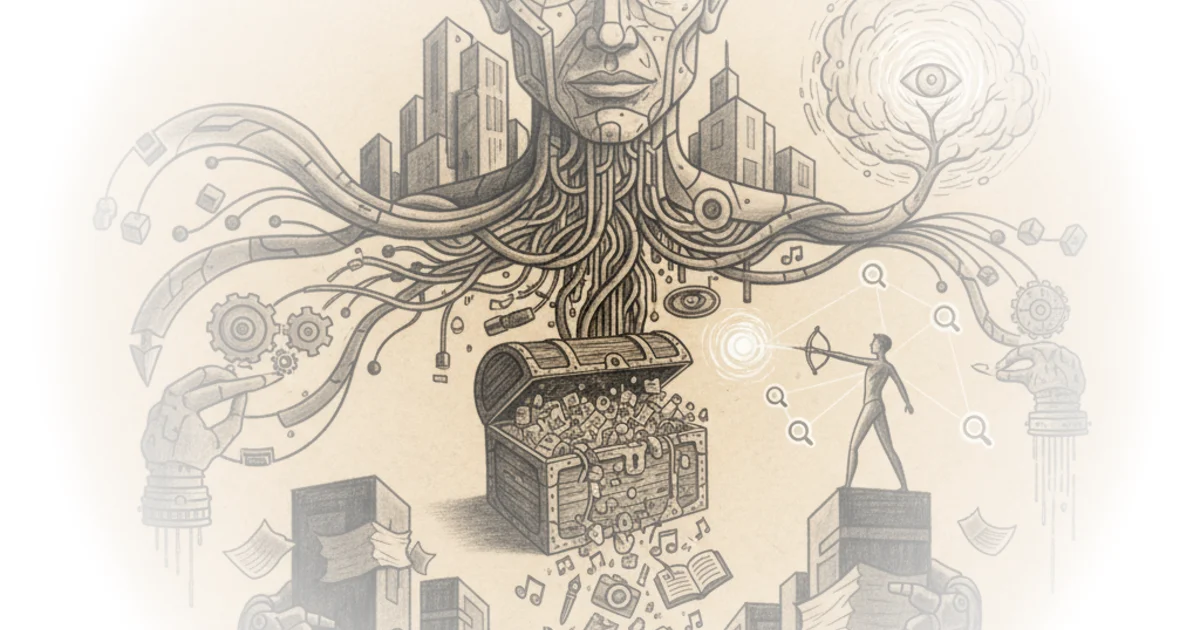

The narrative begins by dismantling the popular notion that intelligence is an abstract, transcendent force. Then & Now says, "we have this idea that intelligence is this abstract, transcendent, disembodied thing, something unique and special, but we'll see how intelligence is much more about the deep, deep past and the far, far future, something that reaches out powerfully through bodies, through people, through infrastructure, through the concrete empirical world." This framing is essential because it challenges the Silicon Valley fantasy that AI is a pure software problem. By grounding intelligence in the "concrete empirical world," the author suggests that any system ignoring physical reality is destined to fail.

The piece traces this back to the 1950s, when the media dubbed early computers "electronic brains." Then & Now notes that even then, the debate centered on whether machines could truly think, a question Alan Turing famously tackled with his test. The author argues that the field has been haunted by the "impossible totality" of trying to map the entire world into code. This historical context is vital; it shows that today's hype cycles and "AI winter" fears are not new, but a recurring pattern of overestimation followed by the realization that logic alone cannot capture human nuance.

"The whole problem of building an intelligent system is reduced to one of constructing a logical description of what the robot should do and such a system is transparent to understand why it did something."

The Trap of Symbolic Logic

Then & Now details the "symbolic approach," which dominated early research. The core argument here is that early pioneers believed they could solve intelligence by creating a digital replica of the human mind using strict logic. As Then & Now puts it, "if the traffic light is red, then stop the car; if hungry, then eat; if tired, then sleep." This binary, true-or-false logic seemed intuitive to programmers because it aligned with the on/off nature of transistors.
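To make the if-then style concrete, here is a minimal sketch in Python. The rule table reuses the three examples from the quote; the names `RULES` and `decide` and the dictionary-of-facts representation are illustrative assumptions, not anything taken from the video or from a historical system.

```python
# Hypothetical rule table: each entry pairs a yes/no condition with an action.
# The three rules come from the quoted examples; the structure is an assumption.
RULES = [
    ("traffic_light_red", "stop the car"),
    ("hungry", "eat"),
    ("tired", "sleep"),
]


def decide(facts: dict[str, bool]) -> list[str]:
    """Return the action of every rule whose condition is marked True.

    A condition that is missing from `facts` is treated as False, so any
    situation the rule author did not anticipate produces no action at all.
    """
    return [action for condition, action in RULES if facts.get(condition, False)]


if __name__ == "__main__":
    print(decide({"traffic_light_red": True, "tired": True}))
    # An amber light matches no rule, so the system has nothing to say.
    print(decide({"traffic_light_amber": True}))
```

The second call hints at the weakness the piece goes on to describe: anything outside the hand-written conditions falls through silently, so covering more of the world means writing more rules.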

However, the commentary highlights the fatal flaw: the real world is not binary. The author illustrates this with the "combinatorial explosion" problem, using the Tower of Hanoi puzzle to show how complexity grows exponentially: solving it with n discs takes at least 2^n - 1 moves, so each added disc roughly doubles the work. Then & Now says, "for 64 discs I can't read out the number because if one disc was moved each second it would take almost 600 billion years to complete the game." This is a powerful metaphor for the limits of rule-based systems. The argument holds up well here; it demonstrates that enumerating every possible scenario quickly becomes computationally intractable.
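The 600-billion-year figure can be checked with a few lines of Python. This is a sketch under stated assumptions: the function names are hypothetical, the recursive solver is the standard textbook solution rather than anything shown in the video, and a year is taken as 365.25 days.

```python
from typing import Iterator

# Assumption: one year is 365.25 days of 86,400 seconds.
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60


def hanoi_moves(discs: int) -> int:
    """Minimum number of moves needed for a Tower of Hanoi with `discs` discs."""
    return 2 ** discs - 1


def solve(discs: int, source: str = "A", target: str = "C",
          spare: str = "B") -> Iterator[tuple[str, str]]:
    """Yield each (from_peg, to_peg) move of the standard recursive solution."""
    if discs == 0:
        return
    # Move the smaller stack out of the way, move the largest disc, then restack.
    yield from solve(discs - 1, source, spare, target)
    yield (source, target)
    yield from solve(discs - 1, spare, target, source)


def years_at_one_move_per_second(discs: int) -> float:
    """Time to finish the puzzle at one move per second, in years."""
    return hanoi_moves(discs) / SECONDS_PER_YEAR


if __name__ == "__main__":
    # Small cases can be enumerated; the move count doubles (plus one) each step.
    for n in range(1, 6):
        print(n, len(list(solve(n))))
    print(hanoi_moves(64))
    print(f"{years_at_one_move_per_second(64):.3e} years")
```

For 64 discs the move count is 2^64 - 1, which at one move per second works out to roughly 585 billion years, in line with the "almost 600 billion" quoted above. Only the closed-form count is used for 64 discs; actually enumerating the moves is exactly what cannot be done.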

Critics might note that modern AI has largely abandoned this symbolic approach in favor of statistical learning, suggesting the historical critique is somewhat moot. Yet, the author's point remains relevant: the current models, while different in method, still struggle with the same "shades of uncertainty" and the inability to truly understand the context of the physical world.

The Bottleneck of Knowledge

The piece then moves to the "expert systems" of the 1980s, where researchers tried to feed specific domain knowledge into machines. While initially successful in diagnosing blood diseases or analyzing chemicals, the approach hit a wall. Then & Now quotes researcher Edward Feigenbaum, who described the knowledge acquisition process as a "cottage industry" that was "tedious, time-consuming, and expensive." This is the crux of the "theft" narrative: the only way to make machines "intelligent" was to manually extract and codify human expertise, a process that is inherently slow and fragile.

The author argues that this bottleneck forced a shift toward the massive data scraping we see today. If you cannot manually code the rules of the world, you must ingest the world itself. Then & Now writes, "the knowledge is currently acquired in a very painstaking way... in the decades to come we must have more automatic means for replacing what is currently a very tedious, time-consuming, and expensive procedure." This reframes the current controversy over data scraping not as a new ethical dilemma, but as the inevitable, desperate solution to a decades-old engineering problem.

"The problem of knowledge acquisition is the key bottleneck problem in artificial intelligence."

Bottom Line

Then & Now's strongest contribution is linking the historical failure of symbolic logic to the current reality of data-hungry algorithms, suggesting that the "theft" of human work is a structural necessity of the field, not just a corporate greed issue. The piece's biggest vulnerability is its tendency to romanticize the "human" element of intelligence without fully addressing how statistical models might eventually replicate it in ways we don't yet understand. Readers should watch for how the next generation of AI attempts to solve the "embodiment" problem that has plagued the field since the 1950s.