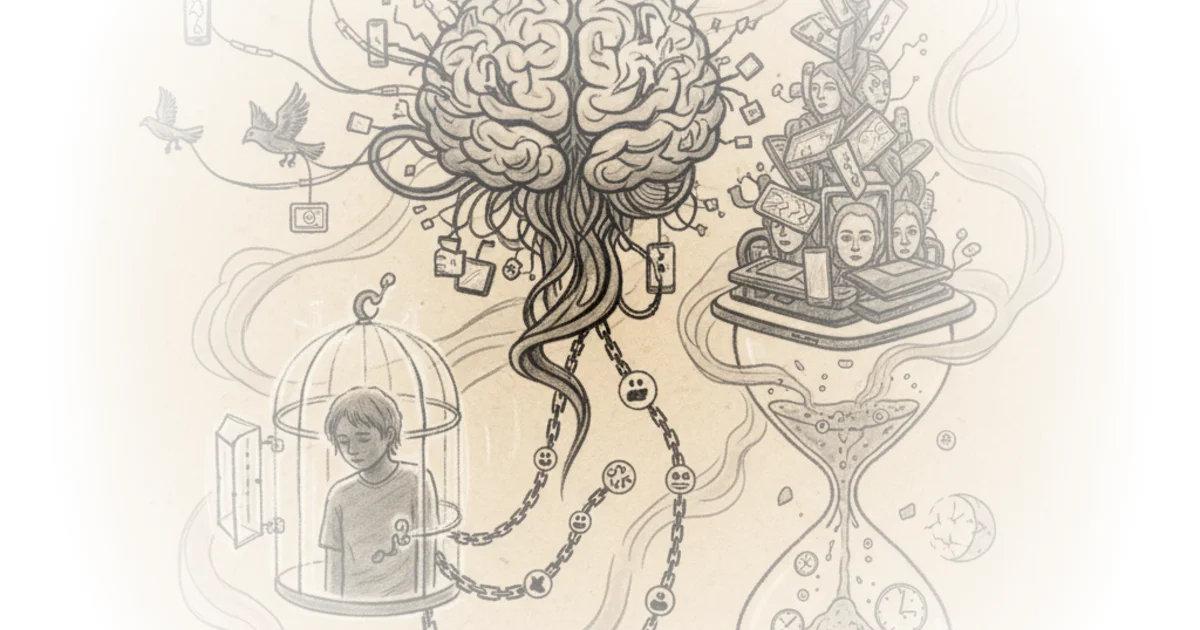

Jacqueline Nesi cuts through the noise of the endless "social media is destroying our children" debate by arguing that we are asking the wrong question. Instead of obsessing over whether these platforms cause clinical mental illness, a causal claim that is notoriously difficult to prove, she urges us to focus on the tangible, immediate dangers that are already in plain sight. For busy parents and policymakers, this shift from abstract anxiety to concrete safety is not just a semantic tweak; it is a necessary pivot toward actionable protection.

The Trap of Vague Metrics

Nesi identifies a critical flaw in the current discourse: the fixation on mental health as the sole litmus test for harm. She writes, "When we frame the conversation about social media entirely around mental health, I'm concerned we've done ourselves a disservice." This framing has created a paralysis where lawmakers and parents wait for definitive causal proof that simply cannot be generated with current research methods. The result is a stalemate where platforms operate unchecked while society argues over effect sizes.

The author argues that this obsession with "mental health" is counterproductive because the term itself is too broad to be useful. As Nesi puts it, "Asking whether anything is 'bad' for 'mental health' is going to get you a big, fat 'it depends,' because that's how mental health works." By treating mental health as a binary switch—either broken or fine—we ignore the spectrum of distress and the specific, non-clinical risks that children face daily. This approach allows the industry to dismiss concerns by pointing to the lack of a universal diagnosis, while the actual problems fester.

Critics might argue that dismissing the mental health angle ignores the long-term developmental impacts of chronic stress and anxiety, which do not always present as immediate clinical disorders. However, Nesi's point is not that mental health doesn't matter, but that using it as the only barometer for regulation is a strategic error that delays necessary intervention.

The Obvious Dangers We Ignore

Once the mental health fog is lifted, Nesi directs our attention to a litany of specific, documented hazards that do not require a psychological diagnosis to identify as harmful. She lists a series of alarming statistics derived from internal research and user surveys, including the fact that "45% of teens say they spend too much time on social media, and a quarter of girls who use TikTok say it gets in the way of their sleep every night."

The piece highlights that the platforms are exposing minors to content and interactions that society already deems inappropriate for them in the physical world. Nesi notes, "The majority of girls who use Instagram and Snapchat say they've been contacted by a stranger on these platforms in ways that make them uncomfortable." She further details the prevalence of unwanted sexual advances, with internal Meta research showing that nearly 14% of teens aged 13 to 15 received such advances in a single week, 93.8% of which came from strangers.

"We do not go around warning 13 year olds never to open a bank account because it's BAD for their MENTAL HEALTH. We just..don't let them do it."

This comparison is the piece's most potent rhetorical move. Nesi argues that we have established cultural and legal norms for other activities based on judgment, safety, and appropriateness, not just mental health outcomes. We prevent children from drinking alcohol or working full-time jobs not because we have proven these activities cause depression, but because they are developmentally inappropriate or physically dangerous. Yet, we allow children to sign legal contracts (user agreements) and access a digital environment rife with gambling content, violence, and unwanted nudity.

Moving Beyond Panic to Protection

The author contends that the current messaging strategy is failing because it relies on fear rather than facts. "Instead of offering parents accurate information and realistic guidance, we scare and confuse them," Nesi writes. This panic-driven approach often backfires, creating a self-fulfilling prophecy where teens feel doomed by the very warnings meant to protect them. The solution, she suggests, is to stop shouting that social media is "destroying the children" and start addressing the specific mechanisms of harm.

Nesi emphasizes that for the majority of average, healthy teens, social media will not suddenly cause the onset of a mental illness. "Most average, healthy teens, with appropriate boundaries in place, are going to be just fine," she asserts. This nuance is vital; it prevents the demonization of the technology itself while demanding accountability for the specific features that facilitate harm. The goal is to replace vague warnings with specific, accurate information on risks, allowing for targeted regulation rather than blanket bans or inaction.

A counterargument worth considering is that the line between "mental health" and "safety" is often blurred, as exposure to unwanted sexual content or violent imagery can indeed precipitate mental health crises. However, Nesi's framework remains robust: even if the mental health link is hard to prove, the exposure itself is a violation of safety norms that warrants immediate action regardless of the psychological outcome.

Bottom Line

Jacqueline Nesi's argument is a necessary corrective to a debate that has become bogged down in hard-to-prove claims about clinical mental illness. Her strongest contribution is the demand to treat social media safety with the same regulatory rigor we apply to physical safety, focusing on concrete harms like stranger contact and unwanted content rather than abstract mental health metrics. The biggest vulnerability in this approach is that focusing on specific harms might let platforms off the hook for the broader, cumulative psychological effects of algorithmic design. Even so, it remains the most viable path forward for immediate policy action.