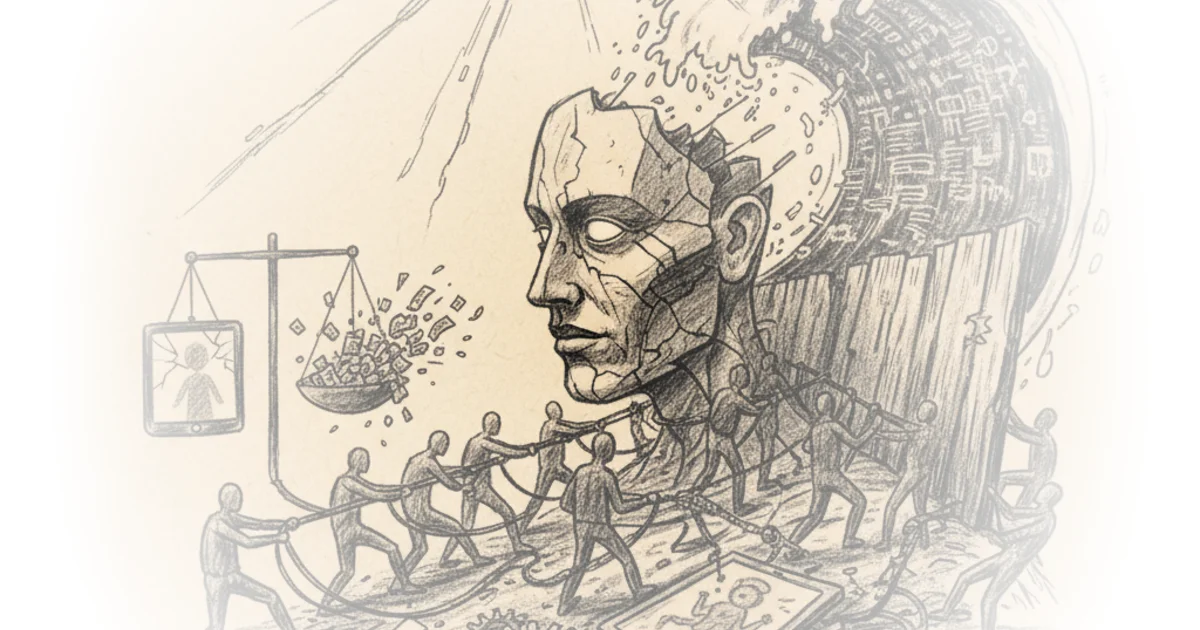

Matt Stoller delivers an electrifying account of how ordinary citizens are finally piercing the legal shield of Big Tech. He argues that recent jury verdicts against Meta and Google are not legal anomalies but signs of a fundamental shift in how the public perceives corporate power, one that is dismantling the "aura of invincibility" that has protected these companies for decades.

The Jury as a Check on Power

Stoller frames the recent Los Angeles and New Mexico trials as a watershed moment where twelve ordinary people looked at the inner workings of social media giants and said "enough." He writes, "In many ways, they are the closest we can get to ordinary Americans expressing their informed views of corporate power." This perspective is crucial because it moves the debate from abstract policy papers to the visceral reality of human harm. The Los Angeles jury found the companies liable for addicting a child, while the New Mexico jury penalized Meta $375 million for creating a public nuisance that enabled predators.

The author highlights the stark contrast between the companies' legal defenses and the jurors' reaction to the evidence. Stoller notes that Meta executives argued their products were not a major factor in the victim's distress, blaming parental neglect instead. The jury was not persuaded, and it found the company's internal documents, which showed a deliberate focus on keeping young users addicted, to be damning. Of one executive's testimony, Stoller writes, "The jury found these comments callous, but also thought him dishonest." This finding is significant because it strips away the corporate veneer of "neutral platforms" to reveal a business model built on exploitation.

"We wanted them to feel it. We wanted them to realize this was unacceptable."

Critics might argue that jury verdicts are unpredictable and that these specific cases rely on unique fact patterns that may not hold up on appeal. However, Stoller counters this by pointing out the strategic nature of the plaintiffs' approach. He observes that "it usually takes a couple of attempts to figure out how to make the right argument," suggesting these wins are the result of a maturing legal strategy rather than a fluke. The sheer volume of similar cases now poised to use this evidence indicates a systemic shift, not an isolated incident.

The Legal Shield Cracks

The core of Stoller's analysis lies in his dissection of why accountability has been so elusive for so long. He traces the problem to "libertarian legal assumptions" that have treated internet platforms as mere vessels for speech, protected by the First Amendment and Section 230 of the Communications Decency Act. He writes, "Section 230 says that if an 'interactive computer service' is hosting someone else's speech, they are not liable for what that third party says." For decades, this interpretation allowed companies to avoid responsibility for the harms their algorithms amplified.

Stoller effectively dismantles the argument that regulating these platforms is an attack on free speech. He points out the hypocrisy of legal elites who claim "the internet is on trial" while ignoring the specific, algorithmic design choices that cause harm. He notes that "legal elites have a reverence for a certain corporate-friendly version of the mid-20th century First Amendment," a view that fails to distinguish between a newspaper and a product designed to addict users. This framing is powerful because it exposes the disconnect between high-minded legal theory and the reality of eating disorders and suicide among children.

The author draws a sharp distinction between the old view of platforms as publishers and the new reality of them as product manufacturers. "Facebook is more like a firm that sells microphones than a newspaper publisher," Stoller argues, suggesting that product liability laws, not free speech protections, should apply. This shift in legal theory is what allowed the recent verdicts to succeed. He references the "Blackout Challenge" on TikTok, where the algorithm promoted videos encouraging self-asphyxiation, noting that "TikTok reads 230... to permit casual indifference to the death of a ten-year-old girl."

"The result is many free speech advocates have adopted a deeply immoral and corporatized vision of speech."

While Stoller is critical of the legal establishment, he acknowledges that the path forward is uncertain. He admits that "these cases will go on appeal, and circuit courts could overturn them." The defense will likely lean heavily on the argument that holding platforms liable for algorithmic recommendations violates the First Amendment. However, the momentum seems to be shifting, with some Supreme Court justices voicing skepticism of expansive Section 230 claims.

The End of Invincibility

The ultimate impact of these trials, according to Stoller, is psychological as much as it is legal. The stock market has already reacted, with Meta's share price dropping as investors recognize the strategic defeat. But the deeper consequence is the restoration of the rule of law over corporate oligarchy. Stoller writes, "Either the oligarchs win, and the public will be completely neutered in our ability to have any say over our society. Or they will lose, and be subject to the rule of law."

He connects this moment to a broader populist turn, noting that "72% of Americans now see their role as sending messages to corporations." This sentiment is the driving force behind the juries' decisions to hold the companies accountable. The verdicts are a signal that the era of unchecked corporate power is ending, replaced by a system where institutions must answer to the people they serve.

Bottom Line

Stoller's strongest argument is his reframing of these trials not as isolated legal disputes, but as a democratic correction to decades of regulatory capture. His biggest vulnerability is the uncertainty of the appellate process, where the same legal elites he criticizes may still find ways to protect the industry. Readers should watch closely for how the appellate courts, and ultimately the Supreme Court, handle the upcoming appeals, as this will determine whether the "product liability" theory can permanently pierce the shield of Section 230.