Nvidia's $4 trillion valuation is often dismissed as a speculative bubble, but Chipstrat makes a compelling case that it is merely a checkpoint on a much longer climb. The piece's most distinctive argument flips the script on supply constraints, suggesting that bottlenecks in chip packaging and data center power are actually preventing a catastrophic AI crash by pacing investment against real-world returns. For the busy investor or strategist, this reframing moves the conversation from "when will it pop?" to "how is the infrastructure maturing?"

From Training Monopoly to Inference Engine

The article begins by dismantling the idea that Nvidia's dominance was accidental. Chipstrat argues that the company's first trillion-dollar milestone wasn't just about selling silicon; it was about controlling the entire system. The piece notes that "Chips alone weren't enough. Nvidia had to control the full system to make clusters scale." This is a crucial distinction. While competitors like Google built powerful internal chips, they lacked the external ecosystem. Chipstrat points out that Google's TPUs "lacked a robust external-facing software ecosystem," leaving the market open for Nvidia's CUDA platform to become the industry standard.

The timing of this dominance was catalyzed by the viral launch of ChatGPT. The piece captures the chaotic realization of that moment through a quote from OpenAI's Nick Turley: "Day one was 'Is the dashboard broken?'... Day four was like, 'Okay, it's gonna change the world.'" This rapid shift from skepticism to global adoption forced hyperscalers to pour capital into training clusters, and Nvidia was the only supplier ready to deliver.

"Nvidia was the first, best, and only option for labs training at the frontier."

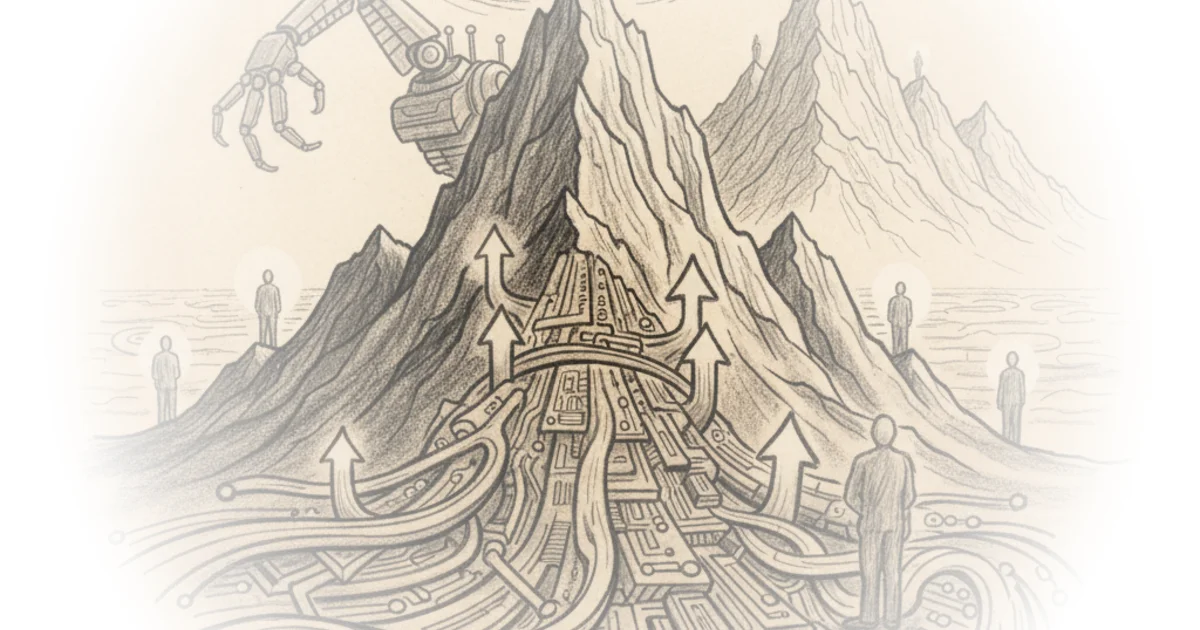

However, the article wisely pivots to the question of sustainability. If the initial boom was driven by the hope of future AI capabilities, could that hope sustain a $4 trillion market cap? Chipstrat acknowledges the fear of a "dot-com" style overbuild, where infrastructure outpaces value. The piece visualizes a scenario where "investment runs ahead of value creation," risking a pullback. Yet the piece argues we have moved past that precarious phase. The focus has shifted from training models to running them: the inference era.

The Inference Explosion and Agentic AI

The core of the piece's bullish thesis rests on the sheer scale of current usage. Chipstrat reports that ChatGPT reached 1 billion weekly users in under three years, a speed unmatched by social media giants. This volume is no longer theoretical; it is a daily reality. "ChatGPT processes ~2.5 billion queries per day!" the article exclaims, emphasizing that this massive traffic is powered by Nvidia's hardware.

The argument deepens when discussing the shift toward "agentic AI": systems that don't just answer questions but take actions. The piece cites a Wall Street Journal report on accounting firm RSM, which plans to invest $1 billion in AI to automate workflows. The reported payoff is a jump from what "was previously a 5% to 25% productivity boost to up to 80% in some cases." This is the kind of tangible return on investment that justifies continued capital expenditure.

Crucially, Chipstrat explains why this specific type of AI multiplies demand for compute. Agentic models do more "reasoning," which means they generate significantly more tokens per query. "Agentic 'thinking' increases the amount of tokens generated per query, often by 5 to 10 times," the piece notes. This creates a self-reinforcing cycle: smarter AI uses more compute, which drives more demand for Nvidia's chips.
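To make the multiplier concrete, here is a back-of-envelope sketch that combines the two figures quoted above (roughly 2.5 billion queries per day and a 5 to 10 times increase in tokens per query). The baseline of 500 tokens per query is purely an illustrative assumption, not a number from the article, and the function name is hypothetical.

```python
# Back-of-envelope sketch: how a per-query token multiplier scales daily inference load.
QUERIES_PER_DAY = 2.5e9          # figure quoted in the article
BASELINE_TOKENS_PER_QUERY = 500  # ASSUMPTION: illustrative only, not from the article


def daily_tokens(queries_per_day: float, tokens_per_query: float, multiplier: float = 1.0) -> float:
    """Total tokens generated per day for a given reasoning multiplier."""
    return queries_per_day * tokens_per_query * multiplier


baseline = daily_tokens(QUERIES_PER_DAY, BASELINE_TOKENS_PER_QUERY)

# Compare no reasoning overhead with the 5x and 10x ends of the quoted range.
for multiplier in (1, 5, 10):
    total = daily_tokens(QUERIES_PER_DAY, BASELINE_TOKENS_PER_QUERY, multiplier)
    print(f"{multiplier:>2}x reasoning: {total:.2e} tokens/day ({total / baseline:.0f}x baseline)")
```

Whatever baseline is chosen, the point survives: at constant query volume, token output (and, to a first approximation, inference compute) scales linearly with the reasoning multiplier.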

Critics might argue that relying on a single vendor for such critical infrastructure creates systemic risk, or that the ROI figures are still too early to be definitive. Those objections are valid, but the piece counters that "reasoning models" are already breaking through the skepticism that plagued earlier AI waves.

"Agentic AI will drive ROI, and not just for early adopters... The result is sustained infrastructure growth across the industry."

The Paradox of Scarcity

Perhaps the most counterintuitive insight in the coverage is the treatment of supply shortages. In most industries, a lack of supply is a bug; here, Chipstrat argues it is a feature. The piece contends that "constrained supply has quietly been a strength." Because TSMC's advanced packaging capacity and data center power access are limited, the rollout of AI infrastructure is naturally throttled.

This pacing is vital. If companies could buy unlimited chips immediately, they might overbuild, leading to a glut that crashes prices and investor confidence. Instead, the bottleneck forces "slower CapEx growth" that allows the "LLM value" to catch up. Chipstrat describes this alignment as keeping "the ROI gap smaller and leading to a surplus sooner."

This perspective reframes the current manufacturing bottlenecks not as a barrier to growth, but as a stabilizer that prevents a boom-and-bust cycle. The piece suggests that "supply limits act as a natural throttle, aligning investment with monetization."
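The throttle argument can be illustrated with a toy model. The sketch below is not Chipstrat's model: every number is hypothetical, and it assumes that the value generated by AI grows at a fixed rate set by adoption, independent of how fast capital is deployed. Under that assumption it compares an unconstrained build-out with a supply-constrained one and reports when annual value first covers annual CapEx and how large the cumulative shortfall gets before then.

```python
def first_surplus_year(capex_start, capex_growth, value_start, value_growth, horizon=15):
    """Toy model: return (first year annual value >= annual capex, peak cumulative gap).

    ASSUMPTION: value grows at a fixed rate regardless of capex; all inputs are hypothetical.
    """
    capex, value = capex_start, value_start
    cumulative_gap, peak_gap = 0.0, 0.0
    surplus_year = None
    for year in range(1, horizon + 1):
        # Cumulative shortfall of value against spending so far (the "ROI gap").
        cumulative_gap += capex - value
        peak_gap = max(peak_gap, cumulative_gap)
        if surplus_year is None and value >= capex:
            surplus_year = year
        # Compound both series into the next year.
        capex *= 1 + capex_growth
        value *= 1 + value_growth
    return surplus_year, peak_gap


# Hypothetical inputs: same starting point and value growth, different capex growth.
unconstrained = first_surplus_year(capex_start=100, capex_growth=0.50, value_start=20, value_growth=0.80)
constrained = first_surplus_year(capex_start=100, capex_growth=0.25, value_start=20, value_growth=0.80)

print("unconstrained (surplus year, peak gap):", unconstrained)
print("constrained   (surplus year, peak gap):", constrained)
```

With these made-up inputs, the constrained scenario reaches surplus earlier and with a smaller peak gap, which is the shape of the claim in the piece. The conclusion depends entirely on the assumption that value growth does not itself require the faster build-out.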

"Gating supply while value builds is very important... Instead of a boom-and-bust overbuild, Nvidia gets a paced rollout that preserves pricing power."

The article concludes by looking at future demand drivers, such as video generation and multimodal workloads, which it expects to dwarf current text-based demand. With the Blackwell architecture ramping up and new software platforms like Dynamo improving data center efficiency, the infrastructure is being built to handle the next wave of complexity.

Bottom Line

Chipstrat's analysis is strongest in its ability to connect the dots between software trends (agentic AI) and hardware economics (supply constraints), presenting a cohesive narrative for sustained growth rather than a speculative frenzy. Its biggest vulnerability lies in the assumption that the current "pacing" of supply will continue to perfectly match the accelerating pace of monetization, a balance that could be disrupted by geopolitical shifts or rapid technological breakthroughs elsewhere. The reader should watch for the transition from text-based inference to video and robotics, as these will be the true stress tests for the $4 trillion valuation.