In a field obsessed with chasing the highest accuracy scores, Arvind Narayanan and his co-authors deliver a jarring reality check: the current method of ranking AI systems is actively misleading. They argue that the industry's favorite leaderboards are broken because they ignore the most critical variable for real-world deployment—cost. For busy engineers and decision-makers, this is not just an academic critique; it is a warning that the "best" AI tools on paper might be financially ruinous in practice.

The Cost of Complexity

The authors dismantle the prevailing assumption that more complex AI agents are inherently superior. They point out that while sophisticated systems can squeeze out marginal gains in accuracy, they often do so at an exorbitant price. Narayanan writes, "If we eke out a 2% accuracy improvement for 100x the cost, is that really better?" This question cuts through the hype, forcing a re-evaluation of what "state-of-the-art" actually means. The piece demonstrates that simple baselines—like repeatedly asking a model to retry a task or escalating to a more powerful model only when necessary—often match the performance of complex agents while costing a fraction as much.
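The two baselines described above can be sketched in a few lines. This is an illustrative sketch, not the authors' implementation: the `Model` class, the stub models, the per-call prices, and the `check` function are all hypothetical, and it assumes some cheap way to verify an answer is available (for example, sample tests shipped with a coding task).

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence


@dataclass
class Model:
    name: str
    cost_per_call: float                  # hypothetical dollars per call
    generate: Callable[[str, int], str]   # (task, attempt index) -> answer


@dataclass
class Result:
    answer: Optional[str]
    cost: float
    solved_by: Optional[str]


def retry(model: Model, task: str, check: Callable[[str], bool],
          max_attempts: int = 3) -> Result:
    """Ask the same model again until an answer passes the check."""
    cost = 0.0
    for attempt in range(max_attempts):
        answer = model.generate(task, attempt)
        cost += model.cost_per_call
        if check(answer):
            return Result(answer, cost, model.name)
    return Result(None, cost, None)


def escalate(models: Sequence[Model], task: str, check: Callable[[str], bool],
             attempts_each: int = 1) -> Result:
    """Try models from cheapest to most expensive, stopping at the first success."""
    total = 0.0
    for model in models:
        result = retry(model, task, check, attempts_each)
        total += result.cost
        if result.answer is not None:
            return Result(result.answer, total, model.name)
    return Result(None, total, None)


# Stub models standing in for real API calls (made-up behavior and prices).
cheap = Model("cheap", 0.01,
              lambda task, attempt: "right" if task == "easy" and attempt >= 1 else "wrong")
strong = Model("strong", 1.00, lambda task, attempt: "right")
check = lambda answer: answer == "right"

easy = retry(cheap, "easy", check)                                 # second try succeeds
hard = escalate([cheap, strong], "hard", check, attempts_each=2)   # falls through to strong
print(easy.solved_by, round(easy.cost, 2))
print(hard.solved_by, round(hard.cost, 2))
```

Both functions track dollars spent alongside the answer, which is the point of the comparison: the expensive model is only paid for when the cheap one fails.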

The analysis reveals a startling inefficiency in current research. Narayanan notes, "Current state-of-the-art agent architectures are complex and costly but no more accurate than extremely simple baseline agents that cost 50x less in some cases." This finding suggests that the AI community has been over-engineering solutions for standard benchmarks. The authors' decision to plot accuracy against dollar cost, rather than abstract metrics like parameter count, exposes this waste. It is a pragmatic shift that prioritizes engineering reality over scientific vanity.

Maximizing accuracy can lead to unbounded cost.

Critics might argue that dollar costs fluctuate too wildly to be a stable benchmark, but the authors counter that this volatility is exactly what matters to downstream users. For a business, a model that is 1% more accurate but 50 times more expensive is a liability, not an asset. The refusal to use proxies like parameter counts is a bold move that strips away the marketing fluff often used by model developers to make their products appear more efficient than they are.
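One way to make that trade-off concrete is to compare cost per correct answer rather than accuracy alone. The metric and every number below are assumptions chosen for illustration; they are not taken from the authors' analysis.

```python
def cost_per_correct(cost_per_task: float, accuracy: float) -> float:
    """Expected dollars spent for each correctly solved task."""
    if not 0 < accuracy <= 1:
        raise ValueError("accuracy must be in (0, 1]")
    return cost_per_task / accuracy


# Hypothetical figures: one accuracy point gained at 50x the per-task cost.
baseline = cost_per_correct(cost_per_task=0.01, accuracy=0.80)
complex_agent = cost_per_correct(cost_per_task=0.50, accuracy=0.81)
ratio = complex_agent / baseline

print(f"baseline: ${baseline:.4f} per correct answer")
print(f"complex:  ${complex_agent:.4f} per correct answer")
print(f"ratio:    {ratio:.1f}x")
```

Under these made-up numbers, the extra accuracy point barely moves the ratio: each correct answer still costs roughly 49 times more.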

The Reproducibility Crisis

Beyond the economics, the piece exposes a troubling lack of rigor in how these AI systems are evaluated. Narayanan and his team attempted to reproduce the results of top-performing agents and found significant discrepancies. They write, "Published agent evaluations are difficult to reproduce because of a lack of standardization and questionable, undocumented evaluation methods in some cases." In one instance, they could not replicate the reported accuracy of a leading agent, finding it significantly lower than claimed. This isn't just a minor error; it suggests that the entire hierarchy of AI performance may be built on shaky foundations.

The authors highlight how subtle changes in methodology—such as removing specific test cases or failing to report hyperparameters—can artificially inflate results. Narayanan points out that "weak baselines could give a false sense of the amount of improvement attributable to the agent architecture." This creates a feedback loop where researchers are incentivized to build complex, expensive systems that look good on a leaderboard but fail to deliver value in the real world. The piece serves as a necessary corrective to a field that has become too focused on beating the benchmark rather than solving the problem.

A New Metric for a New Era

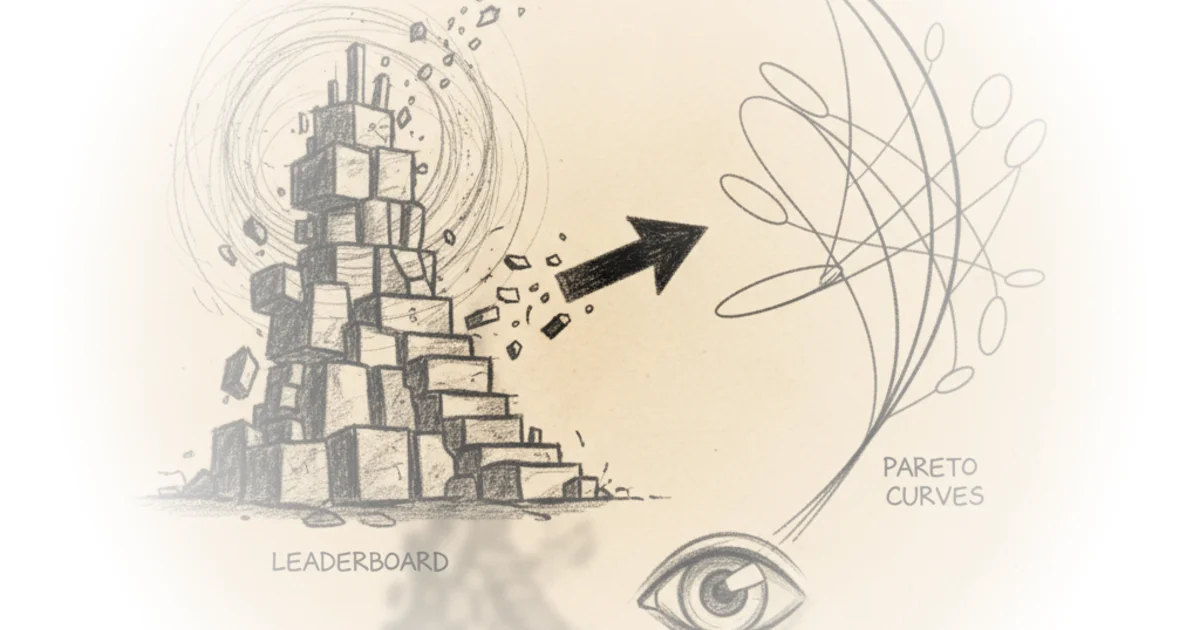

The authors propose a shift toward Pareto curves, a visual tool that maps the trade-off between accuracy and cost. This approach allows stakeholders to identify the "efficient frontier": the set of agents for which no alternative is both cheaper and at least as accurate. Any agent that sits off the frontier is dominated, meaning some other option delivers equal or better accuracy for less money. On the choice of cost measure, Narayanan argues, "We should directly measure dollar costs instead." This is a call for transparency that aligns AI evaluation with the practical needs of the industry. By forcing researchers to account for the financial implications of their models, the field can move away from the arms race of raw compute and toward genuine efficiency.
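Finding the frontier is straightforward once each agent has a measured cost and accuracy. The sketch below keeps every agent that is not dominated, where "dominated" means another agent is at least as accurate and no more expensive. The agent names and numbers are invented for illustration and do not come from the authors' results.

```python
from typing import List, NamedTuple


class Agent(NamedTuple):
    name: str
    cost: float      # hypothetical dollars per evaluation run
    accuracy: float  # fraction of tasks solved


def pareto_frontier(agents: List[Agent]) -> List[Agent]:
    """Return the non-dominated agents, ordered from cheapest to most expensive."""
    frontier: List[Agent] = []
    best_accuracy = float("-inf")
    # Walk from cheapest to priciest; at equal cost, consider the more accurate first.
    for agent in sorted(agents, key=lambda a: (a.cost, -a.accuracy)):
        # An agent earns its place only if it beats everything cheaper.
        if agent.accuracy > best_accuracy:
            frontier.append(agent)
            best_accuracy = agent.accuracy
    return frontier


# Made-up data points for illustration only.
agents = [
    Agent("complex agent", 100.0, 0.90),
    Agent("simple retry", 2.0, 0.90),
    Agent("escalation", 1.0, 0.88),
    Agent("cheap single call", 0.5, 0.70),
    Agent("strong single call", 5.0, 0.85),
]

for agent in pareto_frontier(agents):
    print(f"{agent.name}: ${agent.cost:.2f}, {agent.accuracy:.0%}")
```

In this toy data the complex agent ties the retry baseline on accuracy at 50 times the cost, so it drops off the frontier. When two agents have identical cost and accuracy, the function keeps only the first one it encounters.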

The distinction the authors draw between "model evaluation" (for researchers) and "downstream evaluation" (for practitioners) is particularly insightful. They note that while researchers may care about compute efficiency, "downstream developers... simply care about the dollar cost relative to accuracy." This separation clarifies why current benchmarks fail: they are designed for the wrong audience. The piece effectively argues that until evaluations reflect the economic realities of deployment, the industry will continue to chase illusions of progress.

Bottom Line

Arvind Narayanan's argument is a necessary course correction for an industry intoxicated by accuracy metrics that ignore economic reality. The strongest part of this piece is its empirical demonstration that simple, cheap strategies often match complex, expensive ones on accuracy, exposing a massive inefficiency in current AI development. Its biggest vulnerability lies in the practical difficulty of standardizing cost metrics across a rapidly changing market, but the authors' call for transparency is a vital step forward. Readers should watch for whether the community adopts these Pareto-based evaluations, as this shift could fundamentally change how AI systems are built and bought.