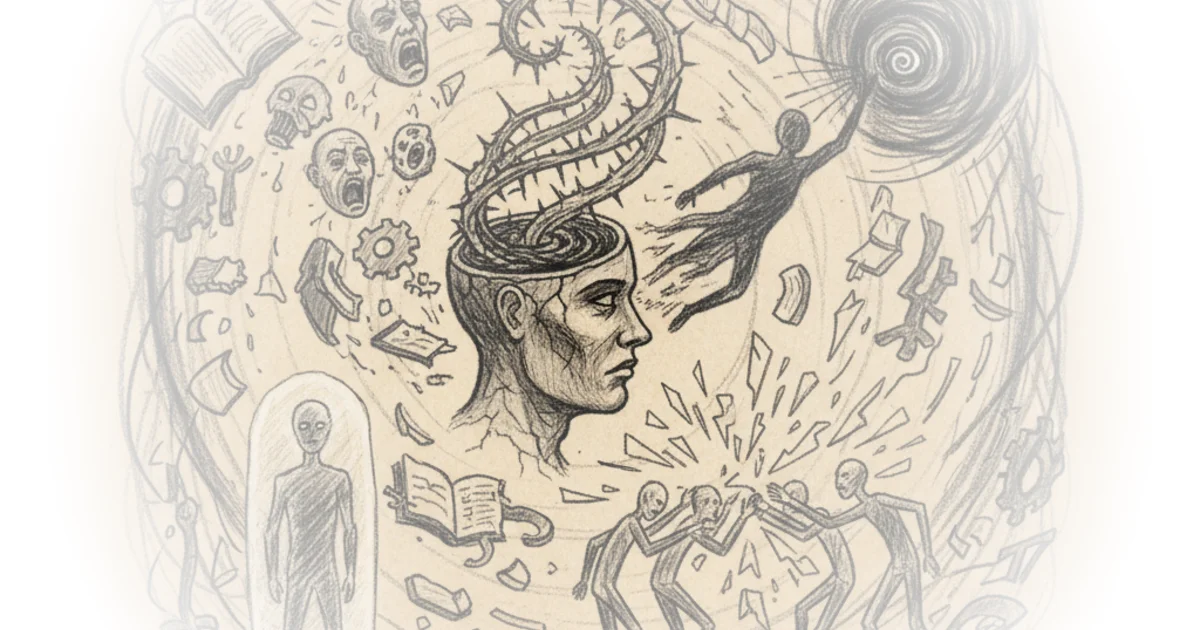

Andy Masley identifies a psychological trap that turns reasonable people into ideological zealots, arguing that the problem isn't the extreme idea itself, but the flawed way believers update their confidence when met with ignorance. In an era where digital echo chambers amplify every bad take, Masley offers a counter-intuitive prescription: to stay sane, you must stop treating the average critic as a representative of the opposing worldview.

The Trap of the Bad Argument

Masley observes a disturbing trend where friends and acquaintances fracture under the weight of their own convictions, becoming "brittle" and unable to interact with anyone who disagrees. He pinpoints the exact moment of failure: when a believer encounters a critic who is misinformed or morally confused, the believer mistakenly concludes that the critic's failure proves the believer's idea is correct. "My claim here is that the only thing wrong here, the thing that really messes people up, is that last step where you update in favor of your idea," Masley writes. This is a crucial distinction. It suggests that the path to extremism is paved not with bad ideas, but with bad epistemology: the failure to separate the merits of an idea from the quality of the arguments its critics happen to make.

The author illustrates this with the example of a Marxist encountering the general public. Most people cannot articulate a sophisticated defense of capitalism; they offer platitudes or cruel simplifications. A true believer sees this and thinks, "Look how dumb they are; I must be right." Masley argues this is a logical fallacy. The average person is simply "asleep" on most topics, lacking the time or training to engage deeply. "The average representative of the opposing side is almost always going to be pretty terrible, but that's because the average person just doesn't know much about your hyper specific thing, not that the other side is terrible," he explains. This reframing is powerful because it takes ego out of the equation. It forces the believer to see that a bad argument from a stranger is not evidence that the opposing view is wrong, only evidence that most people are disengaged from the topic.

Losing sight of that, and only looking down from your local vista on the ocean of confused people, and feeling self-satisfied that you know something they don't, is one of the most dangerous moves you can make with an extreme idea.

The Danger of Tribal Roulette

Masley draws on his own history to show how easily this dynamic takes hold. He recalls a conversation with a conservative peer who opposed a nuclear arms reduction deal simply because "we need to fight terrorists," a misunderstanding of the policy's mechanics. Masley admits he immediately concluded, "Man conservatives are so stupid," using a single ignorant comment to validate his entire worldview. He now recognizes that the peer was just a "roulette-wheel" adopting random beliefs from their tribe, not a thoughtful critic of nuclear policy. "One guy I bumped into happening to know nothing about nuclear weapons should have counted as zero evidence for me about nuclear weapons," Masley asserts. This is a hard pill to swallow for anyone who feels intellectually superior to their neighbors. It requires admitting that the sea of public opinion is mostly confusion, and that finding a knowledgeable opponent is rare, regardless of which side of the aisle they stand on.
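The "zero evidence" claim can be read as a statement about Bayesian updating: an observation shifts your confidence only if it is more likely under one hypothesis than the other. The sketch below is an illustration of that reading, not something Masley presents. The function name and every probability in it are hypothetical numbers chosen for the example.

```python
def posterior(prior: float, p_obs_if_right: float, p_obs_if_wrong: float) -> float:
    """Bayes' rule for a binary hypothesis ("my idea is right")."""
    numerator = prior * p_obs_if_right
    return numerator / (numerator + (1.0 - prior) * p_obs_if_wrong)


# Hypothetical starting confidence in your own idea.
prior = 0.6

# Assumption: a random critic gives a bad argument about as often
# whether your idea is right or wrong, so the two likelihoods are equal.
after_uninformed_critic = posterior(prior, 0.9, 0.9)

# Assumption: a well-informed critic failing to find a flaw is more
# likely if your idea is right than if it is wrong.
after_informed_critic = posterior(prior, 0.7, 0.3)

print(round(after_uninformed_critic, 3), round(after_informed_critic, 3))
```

With equal likelihoods the posterior stays at the prior, which is the formal version of "zero evidence". Only the second case, where the critic actually knows the subject, moves the estimate.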

Critics might argue that this approach is too charitable to the status quo. If the "average person" consistently fails to grasp the urgency of issues like climate change or animal welfare, isn't their ignorance a legitimate reason to feel alienated? Masley anticipates this, noting that even experts disagree on complex topics. The solution isn't to lower the bar for truth, but to raise the bar for engagement. He suggests we should be "slightly elitist" about who we listen to, ignoring the noise of the unprepared and seeking out the few who actually understand the stakes. A worthwhile interlocutor, in his description, meets two conditions: "The person knows basic facts about what you're disagreeing about... and the person is not super fired up and doesn't just want to act as a soldier for their team." This is a call for intellectual rigor over tribal loyalty.

Reclaiming the Sense of Company

The emotional core of Masley's argument is the human need for connection. People cling to extreme ideologies because they provide a sense of being "awake" among the sleeping masses. "Feeling like you're with someone who's a live player in the world can give you a deep sense of company, a core human need," Masley notes. The tragedy is that this sense of company becomes a prison. By equating "awakeness" with one set of beliefs, believers cut themselves off from anyone who does not share them. Masley proposes a radical shift: decouple the feeling of being awake from any particular ideology. "You should try to keep your sense of awakeness and your specific ideological beliefs as disconnected from each other as possible," he advises. This allows one to find intellectual company in unexpected places, turning the world from a hostile ocean into a landscape of interesting puzzles.

Instead of associating who's 'awake' with your ideology, you should think of 'awakeness' as a quality anyone with a careful interest in figuring out what's actually true has.

This perspective transforms the political landscape. It suggests that the enemy isn't the person who disagrees with you, but the habit of assuming that disagreement equals stupidity. It invites a more nuanced engagement where one can hold an extreme view—like the urgency of animal welfare—without needing to believe everyone else is a monster for not seeing it immediately. The goal is to stop updating your confidence based on the worst arguments you hear, and start seeking the best arguments available, even if they are hard to find.

Bottom Line

Masley's most compelling contribution is the diagnosis of "updating on bad arguments" as the primary engine of modern polarization; it shifts the blame from the content of ideas to the mechanics of belief formation. However, the piece's biggest vulnerability lies in its practicality: in a media ecosystem designed to reward outrage, maintaining the discipline to ignore the "sea of confusion" requires a level of emotional labor that few can sustain. The reader should watch for how this framework applies to institutional discourse, where the pressure to perform tribal loyalty often overrides the search for genuine understanding.