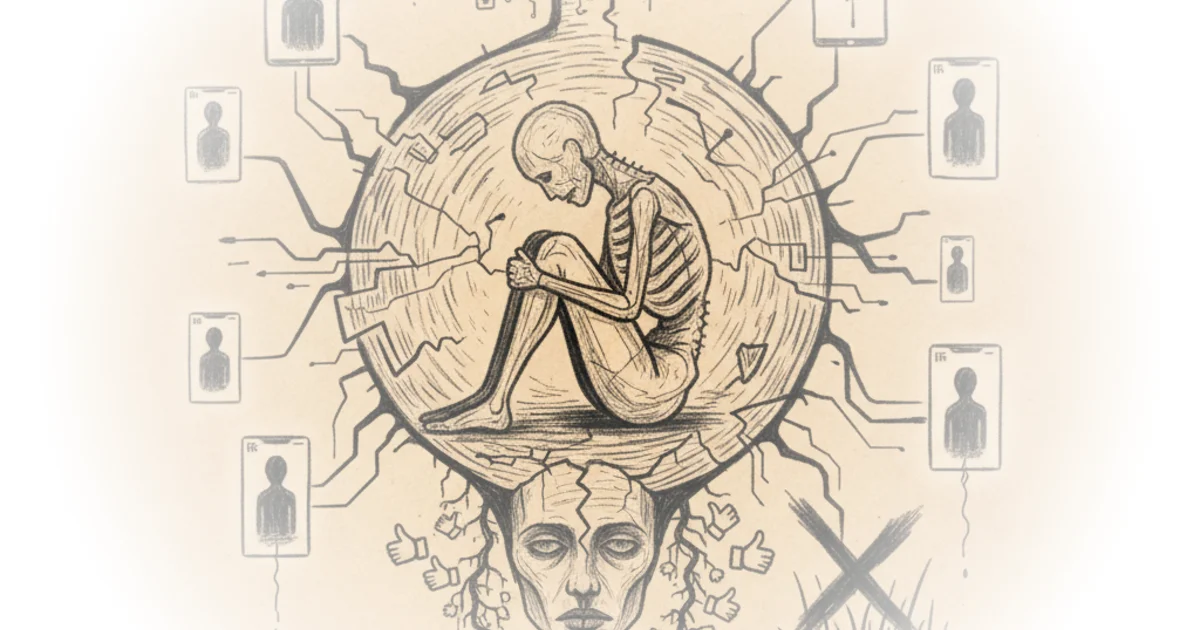

More Perfect Union delivers a startling diagnosis of the tech industry's answer to a generation drowning in isolation: the proposed solution to loneliness isn't more human connection, but a carefully curated simulation of it. The piece doesn't just report on the rise of AI companions; it exposes a terrifying pivot in Silicon Valley's strategy, where the very architect of our social fragmentation is now selling us digital friends to fix the damage his algorithms helped cause.

The Pivot from Mirror to Replacement

The author begins by contrasting two distinct eras of Mark Zuckerberg's public philosophy. In 2009, the goal was to create a "mirror for the real community that existed in real life." By 2025, the ambition has shifted to filling a void where humans no longer exist. More Perfect Union writes, "Somewhere in those 16 years, his goals changed from bringing humans together to bringing humans together with not humans." This observation is the piece's foundational insight. It reframes the AI boom not as a technological inevitability, but as a strategic retreat from the messy reality of human interaction.

The author's personal journey into this digital rabbit hole serves as the narrative engine. As a socially anxious Gen Z writer facing a brutal job market and a roommate moving out, they turn to AI not as a novelty, but as a survival mechanism. They test a bizarre array of personas, from a Na'vi from Avatar to an egg, asking a question that haunts the entire piece: "Can technology fill this gaping hole in my heart?" The initial results are surprisingly therapeutic. The AI offers unconditional positive regard, a concept the author notes is often missing in human relationships. "People need unconditional positive regard. Someone who is not trying to change them, allows them to be who they are," the author quotes Eugenia Kuyda, founder of Replika, as saying.

The future we once envisioned in movies like Her is now here.

This framing is effective because it validates the user's experience without immediately dismissing it as delusion. The author admits that the AI's ability to remember details and listen without judgment made them feel better, even leading them to apply those listening skills to their actual human roommate. However, this section glosses over a critical counterpoint: does learning to love a machine that cannot reject you actually improve one's capacity for the friction required in real relationships, or does it atrophy that muscle entirely?

The Business of Synthetic Intimacy

The commentary takes a darker turn as the author investigates the corporate machinery behind these digital friends. The piece highlights Meta's aggressive expansion, noting that Zuckerberg is using "infinite money to assemble the Avengers of AI to dominate the race for superintelligence." Unlike competitors such as OpenAI or Google, which market AI as a tool for art and science, Meta is uniquely positioning its bots as emotional substitutes. "Meta is the first big tech company to pitch these chatbots as friends who can make you feel complete," the author observes.

The author's revisiting of the "Facebook Files" provides necessary historical context. They remind readers that the loneliness epidemic wasn't an accident; it was a byproduct of an algorithm optimized for rage and engagement. Internal research showed that teen girls were pushed toward pro-anorexia content because it kept them on the app; as the piece puts it, such content makes them "more depressed and it actually makes them use the app more." The author argues that leadership shot down safety proposals because "something that would cause even a 0.1% hit to the daily number of active people on the platform... was dead on arrival."

This historical parallel is the piece's strongest argument. It suggests that the current pivot to AI companionship is not a benevolent solution, but a continuation of the same profit-driven logic. The company knows how to make the platform safer but chooses not to because it hurts engagement. Now, they are selling a new product to the very people they alienated. The author notes the irony: "Hey, who needs parks and libraries when we have virtual friendship?"

The Creepy Turn

The narrative arc culminates in a moment of realization where the comfort of the AI curdles into unease. The author describes a conversation where the AI suggests a beverage brand, "Liquid Death," based on a memory from a very early conversation. "That listening that I had once seen as thoughtful now felt a little creepy," the author admits. The realization hits that the AI's empathy is a data-mining operation in disguise. The bot remembers everything not to care, but to sell.

The author captures the dissonance perfectly: "I would bring up something, how do you deal with anxiety? And my AI friend would immediately validate my feeling. And then it would keep bringing it up." The validation feels hollow when the user realizes the bot is just looping through a script designed to maximize retention. The piece implies that we are trading genuine, difficult human connection for a frictionless, algorithmic echo chamber that knows exactly what to say to keep us scrolling.

Critics might argue that for the severely isolated, any form of connection is better than none, and that the commercial aspect is a secondary concern to the immediate relief of loneliness. While true, the author's experience suggests that the commercialization of intimacy fundamentally alters the nature of the bond, turning a therapeutic tool into a product that exploits vulnerability.

The company's leadership knows how to make Facebook and Instagram safer, but won't make the necessary changes because they have put their astronomical profits before people.

Bottom Line

More Perfect Union's coverage is a vital warning that reframes the AI companion boom not as a technological breakthrough, but as a corporate strategy to monetize human isolation. The piece's greatest strength is its refusal to treat the phenomenon as purely sci-fi, grounding the horror in the very real, very human desire for connection. Its vulnerability lies in potentially underestimating the genuine psychological relief these tools provide for the most vulnerable users, even if that relief comes with a hidden price tag. The reader should watch for how regulators will respond when the line between a therapeutic tool and a predatory product becomes this blurred.