In an industry obsessed with the next chip or the latest benchmark, Dylan Patel of SemiAnalysis makes a startling claim: the money isn't being made by the hardware giants, but by the software labs running the show. While the market fixates on the physical constraints of silicon, Patel argues that a fundamental economic shift has occurred where the value of AI tokens has skyrocketed while their production cost has plummeted, creating a profit bonanza for model creators that the infrastructure providers have yet to price in. For busy executives watching their bottom lines, this isn't just market analysis; it's a warning that the rules of the game have changed overnight.

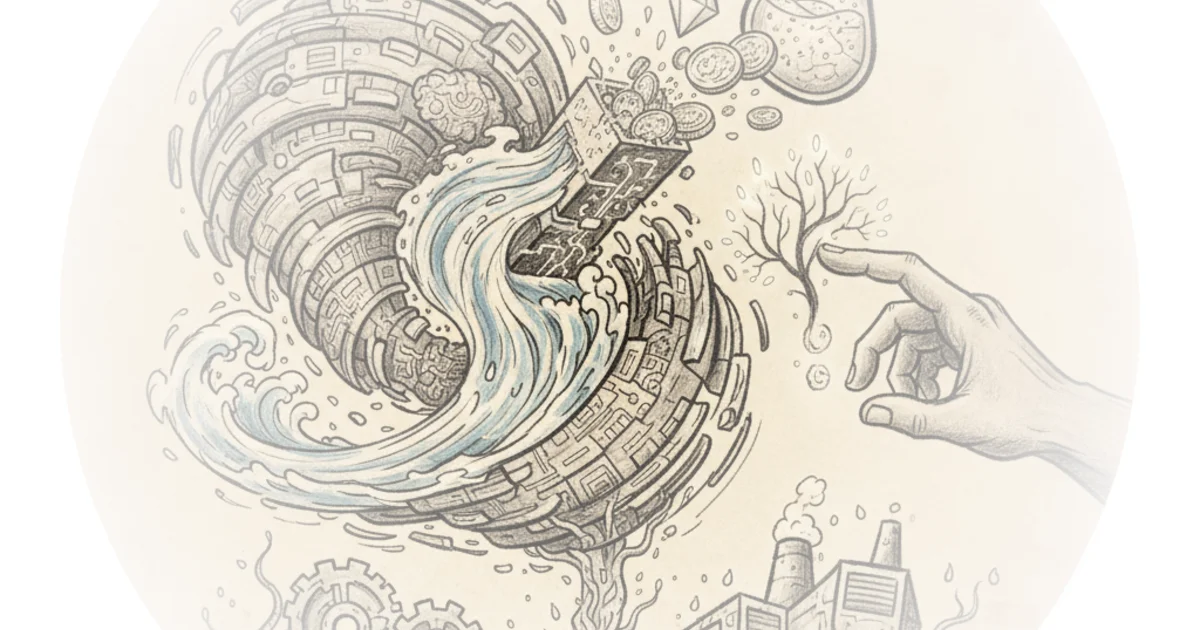

The Great Value Migration

Patel opens with a stark observation about the velocity of change in artificial intelligence. "A day in AI now feels like a year in any other industry," he writes, noting that multi-year cycles are being compressed into weeks. This compression isn't just about speed; it's about the sudden realization of utility. The author points to a specific inflection point: the arrival of agentic AI. Unlike earlier systems, which were largely novelty chatbots, these new systems perform complex, multi-step knowledge work.

The evidence Patel marshals is financial and immediate. He highlights that "Anthropic's ARR has exploded from $9B to over $44B today," a figure that defies the slow adoption curves of traditional software. This surge isn't driven by hype, but by a tangible return on investment for end users. "Tasks that used to take tens of person-hours costing thousands of dollars can now be accomplished in minutes with just a few dollars' worth of tokens," Patel explains. The core of his argument is that the gap between the cost of a token and the economic value it generates is the widest it has ever been.

Critics might argue that such rapid revenue growth is unsustainable or based on inflated valuations, but Patel counters with hard data on efficiency. He notes that even as prices per token drop, margins for model providers have widened dramatically, jumping from 38% to over 70%. This suggests that the market isn't just paying for novelty; it's paying for a fundamental restructuring of labor costs.

The Hardware Paradox

Here, Patel's analysis takes a sharp turn toward the supply chain, challenging the conventional wisdom that hardware scarcity guarantees maximum pricing power for chipmakers. He observes that while memory prices have surged, going up 6x in a year, and power constraints have become critical, the two dominant players, TSMC and Nvidia, have remained strangely passive. "TSMC and Nvidia have not reacted to the recent boom in value generation of AI models," Patel writes. He calls this a "strategic error," arguing that these companies are leaving massive value on the table by failing to adjust pricing to reflect the new reality of token economics.

To understand the magnitude of this missed opportunity, one must look at the hardware improvements themselves. Patel draws a parallel to the historical constraints of memory, noting that just as the industry once grappled with the limits of random-access memory, it is now hitting a wall with DRAM utilization, which is expected to exceed 100% in the coming years. Even so, the pricing models haven't shifted. He points out that new chips like Blackwell can generate 30x more tokens per second than the previous generation, but the cost per unit of compute hasn't fully adjusted to capture this leap.

"Nvidia is still operating within a framework shaped by prior assumptions, where the willingness to pay per unit of compute declines over time. That assumption no longer holds."

This framing is particularly potent because it isolates the inertia of the hardware giants from the agility of the software labs. Patel argues that while Nvidia and TSMC are stuck in old pricing models, the labs are using software optimizations to extract far more from the same hardware. He notes that "one can 14x throughput with software improvements alone," which allows model providers to expand margins even as they lower sticker prices for customers.
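The arithmetic behind that last point can be sketched in a few lines. This is an illustrative back-of-the-envelope calculation, not Patel's own model: it combines two figures quoted in this piece (the 38% and 70% margins and the 14x throughput gain) under the simplifying assumptions that price is normalized to 1.0, that hardware spend is the only cost, and that the full 14x gain applies. The function names are hypothetical.

```python
def gross_margin(price: float, cost: float) -> float:
    """Gross margin as a fraction of price."""
    return (price - cost) / price


def cost_after_speedup(cost: float, throughput_multiplier: float) -> float:
    """Cost per token when the same hardware spend yields more tokens."""
    return cost / throughput_multiplier


def price_for_margin(cost: float, target_margin: float) -> float:
    """Price per token that yields the target gross margin at a given cost."""
    return cost / (1.0 - target_margin)


# Assumption: price normalized to 1.0, cost chosen so the starting margin is 38%.
baseline_price = 1.0
baseline_cost = baseline_price * (1.0 - 0.38)

# Assumption: the quoted 14x software throughput gain applies in full.
new_cost = cost_after_speedup(baseline_cost, 14.0)

# Margin if the sticker price is left unchanged.
margin_same_price = gross_margin(baseline_price, new_cost)

# How far the price could fall while still reaching a 70% margin.
new_price = price_for_margin(new_cost, 0.70)
price_cut = 1.0 - new_price / baseline_price

print(f"margin at unchanged price: {margin_same_price:.1%}")  # 95.6%
print(f"price cut that still yields 70%: {price_cut:.1%}")    # 85.2%
```

Under these assumptions, a provider could cut its price by roughly 85% and still land at a 70% margin, which is the sense in which margins can widen while sticker prices fall. Real cost structures include more than hardware, so the actual room is smaller.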

The Moat of Agentic Work

The article's most compelling section addresses the fear that competition will erode these profits. Patel dismisses the idea that open-source models will commoditize the market. "Regardless of what the benchmarks may say, open-source models are still noticeably worse than their closed source counterparts for real knowledge work," he asserts. The argument rests on the complexity of agentic tasks, where reliability and reasoning matter more than raw speed.

He illustrates this with the behavior of high-value customers. Even when Anthropic introduced higher-priced SKUs like "Mythos" at $25 per million input tokens, the most aggressive adopters were "more than happy to pay the increased prices because the productivity gains outweigh the cost." This suggests that the market is not price-sensitive in the traditional sense; it is value-sensitive. As long as the AI can replace a junior analyst or accelerate a financial model, the price of the token becomes negligible.

Patel also touches on the supply constraints that protect these margins. With "compute supply... structurally constrained" and utilization rates for leading-edge wafers maxed out, demand will far outstrip supply. This scarcity ensures that frontier labs can charge based on the value delivered rather than competing on price. "Demand is no longer linear. It is compounding," he writes, a phrase that captures the explosive nature of the current cycle.

Bottom Line

Dylan Patel's analysis offers a crucial correction to the prevailing narrative that hardware scarcity is the only game in town. His strongest argument is that the software layer has successfully decoupled value creation from hardware costs, allowing labs to capture the lion's share of the economic surplus. The biggest vulnerability in his thesis is the assumption that hardware giants like Nvidia will remain passive for long; eventually, the pricing power of the bottleneck must reassert itself. For now, however, the smart money is watching the labs, not the foundries, as the true engines of the AI economy.