Chipstrat's year-end wrap on AI networking cuts through the marketing fog to make a basic point: the bottleneck for artificial intelligence is no longer just the chips, but the invisible plumbing that connects them. While the industry fixates on raw compute power, this analysis argues that the real revolution lies in making distributed memory feel local, a shift that turns physical distance into a software abstraction. For investors and engineers alike, understanding the distinction between scaling up, scaling out, and the emerging concept of scaling across is not academic; it is the difference between a stalled cluster and a functioning supercomputer.

The Illusion of Locality

The piece opens by dismantling a common misconception: that a single GPU's memory is sufficient for the world's largest models. Chipstrat reports, "Once a model no longer fits in a single GPU's HBM, the accelerator must fetch portions of the model from elsewhere... which is slower and less predictable than local memory." This creates a critical engineering challenge. If the system must constantly reach for data, the processor sits idle, waiting. The proposed solution is "scale-up" networking, a strategy designed to pool memory across multiple chips so they behave as one massive logical device.
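The capacity problem is easy to see with back-of-the-envelope arithmetic. The sketch below is not from the Chipstrat piece; the function name and every input (parameter count, bytes per weight, HBM per accelerator) are hypothetical values chosen for illustration, and it counts weights only, ignoring activations, optimizer state, and caches.

```python
import math


def min_accelerators_for_weights(
    num_params: float, bytes_per_param: float, hbm_bytes_per_accel: float
) -> int:
    """Smallest number of accelerators whose pooled HBM can hold the weights alone."""
    weight_bytes = num_params * bytes_per_param
    return math.ceil(weight_bytes / hbm_bytes_per_accel)


# Hypothetical inputs, for illustration only.
params = 1e12           # a 1-trillion-parameter model (assumed)
bytes_per_param = 2     # 16-bit weights (assumed)
hbm_per_accel = 192e9   # 192 GB of HBM per accelerator (assumed)

needed = min_accelerators_for_weights(params, bytes_per_param, hbm_per_accel)
print(f"Accelerators needed just to hold the weights: {needed}")
```

Under these assumed numbers the weights alone exceed one device's memory many times over, which is why the pooled-memory approach described above becomes necessary rather than optional.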

The argument here is compelling because it reframes the hardware stack. It suggests that the goal is to create a "single logical compute domain" where the programmer doesn't need to manage data placement. Chipstrat notes that achieving this requires interconnects so fast that "remote HBM feel[s] as close to local memory as possible." This is where the stakes are highest. The piece highlights Nvidia's Blackwell system as the current apex of this philosophy, quoting Jensen Huang's observation that the NVL72 configuration is "basically one giant chip." The sheer scale is staggering: 1.4 exaFLOPS of performance and 1.2 petabytes per second of memory bandwidth, a data rate the article compares to "the entire world's internet traffic," all within a single rack-scale system.

However, this approach has physical limits, and the article is careful about where they lie. While scale-up often happens within a rack, it is not technically limited to one. "Accelerators can be tightly coupled across adjacent racks and still behave as a scale-up system as long as latency remains low," the editors explain. This nuance is vital. It means the definition of a "supercomputer" is expanding beyond the chassis, driven by the need to get past the finite capacity of High Bandwidth Memory (HBM) on each individual accelerator. Critics might note that relying on such extreme interconnect speeds introduces fragility; a single link failure in a tightly coupled 72-GPU domain could theoretically collapse the entire logical unit, a risk that loosely coupled distributed systems are designed to mitigate.

The miracle of the Blackwell system is not just the transistors, but the networking that makes 72 GPUs act as one.

The Farming Analogy and the Limits of Coordination

To explain the transition from scaling up to scaling out, Chipstrat employs a surprisingly effective farming analogy. The piece argues that scaling up is like adding a second combine harvester to the same field: it accelerates a single job but requires instantaneous, fine-grained coordination. "Two combines means twice as many rows of corn get harvested each pass," the text states, but only if they can communicate via "line-of-sight hand gestures" or instant radio calls. In computing terms, this is the low-latency domain of scale-up.

But what happens when the field is too big? The article posits that beyond a certain point, coordination overhead dominates, and productivity plateaus. The solution is to send a friend to a different field entirely. "Scale out communication is slower. The other field might be twenty miles away, so coordination happens over a phone call rather than hand signals," the piece explains. This shift from instantaneous local coordination to slower, high-level global coordination is the defining characteristic of scale-out architecture. It allows for massive parallelism—harvesting multiple fields simultaneously—but sacrifices the tight coupling that makes scale-up so efficient for single, massive tasks.
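The plateau can be illustrated with a toy model. This is a sketch under stated assumptions, not a formula from the article: it assumes the job divides evenly among workers and that every added worker contributes a fixed coordination cost. The function name and the numbers are hypothetical.

```python
def speedup(n_workers: int, work: float, coord_cost: float) -> float:
    """Speedup over one worker when each extra worker adds a fixed coordination cost."""
    time_one = work
    # Work is split evenly, but coordination time grows with the number of peers.
    time_n = work / n_workers + coord_cost * (n_workers - 1)
    return time_one / time_n


# Hypothetical units: 100 units of work, 1 unit of overhead per extra worker.
WORK, COORD = 100.0, 1.0

for n in (1, 2, 5, 10, 20, 40):
    print(f"{n:>2} workers -> speedup {speedup(n, WORK, COORD):.2f}")

# Worker count with the highest speedup in this toy model.
best = max(range(1, 65), key=lambda n: speedup(n, WORK, COORD))
print(f"Speedup peaks at {best} workers, then declines")
```

In this model, adding workers past the peak makes the job slower, not faster. That is the point at which the farmer stops adding combines to one field and sends a friend to another.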

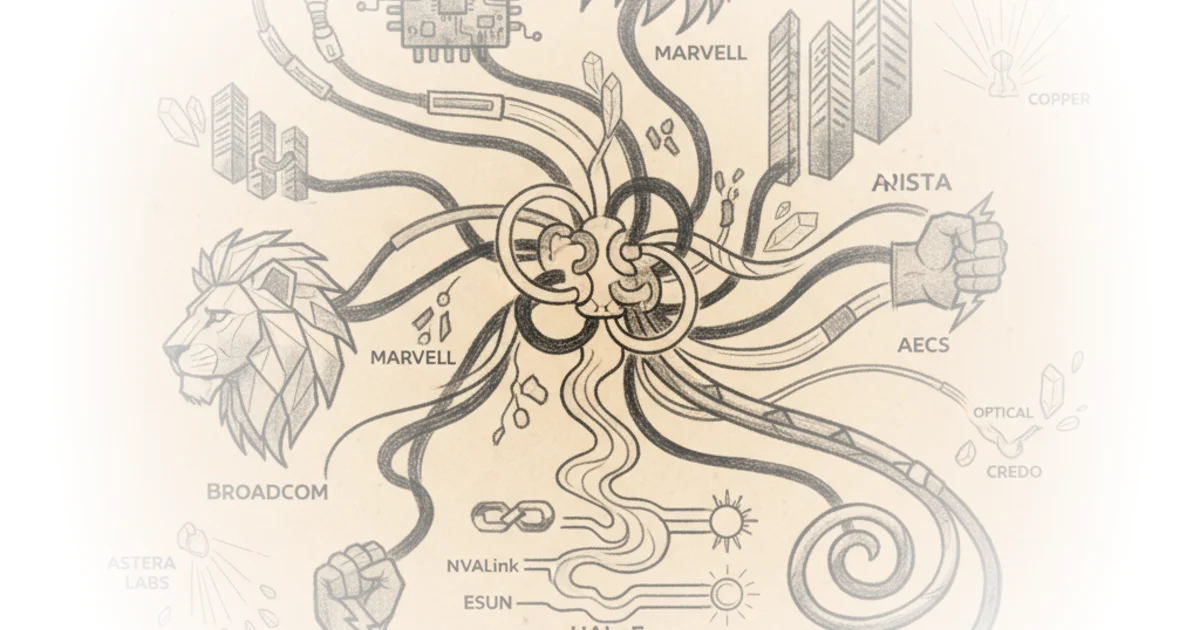

This distinction is often blurred in industry hype, but Chipstrat insists on the separation. The editors note that while scale-up handles the heavy lifting of training a single model, scale-out is necessary when "you want to do even more simultaneous work than can be supported by a single domain." This is where the protocol wars begin, with Nvidia's NVLink dominating the scale-up space while alternatives like UALink and ESUN prepare to challenge the status quo in 2026.

Scaling Across: The Geography of Compute

The most forward-looking section of the commentary addresses the physical limits of data centers. When power and space run out in a single location, the industry is forced to look further afield. Chipstrat introduces the term "scale across" to describe coordinating workloads across geographically separate campuses, tens of miles apart. The article cites Google's expansion in Iowa and Nebraska, where isolated campuses are being linked to form a gigawatt-scale cluster by 2026.

The piece draws a parallel to agricultural expansion: "Entrepreneurial farmers that want to keep growing the business eventually run into local land constraints... When expansion at home is no longer possible, the farmer might buy land several hours away." This is now the reality for AI infrastructure. The editors argue that fiber optics, which carry data at close to the speed of light (roughly two-thirds of its vacuum speed in glass), make this possible, allowing a "single AI workload" to span multiple sites. "Can those physically separate data centers still coordinate on a single massive training run? Yep!" the text asserts.
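To put distance in perspective, propagation delay over fiber can be estimated directly. The sketch below is illustrative and not taken from the article: the refractive index of 1.47 is a typical value for silica fiber, the distances are hypothetical, and the estimate ignores switching, queuing, and the fact that real fiber routes are rarely straight lines.

```python
C_VACUUM_KM_PER_S = 299_792.458  # speed of light in a vacuum
FIBER_REFRACTIVE_INDEX = 1.47    # typical for silica fiber (assumed)


def one_way_latency_ms(
    distance_km: float, refractive_index: float = FIBER_REFRACTIVE_INDEX
) -> float:
    """Propagation delay over fiber in milliseconds, ignoring all equipment delay."""
    speed_km_per_s = C_VACUUM_KM_PER_S / refractive_index
    return distance_km / speed_km_per_s * 1000.0


# Hypothetical distances: a cable inside a rack versus links between campuses.
for label, km in [("2 m in-rack cable", 0.002), ("50 km campus link", 50.0), ("500 km regional link", 500.0)]:
    print(f"{label}: {one_way_latency_ms(km):.6f} ms one way")
```

Even in this best case, a link of tens of kilometers costs orders of magnitude more time than a cable inside a rack, which is why scale-across coordination has to tolerate delay rather than assume it away.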

This shift has profound implications for the networking stack. It moves the battle from the server rack to the wide-area network, demanding new standards for reliability and latency over distance. The article hints that while the technology exists, the economic and architectural trade-offs are just beginning to be understood. As the piece concludes, the focus is shifting from "how many chips can we fit in a rack" to "how many racks can we coordinate across a continent."

Bottom Line

Chipstrat's analysis succeeds by stripping away the jargon to reveal the physical constraints driving the AI arms race. Its strongest argument is the clear delineation between scale-up, scale-out, and scale-across, showing that the future of AI depends as much on the speed of light in fiber optics as it does on the speed of the processor. The piece's biggest vulnerability is its optimism regarding the seamless integration of these disparate layers; while the theory of a unified logical domain is sound, the engineering reality of maintaining that illusion across geographically distributed sites remains a massive, unsolved challenge. Readers should watch closely as the industry moves from 2025's theoretical frameworks to 2026's physical deployments, where the promise of "one giant chip" will be tested against the friction of the real world.