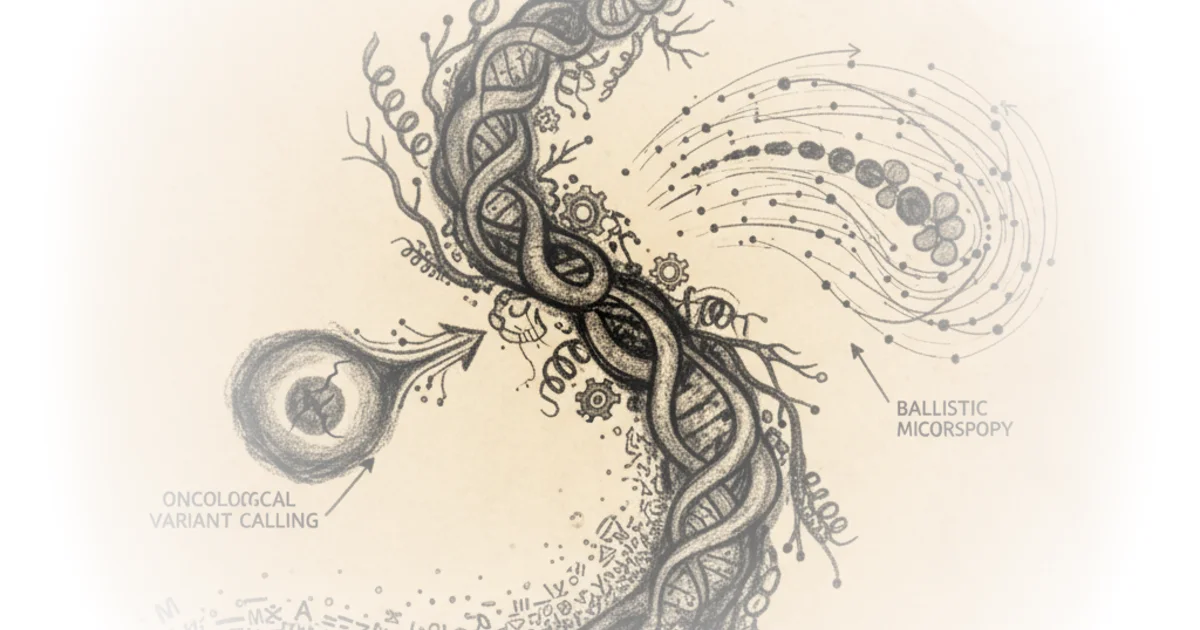

This week's scientific landscape is defined not by a single breakthrough, but by a fundamental reckoning with how we measure progress in biology. The prevailing assumption that "more data equals better models" is crumbling under the weight of new evidence, while a radical new microscopy technique suggests we can photograph the inside of a living cell by shooting bullets through it. Pranay Satya's curation forces a pause on the hype cycle, revealing that the next leap in precision oncology and cellular imaging depends less on raw compute and more on data hygiene and engineering creativity.

The Myth of Infinite Scaling

Satya opens with a critical examination of protein language models, challenging the tech industry's favorite mantra. In the realm of natural language processing, scaling laws have allowed researchers to predict model performance based on compute and data size. Satya notes that Align Bio sought to apply this logic to proteins, training their AMPLIFY suite on yearly snapshots of protein databases from 2011 to 2024. The results were jarring. "From their results, they found that the increase in performance, as measured by the Spearman correlation, fluctuated quite significantly, and even decreased some years with the addition of billions of new protein sequences."
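For readers unfamiliar with the metric, Spearman correlation compares the rank order of a model's scores with the rank order of measured values, so it rewards getting the ordering right rather than the exact numbers. The sketch below is a minimal pure-Python illustration; the `measured` and `predicted` values are invented for the example and are not taken from the AMPLIFY work.

```python
from statistics import mean

def average_ranks(values):
    """Rank values from 1..n; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5

def spearman(x, y):
    """Spearman correlation is the Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical data: measured fitness of five variants vs. model scores.
measured = [0.10, 0.35, 0.50, 0.80, 0.95]
predicted = [-2.1, -1.7, -1.9, -0.4, -0.2]

rho = spearman(measured, predicted)
print(f"{rho:.2f}")  # 0.90
```

Only one pair of variants is ranked in the wrong order here, so the correlation stays high even though the predicted scores are on a completely different scale from the measurements.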

This finding upends the idea that simply feeding a model more sequences will automatically yield smarter predictions. Satya explains that the authors discovered the issue wasn't a lack of volume, but a lack of variety. "The later years were often dominated by redundant sequences, and relative composition of the dataset was also in constant flux with events like the COVID-19 pandemic increasing the relative percentage of a specific group of proteins." The argument here is compelling because it shifts the burden of proof from quantity to quality. The authors conclude that "the size of the dataset is not the predominant driver of predictive capability, but rather the diversity within the training set is."

Critics might argue that diversity is difficult to quantify and that the field has historically relied on volume as a proxy for robustness. However, Satya highlights that the authors' follow-up experiments showed that while adding labeled data helped in some contexts, it failed entirely in others, implying a "nuanced" relationship that simple scaling laws cannot capture. The takeaway is clear: without proper data hygiene, the industry risks building massive models on shaky foundations.
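One way to put a rough number on composition, offered purely as an illustration and not as the metric the authors used, is the "effective number" of protein families: the exponential of the Shannon entropy of family proportions. The family counts below are hypothetical; they mimic a snapshot in which one group of proteins suddenly floods the database.

```python
import math

def effective_families(counts):
    """Exponential of the Shannon entropy of the family proportions.

    Equals the number of families when all are equally represented,
    and shrinks as a few families come to dominate.
    """
    total = sum(counts)
    entropy = -sum((c / total) * math.log(c / total) for c in counts if c > 0)
    return math.exp(entropy)

# Hypothetical snapshots: sequences per family before and after a
# burst of deposits concentrated in a single family.
before = [100, 100, 100, 100]
after = [1100, 100, 100, 100]

diversity_before = effective_families(before)
diversity_after = effective_families(after)
print(f"{diversity_before:.2f} -> {diversity_after:.2f}")
```

In this toy case the dataset more than triples in size while its effective diversity drops from 4 to roughly 2.1, which is the kind of divergence between volume and variety the passage describes.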


Rewriting the Rules of Cancer Detection

Shifting from protein modeling to clinical application, Satya turns to a significant leap in oncology. For years, the tools used to identify cancer-causing mutations have been limited by the technology they were built for: short-read sequencing. These tools struggle with repetitive regions of the genome, often missing the very mutations that drive tumor progression. Satya introduces DeepSomatic, a new model from Google and collaborators that leverages long-read sequencing to overcome these blind spots.

The core of the innovation lies in the creation of the CASTLE dataset. Because high-quality benchmark data for somatic mutations was scarce, the team had to build their own. Satya describes how they sequenced cell lines from breast and lung cancers using multiple technologies, then used a consensus approach to label the data. "This process yielded the CASTLE dataset, with the long-read sequencing data and variant predictions made publicly available." This is a massive contribution in itself; by releasing the data, the authors have lowered the barrier for the entire field to improve.
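The consensus idea can be sketched as a simple vote: a candidate variant is kept as a benchmark label only if enough independent sequencing technologies report it. This is a minimal sketch under that assumption, not the CASTLE pipeline itself; the platform names, the variant tuples, and the `min_support` threshold are all hypothetical.

```python
from collections import Counter

def consensus_variants(call_sets, min_support=2):
    """Return variants reported by at least `min_support` call sets.

    `call_sets` maps a platform name to an iterable of variants, each
    written as a (chromosome, position, ref, alt) tuple.
    """
    counts = Counter()
    for calls in call_sets.values():
        counts.update(set(calls))  # set() so a platform votes once per variant
    return {variant for variant, n in counts.items() if n >= min_support}

# Hypothetical calls from three platforms on the same cell line.
call_sets = {
    "platform_a": [("chr1", 1000, "A", "T"), ("chr2", 500, "G", "GA")],
    "platform_b": [("chr1", 1000, "A", "T"), ("chr3", 42, "C", "G")],
    "platform_c": [("chr1", 1000, "A", "T"), ("chr2", 500, "G", "GA")],
}

labels = consensus_variants(call_sets)
print(sorted(labels))
```

With a threshold of two, the variant seen on only one platform is excluded from the label set, trading some sensitivity for labels that are less likely to reflect a single technology's artifacts.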

The performance gains are not marginal; they are transformative. Satya reports that the model achieved a "substantial decrease in error rate of 95% when classifying indel variants and 37% when classifying single nucleotide variants." Furthermore, the tool excels at detecting low-frequency mutations, which are critical for understanding how tumors evolve and resist treatment. "DeepSomatic also showed high accuracy when evaluated on held-out cell lines, demonstrating more robust generalizability."

The practical implications are immediate. Satya notes that the model was tested on real patient data, including glioblastoma and pediatric blood cancers, where it identified mutations that previous methods missed. "The model demonstrated higher recall performance on the glioblastoma sample when compared to the ClairS method, and was also able to identify ten additional somatic mutations not found by ClairS in pediatric blood cancers." While the model did miss a couple of mutations, the overall trajectory points toward a future where clinicians can characterize complex tumors with unprecedented precision.

Shooting Bullets to Map the Cell

Perhaps the most visually arresting piece of coverage Satya presents is the introduction of Ballistic Microscopy (BaM). The challenge in cellular biology has long been the trade-off between spatial resolution and molecular depth. You can see where a protein is, but not what it's interacting with, or vice versa. Satya describes a solution that sounds like science fiction: "Jijumon et al. decouple spatial extraction and molecular [analysis] by introducing Ballistic Microscopy (BaM), where high-speed nanoparticles are blasted through living cells to extract tiny attoliter droplets of cytoplasm onto hydrogels, preserving the cell's spatial information for later molecular analysis, akin to taking a molecular photograph onto a hydrogel film."

The engineering required to make this work is staggering. The team modeled heat transfer and drag to ensure that firing gold nanoparticles at 1,000 meters per second would pierce the cell without vaporizing it. Satya writes that they "showed that the particles wouldn't overheat (transient temperature rises only ~200K for a microsecond), would not cavitate beyond 0.2 μm, and could safely traverse the cell membrane without blowing it apart."

Once the physics were confirmed, the biological results were equally striking. The technique allowed researchers to pool thousands of tiny samples and run mass spectrometry, revealing a dense interaction network of proteins. "The proteomic analysis revealed 641 associated proteins forming a dense interaction network. Among them, Keratin-18 emerged as a consistent and surprising interactor." This discovery, linking intermediate filaments to the structural organization of protein condensates, would have been nearly impossible with traditional methods.

Satya acknowledges the limitations: the method currently lacks z-depth resolution and requires pooling samples because each shot captures so little material. "There's no z-depth resolution yet, and mechanical shock from gas acceleration can limit repeated sampling." Yet the modularity of the approach, which separates capture from analysis, opens the door to running multiple destructive techniques on the same sample. "This is an insanely creative piece of engineering - shooting little bullets to form a splatter map of a cell is a wild idea that hopefully will never be accepted as obvious!"

The Brain's Pattern Completion

Finally, Satya touches on neuroscience, exploring how the brain constructs reality from incomplete data. Using illusory contours, the study by Shin et al. investigates how the visual cortex infers edges that aren't physically present. Satya explains that the team identified a sparse population of neurons in the primary visual cortex that specifically encodes these inferred shapes.

The study goes beyond observation to causation. By stimulating these specific neurons through a closed-loop optical pipeline, the researchers tested whether their activity is enough to drive the representation of the inferred contours. "To test whether the IC-encoders causally drive the representation of these inferred contours, the team implemented a closed-loop optical pipeline: imaging thousands of neurons, identifying functional ensembles in real time, and selectively stimulating them with two-photon holographic optogenetics." This suggests a compact, generalizable circuit motif that could inform not only biological understanding but also the design of more efficient artificial intelligence systems.

Bottom Line

Pranay Satya's curation delivers a powerful corrective to the "bigger is better" mindset that has dominated AI and biotech. The strongest argument here is that data diversity and quality are the new bottlenecks, not compute power or dataset size. The biggest vulnerability in the field remains the scarcity of high-quality, diverse benchmark data, a gap that the CASTLE dataset begins to fill. As the industry moves forward, the focus must shift from scaling parameters to engineering smarter, more nuanced data collection and analysis strategies.
