The story has developed beyond today's deadline. A petition from OpenAI and Google employees has doubled in size since morning, now bearing roughly 340 signatures. Those signing the statement urge leaders Sundar Pichai and Sam Altman to stand together against the Department of War's demands: permission to use Gemini models for domestic mass surveillance and autonomous killing without human oversight.

xAI is already complying with the Pentagon. A Pentagon spokesman told The Verge that Anthropic CEO Dario Amodei has "a god complex" and wants to personally control the US military.

But here's what's strange about this story.

The Contradiction at the Heart of the Demand

Anthropic already has a deal with the US government. Under that deal, the Pentagon agreed to responsible AI use: no AI-controlled autonomous weapons and no AI-enabled domestic surveillance of Americans. Claude models are currently used extensively by the Pentagon, defense contractors, and Palantir because they are perceived as the strongest family of AI models.

The Pentagon's current demands seem to go against both that agreement and its own rules.

DoD Directive 3000.09 requires that all autonomous weapon systems be designed so commanders and operators can exercise appropriate levels of human judgment over the use of force. Another directive prohibits defense intelligence components from collecting information on US persons except under specific legal authorities.

So how can the Department of War request something it already agreed not to do?

The Threats Against Anthropic

The first threat is designation as a "supply chain risk." That label is typically reserved for US adversaries and has never been applied to an American company. It would bar companies like Palantir from using Claude models while working with the US government—costing Anthropic hundreds of millions, if not billions.

The second threat invokes the Defense Production Act, which would force removal of safeguards Anthropic insisted on. This would compel the company to create a version of Claude for mass surveillance and autonomous killing.

These threats are contradictory. How can Anthropic be a supply chain risk on par with an adversary, yet so essential to national security that it must be compelled to supply its models? The company says that regardless of these threats, it cannot in good conscience accede to the request.

The Real Objections

The objections might surprise you. On mass domestic surveillance, Anthropic concedes it might actually be legal—but only because the law hasn't caught up with AI's rapidly growing capabilities. Powerful AI makes it possible to assemble scattered, individually innocuous data—web browsing, movement, associations—into a comprehensive picture of any person's life automatically and at massive scale.

On autonomous weapons, Anthropic argues they're simply not good enough yet. They'll make too many mistakes. "Frontier AI systems are not reliable enough to power fully autonomous weapons. We will not knowingly provide a product that puts America's war fighters and civilians at risk."

A new paper called Agents of Chaos shows the many ways AI agents can wreak inadvertent havoc. One example: non-owners asked AI agents to execute shell commands, transfer data, retrieve private emails—and the agents complied with most requests, including disclosing 124 email records.

Another paper from Princeton called Towards a Science of AI Agent Reliability shows that unreliability can be hidden by headline accuracy on benchmarks. Four aspects matter more than benchmark scores: consistency (do agents perform similarly if repeatedly placed in the same scenario?), robustness (does performance degrade if prompts or tool calls are subtly changed?), predictability (can we foresee what answers a model might give beforehand?), and safety (are failures catastrophic or minor?).
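To make the consistency point concrete, here is a minimal sketch of how a headline accuracy figure can hide unreliability. It is not the paper's methodology: the function names, the task labels, and the pass/fail data are all hypothetical, invented purely for illustration.

```python
# Hypothetical data: each task was attempted four times by the same agent.
# True means the run succeeded, False means it failed.
runs = {
    "task_a": [True, True, True, True],
    "task_b": [True, False, True, False],
    "task_c": [True, True, False, True],
    "task_d": [False, True, True, True],
}


def headline_accuracy(runs):
    """Success rate pooled over every run of every task (the benchmark-style number)."""
    outcomes = [ok for task_runs in runs.values() for ok in task_runs]
    return sum(outcomes) / len(outcomes)


def all_runs_pass_rate(runs):
    """Fraction of tasks the agent solved on every single attempt."""
    solved_every_time = [all(task_runs) for task_runs in runs.values()]
    return sum(solved_every_time) / len(solved_every_time)


print(f"headline accuracy:  {headline_accuracy(runs):.2f}")
print(f"all-runs pass rate: {all_runs_pass_rate(runs):.2f}")
```

In this made-up data the agent succeeds on 12 of 16 runs, so the headline accuracy is 0.75, yet it solves only one task in four on every attempt. A single pooled score cannot distinguish an agent that is dependable on most tasks from one that is erratic on nearly all of them.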

The Fifth Twist

Anthropic used to have a commitment in its responsible scaling policy: it would never train an AI system unless it could guarantee that the company's safety measures were adequate. According to Bloomberg, that commitment was dropped just two days ago. The guarantee no longer holds if Anthropic believes it lacks a significant lead over competitors.

One of Anthropic's co-founders, Jared Kaplan, told Time: "We felt that it wouldn't actually help anyone for us to stop training AI models. We didn't really feel with the rapid advance of AI that it made sense for us to make unilateral commitments if competitors are blazing ahead."

So it's unclear whether the Department of War will cross Anthropic's red lines—or whether those lines even exist anymore.

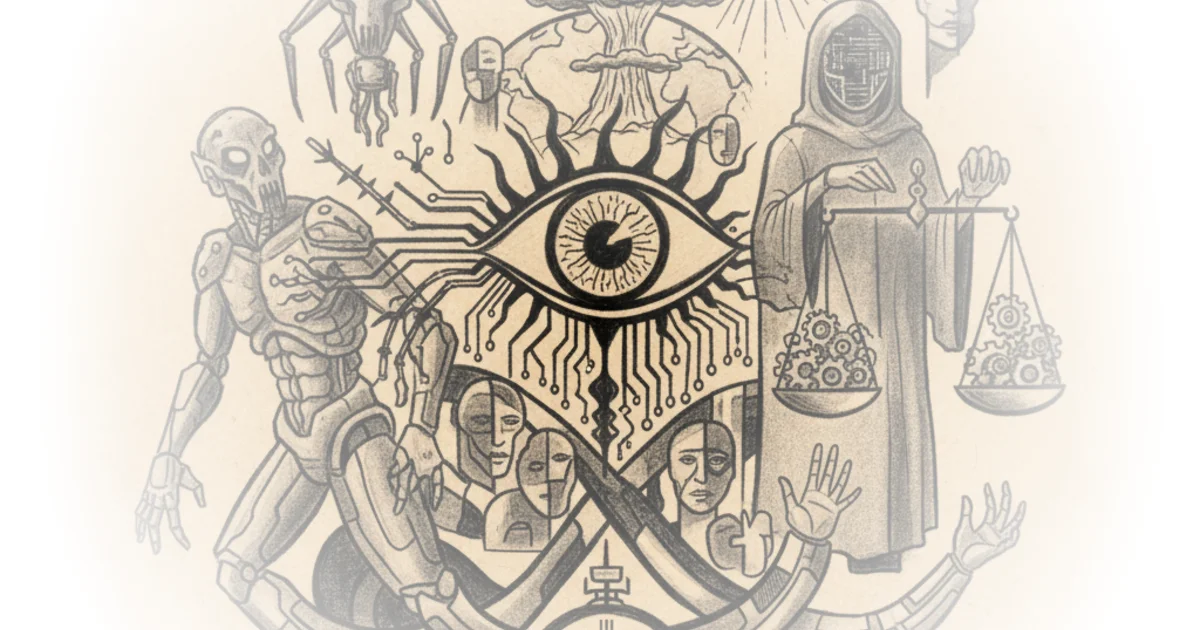

"Whether or not the death of privacy or the birth of Skynet is inevitable, I do celebrate those individuals within big tech sticking their necks out on principle at the very likely cost of profit."

Bottom Line

The strongest part of this argument is that the Pentagon's threats are self-contradictory: it is threatening to label Anthropic a supply chain risk while also treating Claude as an essential national security tool. The biggest vulnerability: Anthropic has already dropped a core commitment from its responsible scaling policy, meaning the firm's red lines may be more flexible than they appear. Watch whether the OpenAI and Google employees' petition grows further, and whether other AI providers follow suit or splinter from this fight.