Varun Agarwal delivers a rare glimpse into a future where the boundary between thought and action dissolves, not through science fiction, but through rigorous engineering. This week's selection moves beyond the hype of brain-computer interfaces to address the most elusive frontier: the silent, internal monologue of the human mind. The coverage is notable because it tackles the privacy paradox head-on, offering a technical solution to the fear that our inner thoughts might be broadcast without consent.

The Silent Voice in the Cortex

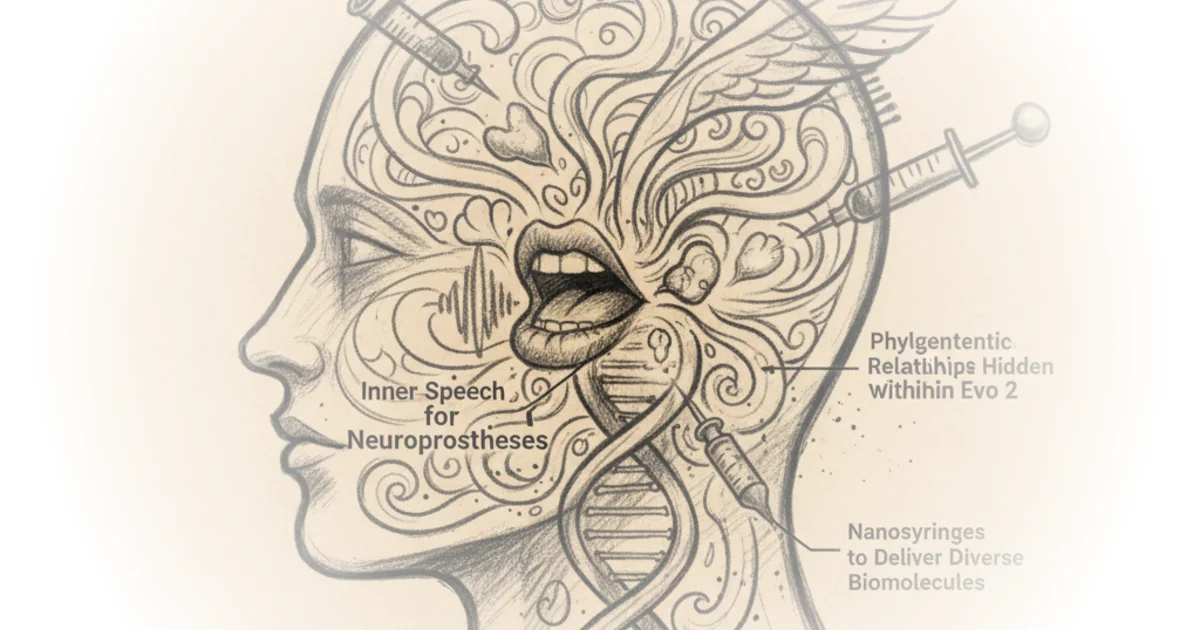

Agarwal begins with a Stanford Medicine study that challenges the assumption that inner speech is merely a degraded version of attempted speech. "Inner speech is not a degraded, noisy shadow of attempted speech, but instead forms a scaled, separable representation," he writes. This distinction is crucial: it suggests that inner speech shares structure with attempted speech at a smaller magnitude while remaining distinguishable from it, so it could be tapped without the physical fatigue of trying to speak. The author notes that the study found a specific "motor-intent" neural dimension that separates imagined words from attempted ones, providing a clean substrate for decoding.
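To make "scaled, separable" concrete, here is a minimal Python sketch on synthetic data. It assumes, purely for illustration, that inner speech expresses a shared word pattern at reduced magnitude (the hypothetical `scale`) and lacks an extra motor-intent component that attempted speech carries. The normalized difference of class means is used as a stand-in for the motor-intent dimension; the study's actual method for finding that dimension may differ.

```python
import numpy as np

def motor_intent_axis(attempted, inner):
    # Normalized difference of class means: a simple stand-in for the
    # "motor-intent" dimension (an assumption, not the study's method).
    direction = attempted.mean(axis=0) - inner.mean(axis=0)
    return direction / np.linalg.norm(direction)

def separate(attempted, inner):
    """Fraction of trials classified correctly by projecting onto the axis."""
    axis = motor_intent_axis(attempted, inner)
    p_att, p_inn = attempted @ axis, inner @ axis
    threshold = (p_att.mean() + p_inn.mean()) / 2  # midpoint between class means
    correct = np.sum(p_att > threshold) + np.sum(p_inn <= threshold)
    return correct / (len(p_att) + len(p_inn))

rng = np.random.default_rng(0)
n_trials, n_channels, scale = 200, 64, 0.4
word = rng.normal(size=n_channels)    # hypothetical shared word tuning
intent = rng.normal(size=n_channels)  # hypothetical motor-intent component
attempted = word + intent + rng.normal(scale=0.5, size=(n_trials, n_channels))
inner = scale * word + rng.normal(scale=0.5, size=(n_trials, n_channels))

# How strongly does the average inner-speech pattern express the shared word pattern?
est_scale = inner.mean(axis=0) @ word / (word @ word)
accuracy = separate(attempted, inner)
print(f"estimated scale = {est_scale:.2f}, separation accuracy = {accuracy:.2f}")
```

In this toy, the inner-speech average is a shrunken copy of the word pattern (scaled), yet a single projection cleanly splits the two conditions (separable).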

The implications for patients with severe paralysis are profound. Agarwal explains that the system achieved real-time decoding accuracies between 46% and 74%, a significant leap for a modality previously considered too abstract to capture. However, the most compelling part of Agarwal's analysis is his focus on ethical safeguards. He points out that the team didn't just build a decoder; they built a lock. "The first control involves labeling attempted speech patterns with their appropriate phonemes and labeling inner speech patterns with a 'silence' token," Agarwal writes. This dual-layer safety mechanism, whose second layer is a mental password, directly addresses the terrifying prospect of involuntary thought surveillance.
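The two safeguards can be sketched as a toy output gate. This is a hypothetical illustration, not the study's code: the `SILENCE` token, the `GatedDecoder` class, and the example password are assumptions, and the two controls are combined in one object only for brevity. Layer one relies on the decoder having been trained to map inner speech to a silence token, so those steps emit nothing; layer two keeps output locked until a chosen phrase is decoded.

```python
from dataclasses import dataclass, field

SILENCE = "<silence>"  # hypothetical token that inner-speech patterns are trained to map to

@dataclass
class GatedDecoder:
    password: tuple  # hypothetical unlock phrase, as a tuple of decoded tokens
    unlocked: bool = False
    _recent: list = field(default_factory=list)

    def step(self, token):
        """Consume one decoded token; return text to emit, or None."""
        # Layer 1: inner speech was labeled as silence, so it never produces output.
        if token == SILENCE:
            return None
        if not self.unlocked:
            # Layer 2: stay locked until the most recent tokens match the password.
            self._recent = (self._recent + [token])[-len(self.password):]
            if tuple(self._recent) == self.password:
                self.unlocked = True
            return None
        return token

def run(decoder, tokens):
    """Feed a token stream through the gate and collect what it emits."""
    return [out for t in tokens if (out := decoder.step(t)) is not None]

decoder = GatedDecoder(password=("open", "sesame"))
stream = ["hello", SILENCE, "open", "sesame", "I", SILENCE, "am", "thirsty"]
print(run(decoder, stream))
```

Because silence tokens are dropped before the password check, in this sketch an inner-speech fragment neither emits text nor interrupts the unlock phrase.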

Critics might argue that 46% accuracy is still too low for fluid conversation, but Agarwal rightly frames this as a proof-of-principle that establishes the feasibility of the modality rather than its immediate perfection. The real story here is the shift from "can we do it?" to "how do we do it safely?"

Unlocking inner speech as a modality could enable more natural, fluent communication, while reducing the fatigue and articulatory effort often required of users.

Mapping the Tree of Life in Code

Shifting from the human brain to the genome, Agarwal turns his attention to a finding that fundamentally changes how we interpret artificial intelligence. He discusses a paper by Pearce et al. regarding Evo 2, a massive DNA foundation model. The surprise here is not just that the model works, but how it works. Agarwal writes that the model "implicitly encodes species identity and evolutionary relationships as a structured, geometric object: a curved phylogenetic manifold."

This is a significant departure from viewing AI as a black box. Agarwal explains that by analyzing the geometry of the model's internal activations, researchers found that distances between species measured along this curved manifold (geodesics, rather than straight-line distances) closely track their actual evolutionary history. "These geodesics were linearly correlated with the true phylogenetic branch-length distances," he notes. This discovery suggests that the model isn't just memorizing sequences; it has learned structure that reflects the evolutionary relationships among organisms.
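The geodesic-versus-straight-line distinction can be illustrated with a toy Isomap-style estimate: connect each point to its nearest neighbors, take shortest-path distances through that graph as geodesics, and correlate them with tree distances. Everything here is assumed for illustration: the "species" sit evenly along a curved arc, their branch-length distances come from a simple path-shaped tree, and the paper may have estimated geodesics differently.

```python
import numpy as np

def pairwise(X):
    # Euclidean (straight-line) distance between every pair of rows
    return np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)

def geodesic_distances(X, k=4):
    """Approximate manifold geodesics: k-nearest-neighbor graph, then all-pairs shortest paths."""
    d = pairwise(X)
    n = len(X)
    g = np.full((n, n), np.inf)
    np.fill_diagonal(g, 0.0)
    nbrs = np.argsort(d, axis=1)[:, 1:k + 1]  # column 0 is the point itself
    rows = np.arange(n)[:, None]
    g[rows, nbrs] = d[rows, nbrs]
    g = np.minimum(g, g.T)                    # keep an edge if either endpoint chose it
    for m in range(n):                        # Floyd-Warshall relaxation through node m
        g = np.minimum(g, g[:, m:m + 1] + g[m:m + 1, :])
    return g

def upper(M):
    # Unique off-diagonal pairs, so each distance is counted once
    i, j = np.triu_indices(len(M), k=1)
    return M[i, j]

t = np.linspace(0.0, 1.5 * np.pi, 60)        # position along a toy lineage
X = np.column_stack([np.cos(t), np.sin(t)])  # curved 2-D stand-in for activation space
tree = np.abs(t[:, None] - t[None, :])       # branch lengths on a path-shaped toy tree

r_geo = np.corrcoef(upper(geodesic_distances(X)), upper(tree))[0, 1]
r_euc = np.corrcoef(upper(pairwise(X)), upper(tree))[0, 1]
print(f"geodesic r = {r_geo:.4f}, straight-line r = {r_euc:.4f}")
```

On this curve, the two ends of the arc bend back toward each other, so straight-line distances shrink for pairs that are far apart on the tree, while graph geodesics keep growing with tree distance.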

The author emphasizes the practical utility of this finding. If we can understand the "latent ontologies" within these models, we can steer them more effectively. Agarwal points out that a simple decision tree using basic genome statistics could predict the model's complex internal states, grounding abstract math in tangible biological signals. "Ultimately, this substantiates the idea that the manifold is not an abstract mathematical artifact, but one grounded in meaningful biological signals," he writes.
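A decision tree on genome statistics can be as small as a single split. The sketch below is hypothetical throughout: GC content stands in for the "basic genome statistics", the target is a synthetic manifold coordinate rather than a real Evo 2 activation, and a depth-1 regression tree (a stump) is the simplest possible tree, not necessarily the model the authors fit.

```python
import numpy as np

def gc_content(seq):
    # Fraction of bases that are G or C
    return sum(base in "GC" for base in seq) / len(seq)

def fit_stump(x, y):
    """Depth-1 regression tree: the single split on x that minimizes total squared error."""
    order = np.argsort(x)
    xs, ys = x[order], y[order]
    best = (np.inf, None, None, None)
    for i in range(1, len(xs)):
        if xs[i] == xs[i - 1]:
            continue  # cannot split between identical feature values
        left, right = ys[:i], ys[i:]
        sse = ((left - left.mean()) ** 2).sum() + ((right - right.mean()) ** 2).sum()
        if sse < best[0]:
            best = (sse, (xs[i - 1] + xs[i]) / 2, left.mean(), right.mean())
    _, threshold, lo, hi = best
    return threshold, lo, hi

rng = np.random.default_rng(1)
gc_probs = rng.uniform(0.3, 0.7, size=200)
seqs = ["".join(rng.choice(list("ACGT"), size=500, p=[(1 - p) / 2, p / 2, p / 2, (1 - p) / 2]))
        for p in gc_probs]
gc = np.array([gc_content(s) for s in seqs])
# Hypothetical manifold coordinate that depends on GC content, plus noise
coord = np.where(gc > 0.5, 1.0, -1.0) + rng.normal(scale=0.1, size=len(gc))

threshold, lo, hi = fit_stump(gc, coord)
pred = np.where(gc > threshold, hi, lo)
r2 = 1 - ((coord - pred) ** 2).sum() / ((coord - coord.mean()) ** 2).sum()
print(f"split at GC = {threshold:.3f}, R^2 = {r2:.3f}")
```

The point of the toy is the direction of the argument: if a cheap, interpretable statistic predicts an internal coordinate well, that coordinate is tied to something measurable in the sequence.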

A counterargument worth considering is that this geometric structure might be an artifact of the training data rather than a fundamental truth of biology. However, Agarwal's coverage suggests the correlation is strong enough to warrant further investigation into using these models for functional annotation and predicting complex phenotypes.

The Modular Nanosyringe

In the realm of delivery systems, Agarwal highlights a study by Kreitz et al. that reimagines how we get drugs into cells. He describes the Photorhabdus virulence cassette (PVC) as a "spring-loaded nanosyringe" that bacteria naturally use to inject proteins. The innovation, as Agarwal frames it, is turning this biological weapon into a therapeutic tool called SPEAR.

The key advancement is the ability to carry complex cargo that traditional methods cannot. "Kreitz et al. flip the loading site to the spike itself: they fuse cargos to the spike base or tip, so cargos stay folded," Agarwal writes. This allows for the delivery of Cas9 ribonucleoproteins and single-stranded DNA without the need for transfection, a process that often damages cells or fails to deliver the payload efficiently. The modularity of the system is its strongest feature, allowing researchers to swap out targeting arms like changing lenses on a camera.

Agarwal notes that this system can deliver split-protein systems and precise genetic edits, pushing the boundaries of what is possible in gene therapy. "In short, Kreitz et al. deliver a bacterially manufacturable, antibody-swappable injector that delivers diverse payloads including RNPs and ssDNA directly into cells without transfection or endocytotic pathways," he summarizes. This approach bypasses many of the cellular import mechanisms that have long been bottlenecks in drug delivery.

Scaling the Medical Future

Finally, Agarwal examines the trajectory of medical AI through the Cosmos Medical Event Transformer (CoMET). He presents a clear trend: the predictive power of these models improves as they consume more data. He reports that the CoMET family showed strong predictive performance on medical event sequences, citing 30-day readmission prediction accuracy that outperformed the best supervised models.
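"Improves with scale" is usually summarized as a power law, for example loss ≈ a * D^(-b) for dataset size D. The sketch below fits that form by linear regression in log-log space. The data sizes and loss values are synthetic and the exponent is made up; they are not CoMET's reported numbers, and published scaling analyses often add an irreducible-loss term that this minimal form omits.

```python
import numpy as np

def fit_power_law(data_sizes, losses):
    """Fit loss ~= a * D**(-b) by least squares on log(loss) vs log(D); returns (a, b)."""
    slope, intercept = np.polyfit(np.log(data_sizes), np.log(losses), deg=1)
    return np.exp(intercept), -slope

D = np.array([1e6, 1e7, 1e8, 1e9, 1e10])  # hypothetical training-set sizes (events)
loss = 5.0 * D ** -0.08                    # synthetic, noise-free scaling curve

a, b = fit_power_law(D, loss)
predicted = a * 1e11 ** -b                 # extrapolate to a larger hypothetical dataset
print(f"a = {a:.3f}, b = {b:.3f}, predicted loss at 1e11 = {predicted:.3f}")
```

A straight line in log-log space is what makes such trends useful for planning: the fitted exponent b says how much loss falls per tenfold increase in data, assuming the trend continues.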

The scale of the dataset is staggering: 118 million patients and 115 billion discrete medical events. Agarwal argues that this is not just a bigger model but a fundamentally different approach to understanding patient journeys. By predicting the next event in a patient's history, the model can flag some diagnoses weeks before they are made clinically. "CoMET-L correctly flagged more than 50% of patients with their eventual diagnosis by the final prediction time, and more than 25% of patients weeks ahead of diagnosis," he notes.
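Flagging a diagnosis from next-event prediction can be sketched as Monte Carlo rollouts: sample several possible futures from the model and count how often the diagnosis code appears. Here a bigram model over event codes is a deliberately tiny stand-in for CoMET's transformer; the event names, `horizon`, sample count, and the 0.5 flagging threshold are hypothetical, and the paper's actual scoring procedure may differ.

```python
import random
from collections import Counter, defaultdict

def fit_bigram(sequences):
    """Count next-event frequencies: a toy stand-in for a learned next-event model."""
    counts = defaultdict(Counter)
    for seq in sequences:
        for prev, nxt in zip(seq, seq[1:]):
            counts[prev][nxt] += 1
    return counts

def sample_next(counts, event, rng):
    options = counts.get(event)
    if not options:
        return None  # no observed successor: end this simulated trajectory
    events, weights = zip(*options.items())
    return rng.choices(events, weights=weights, k=1)[0]

def risk(counts, history, target, horizon=3, n_samples=200, seed=0):
    """Fraction of simulated futures (up to `horizon` events) that contain `target`."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_samples):
        event = history[-1]
        for _ in range(horizon):
            event = sample_next(counts, event, rng)
            if event is None:
                break
            if event == target:
                hits += 1
                break
    return hits / n_samples

train = [  # hypothetical event histories
    ["visit", "thirst", "glucose_test", "T2D"],
    ["visit", "thirst", "glucose_test", "T2D"],
    ["visit", "cough", "xray", "visit"],
    ["visit", "thirst", "glucose_test", "normal"],
]
model = fit_bigram(train)
patients = {"a": ["visit", "thirst"], "b": ["visit", "cough"]}
scores = {pid: risk(model, hist, "T2D") for pid, hist in patients.items()}
flagged = {pid for pid, score in scores.items() if score >= 0.5}
print(scores, flagged)
```

Metrics like "more than 50% of patients flagged" are then the fraction of eventually diagnosed patients whose score crossed the threshold at a given prediction time.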

This capability transforms the role of AI from a passive record-keeper to an active diagnostic partner. However, a critical perspective is necessary here: the reliance on Real World Data means the model inherits the biases and gaps present in the healthcare system. While Agarwal highlights the impressive metrics, the ethical implications of using such models for differential diagnosis require careful oversight to ensure they do not perpetuate existing disparities.

Bottom Line

Varun Agarwal's curation succeeds by connecting disparate breakthroughs through a common thread: the transition from theoretical possibility to engineered reality. The strongest argument is that safety and interpretability are no longer afterthoughts but are being baked into the core architecture of these technologies, from the mental passwords in brain implants to the geometric transparency of DNA models. The biggest vulnerability remains the gap between laboratory proof-of-concept and widespread clinical deployment, but the trajectory is undeniably upward. Readers should watch for how these foundational tools are adapted to address the specific, messy realities of human biology and healthcare equity in the coming years.