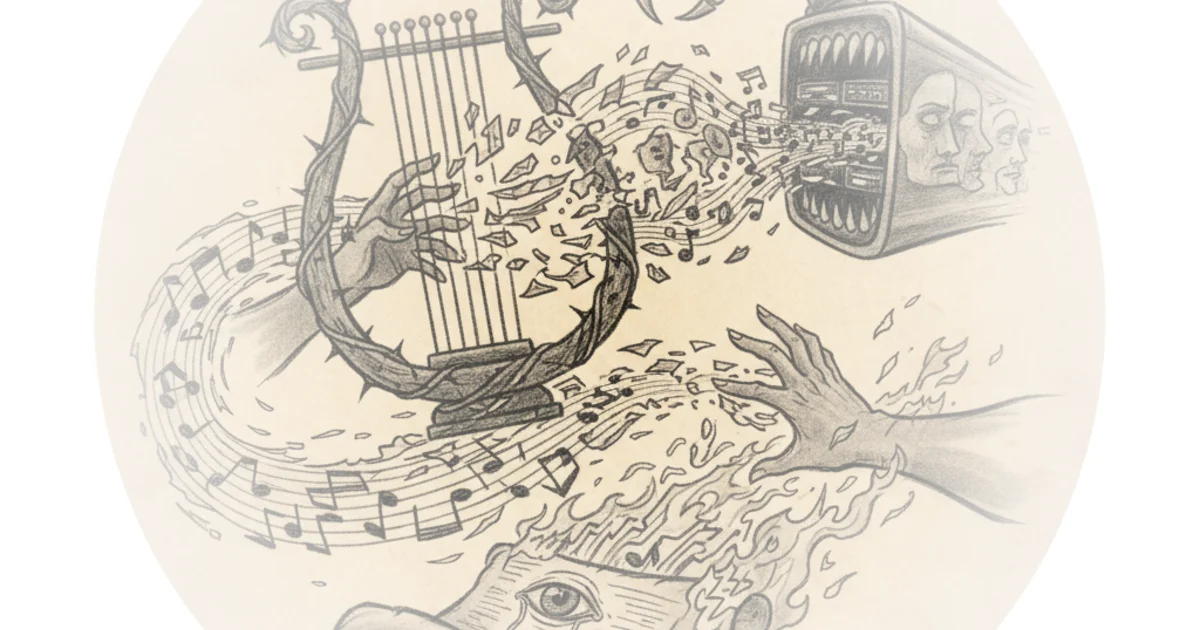

Fighting Fire With Fire

Benn Jordan, a professional musician with over 25 years of independent work, has spent the last several years watching generative AI companies scrape his catalog without consent, train models on it, and sell the output back into the same marketplace where he earns his living. His response is not a lawsuit or a lobbying campaign -- though he has pursued both -- but a technical countermeasure that he calls Poisonify. Combined with a University of Tennessee research project called HarmonyCloak, the approach embeds adversarial noise into music files that degrades AI training while remaining inaudible to human listeners.

The premise is straightforward: if the legal system will not protect musicians, perhaps the physics of neural networks can. Jordan frames the situation with characteristic bluntness.

Once tech companies started raising millions of venture capital dollars and scraping my music without my consent, then generating shittier music with it that is inadvertently associated with my name and then attempting to resell that in the same economy in which I make money from my music.

What makes Jordan's video more than a standard anti-AI polemic is that he demonstrates working technology. The adversarial noise attacks are not theoretical. He shows AI music extension services -- Suno, Minimax Audio, Meta's MusicGen -- producing garbled or degraded output, or crashing outright, when fed encoded files. The TensorBoard graphs from the University of Tennessee team show training loss flatlining almost immediately on poisoned data. These are not cherry-picked anecdotes; the same failures recur across multiple commercial systems.

The Economics of Consent

Jordan's most interesting contribution to the AI-and-music debate is not the adversarial noise itself but his work with Voice-Swap AI, the company he co-founded with DJ Fresh and Nico Pellerin. The model is instructive: train vocal AI exclusively on consensual data, share equity with participating artists, and pay ongoing royalties. The results challenge the industry's prevailing assumption that consent is a luxury that slows innovation.

By training an entirely new vocal base model on consensual data and fine-tuning it in collaboration with the artists, the resulting voice model sounded superior to our competitors.

Voice-Swap grew to 150,000 users without venture capital and became the only AI music company certified by BMAT for royalty payments. Most of its vocalists earned more annually from their voice models than from their entire Spotify catalogs. That last detail is worth pausing on: for most of the artists who participate in it, a consent-based AI company is out-earning Spotify. The usual counterargument -- that requiring consent would make AI products uncompetitive -- appears to be empirically false, at least in this domain.

The counterpoint, which Jordan does not fully address, is whether this model scales. Voice-Swap works with individual vocalists who actively collaborate on fine-tuning. Scaling a consent-based approach to the hundreds of millions of tracks that companies like Suno have allegedly scraped would require infrastructure that does not yet exist -- registries, licensing clearinghouses, standardized opt-in mechanisms. The technical victory of adversarial noise may be real without being sufficient.

The Pareto Problem

The most provocative section of Jordan's analysis applies the Pareto principle to generative AI's trajectory. The observation is that AI image and music generators improved rapidly at first -- the easy 80 percent -- but have stalled on the hard 20 percent. Text rendering, hand anatomy, prompt fidelity in music generation: these problems persist despite billions in investment.

I suspect that the reason for this is because these AI models quickly improved 80% with only 20% of the time, investment, and work with training them. And now just getting a 5% improvement on those models is an expensive, complicated, and unprofitable grind.

This framing has real analytical teeth. If generative AI music is approaching diminishing returns on model retraining, then poisoning the training data becomes an existential threat rather than a minor nuisance. A company that needs massive new datasets to push past its quality plateau will find those datasets increasingly toxic. The window where AI music companies could scrape freely and improve rapidly may already be closing, and adversarial noise accelerates that closure.

There is a counterargument worth considering: AI research does not always follow smooth curves. Breakthroughs in architecture -- the kind that U-Net represented for image generation -- can reset the trajectory entirely. A sufficiently novel approach to music generation might render current adversarial techniques obsolete. Jordan himself acknowledges this implicitly by randomizing which tracks get which encoding methods and refusing to disclose the specifics, a deliberate obfuscation strategy against future model architectures.

Beyond Music: The Surveillance Angle

The video's most unsettling demonstrations have nothing to do with music piracy. Jordan shows adversarial audio commands that can trigger smart home devices, unlock doors, and manipulate voice assistants -- all through sounds that human ears cannot distinguish from ambient noise or soft classical music. He demonstrates attacks against Amazon Echo, Meta's speech recognition models, and general AI-powered listening devices.

Just about anything that you can accomplish via a voice command, like ordering something on Amazon or opening your garage door, can presumably be triggered by a sound that human beings cannot identify. And this is accomplished by using adversarial noise.

The information security implications are staggering and extend well beyond the music industry. The fundamental vulnerability -- that neural networks interpret audio differently than human brains -- means that any environment with microphone-equipped devices is potentially susceptible. Jordan treats this as a secondary topic, but it may be the more consequential one. The music industry's problems are economic; adversarial audio attacks on smart home security systems are a matter of physical safety.
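A toy sketch can make that vulnerability concrete. The example below is not Poisonify, HarmonyCloak, or any attack shown in the video; it assumes a purely hypothetical linear "detector" with random weights and applies a gradient-sign perturbation, the simplest textbook form of adversarial noise. All names (`make_tone`, `score`, `sign_perturbation`) are illustrative. The point is only that when a model sums over thousands of samples, a nudge that is tiny on each sample can still flip its decision.

```python
import math
import random

def make_tone(n, freq_hz=440.0, sample_rate=16000, amplitude=0.5):
    """Return n samples of a sine tone (a stand-in for real audio)."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate)
            for i in range(n)]

def score(weights, bias, samples):
    """Hypothetical linear detector: a positive score means 'detected'."""
    return sum(w * x for w, x in zip(weights, samples)) + bias

def sign_perturbation(weights, current_score, margin=1.0):
    """Gradient-sign perturbation for a linear model.

    For a linear score the gradient with respect to the input is the
    weight vector itself, so moving every sample by eps against sign(w)
    shifts the score by eps * sum(|w|). eps is chosen to be just large
    enough to push the score past zero by `margin`.
    """
    l1_norm = sum(abs(w) for w in weights)
    eps = (abs(current_score) + margin) / l1_norm
    direction = 1.0 if current_score >= 0 else -1.0
    delta = [-direction * eps * math.copysign(1.0, w) for w in weights]
    return eps, delta

rng = random.Random(0)                      # fixed seed for repeatability
n = 16000                                   # one second at an assumed 16 kHz
weights = [rng.gauss(0.0, 1.0) for _ in range(n)]  # made-up model weights
clean = make_tone(n)

clean_score = score(weights, 0.0, clean)
eps, delta = sign_perturbation(weights, clean_score)
adversarial = [x + d for x, d in zip(clean, delta)]
adv_score = score(weights, 0.0, adversarial)

# Perturbation size relative to the tone's 0.5 peak amplitude, in decibels.
relative_db = 20 * math.log10(eps / 0.5)

print(f"clean score:       {clean_score:.3f}")
print(f"adversarial score: {adv_score:.3f}")
print(f"per-sample change: {eps:.6f} ({relative_db:.1f} dB relative to peak)")
```

Real speech and music models are non-linear, and practical attacks have to survive speakers, rooms, and microphones while staying below what listeners notice, so this sketch shows the mechanism rather than a working attack.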

The Practical Barriers

Jordan is candid about the current limitations. HarmonyCloak requires specialized GPU clusters. His own Poisonify encoding takes two RTX 5080 cards running for two weeks to process a single album, consuming roughly 242 kilowatt-hours of electricity. At current US energy prices, that translates to $40 to $150 per album for most users -- a non-trivial cost for independent musicians already struggling to earn from streaming.
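The energy figure is easy to sanity-check. The sketch below assumes each card draws about 360 W under sustained load; that wattage is an assumption, not a number from the video. It then works backward from the quoted $40 to $150 range to the electricity price each end implies. The function names are illustrative.

```python
# Back-of-the-envelope check of the encoding cost quoted above.
GPU_COUNT = 2
WATTS_PER_GPU = 360      # ASSUMPTION: sustained draw per card, not from the video
HOURS = 14 * 24          # two weeks of continuous processing

def energy_kwh(gpu_count, watts_per_gpu, hours):
    """Total energy in kilowatt-hours for GPUs running at constant power."""
    return gpu_count * watts_per_gpu * hours / 1000

def implied_rate(cost_usd, kwh):
    """Electricity price (USD per kWh) implied by a total cost."""
    return cost_usd / kwh

kwh = energy_kwh(GPU_COUNT, WATTS_PER_GPU, HOURS)
low_rate = implied_rate(40, kwh)     # rate implied by the $40 end of the range
high_rate = implied_rate(150, kwh)   # rate implied by the $150 end of the range

print(f"energy per album: {kwh:.1f} kWh")
print(f"implied price range: ${low_rate:.2f} to ${high_rate:.2f} per kWh")
```

Under that wattage assumption the total lands close to the quoted 242 kWh, and the $40 to $150 range corresponds to roughly 17 to 62 cents per kilowatt-hour. This counts only the cards' draw, not the rest of the machine or the cost of the hardware itself.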

There is also the collateral damage problem. Adversarial noise does not distinguish between malicious AI scraping and legitimate algorithmic analysis. Spotify's recommendation engine uses instrument classification, and poisoned files could cause an ambient electronic album to be recommended to barbershop quartet enthusiasts. Jordan finds this amusing; working musicians dependent on algorithmic discovery might not.

The path to viability runs through the partnership with Symphonic Distribution and the rebranding of Voice-Swap as Topset Labs. If the encoding can be made efficient enough to offer as an API at the distribution level -- a checkbox when uploading to streaming services -- then the technology becomes accessible. Until then, it remains a proof of concept that only well-resourced musicians can deploy.

The Suno CEO's Gift

Jordan reserves particular disdain for Suno CEO Mikey Shulman, whose public comments provide an almost too-perfect foil.

It's not really enjoyable to make music. Now, I think the majority of people don't enjoy the majority of the time they spend making music.

Jordan's response is acerbic: Shulman could expand Suno to build autonomous bowling machines so customers could pay a subscription fee instead of bowling with their friends. The mockery is effective because Shulman's premise -- that music creation is drudgery that technology should eliminate -- is so fundamentally disconnected from the experience of actual musicians that it undermines confidence in everything else his company builds. If the CEO does not understand the problem he claims to solve, the solution is unlikely to serve anyone except investors.

Bottom Line

Benn Jordan's adversarial noise work represents a genuine technical breakthrough in artist self-defense against unconsented AI training. The demonstrations are convincing, the science is peer-reviewed, and the results across multiple commercial AI music platforms are consistent. The larger significance, however, lies not in the technology itself but in what it reveals about the generative AI music industry's fragility. An industry built on unconsented data that cannot tolerate poisoned inputs has a structural vulnerability no amount of venture capital can patch. Whether adversarial noise becomes a practical tool for working musicians or remains a proof-of-concept weapon depends entirely on whether the encoding process can be made cheap and fast enough to deploy at scale. The consent-based model at Voice-Swap suggests that the real long-term solution is economic, not adversarial -- but until the legal and business infrastructure catches up, poison pills may be the only leverage musicians have.