The Tool That Writes Tools

Dylan Patel's latest piece marks something rare in AI coverage: a concrete inflection point, not another prediction. The claim is specific: four percent of public GitHub commits already come from Claude Code, with twenty percent projected by the end of 2026. The first number is not speculation. It is version control history.

What Claude Code Actually Does

Patel draws a sharp line between Claude Code and chatbot wrappers. It is terminal-native, not a sidebar. It reads entire codebases, plans multi-step tasks, then executes them iteratively while taking direction from the user.

As Dylan Patel puts it, "Claude Code is a CLI (command line interface) tool that reads your codebase, plans multi-step tasks, and then executes these tasks." The distinction matters. This is not autocomplete. This is an agent with filesystem access.

Patel reframes the tool's scope entirely: "It might be incorrect to think of Claude Code only as focused on Code, but rather as Claude Computer." The agent understands its environment, makes plans, and completes them. Users describe objectives in natural language, not implementation details.

The piece cites engineers who have already crossed over. Boris Cherny, creator of Claude Code, states that "Pretty much 100% of our code is written by Claude Code + Opus 4.5." Andrej Karpathy notes he is "slowly starting to atrophy my ability to write code manually." Ryan Dahl, creator of Node.js, declares that "the era of humans writing code is over."

"One developer with Claude Code can now do what took a team a month."

The Economic Layer Beneath the Code

Patel's analysis extends beyond developer workflow into Anthropic's revenue trajectory. The Tokenomics model estimates that Anthropic's quarterly ARR additions have overtaken OpenAI's. Compute capacity is the constraint on growth: Anthropic is on track to add as much power as OpenAI in three years, while OpenAI itself suffers datacenter delays.

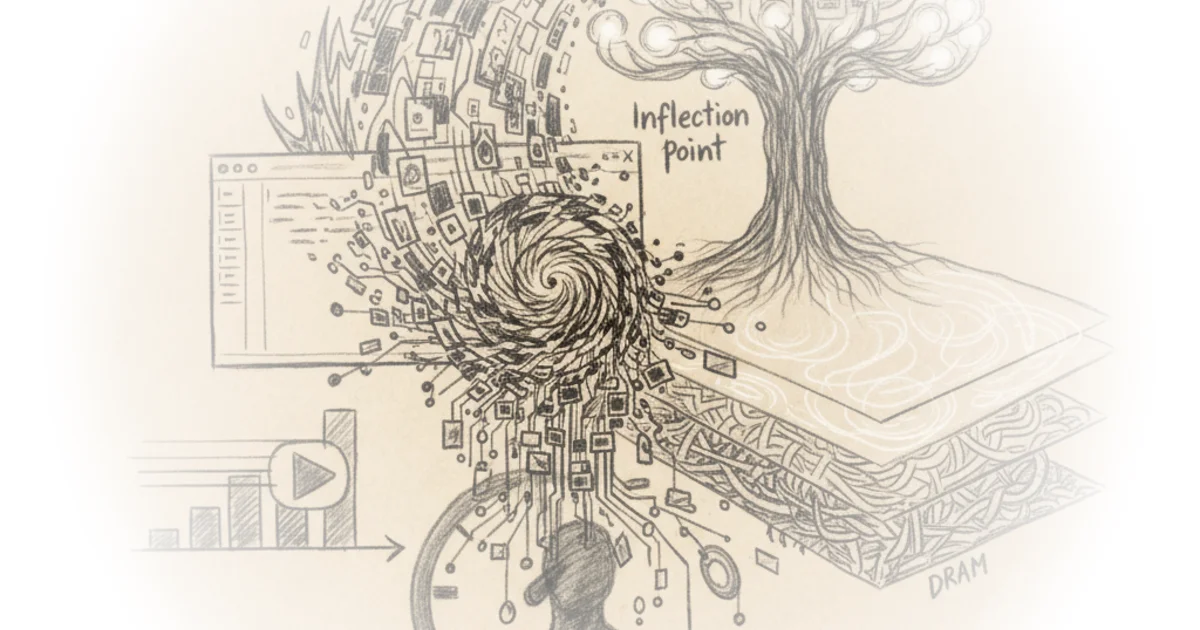

The argument positions Claude Code as the agentic layer's ChatGPT moment. Patel writes: "We believe that Claude Code is the inflection point for AI 'Agents' and is a glimpse into the future of how AI will function." The comparison traces AI's viral moments: GPT-3 proved scale worked. Stable Diffusion showed that generative models could make images. ChatGPT proved demand. Claude Code organizes model outputs into outcomes.

Beyond Software: The Fifteen Trillion Dollar Target

Coding becomes the beachhead, not the destination. Patel identifies one billion information workers — roughly one-third of the global workforce — whose workflows follow the same READ, THINK, WRITE, VERIFY pattern that Claude Code automates for software.

Dylan Patel writes: "If Agents can eat software, what labor pool can they not touch?" The piece projects automation into financial services, legal work, and consulting. Accenture has signed on to train thirty thousand professionals on Claude, targeting financial services, life sciences, healthcare, and the public sector.

The cost collapse drives adoption. A Max subscription costs two hundred dollars monthly, which works out to six to seven dollars per day. The median US knowledge worker costs three hundred fifty to five hundred dollars daily, fully loaded. An agent that handles even a fraction of that worker's workflow at six to seven dollars per day yields a ten- to thirty-fold return on investment.
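The return figure can be checked with a few lines of arithmetic. This is a rough sketch, not Patel's model: the dollar figures come from the paragraph above, while the 30-day month and the workflow fractions (20% and 40%) are assumptions chosen here to show which fractions reproduce the ten-to-thirty-times range.

```python
# Back-of-envelope check of the ROI range quoted in the text.
# Dollar figures are from the article; DAYS_PER_MONTH and the
# workflow fractions below are illustrative assumptions.

SUBSCRIPTION_USD_PER_MONTH = 200
DAYS_PER_MONTH = 30  # assumption: cost spread over calendar days


def agent_cost_per_day(monthly_usd=SUBSCRIPTION_USD_PER_MONTH,
                       days=DAYS_PER_MONTH):
    """Daily cost of the subscription."""
    return monthly_usd / days


def roi_multiple(worker_cost_per_day, workflow_fraction, agent_cost):
    """Value of the work the agent absorbs, divided by what the agent costs."""
    return worker_cost_per_day * workflow_fraction / agent_cost


cost = agent_cost_per_day()
low = roi_multiple(350, 0.20, cost)   # cheaper worker, smaller share of work
high = roi_multiple(500, 0.40, cost)  # costlier worker, larger share of work

print(f"agent cost per day: ${cost:.2f}")
print(f"ROI range: {low:.1f}x to {high:.1f}x")
```

Under these assumptions, the quoted range corresponds to the agent absorbing roughly a fifth to two fifths of a worker's day. A smaller share, or a worker who costs less, shrinks the multiple proportionally.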

Counterpoints

Critics might note that METR data shows autonomous task horizons doubling every four to seven months — accelerating, but still measured in hours, not weeks. Multi-day automation remains fragile.
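How far "hours, not weeks" is from closing depends on the starting point, which the piece does not give. The sketch below assumes steady exponential growth and uses a hypothetical current horizon of 4 hours and a hypothetical target of 40 hours (one working week). Only the four-to-seven-month doubling range comes from the text.

```python
import math

# Time for a task horizon to grow from one length to another under
# steady doubling. The 4-hour starting horizon and 40-hour target are
# hypothetical; only the doubling range (4 to 7 months) is from the text.


def months_to_reach(current_hours, target_hours, doubling_months):
    """Months until the horizon reaches target_hours, assuming it keeps
    doubling every doubling_months."""
    if target_hours <= current_hours:
        return 0.0
    doublings_needed = math.log2(target_hours / current_hours)
    return doublings_needed * doubling_months


CURRENT_HOURS = 4   # hypothetical starting horizon
TARGET_HOURS = 40   # hypothetical target: one working week

fast = months_to_reach(CURRENT_HOURS, TARGET_HOURS, 4)
slow = months_to_reach(CURRENT_HOURS, TARGET_HOURS, 7)
print(f"fast doubling (4 months): {fast:.1f} months")
print(f"slow doubling (7 months): {slow:.1f} months")
```

With these inputs the gap closes in roughly one to two years. That is short, but the estimate holds only if the doubling trend continues unchanged.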

The piece treats SaaS moats as eroded, but enterprise data migration, workflow retraining, and integration complexity have defeated cheaper alternatives for decades. Agents may compress incumbents' margins without capturing that value themselves.

Patel's GitHub commit percentage measures public repositories only. Private enterprise commits, where actual business logic lives, remain unmeasured. Reading the twenty percent projection as a measure of the whole industry assumes that private adoption mirrors public trends.

Bottom Line

Claude Code is the first AI tool that measures itself in completed work, not chat responses. Dylan Patel's economic model tracks the shift from selling tokens to orchestrating outcomes. The bottleneck is no longer model quality; it is how long an agent can work before failing. By METR's data, that horizon doubles every four to seven months. When it reaches days, not hours, the fifteen trillion dollar information economy becomes addressable.