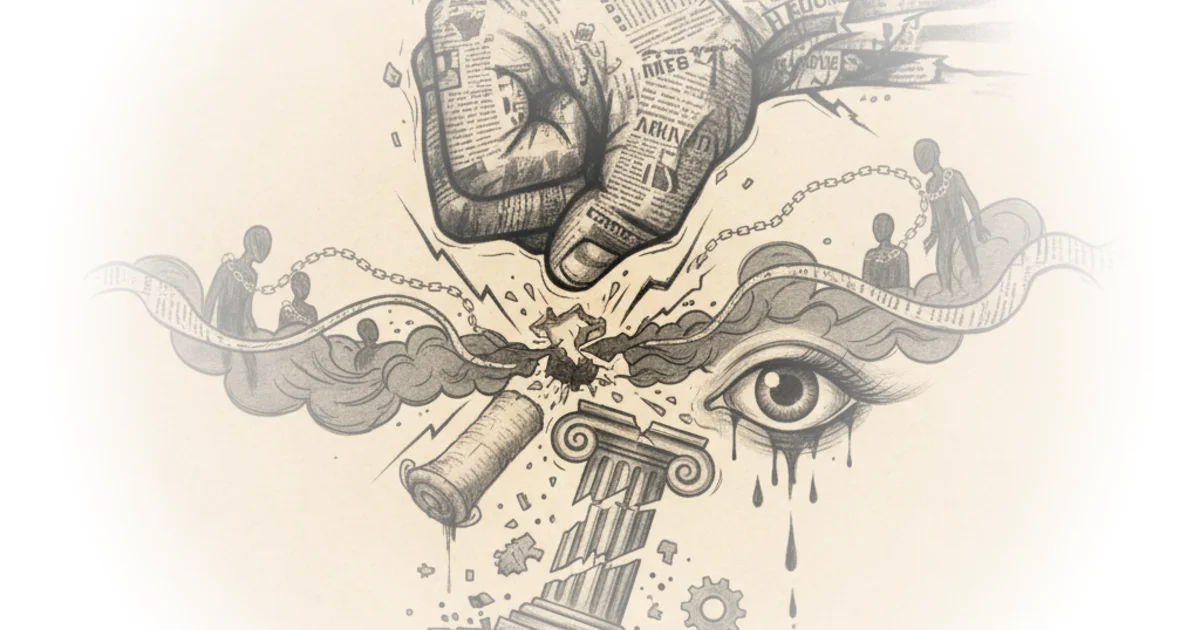

Casey Newton identifies a seismic shift in the digital economy: the era of artificial intelligence models freely ingesting the entire public web is effectively ending. This isn't just a legal skirmish; it is a fundamental renegotiation of value between the builders of generative tools and the creators of the data that fuels them. For busy professionals watching the AI landscape, the New York Times lawsuit against OpenAI represents the moment the industry's "free rider" phase hits a wall.

The Economics of Free Riding

Newton frames the New York Times lawsuit not merely as a copyright dispute, but as an existential challenge to the business model of large language models. The complaint alleges that AI companies are engaging in "unlawful copying and use" to build products that directly compete with the sources they rely on. Newton observes that the suit contends these defendants should be held responsible for "billions of dollars in statutory and actual damages" related to the theft of uniquely valuable works.

The author's analysis cuts through the technical jargon to the core economic reality: if AI can summarize the news better and faster than the outlets that report it, the publisher's revenue stream evaporates. Newton quotes the complaint's charge that "Defendants seek to free-ride on The Times's massive investment in its journalism," using its content without payment to "steal audiences away from it." This framing is crucial because it moves the debate from abstract intellectual property rights to the tangible survival of the institutions that verify facts.

However, the legal path forward is murky. Newton points out that while literal copying is easy to prosecute, the more dangerous threat is when models change words sufficiently to create summaries that still kill the original business. As Newton puts it, "Summaries in different words would still be sufficient to kill The Times and similar organizations — and leave us newsless." This highlights a critical gap in current copyright law, which struggles to protect the value of information when the form is altered.

If you can get information more cheaply from an LLM than from the New York Times, you might drop your subscription. But if everyone did that, there would be no New York Times at all.

Critics might argue that fair use has historically protected transformative works, and that AI training is simply the digital equivalent of a human reading a library. Yet Newton suggests that the scale and commercial nature of this "reading," in which the output directly substitutes for the input, changes the equation. The argument that AI firms need the news to exist is a pragmatic one: "Rationally and economically, therefore, they ought to be obligated to pay for the information they are using."

The Regulatory Shadow

When voluntary licensing fails, as it appears to be doing with the New York Times, the industry often looks to the regulatory playbook. Newton draws a direct line from the struggles of Google and Meta to the potential fate of AI companies. The piece notes that while platforms once relied on news for credibility, they eventually deprioritized it, leading to a shrinking journalism sector.

The article highlights how Australia and Canada intervened with "link taxes," forcing platforms to negotiate fees or face binding arbitration. Newton is skeptical of these legislative fixes, calling them "little more than shakedowns that do almost nothing to ensure that the resulting revenues are spent on journalism." Despite this skepticism, the author concedes that the precedent is set: "now that two countries have shown it is possible to wring a few dollars out of platforms this way, many more seem likely to follow."

This creates a precarious future for AI developers. Newton argues that a better solution would be for developers to "pay a fair price to any publisher from whom they are deriving significant, ongoing value." If they refuse, the trajectory suggests they will be forced into a regulatory scheme similar to the one imposed on their search and social media predecessors. The implication is clear: the cost of doing business in AI is about to rise sharply, and that cost will likely either be passed on to consumers or come out of developers' bottom lines.

The Substack Paradox

Shifting gears, Newton addresses a different kind of platform failure: Substack's handling of extremist content. The piece details how Substack co-founder Hamish McKenzie defended the monetization of hate speech by rejecting the premise that censorship "makes the problem go away." McKenzie stated, "We believe that supporting individual rights and civil liberties while subjecting ideas to open discourse is the best way to strip bad ideas of their power."

Newton dismantles this logic by pointing out the hypocrisy of a platform that claims to uphold civil liberties while simultaneously profiting from content that incites violence. The author notes that Substack's own guidelines prohibit funding initiatives that "incite violence based on protected classes," yet the company's actions suggest a tacit admission that these rules are not always enforced. Newton writes, "Rolling out a welcome mat for Nazis is, to put it mildly, inconsistent with our values here at Platformer."

The commentary emphasizes that this is not a difficult trade-off but a clear moral failure. Newton argues that the platform is not just hosting these views but actively incentivizing them by allowing monetization, which "seems all but certain to worsen the problem by inviting Nazis to Substack and telling them explicitly that they can make money there." In response, Newton announces the creation of a database to track these accounts, with the intent of pressuring payment processors like Stripe to cut off the revenue stream.

Content moderation often involves difficult trade-offs, but this is not one of those cases.

A counterargument worth considering is the slippery slope of defining "hate speech" and the potential for platforms to over-censor legitimate dissent. However, Newton's stance is that advocating for genocide falls far outside the bounds of protected discourse, making the distinction clear-cut. The author's willingness to leave the platform if changes aren't made signals a growing tension between the creator economy's ideals and its actual practices.

Bottom Line

Newton's analysis succeeds in reframing the AI copyright debate from a legal technicality to a battle over the economic viability of truth-telling institutions. The strongest part of the argument is the realization that AI models cannot survive without the journalism they are currently consuming for free. The biggest vulnerability, however, lies in the legal uncertainty of "fair use" and the potential for courts to rule that training data is transformative, leaving publishers without a clear path to compensation. Readers should watch for how the New York Times case unfolds, as its outcome will likely dictate whether the next generation of AI is built on licensed data or forced to navigate a fractured, regulated web.