Most industry analysis treats AI infrastructure as a simple hardware arms race, but Chipstrat makes a compelling case that the real bottleneck isn't the processors; it's the network connecting them. The piece argues that the economics of training frontier models hinge on a single, often overlooked property: deterministic, low-jitter communication. This reframes the entire conversation from raw compute power to the invisible fabric that keeps thousands of chips from idling while waiting for a single straggler.

The Lockstep Problem

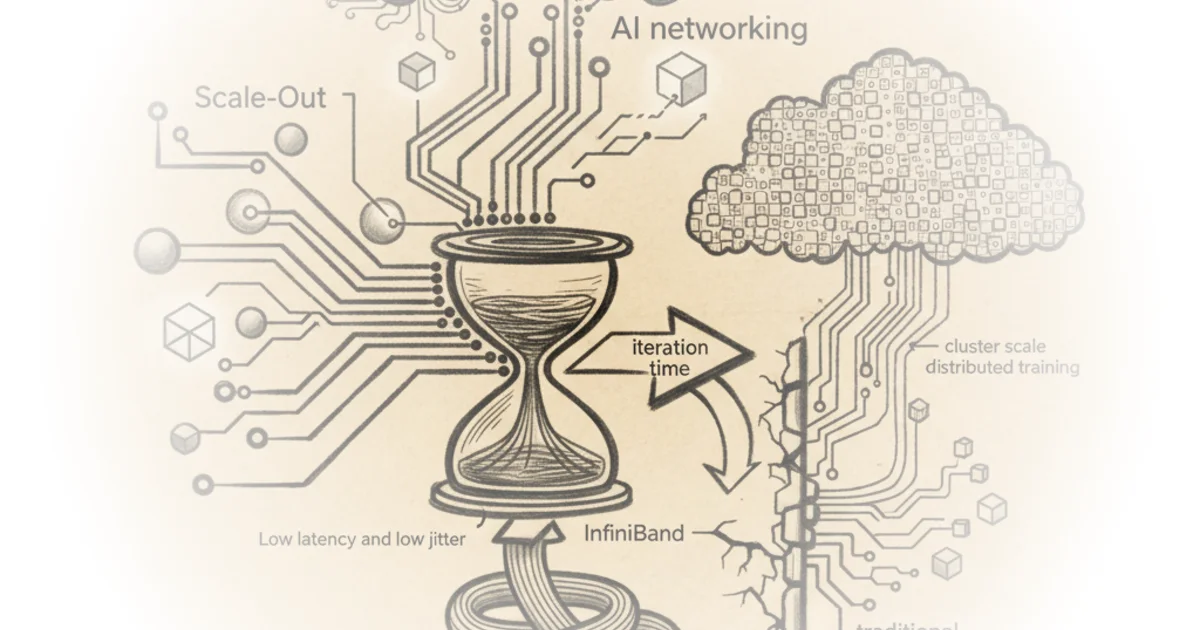

Chipstrat begins by dismantling the assumption that traditional data center networking can handle the demands of modern large language model training. The article draws a sharp distinction between general-purpose enterprise traffic and the rigid synchronization required for AI. "AI training is conceptually a few steps repeated over and over," the piece explains, detailing the cycle of forward passes, backward passes, and gradient aggregation. The critical insight here is the concept of all-reduce, where every GPU must share its calculations before any can proceed. "If one GPU is late, all others stall," Chipstrat reports, noting that in a cluster of 10,000 GPUs, a single device lagging by just 20 microseconds can waste 0.2 seconds of collective compute time. "For such massive clusters, that can be significant dollars of depreciating GPUs idling due to poor networking performance."
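The arithmetic behind that figure can be sketched in a few lines. This is a minimal illustration rather than Chipstrat's own model: the function name `idle_gpu_seconds` and the choice to represent each GPU by its arrival time at the all-reduce barrier are assumptions made here for clarity.

```python
def idle_gpu_seconds(arrival_times_s):
    """Total GPU time spent waiting at a synchronous all-reduce barrier.

    No GPU can proceed until the slowest one arrives, so each GPU idles
    for (latest arrival - its own arrival).
    """
    barrier = max(arrival_times_s)
    return sum(barrier - t for t in arrival_times_s)


# Hypothetical cluster: 10,000 GPUs, one of which arrives 20 microseconds late.
num_gpus = 10_000
delay_s = 20e-6
arrivals = [0.0] * (num_gpus - 1) + [delay_s]

wasted = idle_gpu_seconds(arrivals)
print(f"{wasted:.5f} GPU-seconds idle per step")
```

The result comes out just under 0.2 GPU-seconds (9,999 waiting GPUs times 20 microseconds), and that cost recurs on every training step in which a straggler appears.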

This analysis effectively highlights why standard Ethernet, designed for flexibility and feature-richness, fails in this specific context. The editors note that enterprise networks prioritize security and traffic management over speed, while hyperscale clouds tolerate variability because their workloads are independent. "A delayed packet in a database query or email transfer doesn't stall other applications," the article observes, contrasting this with the "lockstep" nature of AI training where variability, or jitter, is fatal. Critics might argue that modern Ethernet variants are closing this gap rapidly, but the piece maintains that the fundamental design philosophy of traditional networks remains a mismatch for the synchronous requirements of distributed training.
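A small simulation can make the lockstep penalty concrete. The sketch below is an illustration under stated assumptions, not something taken from the article: per-worker jitter is drawn from an exponential distribution, the 5 microsecond mean is a made-up figure, and `mean_step_delay` is a hypothetical helper. The point is only that a synchronized step ends when the slowest worker finishes, so the same per-worker jitter costs more as the cluster grows.

```python
import random


def mean_step_delay(num_workers, mean_jitter_s, trials, rng):
    """Average delay of a lockstep step, which ends when the slowest worker finishes.

    Per-worker jitter is drawn from an exponential distribution; that choice
    is an assumption for illustration, not a measured network profile.
    """
    total = 0.0
    for _ in range(trials):
        total += max(
            rng.expovariate(1.0 / mean_jitter_s) for _ in range(num_workers)
        )
    return total / trials


rng = random.Random(0)  # fixed seed so runs are repeatable
mean_jitter_s = 5e-6    # hypothetical 5 microsecond average jitter per worker

small = mean_step_delay(10, mean_jitter_s, trials=200, rng=rng)
large = mean_step_delay(1_000, mean_jitter_s, trials=200, rng=rng)
print(f"10 workers:    {small * 1e6:.1f} us average delay per step")
print(f"1,000 workers: {large * 1e6:.1f} us average delay per step")
```

For independent workloads the average jitter is what matters. For a lockstep job it is the worst case across all workers, which is why the larger cluster shows a noticeably higher delay per step even though each individual worker behaves the same.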

"Latency and jitter are so important in AI networking... Deterministic, low-jitter communication is therefore essential to keep GPUs quickly advancing in lockstep."

The HPC Heritage

The commentary then pivots to the historical roots of the solution, challenging the common misconception that InfiniBand is a proprietary invention of a single vendor. Chipstrat clarifies that "InfiniBand is not proprietary, but rather an open standard born from an industry consortium." The piece traces the lineage of high-performance computing back to supercomputers used for scientific simulations, where the need for ultra-low latency was long established. "Unlike enterprise or hyperscale environments, HPC jobs are single workloads that span thousands of servers at once," the editors explain, drawing a direct parallel to the architecture of modern AI clusters. The article details how InfiniBand was purpose-built for this environment, utilizing features like Remote Direct Memory Access (RDMA) to bypass the CPU and lossless forwarding to prevent packet drops. "It uses credit-based flow control to avoid packet drops, which prevents jitter and expensive retransmissions," Chipstrat reports, emphasizing that these are not mere optimizations but architectural necessities.
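The idea behind credit-based flow control can be shown with a toy model. This is a deliberately simplified sketch, not InfiniBand's actual link protocol: the `CreditLink` class, its method names, and the one-credit-per-packet accounting are all assumptions made for illustration. The sender transmits only while it holds a credit, and the receiver returns a credit each time it frees a buffer slot, so the buffer can never overflow and no packet is dropped.

```python
from collections import deque


class CreditLink:
    """Toy link where the sender may transmit only while it holds credits."""

    def __init__(self, receiver_buffer_slots):
        self.credits = receiver_buffer_slots  # one credit per free receiver slot
        self.receiver_buffer = deque()
        self.peak_occupancy = 0

    def try_send(self, packet):
        """Send if a credit is available; otherwise the sender holds the packet."""
        if self.credits == 0:
            return False  # back-pressure: the sender waits, nothing is dropped
        self.credits -= 1
        self.receiver_buffer.append(packet)
        self.peak_occupancy = max(self.peak_occupancy, len(self.receiver_buffer))
        return True

    def receiver_drain(self):
        """Receiver consumes one packet and returns a credit to the sender."""
        if self.receiver_buffer:
            packet = self.receiver_buffer.popleft()
            self.credits += 1
            return packet
        return None


link = CreditLink(receiver_buffer_slots=2)
pending = deque(range(5))  # five packets queued at the sender
delivered = []

while pending or link.receiver_buffer:
    # Sender pushes as many packets as its credits allow.
    while pending and link.try_send(pending[0]):
        pending.popleft()
    # Receiver processes one packet per round, freeing one slot.
    packet = link.receiver_drain()
    if packet is not None:
        delivered.append(packet)

print("delivered:", delivered, "peak buffer occupancy:", link.peak_occupancy)
```

All five packets arrive in order and the receiver buffer never holds more than its two slots. A drop-and-retransmit design would instead let the sender overrun the buffer and recover later, which is the source of the jitter the article describes.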

The piece also addresses the vendor landscape with nuance, acknowledging that while the standard is open, the market is dominated by specific players who have invested heavily in the ecosystem. "Mellanox did not invent InfiniBand," the article asserts, correcting a widespread industry myth. However, it concedes that the current commercial reality involves a heavy reliance on Nvidia's implementation of these standards, alongside emerging alternatives such as the specifications being developed by the Ultra Ethernet Consortium. "Single vendor doesn't mean proprietary," the editors argue, though they acknowledge the practical monopoly that exists in the supply chain for these specialized components.

The Economic Stakes

Ultimately, the article positions networking not as a supporting cast member but as a primary driver of the total addressable market for AI infrastructure. Chipstrat suggests that the shift toward AI is forcing a re-evaluation of the entire data center stack. "Nvidia's monster networking business" is highlighted as a critical component of its expanding market reach, with the piece noting that the company's mix of InfiniBand, NVLink, and Spectrum-X creates a formidable barrier to entry for competitors. The editors point out that the physical reality of these clusters is staggering, referencing images of "purple cables" and active electrical cables connecting hundreds of thousands of GPUs. "Nvidia networking TAM expansion!" the piece exclaims, underscoring the financial magnitude of the shift from general-purpose networking to specialized AI fabrics.

While the focus on Nvidia's dominance is clear, the article leaves room for the evolution of Ethernet-based approaches, noting that the industry is actively working on options ranging from Nvidia's own Spectrum-X to the Ultra Ethernet Consortium's open specifications. "We will contrast InfiniBand with modern Ethernet-for-AI stacks," the editors promise, suggesting that the future may see a hybrid approach where the rigid determinism of InfiniBand competes with the cost-efficiency of re-engineered Ethernet.

"The moment those results need to be shared is when networking shines… or is the bottleneck!"

Bottom Line

Chipstrat's strongest contribution is its rigorous explanation of why jitter is the silent killer of AI efficiency, a concept often glossed over in favor of raw bandwidth metrics. The piece's vulnerability lies in its heavy reliance on the current dominance of a single vendor's ecosystem, potentially underestimating the speed at which open Ethernet standards might mature to meet these deterministic demands. For investors and technologists alike, the takeaway is clear: the next frontier of AI progress depends less on the next generation of chips and more on the invisible threads that bind them together.