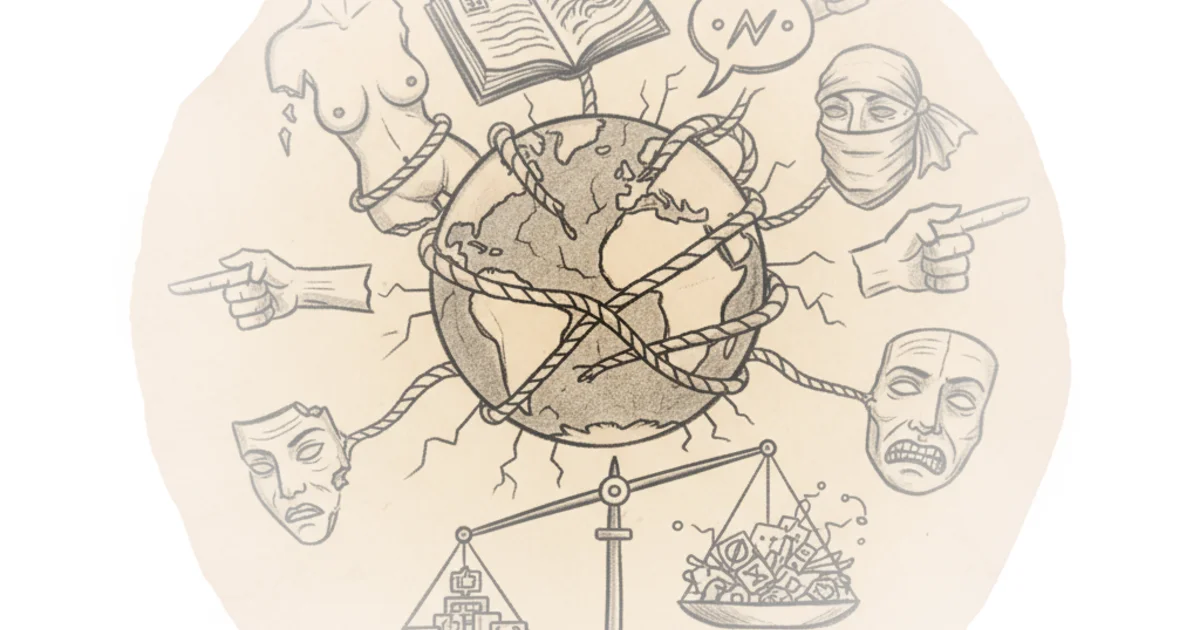

Casey Newton delivers a sobering verdict on Meta's Oversight Board, arguing that its five-year experiment in self-regulation has largely failed to deliver justice, yet remains the only imperfect shield against total corporate autocracy. The piece's most striking claim is not that the board is useless, but that its very design—a fantasy of a judicial branch coequal to the executive—was doomed from the start by the company's refusal to cede real power.

The Fantasy of Independence

Newton opens by dismantling the board's founding myth. Five years ago, the board accepted its first cases, tackling high-stakes questions like whether quoting Goebbels constitutes commentary on fascism or if a veiled threat against a French president incites violence. Newton writes, "The founding promise of the Oversight Board — that it would serve as a kind of Supreme Court for content moderation, a judicial branch coequal to Meta's executive — has been revealed as a fantasy."

This framing is essential because it shifts the blame from individual board members to the structural impossibility of the task. The board emerged from the ashes of the Rohingya genocide and the Cambridge Analytica scandal, intended to restore trust after Mark Zuckerberg held absolute power over every post. While the board has secured wins, such as forcing Meta to acknowledge the "adverse human rights impact" of over-moderating Palestinian content, Newton points out the stark reality: the board has taken on disappointingly few cases and can take nearly a year to rule on posts that credibly incited political violence.

Critics might argue that any independent review is better than none, but Newton suggests the board's silence on recent catastrophes undermines that defense. When Meta's internal research showed users had to be caught trafficking people for sex 17 times before being banned, or that the company earned $16 billion from scams, the board offered no response. As Newton observes, "None, though, has been forthcoming."

The board was at its most prominent in 2021, when Meta asked it to consider whether Trump should be permanently banned for his actions related to the January 6 Capitol riots. But the board punted that decision back to Meta, and since then has largely faded from public view.

The Funding Trap and the Shift in Power

The commentary then pivots to the board's vulnerability: its funding. Meta funds the board only for short terms, creating a dynamic where board members are reluctant to bite the hand that feeds them. Newton notes that the board's silence risks appearing to be a comment on its own independence. "It limits their willingness to push back," says law professor Kate Klonick. "Because even for a lot of the people on the board, it's just a very nice paycheck, and they'd rather not give up that paycheck."

This financial tether becomes even more dangerous when the political winds shift. Newton highlights a disturbing trend, describing "a year when Meta abruptly sidelined its policy team, empowering lobbyists to rewrite community guidelines on the fly to curry favor with the Trump administration." In a move that blindsided the board, Meta announced new categories of permitted hate speech to please the White House. The board's response? A bizarrely upbeat statement welcoming the changes.

Here, the article exposes a grim reality: the board operates in a world where Meta policy must not damage its relationship with the US government. When the administration threatens to deny visas to content moderators, the board's ability to provide oversight evaporates. The analogy of the board as a court also breaks down. Courts wait for cases to arrive and then rule narrowly; they do not issue statements when companies behave badly. Yet the board is expected to be both aloof like a court and reactive like an advocate.

A Reluctant C-Grade

Despite the harsh critique, Newton refuses to dismiss the experiment entirely. The board has given civil society a direct voice, a stark contrast to the "black magic" of the pre-board era, when reaching Facebook required personal connections. Newton quotes Klonick again: "Before the board existed, it was real black magic for civil society, governments, every type of group to have a voice at these platforms when something happened."

The board's impact is also strongest outside the United States, particularly in the Global South, where it has protected speech in regions Meta leadership cares less about. However, this geographic success highlights a darker truth: the board does the most good where the company's profit motives are weakest. Newton notes, "The darker suggestion in that idea is that the board has been able to do the most good in regions where Meta's leadership cares the least."

Ultimately, the author lands on a reluctant C-grade. It is a verdict that acknowledges the board's failures while recognizing the void that would exist without it. "This really did not meet my expectations," Klonick admits. "But would I have changed it or decided not to do this at all? Absolutely not."

It's bad for government to control speech. And it's bad for billionaires to control speech. And it was always really, really important for users to have a mechanism of direct impact and control.

Bottom Line

Newton's strongest argument is that the Oversight Board's failure is structural, not accidental; it was designed to be a shield for a billionaire, not a sword for the public. Its biggest vulnerability remains its financial dependence on the very entity it is meant to police. Readers should watch whether the board can evolve beyond its judicial pretense to become a more vocal advocate for human rights, or whether it will remain a silent observer as Meta continues to prioritize political favor over user safety.