Jon Y cuts through the hype of artificial intelligence to reveal a fundamental hardware crisis: our most powerful computers are hitting a wall of their own making. While the industry chases faster processors, this piece argues that the very architecture of modern computing—the separation of memory and processing—is the primary obstacle to true intelligence. For leaders tracking the future of energy and compute, the implication is stark: we may need to stop building better engines and start building entirely new vehicles.

The Energy Paradox

Y begins by dismantling the assumption that raw speed equates to superiority. He contrasts the biological efficiency of the human brain with the voracious appetite of modern data centers. "The brain operates at about 12 to 20 watts of power... The desktop computer on the other hand does about 175 watts. Leading edge AI accelerators like the Nvidia H100 use anything from 300 to 700 watts." This comparison is not merely a trivia point; it highlights a physical limit. As Y notes, the brain achieves exaflop-level performance without a central clock, relying instead on "insane parallelism" where 86 billion neurons fire asynchronously.
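
The arithmetic behind this comparison can be made explicit. The sketch below uses only the wattages quoted above and treats "exaflop-level" as a round 10^18 operations per second, which is an assumption made for illustration rather than a measured figure. The function and variable names are hypothetical.

```python
# Power figures quoted in the piece, in watts.
BRAIN_WATTS = (12, 20)
DESKTOP_WATTS = 175
H100_WATTS = (300, 700)

# Assumption for illustration: "exaflop-level" taken as a round 1e18 ops/s.
BRAIN_OPS_PER_SECOND = 1e18


def power_ratio(device_watts: float, brain_watts: float) -> float:
    """How many times more power a device draws than the brain."""
    return device_watts / brain_watts


def ops_per_joule(ops_per_second: float, watts: float) -> float:
    """Operations completed per joule of energy (1 watt = 1 joule per second)."""
    return ops_per_second / watts


# Compare each device against the brain's upper estimate of 20 W.
desktop_vs_brain = power_ratio(DESKTOP_WATTS, BRAIN_WATTS[1])
h100_peak_vs_brain = power_ratio(H100_WATTS[1], BRAIN_WATTS[1])
brain_efficiency = ops_per_joule(BRAIN_OPS_PER_SECOND, BRAIN_WATTS[1])

print(f"Desktop draws {desktop_vs_brain:.2f}x the brain's power")
print(f"H100 at peak draws {h100_peak_vs_brain:.0f}x the brain's power")
print(f"Brain, under the assumption above: {brain_efficiency:.1e} ops per joule")
```

Even against the brain's upper estimate of 20 watts, the quoted accelerator draws 35 times more power at its peak.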

The author's framing of the central clock as a source of waste is particularly compelling. In a synchronous digital circuit, each component waits for the clock signal before acting, which creates idle time and energy loss. Y writes, "A central clock system costs time and energy to distribute the clock signals across the system. And there is waste as each system component does not execute its task until it is told to do so." On this view, the synchronization that makes digital logic predictable is also a standing source of overhead. Critics might argue that for deterministic tasks like financial modeling, the precision of a synchronized clock is non-negotiable. However, Y's point stands for the messy, probabilistic nature of learning and pattern recognition, where the brain's lack of synchronization is a feature, not a bug.
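
A toy count can illustrate the difference between clocked and event-driven work. This is a minimal sketch, not a power model: it assumes every unit in a clocked system is evaluated on every tick, while an event-driven system evaluates a unit only when an input event arrives. The function name, the `activity` parameter, and the numbers are all hypothetical.

```python
import random


def count_updates(num_units: int, num_ticks: int, activity: float, seed: int = 0):
    """Count unit evaluations under a clocked and an event-driven scheme.

    activity is the assumed probability that a given unit receives an
    input event on a given tick.
    """
    rng = random.Random(seed)
    clocked = 0
    event_driven = 0
    for _ in range(num_ticks):
        # Clocked: every unit is evaluated on every tick, busy or not.
        clocked += num_units
        # Event-driven: only units that actually received an event do work.
        for _ in range(num_units):
            if rng.random() < activity:
                event_driven += 1
    return clocked, event_driven


clocked, event_driven = count_updates(num_units=1000, num_ticks=100, activity=0.05)
print(f"clocked evaluations:      {clocked}")
print(f"event-driven evaluations: {event_driven}")
```

When activity is sparse, the event-driven count is a small fraction of the clocked count, which is the kind of waste the quote describes.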

"Neurons literally throw things at the wall and are not afraid to make mistakes. In doing so, they find something that works."

Chaos as a Feature

The piece takes a bold turn by suggesting that noise is essential to intelligence. Y describes the brain's environment as chaotic, where most signals are ignored, yet the system remains robust. "When a neuron receives a spike from a synapse, the majority of the time it does nothing - noise. But there are so many extensive connections... that enough spikes get through to the right neurons to carry on." This stands in sharp contrast to the digital imperative of zero errors, where a single bit flip can crash a system.
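
The claim that unreliable connections can still add up to a reliable system can be checked with a short probability calculation. The sketch below assumes each incoming connection independently gets a spike through with a small probability, and that the receiving neuron responds once a threshold number of spikes arrive. Both the independence assumption and the specific numbers are illustrative, not taken from Y's piece.

```python
from math import comb


def prob_enough_spikes(num_inputs: int, threshold: int, p_transmit: float) -> float:
    """Probability that at least `threshold` of `num_inputs` spikes get through.

    Assumes each connection transmits independently with probability
    p_transmit (a binomial model).
    """
    return sum(
        comb(num_inputs, k) * p_transmit**k * (1.0 - p_transmit) ** (num_inputs - k)
        for k in range(threshold, num_inputs + 1)
    )


# A single connection that does nothing 90% of the time is unreliable...
single = prob_enough_spikes(num_inputs=1, threshold=1, p_transmit=0.1)
# ...but 100 such connections feeding one neuron almost always deliver 5 spikes.
redundant = prob_enough_spikes(num_inputs=100, threshold=5, p_transmit=0.1)

print(f"one connection:      {single:.3f}")
print(f"hundred connections: {redundant:.3f}")
```

Under these assumptions, redundancy rather than per-connection precision is what carries the signal.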

Y argues that this tolerance for error allows for resilience. "By living amidst chaos, brains also become shockingly resilient and flexible - even in situations of massive damage." The implication is significant: our current drive for perfect signal-to-noise ratios in hardware may be counterproductive for general AI. The tradeoff is clear. As Y puts it, "You use a lot more power to achieve this very low signal-to-noise ratio." In context, the power is being spent on keeping noise out of the signal. This is a critical insight for policymakers and engineers alike; the path to efficient AI may require embracing a certain degree of hardware unreliability.

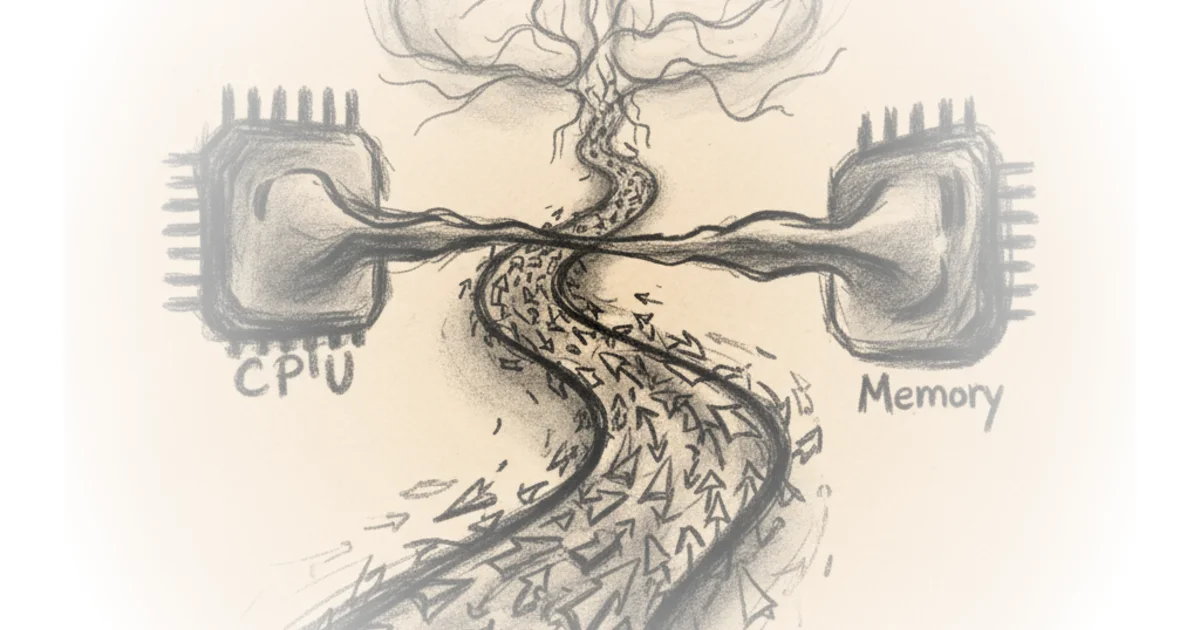

The Von Neumann Bottleneck

The core technical argument centers on the "Von Neumann bottleneck," the physical roadblock caused by separating memory from the processor. Y explains that in current systems, data must travel back and forth, creating a traffic jam that limits speed regardless of how fast the CPU is. The brain solves this by merging the two functions. "Neurons and Synapses merge together the work of computation and memory - doing a bit of both. Memories are stored in the relative strength of the synapses between neurons. But those synapses can do calculations as well. That is why the brain does not suffer the Von Neumann bottleneck."
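
The traffic-jam effect can be sketched with a simplified, roofline-style estimate: a task takes as long as the slower of its two parts, computing and moving data. The model and every number below are hypothetical and chosen only to show the shape of the problem.

```python
def estimated_runtime(
    operations: float,
    bytes_moved: float,
    ops_per_second: float,
    bytes_per_second: float,
) -> float:
    """Estimate runtime as the slower of compute time and data-movement time.

    Simplifying assumption: compute and data transfer overlap perfectly,
    so the longer of the two determines the total.
    """
    compute_time = operations / ops_per_second
    transfer_time = bytes_moved / bytes_per_second
    return max(compute_time, transfer_time)


# Hypothetical workload: 1e9 operations that touch 4e9 bytes of memory.
baseline = estimated_runtime(1e9, 4e9, ops_per_second=1e12, bytes_per_second=1e11)
# Doubling processor speed while the memory link stays the same.
faster_cpu = estimated_runtime(1e9, 4e9, ops_per_second=2e12, bytes_per_second=1e11)
# Doubling memory bandwidth instead.
faster_memory = estimated_runtime(1e9, 4e9, ops_per_second=1e12, bytes_per_second=2e11)

print(f"baseline:      {baseline:.4f} s")
print(f"faster CPU:    {faster_cpu:.4f} s")
print(f"faster memory: {faster_memory:.4f} s")
```

In this memory-bound case, the faster processor changes nothing, while the faster memory link halves the runtime. That is the sense in which the bottleneck limits speed regardless of how fast the CPU is.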

This distinction separates true neuromorphic hardware from the software simulations running on current GPUs. Y clarifies that while artificial neural networks mimic the brain's logic, they run on "Von Neumann hardware - which means dealing with the bottleneck." The author notes that while companies like IBM and Intel have created "silicon neurons" using standard manufacturing, they still face the limitations of digital approximation. "The brain is an analog device - digital devices are an ill fit for replicating their behavior," Y writes, pointing to the need for a paradigm shift in materials science.

Beyond Silicon

The piece concludes by looking at the memristor, a "memory resistor" whose resistance depends on the voltage previously applied to it and which retains that resistance state even without power. This technology promises to bridge the gap between memory and processing. Y describes the potential: "Their key characteristic is that the value of that electrical resistance is dependent upon the history of the voltage passing through it - ergo the name 'memory'." This non-volatile nature mirrors the synaptic plasticity of the biological brain, offering a path to hardware that learns and adapts rather than just executes.
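
The history-dependent behavior can be sketched with a heavily simplified memristor model. The version below assumes a single internal state between 0 and 1 that drifts in proportion to the current flowing through the device, with resistance interpolated between a low and a high value. The parameter values and the drift constant are hypothetical and do not describe any real device.

```python
def simulate_memristor(
    voltages,
    dt: float = 1e-3,        # time step in seconds (illustrative)
    r_on: float = 100.0,     # lowest resistance in ohms (illustrative)
    r_off: float = 16000.0,  # highest resistance in ohms (illustrative)
    drift: float = 1e4,      # lumped drift constant (hypothetical)
    state: float = 0.5,      # internal state, clamped to [0, 1]
):
    """Return the resistance after each applied voltage sample."""
    resistances = []
    for v in voltages:
        resistance = r_on * state + r_off * (1.0 - state)
        current = v / resistance
        # The state moves with the charge that has flowed through the device.
        state = min(1.0, max(0.0, state + drift * current * dt))
        resistances.append(r_on * state + r_off * (1.0 - state))
    return resistances


# Apply a positive voltage for 100 steps, then remove power for 50 steps.
trace = simulate_memristor([1.0] * 100 + [0.0] * 50)

print(f"after first pulse:  {trace[0]:.1f} ohms")
print(f"after 100 pulses:   {trace[99]:.1f} ohms")
print(f"after power is off: {trace[-1]:.1f} ohms")
```

In this sketch the resistance falls while voltage is applied and then stays where it is once the voltage is removed, which is the "memory" the name refers to.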

However, the transition is not seamless. Y acknowledges that current CMOS-based neuromorphic chips like IBM's TrueNorth face a tradeoff between energy efficiency and accuracy. "If we want the model to give us competitively accurate results... we need to use more power." This suggests that while the theoretical benefits are massive, the commercial viability of these systems depends on overcoming significant engineering hurdles. The industry is at a crossroads between refining the old architecture and betting on the new.

"The brain's powerful capabilities - achieved at low power - is the single most significant motivation for building neuromorphic hardware today."

Bottom Line

Jon Y's analysis effectively shifts the conversation from software algorithms to the physical constraints of hardware, arguing that the next leap in AI requires a fundamental rethinking of how computers are built. The strongest part of the argument is the exposure of the energy inefficiency inherent in the Von Neumann architecture, a cost that will only grow as AI models scale. The weakest part, however, is the immense difficulty of manufacturing analog, chaotic systems at the scale of modern silicon; the path from memristor theory to mass-market chips remains steep. Readers should watch for breakthroughs in non-volatile memory technologies, as these will likely determine whether the industry can escape the bottleneck or remain trapped by it.