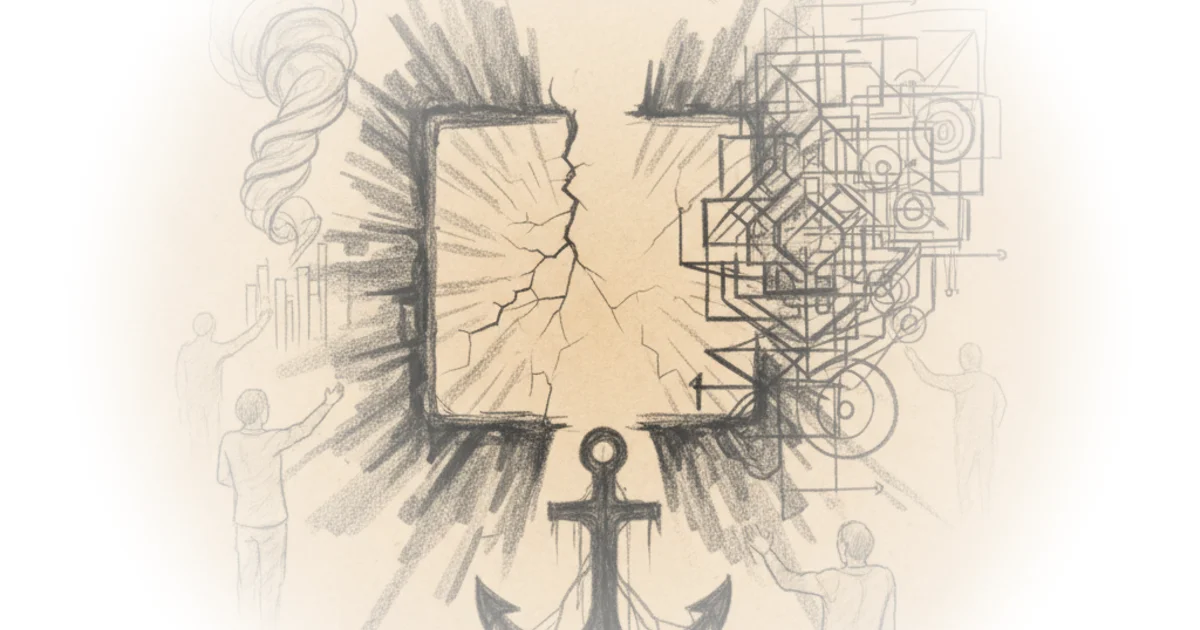

Ross Haleliuk exposes a brutal paradox at the heart of modern security: the most effective work is the work that leaves no trace, and therefore, no credit. By anchoring his analysis in a two-decade-old engineering management study, he reveals that the industry's obsession with "heroic" firefighting isn't just a cultural quirk—it is a systemic trap that actively degrades organizational resilience. For leaders drowning in alerts, this piece offers a rare diagnosis of why their best efforts to prevent disasters are being punished by the very metrics designed to measure success.

The Vicious Cycle of "Working Harder"

Haleliuk begins by dismantling the intuitive belief that more effort equals better security. He draws on the foundational work of Nelson Repenning and John Sterman to illustrate a counterintuitive dynamic: immediate productivity gains often mask long-term decay. "Working harder leads to immediate performance improvements," Haleliuk notes, "but over time, things get worse." This "better before worse" trajectory explains why security teams are perpetually exhausted; they are rewarded for putting out fires rather than installing sprinklers.

The author argues that when teams are bogged down by manual, repetitive tasks, they lack the bandwidth to invest in foundational hygiene or architectural changes. This creates a feedback loop where the system becomes increasingly fragile. "The more they firefight, the more fragile the system becomes, and the more fragile the system, the more they need to firefight to keep it from falling apart," Haleliuk writes. This framing is particularly sharp because it shifts the blame from individual burnout to structural incentives. The real cost isn't just fatigue; it's the accumulation of invisible technical debt that eventually makes the system unfixable through sheer effort alone.
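The feedback loop described above can be made concrete with a toy simulation. This is only a minimal sketch, not Repenning and Sterman's actual model: the function name `simulate`, the parameters `improvement_share`, `erosion`, and `learning`, and all numeric values are hypothetical, chosen purely for illustration. It assumes that output comes from hours spent on the work itself scaled by capability, that capability erodes a little every period, and that only improvement work rebuilds it.

```python
def simulate(improvement_share, periods=40, capability=0.6,
             erosion=0.05, learning=0.5):
    """Return per-period output for a fixed split of effort.

    All parameters are illustrative assumptions, not measured values.
    """
    outputs = []
    for _ in range(periods):
        # Output comes from the hours not spent on improvement, scaled by capability.
        outputs.append(capability * (1.0 - improvement_share))
        # Capability erodes each period and is rebuilt only by improvement work.
        capability += (learning * improvement_share * (1.0 - capability)
                       - erosion * capability)
        capability = min(max(capability, 0.0), 1.0)
    return outputs


# "Working harder": every hour goes to firefighting and delivery.
harder = simulate(improvement_share=0.0)
# "Working smarter": 30% of hours go to hygiene and architecture.
smarter = simulate(improvement_share=0.3)

# First period in which the "smarter" team out-produces the "harder" one.
crossover = next(t for t in range(len(harder)) if smarter[t] > harder[t])

print("first period:", round(harder[0], 3), "vs", round(smarter[0], 3))
print("last period: ", round(harder[-1], 3), "vs", round(smarter[-1], 3))
print("smarter overtakes harder at period", crossover)
```

Under these assumptions the all-firefighting team starts ahead and then declines steadily, while the team that sets time aside for improvement starts behind and ends ahead. The exact crossover point depends entirely on the made-up parameters; the shape of the two curves is the point.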

"Nobody ever gets credit for fixing problems that never happened."

The Illusion of Shortcuts

The commentary then pivots to the seductive nature of shortcuts. Haleliuk explains that when under pressure, teams inevitably cut corners on documentation, threat modeling, and root-cause analysis. These decisions feel rational in the moment, offering a "grace period" of increased output. However, the consequences are delayed. "Shortcuts are tempting because there is often a substantial delay between cutting corners and the consequent decline in capability," he observes.

This delay is the critical failure point. Just as a manager might skip preventive maintenance to meet a quarterly target, security teams skip patching or ignore identity and access management exceptions to ship features faster. The result is a hidden accumulation of risk that only becomes visible when it is too late. Haleliuk's analysis here is compelling because it reframes "bad security" not as a lack of skill, but as a predictable outcome of prioritizing short-term velocity over long-term stability. Critics might argue that in a fast-moving startup environment, some shortcuts are unavoidable, but Haleliuk's point stands: without a conscious strategy to repay that debt, the system will eventually collapse under its own weight.

The Fundamental Attribution Error

Perhaps the most psychologically astute part of the piece is its application of the "fundamental attribution error" to security breaches. Haleliuk points out that when things go wrong, humans instinctively look for a person to blame rather than a process to fix. "If you believe the system is underperforming due to low capability, then you should focus on working smarter," he writes, contrasting this with the default assumption that workers are "lazy, undisciplined, or just shirking."

In the security context, this manifests as executives blaming "bad security teams" for systemic failures, or teams blaming "dumb users" for clicking phishing links. The reality, as Haleliuk suggests, is often a complex web of chronic underinvestment and poor process design. This attribution error prevents organizations from learning from their mistakes. Instead of fixing the broken system that allowed the breach, they double down on training users or hiring more analysts, perpetuating the cycle of failure. This connects to a broader pattern in organizational behavior: in fields like aviation and healthcare, the habit of blaming individuals is often cited as one reason safety initiatives struggle to take root until a catastrophe forces a systemic review.

The Toxic Hero Culture

The article culminates in a critique of the "hero culture" that rewards last-minute problem solving over prevention. Haleliuk quotes an engineer who perfectly captures the tragedy of the situation: "Nobody ever gets credit for fixing problems that never happened." Because organizations promote those who save the day, they inadvertently incentivize the very chaos that creates the need for heroes.

"As organizations grow more dependent on firefighting... they reward and promote those who, through heroic efforts, manage to save troubled projects," Haleliuk explains. This creates a leadership class of "war heroes" who are ill-equipped to build preventative systems because their entire career has been defined by reacting to crises. The author suggests that this is not unique to security but is a universal organizational flaw, visible in manufacturing and healthcare as well. The only way to break this cycle, Haleliuk argues, is for leaders to grant security teams the political capital to introduce friction and prioritize "working smarter," even if it means a temporary dip in visible productivity.

"Prevention means friction, so it's not only that 'Nobody ever gets credit for fixing problems that never happened', but it's also that nobody can afford to introduce more friction."

Bottom Line

Haleliuk's strongest contribution is his ability to translate a decades-old management theory into a visceral diagnosis of the modern security crisis, making the case that the industry's burnout is a feature of its incentives, not a bug of its people. The argument's vulnerability lies in the difficulty of execution: while the diagnosis is clear, convincing a board to accept short-term productivity losses for long-term stability remains a profound political challenge. Leaders should watch the emerging shift toward "preemptive cybersecurity," but until the reward structure changes, the cycle of firefighting will likely persist.