The world of AI skills is collapsing beneath our feet every few months — and most people are still learning skills that expired last quarter. That’s the provocative claim from Nate Jones, who argues we’ve been teaching the wrong thing.

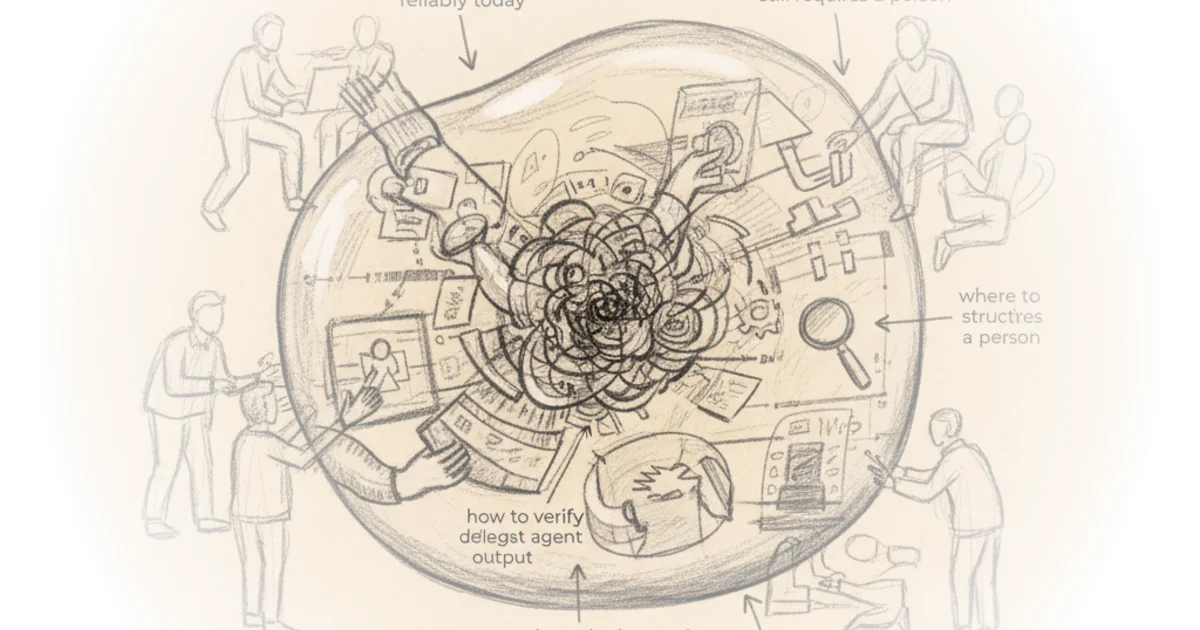

Jones makes his case with a vivid metaphor: imagine AI capability as an expanding bubble. The air inside represents everything AI agents can do reliably today; the outside is what still requires human judgment. But this bubble keeps inflating with every model release, every capability jump — and skills that once sat on the surface now sit comfortably inside. Someone who learned to work at the November boundary is now standing inside it, doing work an agent handles better.

But here’s the paradox no one talks about: as the bubble expands, the surface area actually grows. There’s more boundary to operate at, not less. More seams between human and agent work. More judgment calls about what crosses that membrane and what doesn’t. Every previous workforce skill — literacy, numeracy, computer literacy, coding — had a finish line. AI doesn't.

There's more boundary to operate at, more places for human judgment, not less.

Jones calls this new skill frontier operations: sensing where the edge sits, structuring handoffs between AI and human, maintaining models of how agents fail at the current capability level, forecasting where the surface expands next, deciding where human attention creates value it didn't need to create before. It's not AI literacy — that's just knowing what a language model is. It's not prompt engineering — that's one technique inside one component. It's the first workforce skill in history that expires on a roughly quarterly cycle.

Boundary Sensing

The first of five persistent skills is boundary sensing: maintaining accurate, up-to-date operational intuition about where the human-agent boundary sits for a given domain. This isn't static knowledge; it updates with every model release. Opus 4.5 couldn't reliably retrieve information from deep in a long document. Three months later, Opus 4.6 scores 93% on retrieval at 256,000 tokens. A person who calibrated their boundary sense against the November model and hasn't updated is now either overrusting or underusing the February model — and both errors are expensive.

For a product manager, good boundary sensing looks like letting an agent draft a competitive analysis but realizing that same agent will miss political dynamics between executives it's never observed. So the product manager hands the market sizing and feature comparison to the agent — tasks now safely inside the AI bubble — while reserving stakeholder dynamics for themselves.

A marketing director might use an agent for ideation and first drafts, then edit brand voice copy personally and avoid asking for iterations past version two. Bad boundary sensing looks like trusting everything and getting burned by hallucinations, or trusting nothing and doing everything manually. Most commonly, it looks like calibrating six months ago and not noticing the boundary moved.

Seam Design

The second skill is seam design: structuring work so transitions between human and agent phases are clean, verifiable, and recoverable. This is architectural — closer to how a good engineering manager thinks about system boundaries than individual tasks.

A software lead might structure the handoff so ticket triage and routing go to the agent while architectural decisions stay with humans. The boundary is defined by specific artifacts: ticket content, codebase structure, org chart. Specific verification checks at that seam ensure the handoff is clean. Without those, you either go end-to-end with agent runs and lack verification infrastructure, or you have humans manually reviewing things the agent now handles better.

A consulting engagement manager might break a strategy project into research (agent-led with human-defined scope), synthesis (human-led with agent-generated first pass frameworks), and client presentation (human-led with agent-generated slide drafts). The seam between research and synthesis is a structured deliverable: a fact base with source citations the human can spot-check. Six months ago, that scene would have required manual fact verification on every data point, but the agent's citation accuracy improved dramatically — so it made sense to move the scene.

Failure Model Maintenance

The third skill is failure model maintenance: maintaining an accurate, current mental model of how agents fail at the specific texture and shape of current capability levels. Early language models failed obviously — garbled text, wrong facts, incoherent reasoning. Current frontier models fail subtly: correct-sounding analysis built on a misunderstood premise, plausible code that handles happy path and breaks on edge cases, research summaries 98% accurate while the remaining 2% are confidently fabricated in ways very difficult to distinguish from accurate parts.

The skill isn't skepticism — that's necessary but not useful. It's maintaining a differentiated failure model. For task type A, agent's failure mode is X; here's how to check for it. For task type B, failure mode is Y; different check.

A corporate counsel knows an agent reviewing contracts will catch boilerplate issues but miss indemnification clauses or non-standard termination language and might miss the interaction between a specific liability cap and section 7 in an exhibit. The current failure model says trust the boilerplate scan, manually review cross references between liability provisions and exhibits — very different from reading the whole thing again.

A data scientist knows an agent generating Python will handle pandas transformations and standard statistical tests reliably but produce plausible-sounding nonsense when data has messy edge cases like mixed formats or implicit nulls. The failure model says verify data cleaning steps and column semantics, then trust downstream analysis if cleaning is correct.

Capability Forecasting

The fourth skill is capability forecasting: making reasonable short-term predictions about where the bubble boundary will move next and investing learning and workflow development accordingly. This isn't predicting AI's long-term future — no one can do that reliably. It's reading trajectory well enough to make sensible six-to-twelve-month bets about what likely becomes agent territory.

A person with good capability forecasting in early 2025 could look at coding agents gaining thirty minutes of sustained autonomy, see how it scales from there, and invest more in code review and specification skills rather than raw coding. A UX researcher watching agents get better at survey design and qualitative coding can start investing in interpretive synthesis — turning coded data into product insights that shift a roadmap.

The skill is probabilistic positioning, not linear prediction. Bad forecasting looks like chasing every new tool (exhausting with no compound returns) or ignoring developments until forced to catch up. More often, it looks like investing heavily in learning a particular platform whose advantage might evaporate when the next model shifts and either deletes that part of the workflow or provides leverage that platform can't deliver alone.

Leverage Calibration

The fifth skill is leverage calibration: making high-quality decisions about where to spend human attention, now the scarcest resource. As agent capabilities increase, the bottleneck shifts from getting things done to knowing what's worth a human's attention.

Even McKinsey has a framework describing two to five humans supervising fifty to one hundred agents running an end-to-end process — and that's not just McKinsey; it's emerging across industries. That roughly ten-to-one ratio makes attention math very clear: with one hundred streams of agent output and eight hours a day, you cannot review everything at equal depth.

An engineering manager overseeing agent-assisted development across five teams develops hierarchical attention allocation. Most agent-generated code flows through automated test suites and gets linted on its own. A smaller subset like billing or data pipelines might get flagged for human code review. Only architecture decisions get full human attention — a reversal of how most managers currently operate.

Critics might note that frontier operations sounds great in theory but faces a fundamental problem: the quarterly expiration cycle means any training curriculum is obsolete before it lands. Our entire workforce development infrastructure assumes the target stands still; this one doesn't. The gap between what the economy needs and what we've built to teach is massive — and unlike previous skill shifts, there's no steady-state version of "proficient" to aim for.

Bottom Line

Jones's core argument is compelling: frontier operations is real, it's valuable, and existing training infrastructure can't handle it. His biggest vulnerability is that his five skills sound like distinct capabilities but read more like a checklist of behaviors — useful as diagnosis, less clear as curriculum. The piece's power comes from the paradox he identifies: we're trying to teach an expanding surface skill with fixed-destination methods, and that's exactly why most AI training fails.