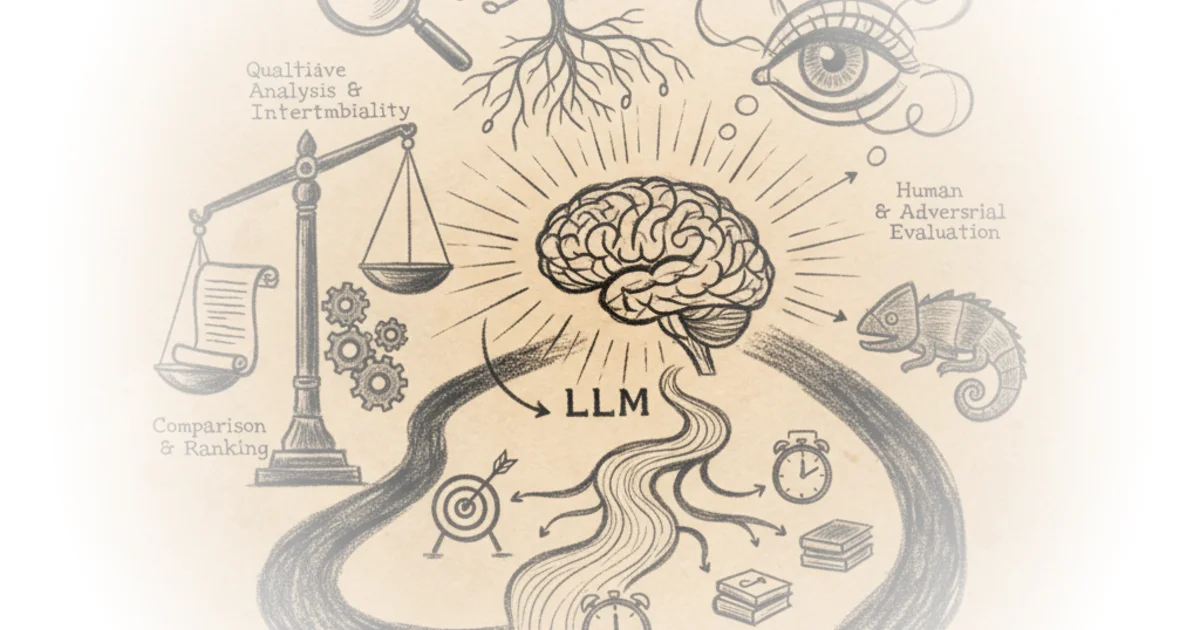

In a field drowning in hype and opaque leaderboards, Sebastian Raschka offers a rare, grounded map for navigating the chaotic landscape of large language model evaluation. Rather than accepting marketing claims at face value, he dissects the four dominant methodologies—multiple-choice benchmarks, verifiers, leaderboards, and LLM judges—revealing how each distorts or clarifies our understanding of AI progress. This is not just a technical manual; it is a necessary corrective to the industry's tendency to conflate high scores with genuine intelligence.

The Illusion of the Multiple-Choice Test

Raschka begins by dismantling the reliance on standardized tests like the Massive Multitask Language Understanding (MMLU) dataset, which has become the de facto metric for model capability. He notes that while these benchmarks are "simple and useful diagnostics," they suffer from a critical blind spot. "A limitation of multiple‑choice benchmarks like MMLU is that they only measure an LLM’s ability to select from predefined options and thus is not very useful for evaluating reasoning capabilities," he writes. This is a crucial distinction: a model can memorize the correct letter without ever understanding the underlying logic.

He illustrates this by walking through code that forces a model to predict a single letter (A, B, C, or D) for a math problem. The result is often a binary pass or fail that masks the nuance of the model's thought process. "It does not capture free-form writing ability or real-world utility," Raschka argues, highlighting that a high score on a static dataset might simply indicate that the model has "forgotten" less knowledge compared to its base version, rather than demonstrating superior reasoning. This framing is effective because it shifts the focus from the result to the mechanism of evaluation, exposing how easily a model can game a system designed to test human knowledge recall.
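The letter-prediction setup described above can be sketched in a few lines. This is a minimal illustration, not Raschka's code: `format_prompt`, `predict_letter`, `accuracy`, and the `always_b` stub are hypothetical names, and the per-letter scores stand in for whatever a real model would assign to each candidate letter.

```python
from typing import Callable, Dict, List

CHOICES = ("A", "B", "C", "D")


def format_prompt(question: str, options: List[str]) -> str:
    """Render a question and its four options, ending with an answer cue."""
    lines = [question]
    lines += [f"{letter}. {text}" for letter, text in zip(CHOICES, options)]
    lines.append("Answer:")
    return "\n".join(lines)


def predict_letter(letter_scores: Dict[str, float]) -> str:
    """Keep only the highest-scoring letter; any intermediate reasoning is discarded."""
    return max(CHOICES, key=lambda letter: letter_scores[letter])


def accuracy(examples: List[dict], score_fn: Callable[[str], Dict[str, float]]) -> float:
    """Fraction of examples where the predicted letter matches the gold letter."""
    correct = 0
    for ex in examples:
        scores = score_fn(format_prompt(ex["question"], ex["options"]))
        correct += predict_letter(scores) == ex["answer"]
    return correct / len(examples)


# Hypothetical stand-in for a model: ignores the prompt and always prefers "B".
def always_b(prompt: str) -> Dict[str, float]:
    return {"A": -2.0, "B": -0.5, "C": -1.5, "D": -3.0}


examples = [
    {"question": "What is 7 * 6?", "options": ["36", "42", "48", "54"], "answer": "B"},
    {"question": "What is 9 + 8?", "options": ["15", "16", "17", "18"], "answer": "C"},
]

print(accuracy(examples, always_b))  # 0.5
```

The stub never reads the question, yet it scores 50% on this toy set. Each example collapses to a single right-or-wrong letter, so the score carries no information about how the answer was reached.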

Critics might note that multiple-choice tests remain the only scalable way to evaluate models across dozens of subjects simultaneously, making them a pragmatic, if imperfect, necessity. However, Raschka's insistence that a low score can highlight "potential knowledge gaps" while a high score doesn't guarantee practical strength serves as a vital warning against over-interpreting leaderboard positions.

A high MMLU score doesn't necessarily mean the model is strong in practical use, but a low score can highlight potential knowledge gaps.

The Promise and Peril of Verifiers

Moving beyond static tests, Raschka introduces verification-based approaches as a more robust alternative for specific domains like mathematics and coding. Here, the model generates a free-form answer, and an external tool, such as a code interpreter or calculator, determines whether the final result is correct. "This approach can introduce additional complexity and dependencies, and it may shift part of the evaluation burden from the model itself to the external tool," he admits. Yet he contends that the trade-off is worth it because it allows for the generation of an "unlimited number of math problem variations programmatically."
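As a rough sketch of what generating problem variations programmatically could look like, the snippet below builds arithmetic questions together with their reference answers. The function name `make_problems`, the question template, and the operand range are assumptions chosen for illustration, not details taken from Raschka's article.

```python
import random
from typing import List, Tuple


def make_problems(n: int, seed: int = 0) -> List[Tuple[str, int]]:
    """Return n (question, reference_answer) pairs, reproducible for a given seed."""
    rng = random.Random(seed)  # local generator, so global random state is untouched
    ops = {
        "+": lambda a, b: a + b,
        "-": lambda a, b: a - b,
        "*": lambda a, b: a * b,
    }
    problems = []
    for _ in range(n):
        a, b = rng.randint(2, 99), rng.randint(2, 99)
        symbol = rng.choice(sorted(ops))
        # The reference answer is computed, not hand-labeled, so it is correct by construction.
        problems.append((f"What is {a} {symbol} {b}?", ops[symbol](a, b)))
    return problems


for question, answer in make_problems(3, seed=42):
    print(question, "->", answer)
```

Because the answers are computed rather than annotated, changing the seed yields a fresh test set at no labeling cost.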

This method represents a significant shift in how we measure progress. Instead of asking the model to guess the right letter, we ask it to solve the problem and then use a deterministic tool to check the work. Raschka explains that this has "become a cornerstone of reasoning model evaluation and development," since the model must produce its own answer rather than pick one from a list. The argument lands strongly here: by decoupling the generation of the solution from the verification of the answer, we get a clearer picture of whether the model actually arrived at a correct result or just produced a plausible-looking conclusion.
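The solve-then-check idea can be illustrated with a small final-answer verifier. This is a sketch under stated assumptions rather than the article's implementation: it assumes the last number in the response is the model's final answer, and `extract_final_answer` and `verify` are hypothetical helper names.

```python
import re
from fractions import Fraction
from typing import Optional, Union

# Integers, decimals, or simple fractions such as "42", "-3.5", "1/2".
NUMBER = re.compile(r"-?\d+(?:\.\d+)?(?:/\d+)?")


def extract_final_answer(response: str) -> Optional[Fraction]:
    """Treat the last number in a free-form response as the final answer (an assumption)."""
    matches = NUMBER.findall(response)
    if not matches:
        return None
    try:
        return Fraction(matches[-1])
    except (ValueError, ZeroDivisionError):
        # Malformed tokens such as "1.5/2" or "3/0" count as no answer.
        return None


def verify(response: str, reference: Union[int, str, Fraction]) -> bool:
    """Deterministic check: exact equality between extracted and reference values."""
    answer = extract_final_answer(response)
    return answer is not None and answer == Fraction(reference)


print(verify("First, 6 * 7 = 42. So the answer is 42.", 42))  # True
print(verify("I think it is 41.", 42))                        # False
print(verify("The result is 0.5", "1/2"))                     # True
```

Comparing exact `Fraction` values avoids floating-point tolerance decisions, so "0.5" and "1/2" count as the same answer. The check looks only at the final number; the steps leading up to it are not inspected.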

However, the reliance on external tools introduces a new variable. If the verifier itself is flawed, or if the domain is too complex to be reduced to a deterministic check (like creative writing or nuanced policy analysis), the evaluation breaks down. Raschka acknowledges this limitation, noting that the method is best suited to domains that can be "easily (and ideally deterministically) verified."

The Road Ahead

As the field pivots toward "reasoning models," Raschka's breakdown suggests that the old metrics are becoming obsolete. He points out that while reasoning is "one of the most exciting and important recent advances," it is also "one of the easiest to misunderstand if you only hear the term reasoning and read about it in theory." His hands-on approach, complete with from-scratch code examples, serves as a reminder that true understanding requires digging into the mechanics, not just reading the headlines.

The piece ultimately argues that we need a more nuanced mental map to interpret the flood of new research. "Having a clear mental map of these main approaches makes it much easier to interpret benchmarks, leaderboards, and papers," Raschka writes. This is the piece's most valuable contribution: it empowers the reader to look at a model's score and ask not just "how high is it?" but "what exactly does this number measure, and what does it miss?"

Bottom Line

Raschka's analysis is a masterclass in demystifying AI evaluation, successfully arguing that no single metric can capture the complexity of modern language models. His strongest move is exposing the gap between multiple-choice accuracy and genuine reasoning, a distinction that is often lost in the rush to publish new leaderboard records. The biggest vulnerability remains the lack of a perfect, universal evaluation framework, but by clarifying the trade-offs of each method, he provides the best possible tool for navigating the current uncertainty.