Dwarkesh Patel

## The Age of Scaling Is Over

In the world of artificial intelligence, something strange is happening. The biggest companies are investing roughly 1% of GDP into AI, resources that would have felt like science fiction decades ago, but for most people, nothing has actually changed. That's the paradox at the heart of this conversation with Ilya Sutskever.

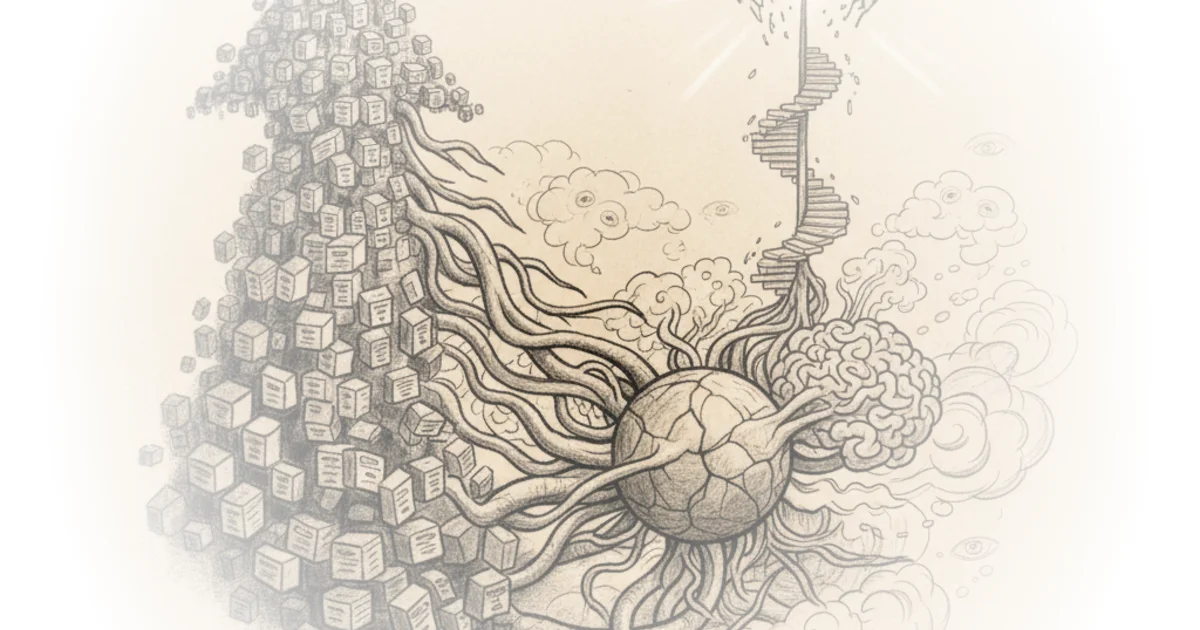

Sutskever, a pioneer in deep learning and co-founder of OpenAI, makes a bold claim: we're moving from an era where scaling existing techniques was enough to drive progress, into something far more uncertain—an age of genuine research. The shift isn't just semantic. It's a fundamental change in how we think about what these models can become.

### The Puzzle of Smarter Models

One of the most confusing things about current AI systems is how to reconcile their remarkable performance on evaluations with their relatively modest economic impact. The models do incredibly well on tests—sometimes outperforming human experts—yet the economy hasn't felt that shift yet.

Sutskever points out a striking example: when you use AI coding tools, something odd happens. You can ask a model to fix a bug, and it introduces a second bug. Then you ask it to fix that new bug, and somehow it brings back the first one. You can alternate between bugs forever.

How is this possible? The models seem smart enough for evaluations but struggle with basic tasks in the real world.

Sutskever offers two explanations. First, RL training may make the models too single-minded: highly capable in narrow domains, yet unaware of the broader context. Second, pre-training uses essentially all the data available, so nobody has to choose what goes in. Reinforcement learning training, by contrast, forces companies to choose which environments to include, and if those choices are inspired by the evaluations themselves, strong eval scores need not carry over to real-world tasks.

### The Two Students

Sutskever introduces a human analogy that makes this clearer:

Imagine two students wanting to become great programmers. Student one trains like a competitive programmer: 10,000 hours of practice, solving every problem, memorizing proof techniques, mastering algorithms. They become exceptional at coding competitions.

Student two puts in maybe 100 hours of practice, explores more broadly, and still does well.

Which student succeeds more in their career? The second one.

The models today are much more like the first student. Companies say: we want our model to do great at coding competitions, so let's give it every single competitive programming problem ever created, augment the data, and train on that. Now you have an excellent competitive programmer—but this level of preparation doesn't necessarily generalize to other things.

### What Pre-Training Actually Does

The distinction between pre-training and reinforcement learning matters more than most people realize.

Pre-training's main strength is sheer volume—you can include basically all the world's data without carefully selecting what goes in. It's like the 10,000 hours of practice you get for free because that knowledge already exists somewhere in the pre-training distribution.

But here's what's surprising: a 15-year-old human has seen only a tiny fraction of that data and knows far less than these models, yet what they do know, they know much more deeply. At that age, a person already avoids the kinds of mistakes these models make.

Sutskever also explores whether evolution is an analogy for pre-training: three billion years of search producing human intelligence. But a case of brain damage points to something else at work. A person who lost emotional processing remained articulate and did fine on tests, yet could no longer make decisions. They struggled with basic choices and made terrible financial decisions.

This suggests emotions might be something you can't get from pre-training alone—some kind of value function that tells you what to optimize for.

### The Problem With Value Functions

In reinforcement learning, when you train an agent, you give it a problem and let it take thousands of actions. Then you score the solution and use that signal to update every action in the trajectory.

The problem: if you're doing something that takes a long time to solve, you learn nothing until you actually finish solving it.
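
To make the delay concrete, here is a minimal sketch of outcome-only credit assignment. It is an illustration under assumptions, not the training setup of any particular lab: the function name `outcome_only_signals`, the 1,000-step trajectory, and the score of 1.0 are all hypothetical.

```python
from typing import List, Optional


def outcome_only_signals(actions: List[str], final_score: Optional[float]) -> List[float]:
    """Return one learning signal per action, using only the final score.

    final_score is None while the task is still unfinished; in that case
    there is nothing to learn from yet.
    """
    if final_score is None:
        return []
    # Every action in the trajectory is credited with the same final score.
    return [final_score for _ in actions]


# Hypothetical 1,000-step attempt at a task.
trajectory = [f"action_{i}" for i in range(1000)]

unfinished = outcome_only_signals(trajectory, final_score=None)
finished = outcome_only_signals(trajectory, final_score=1.0)
print(len(unfinished), len(finished))  # prints: 0 1000
```

Until the final score exists, the list of signals is empty. Once it exists, all 1,000 actions receive the same number, whether or not they contributed to the result.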

Value functions help here. In chess, when you lose a piece, you know immediately that what preceded it was bad—you don't need to play the entire game to update your behavior. The value function lets you short-circuit learning before reaching the end of a long chain of reasoning.
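
As a rough illustration of the chess example, the sketch below learns state values with TD(0), a standard textbook method. The source does not say which method Sutskever has in mind, so this is a swapped-in technique, and the toy games, state names, and parameters are all hypothetical.

```python
from collections import defaultdict


def td0_values(episodes, alpha=0.5, gamma=1.0, sweeps=200):
    """Estimate state values with TD(0).

    Each episode is a list of (state, reward, next_state) transitions;
    next_state is None on the last transition of the episode.
    """
    values = defaultdict(float)
    for _ in range(sweeps):
        for episode in episodes:
            for state, reward, next_state in episode:
                # Bootstrap from the current estimate of the next state.
                bootstrap = 0.0 if next_state is None else values[next_state]
                td_error = reward + gamma * bootstrap - values[state]
                values[state] += alpha * td_error
    return dict(values)


# Hypothetical toy games: losing a piece leads, a few moves later, to a loss.
blunder_game = [
    ("start", 0.0, "piece_down"),
    ("piece_down", 0.0, "losing_endgame"),
    ("losing_endgame", -1.0, None),
]
solid_game = [
    ("start", 0.0, "material_even"),
    ("material_even", 0.0, "drawn_endgame"),
    ("drawn_endgame", 0.0, None),
]

values = td0_values([blunder_game, solid_game])
print(round(values["piece_down"], 3), round(values["material_even"], 3))
```

After training, the value of `piece_down` sits close to -1 while `material_even` stays at 0. A move that lands in `piece_down` can therefore be judged bad as soon as it is made, without playing the game to the end.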

The same idea applies to domains like math or programming. If you've spent a thousand steps pursuing a solution direction and conclude it's unpromising, a value function could send that signal back to the step where you chose the direction, a thousand steps earlier, instead of waiting until you finally give up. Yet value functions don't play a prominent role in current AI research.

### Moving Forward

Sutskever's core argument is clear: we need to stop simply stacking environments and diversity. Instead, we should figure out approaches that let models learn from one environment and improve on something else. The age of scaling taught us important lessons about what works. But the next era requires genuine research into how these systems actually reason.

"If you combine this with generalization being inadequate, it explains a lot of what's going on—the disconnect between eval performance and real-world performance that we don't even fully understand yet."

Critics might note that focusing too narrowly on coding competitions or specific benchmarks risks missing broader capabilities. The evaluation metrics themselves might be too narrow, and expanding them might not capture what actually matters for deployment.

### Bottom Line

Sutskever's strongest insight is recognizing that scale alone won't produce generalizable intelligence—the analogy of the 100-hour student suggests something more fundamental about how learning works. His vulnerability is less technical and more conceptual: we don't yet know what those broader capabilities should look like, or how to design environments that test for them instead of just stacking more of the same.

The age of research isn't just a new era—it's a confession that we have no idea how to get where we're going.