Rohit Krishnan cuts through the noise of a chaotic week in artificial intelligence to reveal a far more unsettling truth: the conflict isn't about who is the "good guy," but about the fundamental impossibility of a private company acting as the moral arbiter for the state's most lethal tools. While the media fixates on personality clashes between tech CEOs and the Department of War, Krishnan argues that the real story is the collision of two incompatible operating systems: one based on contractual red lines, the other on the absolute necessity of operational control.

The Illusion of the Red Line

Krishnan observes that the recent fallout between Anthropic and the Department of War was less a policy disagreement and more a breakdown in expectations regarding who holds the keys. "Anthropic said no we won't budge, DoW got angry, and threatened to cut them off and declare them a supply chain risk," he writes. This escalation highlights a critical friction point: the government views the technology as a sovereign asset, while the company views it as a product with ethical constraints.

The author suggests that the dispute centered on vague concepts like "mass surveillance" and "autonomous weapons," which are difficult to enforce in a live combat scenario. "You ask a dozen people, as Zvi did, you get a dozen different responses," Krishnan notes regarding the ambiguity of these terms. This lack of specificity creates a dangerous vacuum where legal loopholes can be exploited. Critics might note that the Department of War's insistence on "all lawful use" is a standard contractual position, but Krishnan rightly points out that legality does not always equate to ethical acceptability or safety.

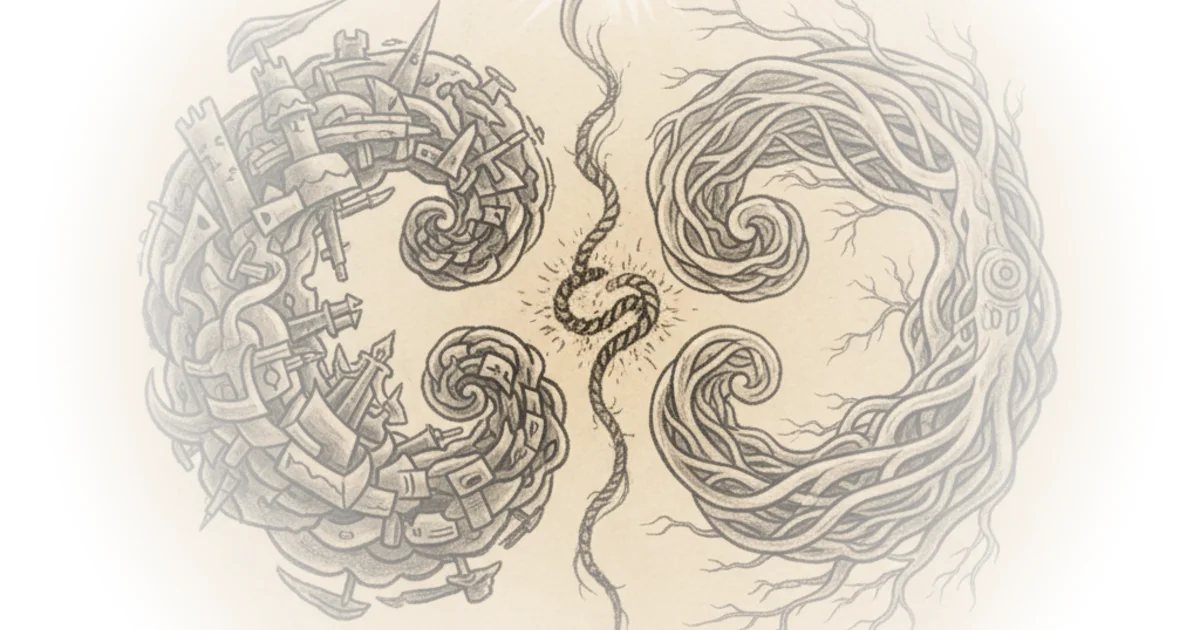

The core of the argument rests on the distinction between two models of deployment. One model relies on trust and upfront contracts, while the other relies on active, real-time oversight. "One has more contractual protections and limited operational visibility, the other has lower contractual protections and higher operational visibility," he explains. This distinction is crucial because it determines who actually controls the outcome when a missile is heading toward a target.

"You cannot call your technology a major national security risk in dire need of regulation and then not think the DoD would want unfettered access to it."

The Sovereignty of the Machine

Krishnan reframes the narrative from a corporate spat to a constitutional crisis of sorts, where the executive branch refuses to be bound by the terms of service of a private vendor. He draws a parallel to historical precedents where the government asserted dominance over critical infrastructure, noting that "the US has nationalised or regulated whole industries for simpler reasons. Telephone lines, rails, steel mill attempted seizure, these aren't small things." This historical context, reminiscent of the broad executive powers debated during the framing of Article Two of the Constitution, suggests that the Department of War's reaction was not an anomaly but a predictable assertion of state power.

The author argues that the Department of War's position is rooted in a pragmatic, if chilling, reality: they cannot afford to pause a military operation to ask a CEO for permission. "The DoW would also want the power to determine courses of action, and can't leave operational control in the hands of another," Krishnan writes. This creates a paradox where the very companies calling for AI safety and regulation are the ones being asked to surrender their safety guardrails to the state.

Krishnan's analysis of OpenAI's contrasting approach is particularly insightful. While Anthropic tried to enforce red lines via contract, OpenAI seemingly opted for a model in which it retains control over the deployment stack. "OpenAI said sure, we agree to all lawful use, but note these specific laws and regulations, and we will control the deployment of our models, using our people," he paraphrases. This shift from a permissions-based contract to an execution-based control mechanism may be the only viable path forward, yet it raises its own questions about the concentration of power in the hands of a few tech leaders.

The End of Privacy and the Genie

The commentary takes a darker turn as Krishnan considers the long-term implications for civil liberties. He posits that the era of digital privacy is effectively over, not because of a single policy, but because the technology itself has made anonymity impossible. "I am extremely uncomfortable with the fact that we can just purchase commercially available data on almost everyone," he admits. The ability to reverse-engineer identities and track individuals is no longer the exclusive domain of intelligence agencies.

The author warns that the genie cannot be put back in the bottle, regardless of the regulatory framework. "Genies don't tend to go back into bottles, and this one has powerful forces keeping it out," he writes. This inevitability forces a difficult question: if the technology is here to stay, how do we structure the relationship between the state and the private sector to prevent abuse without crippling national defense?

Krishnan suggests that the current tribal politics surrounding these issues are a distraction from the structural reality. "Unless we know what we want to do with the attention, tribal politics is going to overwhelm it all," he argues. The focus on whether a specific CEO is "opportunistic" or "virtuous" misses the point that the system itself is broken.

"Democracy is incredibly annoying but really, what other choice do we have!"

Bottom Line

Krishnan's strongest contribution is his refusal to romanticize the role of private companies as the guardians of democracy against the state, instead exposing the futility of trying to contractually bind the executive branch's operational needs. The argument's vulnerability lies in its somewhat fatalistic acceptance that privacy is gone, potentially underestimating the power of new regulatory frameworks to impose technical constraints. Readers should watch for how the Department of War's new contracts with other AI firms will codify these "execution control" mechanisms, as this will define the future of autonomous warfare.