Erik Hoel offers a diagnosis for our cultural malaise that bypasses the usual suspects of corporate greed or lazy audiences, proposing instead that we are suffering from a technical glitch: cultural overfitting. By applying a concept from machine learning to the history of art and media, Hoel suggests that our society has become so efficient at optimizing for specific rewards—box office returns, social media clicks, algorithmic approval—that it has lost the ability to generalize, resulting in a sterile, repetitive output that feels eerily like a copy of a copy. This is not just a complaint about sequels; it is a structural argument about how efficiency itself can kill creativity.

The Decline of Deviance

Hoel begins by separating today's complaint from older ones: people have always complained about culture becoming "stupid," but the specific grievance that it has become "stagnant" is surprisingly recent. He leans heavily on Adam Mastroianni's theory that the decline of deviancy in daily life correlates with a decline in cultural risk-taking. As Hoel notes, "[People are] also less likely to smoke, have sex, or get in a fight, less likely to abuse painkillers, and less likely to do meth, ecstasy, hallucinogens, inhalants, and heroin." The argument posits that the "weirdos and freaks who actually move culture forward" have vanished because modern life is simply too comfortable and safe to encourage the kind of chaotic experimentation that breeds innovation.

This framing is compelling because it shifts the blame from a lack of talent to a surplus of safety. However, critics might argue that this view romanticizes the chaos of drug use and violence as necessary fuel for art, ignoring the many geniuses who created masterpieces without such self-destruction. Yet, the core insight—that a risk-averse society produces risk-averse art—resonates deeply in an era where every creative decision is vetted by focus groups and financial models.

The Efficiency Trap

The piece then pivots to the structural drivers of this stagnation, weaving together arguments about intellectual property, corporate consolidation, and the internet. Hoel cites Chris Dalla Riva's view that overly long copyright terms incentivize exploitation over production, and Andrew deWaard's observation that financial firms now dictate media output. "We are drowning in reboots, repurposed songs, sequels and franchises because of the growing influence of financial firms in the cultural industries," Hoel writes, highlighting how consolidation has reduced the number of record companies and studios to a handful of profit-obsessed entities.

This economic consolidation creates a feedback loop where safety is the only viable strategy. The internet, often touted as a democratizing force, is presented here as an engine of bland uniformity. Hoel references W. David Marx's Blank Space, which describes the 21st century as trending toward "bland uniformity and crass commercialism." The irony, as Hoel points out, is that the sheer volume of content online often feels like a "listicle of incredibly dumb things," yet this chaos is itself a form of overfitting to the algorithmic demands of engagement.

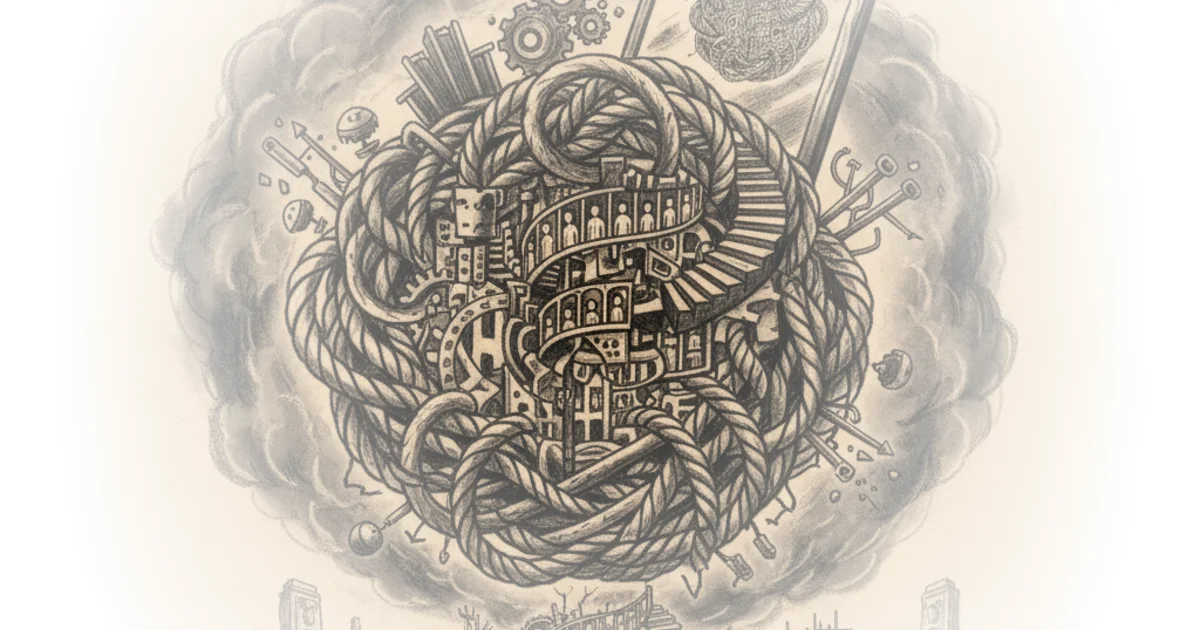

"Overfitting is amorphous and structural and haunting us. It's our cultural ghost."

The Neuroscience of Stagnation

The most distinctive contribution of Hoel's commentary is the application of the "Overfitted Brain Hypothesis" to culture itself. Drawing on his own 2021 research, Hoel explains that in machine learning, overfitting occurs when a model learns the training data too well, including its noise, and fails to perform on new data. He argues that culture is undergoing the same process. Dreams, Hoel theorizes, are augmented samples of waking experiences that guide neural representations away from overfitting those experiences. The suggestion is that just as the brain needs dreams to generalize, culture needs chaos and inefficiency to remain robust.

Instead, we have replaced human oversight with algorithmic feeds and financial prediction markets. "The switch from an editorial room with conscious human oversight to algorithmic feeds... likely was a major factor in the 21st century becoming overfitted," Hoel asserts. This leads to "mode collapse," the machine-learning term for a generative system that converges on a narrow set of highly similar outputs in order to satisfy a hypersensitive discriminator. In the cultural realm, this manifests as the "Instagram face" or the endless superhero franchise, where the drive for efficiency strips away the variance necessary for true creativity.

Critics might note that this analogy risks reducing complex human social dynamics to a mathematical error, potentially oversimplifying the role of human agency. Yet, the parallel holds weight when observing how quickly trends homogenize once an algorithm identifies a winning formula.
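
For readers unfamiliar with the machine-learning sense of overfitting that this section borrows, the sketch below may help. It is an illustration under stated assumptions, not anything taken from Hoel's essay or research: the data, the noise level, and the names `fit_line` and `fit_memorizer` are all hypothetical. It compares a simple least-squares line with a nearest-neighbour "memorizer" that reproduces every training label, noise included.

```python
import random


def fit_line(xs, ys):
    """Closed-form least-squares line; returns a predictor x -> slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept


def fit_memorizer(xs, ys):
    """1-nearest-neighbour lookup: returns the label of the closest training point."""
    pairs = list(zip(xs, ys))

    def predict(x):
        return min(pairs, key=lambda p: abs(p[0] - x))[1]

    return predict


def mse(predict, xs, ys):
    """Mean squared error of a predictor on a data set."""
    return sum((predict(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def sample(rng, n, noise=0.5):
    """Hypothetical data: y = 2x plus Gaussian noise (an assumption for illustration)."""
    xs = [rng.uniform(0.0, 1.0) for _ in range(n)]
    ys = [2.0 * x + rng.gauss(0.0, noise) for x in xs]
    return xs, ys


rng = random.Random(0)  # fixed seed so the run is repeatable
train_x, train_y = sample(rng, 30)    # small training set
test_x, test_y = sample(rng, 1000)    # fresh data the models never saw

models = {
    "line": fit_line(train_x, train_y),
    "memorizer": fit_memorizer(train_x, train_y),
}

# Map each model name to (training error, held-out error).
results = {
    name: (mse(model, train_x, train_y), mse(model, test_x, test_y))
    for name, model in models.items()
}

for name, (train_err, test_err) in results.items():
    print(f"{name:10s} train MSE = {train_err:.3f}   test MSE = {test_err:.3f}")
```

The memorizer scores a perfect zero on the data it was trained on, because each training point is its own nearest neighbour, yet its error on fresh data is necessarily worse than that. In setups like this one the simpler line, which fits the training data less exactly, typically does better on the fresh data. That gap between training performance and performance on new data is the technical meaning of the overfitting analogy.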

The Unconscious Factory

Hoel concludes with a haunting vision of a society that has optimized itself into unconsciousness. He describes a historical montage where human hands are gradually replaced by machines, until finally, "there are no more hands and it is just a whirring robotic factory of appendages and shapes." In this scenario, decisions are still made, but they are made by systems that have replaced "generalizable and robust human conscious learning" with artificial sensitivity.

The argument suggests that the complaints about "late-stage capitalism" are actually complaints about a system that has become "oppressively efficient and therefore overfitted." As Hoel puts it, "overfitted systems suck to live in." This reframing moves the conversation from political ideology to systemic design, suggesting that the solution isn't just more regulation, but a deliberate reintroduction of inefficiency and risk.

"We've been replacing generalizable and robust human conscious learning with some (supposedly) superior artificial system. But it may be superior in its sensitivity, but not in its overall robustness."

Bottom Line

Hoel's argument is at its strongest when it reframes cultural stagnation not as a failure of imagination, but as a failure of system design where efficiency has been mistaken for progress. Its biggest vulnerability is a drift toward technological determinism, which may underestimate the resilience of human creativity in the face of algorithmic pressure. Readers should watch for how this "overfitting" dynamic plays out in emerging fields like AI-generated art, where the tension between optimization and originality will likely define the next decade of culture.