In an era when the industry chases ever-larger parameter counts, Sebastian Raschka offers a sobering, technical reality check: the fundamental architecture of large language models has barely changed in seven years. While the headlines scream about new breakthroughs, Raschka argues that beneath the hype, we are largely "polishing the same architectural foundations" rather than building new ones. This distinction is critical for any professional trying to separate genuine engineering progress from marketing noise.

The Illusion of Radical Change

Raschka begins by dismantling the assumption that recent models represent a structural revolution. He notes that despite the passage of time from GPT-2 to the latest 2025 releases, the core DNA remains strikingly consistent. "At first glance, looking back at GPT-2 (2019) and forward to DeepSeek V3 and Llama 4 (2024-2025), one might be surprised at how structurally similar these models still are," he writes. This observation is not merely nostalgic; it serves as a grounding mechanism for readers overwhelmed by the pace of AI announcements.

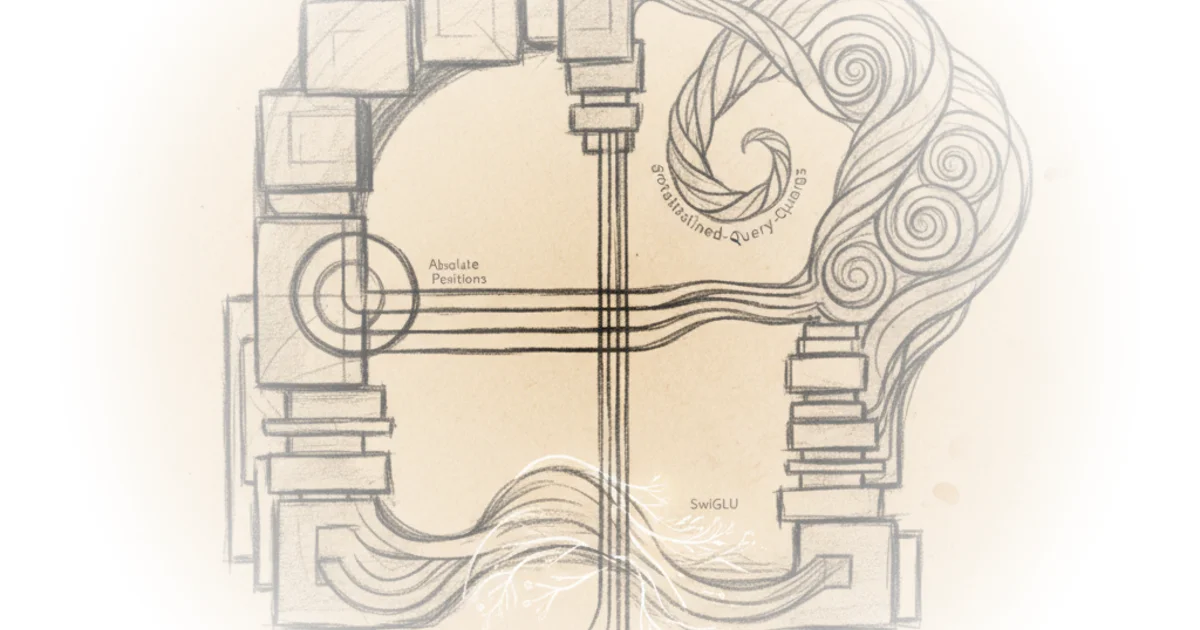

The author acknowledges that refinements exist, such as the shift from absolute to rotary positional embeddings (RoPE) or the replacement of GELU activation functions with SwiGLU. However, he frames these as incremental optimizations rather than paradigm shifts. "But beneath these minor refinements, have we truly seen groundbreaking changes, or are we simply polishing the same architectural foundations?" Raschka asks. This rhetorical question challenges the reader to look past the surface-level marketing of "new" models and examine the actual engineering under the hood.

DeepSeek's Efficiency Gambit

The commentary then pivots to DeepSeek V3 and R1, which Raschka identifies as the most significant architectural deviations in the current landscape. The piece highlights two specific innovations: Multi-Head Latent Attention (MLA) and Mixture-of-Experts (MoE). Raschka explains that while Grouped-Query Attention (GQA) has become the standard for reducing memory usage by sharing key and value projections, DeepSeek chose a different path. "Multi-Head Latent Attention (MLA) offers a different memory-saving strategy that also pairs particularly well with KV caching," he notes.
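To make the memory argument for GQA concrete, here is a back-of-the-envelope sketch of KV cache size. The layer count, head counts, head dimension, and sequence length are hypothetical values chosen for round numbers, not the configuration of any model discussed here, and the helper name `kv_cache_bytes` is illustrative.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Bytes needed to cache keys and values for one sequence.

    bytes_per_value=2 assumes 16-bit storage (an assumption for this sketch).
    """
    # The leading factor 2 covers one key tensor and one value tensor per layer.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


# Hypothetical configuration, chosen only for illustration.
N_LAYERS, N_QUERY_HEADS, HEAD_DIM, SEQ_LEN = 32, 32, 128, 4096
N_KV_GROUPS = 8  # GQA: 32 query heads share 8 key/value heads

# MHA: every query head has its own key/value head.
mha_bytes = kv_cache_bytes(N_LAYERS, N_QUERY_HEADS, HEAD_DIM, SEQ_LEN)
# GQA: only the shared key/value heads are cached.
gqa_bytes = kv_cache_bytes(N_LAYERS, N_KV_GROUPS, HEAD_DIM, SEQ_LEN)

print(f"MHA cache: {mha_bytes / 2**30:.2f} GiB")   # MHA cache: 2.00 GiB
print(f"GQA cache: {gqa_bytes / 2**30:.2f} GiB")   # GQA cache: 0.50 GiB
print(f"Reduction: {mha_bytes // gqa_bytes}x")     # Reduction: 4x
```

The saving scales directly with the ratio of query heads to key/value heads, which is why the grouping factor is the main knob in this design.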

The distinction is technical but vital. Unlike GQA, which groups heads to share data, MLA compresses the key and value tensors into a lower-dimensional space before storage. Raschka points out a crucial trade-off: "This adds an extra matrix multiplication but reduces memory usage." He further clarifies that this isn't just a theoretical exercise; empirical evidence suggests it yields better results. Citing ablation studies from the DeepSeek-V2 paper, he writes, "GQA appears to perform worse than MHA, whereas MLA offers better modeling performance than MHA, which is likely why the DeepSeek team chose MLA over GQA."
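The compress-then-cache idea can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions, not DeepSeek's implementation: it uses a single head, random weights, and hypothetical sizes (`D_MODEL`, `D_LATENT`), and it leaves out details such as how positional embeddings are handled.

```python
import random


def matvec(vec, mat):
    """Multiply a row vector (length n) by an n x m matrix stored as a list of rows."""
    return [sum(v * row[j] for v, row in zip(vec, mat)) for j in range(len(mat[0]))]


def random_matrix(rows, cols, rng):
    """Small random weights standing in for learned projections."""
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)
D_MODEL, D_LATENT = 16, 4  # hypothetical sizes; the latent is much smaller

w_down = random_matrix(D_MODEL, D_LATENT, rng)  # compression applied before caching
w_up_k = random_matrix(D_LATENT, D_MODEL, rng)  # restores keys from the latent
w_up_v = random_matrix(D_LATENT, D_MODEL, rng)  # restores values from the latent

# Decoding loop: only the compressed latent is stored for each token.
kv_cache = []
for _ in range(3):
    hidden = [rng.gauss(0.0, 1.0) for _ in range(D_MODEL)]
    kv_cache.append(matvec(hidden, w_down))

# At attention time, the extra matrix multiplication rebuilds full-size keys and values.
keys = [matvec(latent, w_up_k) for latent in kv_cache]
values = [matvec(latent, w_up_v) for latent in kv_cache]

cached_per_token = len(kv_cache[0])  # D_LATENT numbers per token
uncompressed_per_token = 2 * D_MODEL  # one full key plus one full value
print(cached_per_token, "cached vs", uncompressed_per_token, "uncompressed")
```

The trade-off Raschka describes is visible here: the cache holds `D_LATENT` numbers per token instead of `2 * D_MODEL`, at the cost of the up-projection each time keys and values are needed.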

This section is particularly strong because it moves beyond the "bigger is better" narrative to focus on efficiency. Raschka details how DeepSeek V3 uses a massive 671-billion-parameter MoE architecture but activates only a small fraction of those parameters, roughly 37 billion, for each token during inference. "DeepSeek V3 has 256 experts per MoE module and a total of 671 billion parameters. Yet during inference, only 9 experts are active at a time," he explains. This sparsity allows the model to hold vast knowledge while remaining computationally feasible. A counterargument worth considering is whether this complexity introduces new failure modes or instability that simpler, dense models avoid, but on Raschka's account the efficiency gains currently outweigh these risks.
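A minimal sketch of sparse expert selection shows how 9 of 256 experts can end up active for a token. Two things here are assumptions rather than claims from the text above: that the nine break down into eight router-selected experts plus one always-on shared expert, and that the selected experts are weighted with a softmax. The gating function in a real model may differ, and the router scores below are random stand-ins.

```python
import math
import random

N_EXPERTS = 256  # experts per MoE module (from the quoted figure)
TOP_K = 8        # assumed: experts picked by the router per token
N_SHARED = 1     # assumed: one shared expert that is always active


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route(router_logits, top_k):
    """Return (expert_index, weight) pairs for the top_k highest-scoring experts."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:top_k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))


rng = random.Random(0)
# Stand-in for the router's per-expert scores for a single token.
router_logits = [rng.gauss(0.0, 1.0) for _ in range(N_EXPERTS)]

selected = route(router_logits, TOP_K)
active_experts = N_SHARED + len(selected)
print("active experts for this token:", active_experts)  # active experts for this token: 9
```

Only the selected experts run their feed-forward computation for that token; the rest of the module's parameters sit idle, which is where the inference savings come from.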

By compressing the key and value tensors, DeepSeek achieves a rare feat: reducing memory usage while actually improving modeling performance compared to standard alternatives.

The OLMo 2 Anomaly

Shifting focus to the non-profit Allen Institute for AI, Raschka examines OLMo 2, a model that stands out for its transparency rather than its raw scale. He describes OLMo 2 as a "great blueprint for developing LLMs" due to its open documentation of training data and code. Interestingly, OLMo 2 bucks the trend of adopting efficiency hacks like MLA or GQA and sticks with traditional Multi-Head Attention. Its innovation lies in the placement of normalization layers.

Raschka details how OLMo 2 reverted to a form of Post-LN (Post-Norm), a design choice that had largely been abandoned in favor of Pre-LN in models like Llama. The move cuts against established practice: "In 2020, Xiong et al. showed that Pre-LN results in more well-behaved gradients at initialization," he writes. Even so, he notes that OLMo 2's return to Post-LN was a deliberate move to enhance training stability. He also introduces QK-Norm, an additional normalization step applied to queries and keys within the attention mechanism. "QK-Norm is essentially yet another RMSNorm layer," Raschka clarifies, explaining that normalizing the queries and keys helps keep the resulting attention scores in a stable range.
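The placement differences are easier to see in code. The sketch below is illustrative only: it uses RMSNorm without a learned scale for every variant (the original Post-LN design used LayerNorm), the function names are hypothetical, and the OLMo 2 variant reflects one reading of its design, in which the norm is applied to the sublayer output while staying inside the residual branch.

```python
import math


def rms_norm(vec, eps=1e-6):
    """RMSNorm without a learned scale: divide by the root mean square."""
    rms = math.sqrt(sum(v * v for v in vec) / len(vec) + eps)
    return [v / rms for v in vec]


def pre_norm_block(x, sublayer):
    # Pre-LN: normalize the input, run the sublayer, then add the residual.
    return [a + b for a, b in zip(x, sublayer(rms_norm(x)))]


def post_norm_block(x, sublayer):
    # Original Post-LN: add the residual first, then normalize the sum.
    return rms_norm([a + b for a, b in zip(x, sublayer(x))])


def olmo2_style_block(x, sublayer):
    # Assumed OLMo 2 flavor: normalize the sublayer output, then add the residual.
    return [a + b for a, b in zip(x, rms_norm(sublayer(x)))]


def qk_norm_score(query, key):
    """Scaled dot-product score with QK-Norm applied to both vectors first."""
    q, k = rms_norm(query), rms_norm(key)
    return sum(a * b for a, b in zip(q, k)) / math.sqrt(len(q))


query = [1.0, 2.0, 3.0, 4.0]
key = [0.5, -1.0, 2.0, 0.0]
big_query = [100.0 * v for v in query]  # same direction, much larger magnitude

score_small = qk_norm_score(query, key)
score_large = qk_norm_score(big_query, key)
# The two scores agree up to the tiny eps term, despite the 100x scale difference.
print(abs(score_small - score_large) < 1e-5)
```

Because QK-Norm removes the magnitude of the queries and keys before the dot product, a sudden growth in activation scale does not translate into exploding attention scores, which is the stabilizing effect the passage describes.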

The inclusion of OLMo 2 is a strategic choice by Raschka to show that innovation isn't monolithic. While DeepSeek pushes the boundaries of efficiency through complex compression, OLMo 2 explores the stability of foundational components. Raschka is candid, however, about how little the published evidence says about the reordering on its own: "Unfortunately this figure shows the results of the reordering together with QK-Norm, which is a separate concept. So, it's hard to tell how much the normalization layer reordering contributed by itself," he admits. This honesty about the limitations of current research data adds significant credibility to his analysis.

Critics might argue that focusing on architectural tweaks ignores the more significant driver of performance: the scale and quality of training data. However, Raschka's premise is that understanding the structural skeleton is essential for diagnosing why models behave the way they do, regardless of data volume.

Bottom Line

Sebastian Raschka's analysis provides a necessary corrective to the industry's tendency to conflate parameter count with architectural innovation. The strongest part of his argument is the evidence that efficiency gains, particularly through MLA and MoE, are now the primary battleground for model performance, not just raw size. The biggest vulnerability in the current landscape remains the opacity of training methodologies, which makes it difficult to fully isolate the impact of these architectural choices. As the field moves forward, professionals should watch whether these efficiency-focused designs can scale without hitting the same diminishing returns that have plagued previous generations of dense models.