Brad DeLong delivers a stinging rebuke to the paralysis induced by AI anxiety, arguing that the fear of an imminent, total societal upheaval is a distraction from the very real, incremental creative destruction already reshaping the economy. While many policy writers freeze at the threshold of the unknown, DeLong insists that history offers a far more reliable map than the speculative forecasts of Silicon Valley evangelists.

The Burden of the Fork

The piece begins by addressing a specific anxiety plaguing modern journalism: the inability to write about policy when the future trajectory of technology is so uncertain. DeLong critiques Matthew Yglesias for feeling "transfixed, like Buridan's Ass," unable to choose a course of action because he cannot predict whether AI will plateau or explode into superintelligence. DeLong writes, "Matt thinks there is a genuine fork here, and stands transfixed... Matt thinks today's AI is roughly as capable as a generic literate person with broad knowledge of what's already written—which is a powerful research assistant, but just a powerful research assistant."

This framing is effective because it exposes the absurdity of waiting for certainty before acting. DeLong suggests that Yglesias has "half-drunk the AI-psychoactive koolaid," conflating the current hype cycle with genuine existential risk. He draws a sharp parallel to the cryptocurrency boom, whose "vibe is the same" as today's AI fervor, yet which has left a world where "nobody sees [Bitcoin] as anything societally transformative, or indeed as having any other serious use case other than 'digital gold!' and 'number go up!'" By invoking the crypto bubble, DeLong grounds the abstract fear of AI in a tangible, recent failure of prediction.

Critics might argue that the comparison to Bitcoin is flawed because AI's impact on productivity is already measurable in ways that crypto never was. However, DeLong's point remains valid: the narrative of total societal collapse is often a smokescreen for the grifters and self-grifters who benefit from the chaos of uncertainty.

Maybe A.I. progress means we have a golden opportunity to launch a Police for America initiative and get a whole different group of people thinking about law enforcement careers, and maybe it means total loss of explicit human control over the future of our planet and our species. That's not a very good article!

Standing on Shoulders, Not Worshiping Gods

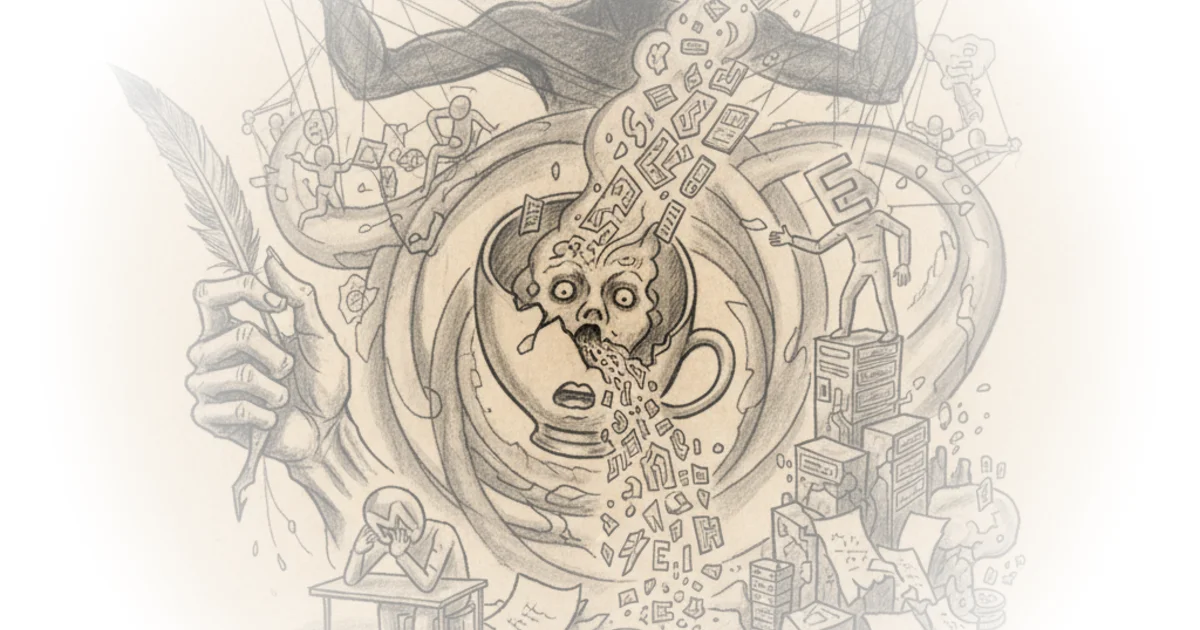

DeLong pivots from the immediate policy paralysis to a grand historical sweep, arguing that humanity has always experienced "successive leading-sector Schumpeterian creative-destruction upending of orders and institutions." He rejects the notion that we are facing a unique, unprecedented singularity. Instead, he reframes the current moment as the latest chapter in a long story of "standing on the shoulders of ever-taller pyramids of giants." He writes, "The real ASI emerged. Not an Artificial Super-Intelligence constructed in a computer lab... But, rather, the distributed knowledge and thought base that is the Anthology Super-Intelligence that is humanity's collective mind."

This historical contextualization is the piece's intellectual anchor. DeLong traces the acceleration of human progress from the slow 1% per millennium of the Paleolithic era to the explosive 100% per century of the Industrial Revolution. He notes that since 1875, "a generation sees about 80% of the economy grow in technology by about 1/4 in efficiency. While about 20% is upended and revolutionized." This data-driven approach dismantles the idea that AI is a sudden, alien force; rather, it is the logical continuation of a trend where technology reshapes the division of labor every single generation.
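To make the gap between those two eras concrete, the quoted figures can be turned into implied annual growth rates. This is a rough sketch of our own, not DeLong's calculation, and it assumes that "1% per millennium" and "100% per century" mean total growth over each period, compounded evenly every year. The helper name `annual_rate` is hypothetical.

```python
# Convert the essay's quoted growth figures into implied annual compound rates.
# Assumption (ours, not DeLong's): each figure is total growth over the period,
# spread evenly as a constant yearly compound rate.

def annual_rate(total_growth: float, years: int) -> float:
    """Return the constant yearly rate r such that (1 + r) ** years == 1 + total_growth."""
    return (1.0 + total_growth) ** (1.0 / years) - 1.0

paleolithic = annual_rate(0.01, 1000)  # 1% over a millennium
industrial = annual_rate(1.00, 100)    # 100% (a doubling) over a century

print(f"Paleolithic: {paleolithic:.5%} per year")
print(f"Industrial Revolution: {industrial:.3%} per year")
print(f"Speed-up factor: {industrial / paleolithic:.0f}x")
```

Under these assumptions the Paleolithic figure works out to roughly 0.001% a year and the Industrial Revolution figure to roughly 0.7% a year, a speed-up of about 700 times in the underlying annual pace.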

The argument here is that the discomfort of being in the "bulls-eye" of this disruption is not new. DeLong recalls King Arkhidamos III, who lamented, "By Hercules! Man's bravery is ended!" upon seeing the Macedonian torsion catapult. This historical anecdote serves as a powerful reminder that every technological leap is met with fear by those whose roles are being displaced. The parallel to the current anxiety among intellectual professionals is striking and well-placed.

The Real Disruption

DeLong's most provocative claim is that the current wave of disruption is specifically targeting the "learned intellectual professions that Matt and I specialize in." He argues that this shift in gears is not about a digital god taking over, but about natural-language front-ends to structured and unstructured databases becoming the new standard for accessing human wisdom. He writes, "Much better ways at accessing and remixing the real ASI... is not a Digital God. It is natural-language front-ends to structured and unstructured databases."

This reframing is crucial. It moves the conversation away from the sci-fi fear of machine dominance and toward the practical reality of how work is being reorganized. The "Anthology Super-Intelligence" is not a rival to human intelligence but an amplifier of it, built on the accumulated knowledge of the past. DeLong suggests that the "vibe" of the current moment is less about the end of humanity and more about the end of the specific ways in which we have traditionally organized intellectual labor.

A counterargument worth considering is that AI advancement, if it comes to be driven by recursive self-improvement, may outpace the historical patterns DeLong describes. If capability gains between successive model generations compound exponentially rather than accumulating linearly, the "standing on shoulders" metaphor might break down. Yet even if the pace accelerates, the underlying dynamic of creative destruction would remain the same.

Since 1875 we have seen: Steampower, Applied-Science, Mass-Production, Globalized Value-Chain, and now Attention Info-Bio Tech modes—of production, but also distribution, communication, and domination—equivalent scale transformations shake society every single generation, with societal superstructures always lagging far behind and desperately shaking themselves to pieces in attempts to cope.

Bottom Line

DeLong's strongest asset is his refusal to treat AI as a unique historical anomaly, grounding the debate in the long arc of technological disruption rather than the short-term panic of the tech sector. His biggest vulnerability lies in potentially underestimating the speed at which recursive self-improvement could alter the economic landscape, but his core message—that we must act within the uncertainty rather than waiting for a clear path—is essential for any policymaker or writer trying to navigate the next decade.